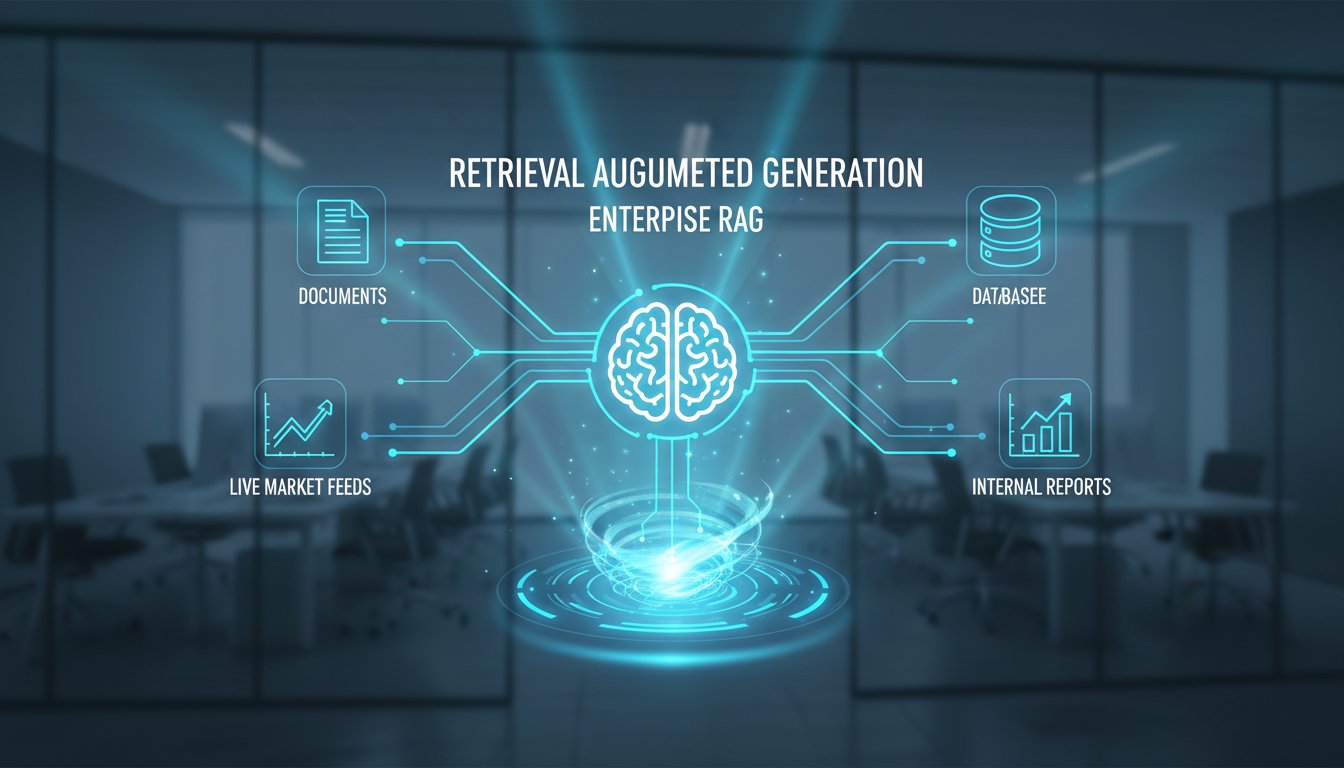

Imagine asking your company’s AI system a complex question about last quarter’s supply chain disruptions, and getting back a precise, sourced answer in seconds, one that pulls from internal reports, live market data, and industry research all at once. That’s not a distant promise. That’s what modern Retrieval Augmented Generation (RAG) is making possible right now.

For enterprise teams that have been burned by AI systems hallucinating facts or returning stale information, RAG has emerged as one of the most practical fixes in the field. It bridges the gap between a language model’s general knowledge and the specific, up-to-date information a business actually needs. But the technology hasn’t stood still. In 2026, RAG is evolving fast, and the changes are significant enough that teams who haven’t revisited their approach in the past year may already be falling behind.

This post breaks down what’s actually changing in RAG right now: the hybrid architectures gaining traction, the push for real-time data integration, the scalability improvements making enterprise deployment more realistic, and the domain-specific adaptations that are quietly becoming a competitive advantage. Whether you’re evaluating RAG for the first time or looking to upgrade an existing implementation, here’s what you need to know.

Hybrid Models Are Changing How Retrieval Works

For a while, the RAG conversation was dominated by pure neural approaches. Dense vector search, embedding models, and semantic similarity were the tools everyone reached for. They work well, but they have blind spots, especially when precision matters more than general relevance.

In 2026, the shift toward hybrid retrieval models is one of the clearest trends in the space. These systems combine neural network-based semantic search with traditional keyword and symbolic retrieval methods. The result is a more balanced approach that captures both the contextual nuance of neural search and the exact-match reliability of older techniques.

Why Hybrid Retrieval Outperforms Either Approach Alone

Neural retrieval is great at understanding intent. If a user asks about “reducing operational overhead,” it can surface documents about cost efficiency even if those exact words don’t appear. But it can miss highly specific terms, product codes, regulatory citations, or proprietary jargon that a keyword search would catch immediately.

Hybrid models handle both. Early enterprise deployments using these architectures are reporting meaningful improvements in retrieval accuracy, particularly in legal, financial, and technical domains where precision is non-negotiable. The tradeoff in complexity is real, but for high-stakes use cases, the accuracy gains justify it.
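To make the idea concrete, here is a minimal Python sketch of one common way to combine the two signals: reciprocal rank fusion (RRF), which merges a keyword ranking and a vector ranking by summing 1/(k + rank) for each document. The TF-IDF scorer stands in for a production keyword engine such as BM25, and the three-dimensional vectors are hypothetical placeholders for real embeddings. `keyword_rank`, `dense_rank`, and `reciprocal_rank_fusion` are illustrative names, not a specific library's API.

```python
import math
import re
from collections import Counter

def tokenize(text):
    # Lowercase and keep hyphenated codes such as "sku-4471" as single tokens.
    return re.findall(r"[\w\-]+", text.lower())

def keyword_rank(query, docs):
    """Rank doc ids by a simple TF-IDF overlap score (a stand-in for BM25)."""
    terms = set(tokenize(query))
    counts = {doc_id: Counter(tokenize(text)) for doc_id, text in docs.items()}
    n = len(docs)
    df = Counter(t for c in counts.values() for t in c)  # document frequency per term
    scores = {}
    for doc_id, c in counts.items():
        score = sum(c[t] * (math.log((n + 1) / (df[t] + 1)) + 1) for t in terms if t in c)
        if score > 0:  # only documents with at least one exact term match are ranked
            scores[doc_id] = score
    return sorted(scores, key=scores.get, reverse=True)

def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def dense_rank(query_vec, doc_vecs):
    """Rank doc ids by cosine similarity to the query embedding."""
    return sorted(doc_vecs, key=lambda d: cosine(query_vec, doc_vecs[d]), reverse=True)

def reciprocal_rank_fusion(rankings, k=60):
    """Fuse ranked lists: score(d) = sum over lists of 1 / (k + rank), rank starting at 1."""
    fused = Counter()
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            fused[doc_id] += 1.0 / (k + rank)
    return [doc_id for doc_id, _ in fused.most_common()]

docs = {
    "a": "Cost efficiency initiatives cut operational overhead last year",
    "b": "Recall notice for part SKU-4471 due to supplier defect",
    "c": "Quarterly supply chain disruption summary",
}
# Hypothetical embeddings; a real system would call an embedding model here.
doc_vecs = {"a": [0.9, 0.1, 0.0], "b": [0.1, 0.2, 0.9], "c": [0.5, 0.5, 0.1]}
query = "SKU-4471 supplier issues"
query_vec = [0.4, 0.5, 0.3]

fused = reciprocal_rank_fusion([keyword_rank(query, docs), dense_rank(query_vec, doc_vecs)])
print(fused)  # ['b', 'c', 'a']
```

In this toy run the dense ranking puts the product-code document last, but the exact keyword match on `sku-4471` lifts it to the top of the fused list. RRF only needs ranks, not comparable scores, which is why it is a popular default for fusing retrievers whose scores live on different scales.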

Context-Aware Generation Gets Smarter

Beyond retrieval, the generation side of RAG is also improving. Context-aware generation, in which the model conditions its output on the specific documents retrieved instead of treating them as text simply appended to the prompt, is becoming more sophisticated. Models are getting better at synthesizing conflicting sources, flagging uncertainty, and grounding responses in the actual retrieved content rather than defaulting to pre-trained assumptions.

This matters a lot for enterprise use. A system that can say “based on the Q3 internal report, the figure is X, though the external benchmark suggests Y” is far more useful than one that blends both into a confident-sounding but ambiguous answer.
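One way to encourage that behavior is in how retrieved passages are handed to the model. The sketch below assumes a plain-text prompt and a generic chat or completion model: it numbers each passage with its source name and asks the model to cite, keep conflicting figures separate, and admit gaps. The `Passage` type, the instruction wording, and the sample passages are hypothetical. Prompting alone does not guarantee grounded output, so it is usually paired with citation checks after generation.

```python
from dataclasses import dataclass

@dataclass
class Passage:
    source: str  # human-readable source name shown to the model
    text: str

def build_grounded_prompt(question, passages):
    """Number each retrieved passage, label its source, and ask for cited, conflict-aware answers."""
    context = "\n".join(
        f"[{i}] ({p.source}) {p.text}" for i, p in enumerate(passages, start=1)
    )
    instructions = (
        "Answer using only the numbered passages below. "
        "Cite every claim with its passage number, for example [1]. "
        "If passages disagree, report each figure with its source instead of merging them. "
        "If the passages do not contain the answer, say so."
    )
    return f"{instructions}\n\nPassages:\n{context}\n\nQuestion: {question}\nAnswer:"

# Made-up sample passages for illustration only.
passages = [
    Passage("Q3 internal report", "Average supplier lead time was 42 days."),
    Passage("External benchmark", "Industry average lead time was 35 days."),
]
prompt = build_grounded_prompt("What was our average supplier lead time in Q3?", passages)
print(prompt)
```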

Real-Time Data Integration Is Now a Priority

One of the persistent frustrations with early RAG deployments was the lag between when information changed and when the system reflected that change. Static knowledge bases, even well-maintained ones, create a gap that can undermine trust in the system’s outputs.

In 2026, real-time data integration has moved from a nice-to-have to a core design requirement for serious enterprise RAG systems.

Connecting RAG to Live Data Sources

Leading implementations now connect RAG pipelines directly to live data sources: internal databases, CRM systems, news feeds, regulatory update services, and more. When a user queries the system, retrieval pulls from current data, not a snapshot from last month’s indexing run.

This shift has practical implications. Customer-facing teams using RAG-powered tools can get answers that reflect today’s pricing, inventory, or policy changes. Compliance teams can query against the most recent regulatory guidance. Research teams can surface papers published this week, not just those indexed at deployment.
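At query time, this usually means fanning out to several live sources at once and tolerating the ones that are slow or down. Here is a minimal sketch using Python's standard `concurrent.futures`. The source functions (`crm`, `pricing`, `news`, `slow_archive`) are hypothetical stand-ins for real connectors, and the 0.5-second budget is an arbitrary assumption.

```python
import time
from concurrent.futures import ThreadPoolExecutor, wait

def query_live_sources(query, sources, timeout_s=0.5):
    """Query all sources concurrently; keep results from those that answer in time.

    `sources` maps a source name to a callable(query) -> list of result dicts.
    Sources that raise or miss the deadline are reported in `skipped`.
    """
    executor = ThreadPoolExecutor(max_workers=max(1, len(sources)))
    futures = {executor.submit(fetch, query): name for name, fetch in sources.items()}
    done, not_done = wait(futures, timeout=timeout_s)
    results, skipped = [], []
    for future in done:
        name = futures[future]
        try:
            for item in future.result():
                results.append({**item, "source": name})  # tag each result with its origin
        except Exception as exc:  # one failing source should not sink the whole query
            skipped.append((name, repr(exc)))
    skipped.extend((futures[f], "timeout") for f in not_done)
    # Return without waiting for slow sources (cancel_futures needs Python 3.9+).
    executor.shutdown(wait=False, cancel_futures=True)
    return results, skipped

def crm(query):
    return [{"text": f"CRM account notes matching {query!r}"}]

def pricing(query):
    return [{"text": "Price list updated this morning"}]

def news(query):
    raise ConnectionError("feed unavailable")

def slow_archive(query):
    time.sleep(2.0)
    return [{"text": "archived result"}]

results, skipped = query_live_sources(
    "widget pricing",
    {"crm": crm, "pricing": pricing, "news": news, "archive": slow_archive},
)
print(sorted(r["source"] for r in results))
print(skipped)
```

Returning partial results together with a list of skipped sources, rather than failing the whole query, lets the generation step tell the user which systems were not consulted.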

The Infrastructure Challenge

Real-time integration isn’t trivial. It requires robust indexing pipelines, careful handling of data freshness signals, and thoughtful decisions about which sources to prioritize when information conflicts. Teams building these systems are investing heavily in the data infrastructure layer, often as much as in the model layer itself. The organizations getting this right are treating RAG less like an AI project and more like a data engineering project with AI on top.
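Two of those decisions can be sketched directly. The code below assumes each hit carries a relevance score and a publication timestamp, and each record a source name. It down-weights older content with an exponential decay (the 24-hour half-life is an arbitrary assumption to tune per source) and resolves conflicting values for the same fact with a hypothetical source-priority table, falling back to recency. Neither rule is a standard; they illustrate the kind of policy a team has to choose.

```python
from datetime import datetime, timedelta, timezone

def freshness_weight(published, now, half_life_hours=24.0):
    """Exponential decay: content loses half its weight every `half_life_hours`."""
    age_hours = max((now - published).total_seconds() / 3600.0, 0.0)
    return 0.5 ** (age_hours / half_life_hours)

def rank_with_freshness(hits, now, half_life_hours=24.0):
    """Re-rank hits by relevance multiplied by freshness weight."""
    return sorted(
        hits,
        key=lambda h: h["relevance"] * freshness_weight(h["published"], now, half_life_hours),
        reverse=True,
    )

def resolve_conflicts(records, source_priority):
    """Keep one record per fact key: highest-priority source first, newest on ties."""
    def rank(rec):
        return (source_priority.get(rec["source"], 0), rec["published"])
    winners = {}
    for rec in records:
        current = winners.get(rec["key"])
        if current is None or rank(rec) > rank(current):
            winners[rec["key"]] = rec
    return winners

now = datetime(2026, 3, 1, 12, 0, tzinfo=timezone.utc)
hits = [
    {"id": "old-policy", "relevance": 0.9, "published": now - timedelta(days=7)},
    {"id": "new-policy", "relevance": 0.7, "published": now - timedelta(hours=2)},
]
ranked = rank_with_freshness(hits, now)
print([h["id"] for h in ranked])  # ['new-policy', 'old-policy']

# Hypothetical priority table: the pricing service is authoritative for prices.
priority = {"pricing_service": 2, "crm": 1}
records = [
    {"key": "price:widget", "value": 19.99, "source": "crm", "published": now - timedelta(hours=1)},
    {"key": "price:widget", "value": 18.50, "source": "pricing_service", "published": now - timedelta(hours=3)},
]
winners = resolve_conflicts(records, priority)
print(winners["price:widget"]["value"])  # 18.5
```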

Scalability Is Finally Catching Up to Enterprise Needs

Early RAG systems were impressive in demos but often struggled under real enterprise conditions: large document corpora, high query volumes, strict latency requirements, and the need to serve multiple business units with different data access permissions.

Scalability improvements in 2026 are addressing these gaps directly.

Handling Large-Scale Corpora

Modern RAG architectures are now designed to handle corpora in the hundreds of millions of documents without significant degradation in retrieval quality or speed. Advances in approximate nearest neighbor search, hierarchical indexing, and distributed retrieval infrastructure have made this possible at costs that are increasingly practical for mid-to-large enterprises.
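The core idea behind many approximate nearest neighbor indexes is to avoid comparing a query against every vector. This toy inverted-file (IVF) index, the same two-level idea behind FAISS's `IndexIVFFlat`, buckets vectors by nearest centroid and scans only the `nprobe` closest buckets at query time. It is a pure-Python illustration under simplifying assumptions: centroids are sampled documents rather than trained with k-means, and the `IVFIndex` class is hypothetical.

```python
import heapq
import random

def dist2(a, b):
    """Squared Euclidean distance."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

class IVFIndex:
    """Toy inverted-file index: bucket vectors by nearest centroid, then search only
    the `nprobe` buckets whose centroids are closest to the query."""

    def __init__(self, vectors, n_lists, seed=0):
        self.vectors = vectors  # dict: doc_id -> list of floats
        rng = random.Random(seed)
        # Simplification: sample documents as centroids (real indexes train them with k-means).
        self.centroids = [vectors[d] for d in rng.sample(sorted(vectors), n_lists)]
        self.lists = [[] for _ in range(n_lists)]
        for doc_id, vec in vectors.items():
            self.lists[self._nearest_centroids(vec, 1)[0]].append(doc_id)

    def _nearest_centroids(self, vec, n):
        return heapq.nsmallest(
            n, range(len(self.centroids)), key=lambda i: dist2(vec, self.centroids[i])
        )

    def search(self, query, k=5, nprobe=2):
        # Only vectors in the probed buckets are compared exactly; the rest are skipped.
        candidates = [d for i in self._nearest_centroids(query, nprobe) for d in self.lists[i]]
        return heapq.nsmallest(k, candidates, key=lambda d: dist2(query, self.vectors[d]))

rng = random.Random(42)
corpus = {f"doc{i}": [rng.random() for _ in range(8)] for i in range(1000)}
index = IVFIndex(corpus, n_lists=16)
print(index.search(corpus["doc7"], k=3, nprobe=2))  # first result is 'doc7'
```

Raising `nprobe` scans more buckets, improving recall at the cost of latency; that knob is the basic speed/accuracy trade-off ANN systems expose.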

Query throughput has also improved. Systems that previously required careful traffic management to avoid latency spikes are now handling concurrent enterprise workloads more reliably. For organizations that need RAG to power internal tools used by thousands of employees simultaneously, this is a meaningful shift.

Multi-Tenant and Permission-Aware Retrieval

One underappreciated scalability challenge is access control. In an enterprise, not everyone should see everything. A RAG system that ignores document-level permissions is a security liability. In 2026, permission-aware retrieval, where the system only surfaces documents a given user is authorized to access, is becoming a standard feature rather than a custom add-on.

This makes enterprise deployment significantly more realistic. IT and security teams are far more willing to greenlight RAG projects when access control is built into the retrieval layer from the start.
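A minimal sketch of the idea, assuming each document carries the set of groups allowed to read it and each user resolves to a set of group memberships. The `Doc` type, the group names, and the word-overlap `score_fn` are hypothetical; a real system would use its identity provider and the metadata filtering of its search engine or vector store.

```python
from dataclasses import dataclass, field

@dataclass(frozen=True)
class Doc:
    doc_id: str
    text: str
    allowed_groups: frozenset = field(default_factory=frozenset)

def permitted(doc, user_groups):
    """A user may see a document if they share at least one group with its ACL."""
    return bool(doc.allowed_groups & user_groups)

def permission_aware_search(query, docs, user_groups, score_fn, k=3):
    # Filter on permissions before ranking so unauthorized text is never scored or returned.
    visible = [d for d in docs if permitted(d, user_groups)]
    return sorted(visible, key=lambda d: score_fn(query, d.text), reverse=True)[:k]

def word_overlap(query, text):
    """Hypothetical relevance score: count of shared lowercase words."""
    return len(set(query.lower().split()) & set(text.lower().split()))

docs = [
    Doc("hr-1", "salary bands for 2026", frozenset({"hr"})),
    Doc("eng-1", "deployment runbook for the salary service", frozenset({"eng"})),
    Doc("all-1", "holiday calendar and salary payment dates", frozenset({"everyone"})),
]
results = permission_aware_search("salary bands", docs, frozenset({"eng", "everyone"}), word_overlap)
print([d.doc_id for d in results])  # ['eng-1', 'all-1']
```

Filtering before ranking matters: if a system takes the top k results first and filters afterward, it can return fewer than k results, and unauthorized text may already have passed through intermediate components such as rerankers or caches.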

Domain-Specific Adaptations Are Creating Real Competitive Advantages

General-purpose RAG works reasonably well across many tasks. But the organizations seeing the strongest results in 2026 are those that have invested in domain-specific adaptations: tuning their retrieval and generation pipelines for the specific language, document types, and reasoning patterns of their industry.

Specialized Embeddings and Retrieval Models

A legal RAG system benefits from embeddings trained on case law and statutory language. A biomedical system performs better with embeddings that understand clinical terminology and research paper structure. A financial system needs to handle earnings reports, regulatory filings, and market data with precision that general embeddings often miss.

In 2026, there’s a growing ecosystem of domain-specific embedding models and retrieval components that enterprises can plug into their RAG pipelines. Some are open-source; others come from specialized vendors. Either way, the performance gap between a generic RAG setup and a domain-tuned one is wide enough that it’s hard to ignore.

Interpretability as a Feature

Another trend worth noting: interpretability is getting more attention. Enterprise users, especially in regulated industries, need to understand why a system returned a particular answer. Which documents were retrieved? How did they influence the output? Where did the model express uncertainty?

RAG systems in 2026 are increasingly built with interpretability as a first-class feature, not an afterthought. Citation tracking, source attribution, and confidence scoring are becoming standard parts of enterprise RAG interfaces. This isn’t just about compliance. It’s about building the kind of trust that gets AI tools actually adopted by the people who need to use them.
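Citation tracking can start with something as simple as checking the markers in a generated answer. The sketch below assumes the answer cites retrieved passages as `[1]`, `[2]`, and so on, in the order they were supplied to the model. It maps each marker back to its source, flags markers that point to no retrieved passage, and lists sentences that carry no citation. The function name and sample answer are hypothetical, and the sentence splitter is deliberately naive.

```python
import re

CITATION = re.compile(r"\[(\d+)\]")

def audit_citations(answer, sources):
    """Map [n] markers in a generated answer back to the retrieved sources.

    Returns the cited sources, markers that point to no retrieved source,
    and sentences that carry no citation at all.
    """
    cited_numbers = {int(n) for n in CITATION.findall(answer)}
    cited = {n: sources[n - 1] for n in sorted(cited_numbers) if 1 <= n <= len(sources)}
    dangling = sorted(n for n in cited_numbers if not 1 <= n <= len(sources))
    # Naive sentence split on ., ! or ? followed by whitespace.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", answer) if s.strip()]
    uncited = [s for s in sentences if not CITATION.search(s)]
    return {"cited": cited, "dangling": dangling, "uncited": uncited}

sources = ["Q3 internal report", "External benchmark"]
answer = ("Average lead time was 42 days [1]. The industry figure is lower [2]. "
          "Costs rose sharply [3]. Demand is stable.")
report = audit_citations(answer, sources)
print(report["dangling"])  # [3]
print(report["uncited"])   # ['Demand is stable.']
```

A dangling marker suggests the model cited something it was never given, and an uncited sentence is a candidate for review; both are cheap signals to surface in an interface next to the answer.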

Major AI Firms Are Accelerating RAG Integration

It’s not just individual enterprises experimenting with RAG. The major AI platforms are moving fast to embed RAG capabilities directly into their commercial offerings.

Collaborations between leading AI firms and enterprise software vendors are bringing RAG into tools that organizations already use: document management systems, customer support platforms, internal knowledge bases, and business intelligence tools. The goal is to reduce the integration burden on enterprise teams and make RAG accessible without requiring deep ML expertise in-house.

This is accelerating adoption, but it’s also raising the bar. As RAG becomes a standard feature rather than a differentiator, the competitive advantage shifts to how well an organization has tuned and governed its RAG implementation, not just whether it has one.

What This Means for Enterprise Teams Right Now

RAG in 2026 is more capable, more scalable, and more accessible than it was even 18 months ago. But the organizations getting the most value from it aren’t just plugging in a vendor solution and calling it done. They’re making deliberate choices about hybrid retrieval architectures, investing in real-time data infrastructure, thinking carefully about domain adaptation, and building interpretability into their systems from the start.

The technology has matured enough that the question is no longer whether RAG can work at enterprise scale. It can. The question now is whether your team has the strategy and infrastructure to take full advantage of where the technology is today.

If you’re evaluating your current RAG setup or planning a new implementation, now is a good time to take stock. The gap between a well-designed RAG system and a generic one is growing, and so is the business impact that comes with it.

Want to see how modern RAG architectures could fit your specific enterprise use case? Talk to our team. We work with organizations across industries to design and implement RAG systems that are built for real-world scale, not just proof-of-concept demos.