Imagine this: Your enterprise RAG proof-of-concept worked flawlessly. The demos impressed the C-suite, and your team celebrated a major AI milestone. You rolled it out to the first production users, and that’s when the trouble began. Tickets trickled in. “The agent lost the thread of the conversation.” “It’s giving contradictory answers.” “This is taking forever and costing a fortune.” Your beautiful, high-recall retrieval system, the one you spent months tuning with the latest embedding models and vector databases, is failing the moment real work begins.

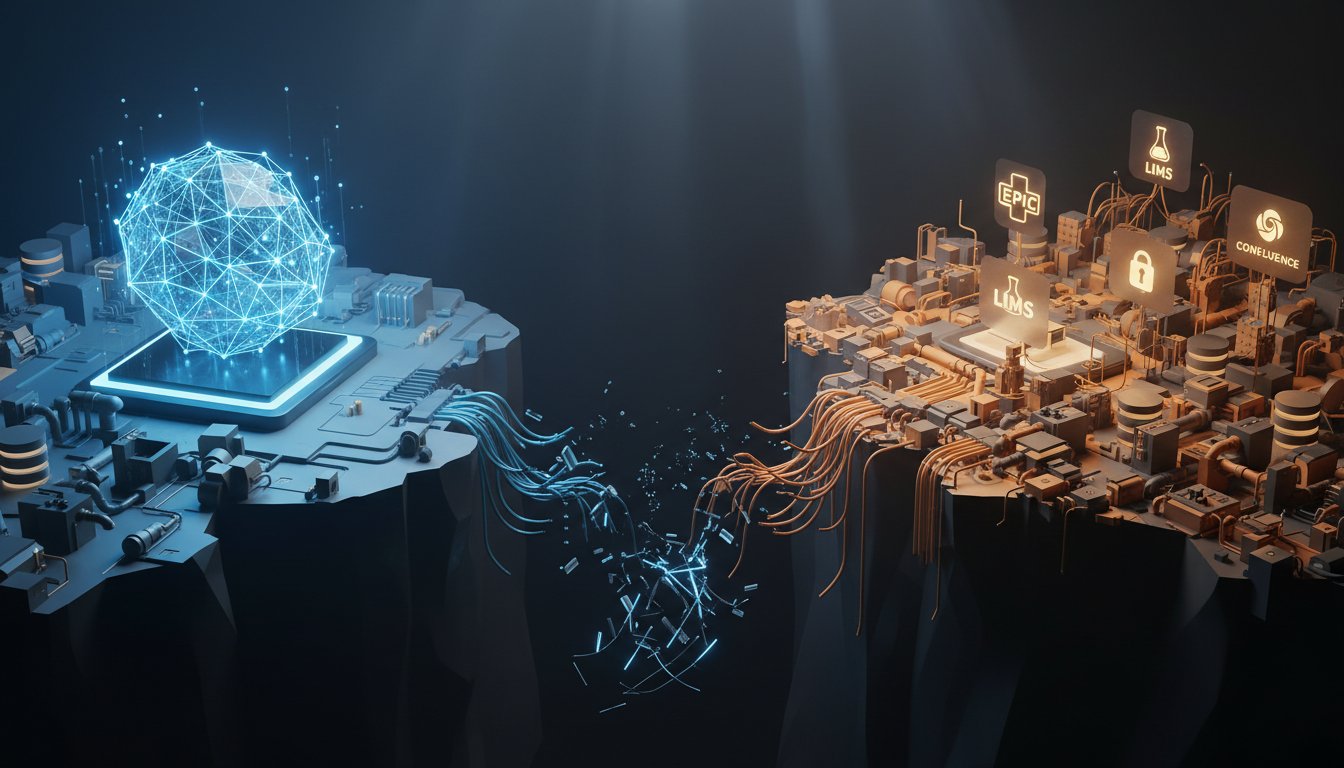

This is the quiet crisis in enterprise AI right now. Teams are pouring resources into a race for retrieval perfection, chasing marginal gains in embedding accuracy, while the real bottleneck quietly erodes trust and inflates costs. The problem isn’t finding the right information. It’s knowing what to do with it once you have it. The future of scalable, trustworthy enterprise RAG doesn’t lie in better search. It lies in smarter orchestration.

The Retrieval Trap: Why Better Embeddings Won’t Save Your RAG

For the last two years, the enterprise RAG narrative has been dominated by retrieval. The logic seemed sound: better embeddings lead to better context, which leads to better answers. Teams have benchmarked countless models, from OpenAI’s text-embedding-3 to open-source options like BGE-M3, all in pursuit of that perfect semantic match.

Yet a striking pattern has emerged from production deployments. According to the latest Enterprise RAG Performance Benchmark for Q1 2026, 68% of production RAG systems experience a greater than 40% drop in answer accuracy after just the third conversational turn when relying on static chunking and vector search alone.

This metric exposes the fundamental flaw in retrieval-first thinking: it treats each query as an independent, atomic event. But enterprise work isn’t like that. It’s conversational, iterative, and contextual. An analyst doesn’t ask “What were Q3 sales?” and then “What were the top products?” as two unrelated questions; the second is tied directly to the first. Static retrieval systems, no matter how accurate, lack the memory and routing intelligence to maintain that thread. They pull fresh, often overlapping chunks for each turn, flooding the context window with noise and forcing the LLM to reconcile contradictions that the retrieval pipeline itself introduced. You’re not solving a search problem. You’re facing a context management crisis.

The Agentic Workflow Reality Check

This failure mode gets worse in agentic systems, where an AI is tasked with completing multi-step workflows. Dr. Elena Rostova, Lead AI Architect at the Enterprise AI Consortium, put it plainly in April 2026: “Vector retrieval is no longer the bottleneck. Context decay and routing inefficiency are bleeding enterprise AI budgets. The next generation of RAG isn’t about better embeddings, it’s about smarter context orchestration.”

An agent writing a report needs to carry forward findings from its research phase into its drafting phase. A static RAG pipeline built for single-turn Q&A will force the agent to re-retrieve, and likely re-interpret, critical information at every step. That destroys coherence and explodes token costs.

Context Decay Explained: What’s Actually Breaking in Production

To fix the problem, you have to diagnose it correctly. The core issue is context decay: the progressive degradation of semantic coherence and task-specific relevance across a multi-turn interaction. It’s the difference between a focused dialogue and a series of disconnected statements.

Retrieving vs. Routing: The Critical Distinction

Most RAG pipelines are strong retrievers but terrible routers. They can find documents related to a keyword or concept, but they can’t intelligently decide which subset of all potentially relevant information is actually necessary for the current step in a conversation or workflow. This leads to two costly outcomes:

- Context Window Pollution: The system retrieves 10 relevant chunks. All ten get sent to the LLM, but only two are crucial for answering the specific nuance of the user’s follow-up question. The other eight act as noise, confusing the model and degrading answer quality.

- Semantic Fragmentation: In turn #1, the system retrieves chunk A, which defines a key term. In turn #2, it retrieves chunks B and C, which use that term but don’t redefine it. The LLM, now missing chunk A from its context, has to infer or hallucinate the term’s meaning, leading to inconsistency.
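To make both failure modes concrete, here is a minimal Python sketch of the opposite behavior: cap each turn's context to the top-scoring chunks, and re-pin the chunk that defined a term whenever a later chunk uses that term. The `defines` and `uses` fields are hypothetical metadata that an extraction step would have to produce; the class names are illustrative, not any library's API.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Chunk:
    """A retrieved chunk plus hypothetical term metadata from an extraction step."""
    chunk_id: str
    text: str
    score: float                      # retriever relevance score for this turn
    defines: frozenset = frozenset()  # terms this chunk defines
    uses: frozenset = frozenset()     # terms this chunk relies on without defining


@dataclass
class SessionContext:
    """Per-session memory of which chunk defined each term."""
    definitions: dict = field(default_factory=dict)  # term -> Chunk

    def assemble(self, retrieved, top_k=2):
        # Against window pollution: keep only the top_k chunks for this turn.
        kept = sorted(retrieved, key=lambda c: c.score, reverse=True)[:top_k]
        # Remember the first chunk seen that defines each term.
        for chunk in retrieved:
            for term in chunk.defines:
                self.definitions.setdefault(term, chunk)
        # Against fragmentation: re-pin definitions the kept chunks depend on.
        present = {c.chunk_id for c in kept}
        pinned = []
        for chunk in kept:
            for term in sorted(chunk.uses):
                definer = self.definitions.get(term)
                if definer is not None and definer.chunk_id not in present:
                    pinned.append(definer)
                    present.add(definer.chunk_id)
        return pinned + kept


session = SessionContext()
session.assemble([Chunk("A", "Net retention is defined as ...", 0.9,
                        defines=frozenset({"net retention"}))])
turn_2 = [
    Chunk("B", "Net retention rose in Q3 ...", 0.8, uses=frozenset({"net retention"})),
    Chunk("C", "Net retention by region ...", 0.7, uses=frozenset({"net retention"})),
    Chunk("D", "Unrelated office policy ...", 0.3),
]
print([c.chunk_id for c in session.assemble(turn_2)])  # ['A', 'B', 'C']
```

In turn 2, the noisy chunk D is dropped and chunk A, which the model would otherwise have to guess at, is carried forward ahead of B and C.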

This isn’t hypothetical. A Tier-1 financial services firm traced a 14.2% hallucination rate in its customer service chatbot directly to this fragmentation. The system knew the facts but couldn’t keep them organized across a conversation.

Dynamic Context Routing (DCR): The New Enterprise Standard

The industry’s response to context decay is a shift from static Retrieval-Augmented Generation to dynamic Context-Augmented Generation. The cornerstone of this new architecture is Dynamic Context Routing (DCR). DCR moves beyond “fetch everything vaguely related” to “intelligently manage, prioritize, and route the context needed right now.”

The DCR Architecture Breakdown

A solid DCR system isn’t a single tool. It’s a layered orchestration framework built on three core pillars:

- Graph-Augmented Memory Layer: This is your system’s long-term, structured memory. Instead of relying solely on vector embeddings, key entities (projects, clients, products, regulations) and their relationships are stored in a knowledge graph. This graph lets the system understand connections, not just similarities. When a user asks about “Project Alpha’s compliance status,” the graph knows that “Project Alpha” links to “SOC 2 report Q4-2025” and “Data Privacy Officer Jane Smith,” enabling precise, relational retrieval.

- Intent-Based Routing Engine: This component classifies the user’s current intent within the broader workflow. Is this a request for a definition, a comparison, or the next step? Based on that intent and the conversation history, the router decides which sources to query (vector store, graph, cached conversation history, external API) and how to weight or filter the results. It acts as a context traffic controller.

- Fallback and Validation Layers: These are your guardrails. A fallback mechanism ensures that if the graph or intent router fails, basic vector search still works. A validation layer, often a lightweight classifier or rule set, checks proposed context chunks for relevance or potential conflict before they’re sent to the expensive LLM.
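The graph, validation, and fallback pillars can be sketched in a few dozen lines of Python. This is a toy under stated assumptions: an in-memory dictionary stands in for NetworkX, Neo4j, or Amazon Neptune; a word-overlap ranker stands in for a real vector store; and the relation names (`has_report`, `owned_by`) are hypothetical. It shows only the control flow. It does a relational lookup first, then a validation pass that deduplicates facts and flags single-valued relations with conflicting targets, then falls back to vector search when the graph fails or finds nothing. The intent router is left out here.

```python
import re
from collections import defaultdict


class GraphMemory:
    """Toy graph layer standing in for NetworkX, Neo4j, or Amazon Neptune."""

    def __init__(self):
        self.edges = defaultdict(list)  # entity -> [(relation, target), ...]

    def link(self, source, relation, target):
        self.edges[source].append((relation, target))

    def facts_for(self, query):
        # Relational retrieval: find known entities named in the query and
        # return their outgoing edges as (entity, relation, target) facts.
        q = query.lower()
        return [(src, rel, dst)
                for src, outgoing in self.edges.items() if src.lower() in q
                for rel, dst in outgoing]


def tokens(text):
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def vector_search(query, corpus, k=2):
    """Stand-in for a vector store: ranks documents by shared words."""
    scored = [(len(tokens(query) & tokens(doc)), doc) for doc in corpus]
    return [doc for score, doc in sorted(scored, reverse=True)[:k] if score > 0]


def validate(facts, single_valued):
    """Validation layer: drop duplicate facts and flag single-valued
    relations that point at more than one target (a potential conflict)."""
    unique = list(dict.fromkeys(facts))
    targets = defaultdict(set)
    for src, rel, dst in unique:
        if rel in single_valued:
            targets[(src, rel)].add(dst)
    conflicts = [key for key, values in targets.items() if len(values) > 1]
    return unique, conflicts


def build_context(query, graph, corpus, single_valued=frozenset({"owned_by"})):
    try:
        facts = graph.facts_for(query)
    except Exception:
        facts = []  # Fallback: a failing graph layer must not block answers.
    facts, conflicts = validate(facts, single_valued)
    context = [f"{src} {rel} {dst}" for src, rel, dst in facts]
    if not context:
        context = vector_search(query, corpus)  # basic vector search still works
    return context, conflicts


graph = GraphMemory()
graph.link("Project Alpha", "has_report", "SOC 2 report Q4-2025")
graph.link("Project Alpha", "owned_by", "Data Privacy Officer Jane Smith")
corpus = ["Refund policy: 30 days.", "Travel policy: economy class."]
print(build_context("What is Project Alpha's compliance status?", graph, corpus))
```

Returning conflicts alongside the context, rather than silently picking one value, leaves the decision to a later rule or a human reviewer.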

The Proven Impact: Real-World Metrics

The financial services firm mentioned earlier implemented a DCR system to replace its static chunking pipeline. The results, validated over a full quarter of production traffic, were significant:

- Hallucination Rate: Reduced from 14.2% to 2.1%.

- Conversational Coherence: Accuracy degradation after turn #3 fell from >40% to under 12%.

- Operational Efficiency: Token consumption per complex conversation dropped by 34%.

- Cost Savings: Fewer redundant LLM calls and lower token usage translated to a direct monthly inference cost saving of $118,000.

These aren’t lab metrics. They’re the business outcomes of fixing context decay.

How to Implement DCR Without Rewriting Your Stack

Moving to a dynamic context model doesn’t require tearing everything down. It’s a strategic evolution. Here’s a four-step playbook for enterprise AI teams.

Step 1: Audit Your Current Context Window Utilization

You can’t manage what you don’t measure. Before writing a line of new code, instrument your existing RAG pipeline to log what’s actually happening in production.

- Tool: Use the open-source RAGAS framework or TruLens to evaluate conversation traces.

- Key Metric: Calculate a Context Relevance Score for each LLM call: the share of retrieved chunks that are actually cited in the final answer. If your score is consistently low (below 0.3, for example), you have severe window pollution.

- Action: Build a dashboard tracking accuracy vs. conversation turn length. This will visually confirm the decay curve.
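Both audit measurements are simple to compute once traces are logged. A minimal sketch, assuming (hypothetically) that your prompt asks the model to cite sources as `[chunk_id]` and that an evaluator such as RAGAS or TruLens has already written a per-turn accuracy score into rows of `(session_id, turn, score)`. The citation count is a cheap proxy for window pollution, not a reimplementation of either framework's metrics.

```python
import re
from collections import defaultdict
from statistics import mean


def citation_utilization(chunk_ids, answer):
    """Share of the chunks sent to the LLM that the answer actually cites.

    Assumes the prompt asks the model to cite sources as [chunk_id]; this is a
    simple proxy for window pollution, not a RAGAS or TruLens metric.
    """
    if not chunk_ids:
        return 0.0
    cited = set(re.findall(r"\[([^\]]+)\]", answer))
    return len(cited & set(chunk_ids)) / len(chunk_ids)


def accuracy_by_turn(records):
    """Mean accuracy per turn index from logged (session_id, turn, score) rows."""
    by_turn = defaultdict(list)
    for _session_id, turn, score in records:
        by_turn[turn].append(score)
    return {turn: mean(scores) for turn, scores in sorted(by_turn.items())}


print(citation_utilization(["c1", "c2", "c3", "c4"], "Answer text citing [c1] and [c3]."))  # 0.5
rows = [("s1", 1, 1.0), ("s1", 2, 0.5), ("s2", 1, 0.8), ("s2", 2, 0.7)]
print(accuracy_by_turn(rows))  # per-turn means: the points to plot on the dashboard
```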

Step 2: Implement Hybrid Retrieval with a Basic Router

Start layering in intelligence. Keep your vector store, but stop using it as the only source of truth.

Tactics:

- Add a Conversation Cache: Dedicate a small, fast key-value store (like Redis) to hold the explicit conversation history for each session. Always prepend the last two turns of Q&A to the prompt as primary context.

- Introduce a Graph Layer: Use a library like NetworkX or a purpose-built graph database like Neo4j or Amazon Neptune to start storing core entity relationships extracted from your documents.

- Build a Rule-Based Router: Create a simple classifier (using a small model or regex rules) to detect intents like “follow-up,” “definition,” or “comparison.” Based on intent, write rules like:

IF intent=="follow-up" THEN query conversation_cache FIRST, THEN graph for related entities, FINALLY fallback to vector search.
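These tactics can be combined into a small routing function. This sketch rests on several assumptions: an in-memory `deque` per session stands in for Redis (a Redis list capped with `LTRIM` would play the same role), the intent rules are simple regexes, and `graph_lookup` and `vector_lookup` are hypothetical caller-supplied functions. It follows the follow-up rule literally. The recent turns come first and are also used to resolve entities for the graph query; vector search runs only as the final fallback.

```python
import re
from collections import defaultdict, deque


class ConversationCache:
    """In-memory stand-in for a per-session Redis store of recent Q&A turns."""

    def __init__(self, max_turns=2):
        self.turns = defaultdict(lambda: deque(maxlen=max_turns))

    def add(self, session_id, question, answer):
        self.turns[session_id].append((question, answer))

    def recent(self, session_id):
        return list(self.turns.get(session_id, ()))


# Ordered regex rules; the first match wins, anything else is "other".
INTENT_RULES = [
    ("definition", re.compile(r"^(what is|what does|define)\b", re.I)),
    ("comparison", re.compile(r"\b(compare|versus|vs|difference between)\b", re.I)),
    ("follow-up", re.compile(r"^(and|what about|how about|also)\b|\b(it|that|those|them)\b", re.I)),
]


def classify(question):
    for intent, pattern in INTENT_RULES:
        if pattern.search(question):
            return intent
    return "other"


def build_prompt_context(question, session_id, cache, graph_lookup, vector_lookup):
    intent = classify(question)
    # The last turns of Q&A are always prepended as primary context.
    history = [f"Q: {q} A: {a}" for q, a in cache.recent(session_id)]
    retrieved = []
    if intent == "follow-up":
        # Cache first: resolve entities against recent turns, then query the graph.
        retrieved = graph_lookup(" ".join([question, *history]))
    elif intent in ("definition", "comparison"):
        retrieved = graph_lookup(question)
    if not retrieved:
        retrieved = vector_lookup(question)  # final fallback
    return intent, history + retrieved


# Hypothetical lookups for the usage example.
FACTS = {"project alpha": ["Project Alpha owned_by Jane Smith"]}


def graph_lookup(text):
    return [fact for key, facts in FACTS.items() if key in text.lower() for fact in facts]


def vector_lookup(text):
    return [f"<top vector hits for: {text}>"]


cache = ConversationCache()
cache.add("s1", "What is Project Alpha's compliance status?", "Covered by the SOC 2 report Q4-2025.")
print(build_prompt_context("What about its owner?", "s1", cache, graph_lookup, vector_lookup))
```

The follow-up "What about its owner?" never names Project Alpha; routing it through the cached turn is what lets the graph lookup find the right entity.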

Step 3: Add Automated Context Decay Monitoring

Make degradation visible. Run automated checks on a sample of production conversations.

- Framework: Define a Context Decay Index (CDI). One simple formula:

CDI = (Accuracy at Turn 1 - Accuracy at Turn N) / Accuracy at Turn 1

Set an alert threshold (CDI > 0.25, for instance).

- Automation: Use a workflow tool like Prefect or Airflow to run your RAGAS evaluations nightly on logged conversations and flag any sessions that breach your CDI threshold for review.
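The CDI formula and its alert rule translate directly into a check that a nightly job could run. A minimal sketch, assuming per-turn accuracy scores have already been computed (for example by a RAGAS evaluation) and stored as a list per session; the Prefect or Airflow wiring is omitted, and the session IDs and scores are made up.

```python
def context_decay_index(accuracy_turn_1, accuracy_turn_n):
    """CDI = (Accuracy at Turn 1 - Accuracy at Turn N) / Accuracy at Turn 1."""
    if accuracy_turn_1 <= 0:
        raise ValueError("Turn-1 accuracy must be positive")
    return (accuracy_turn_1 - accuracy_turn_n) / accuracy_turn_1


def flag_sessions(per_session_accuracy, threshold=0.25):
    """Return session IDs whose CDI (first vs. last scored turn) exceeds threshold.

    per_session_accuracy maps session_id -> list of per-turn accuracy scores.
    """
    flagged = []
    for session_id, scores in per_session_accuracy.items():
        if len(scores) < 2 or scores[0] <= 0:
            continue  # not enough turns to measure decay
        if context_decay_index(scores[0], scores[-1]) > threshold:
            flagged.append(session_id)
    return flagged


scores = {"s-101": [0.9, 0.8, 0.7, 0.6], "s-102": [0.8, 0.75, 0.7]}
print(flag_sessions(scores))  # ['s-101']
```

Session s-101 decays by (0.9 - 0.6) / 0.9 ≈ 0.33 and breaches the 0.25 threshold; s-102 decays by only 0.125.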

Step 4: Benchmark with Enterprise-Ready Metrics

Move beyond academic benchmarks. Define what success looks like for your business and measure it consistently.

Essential metrics to track:

- Business Accuracy: The percentage of answers validated as correct by subject matter experts for your core use cases.

- Cost per Successful Query: (Infrastructure + LLM cost) / (number of queries whose answers score above 90% in SME review).

- Mean Conversation Length to Resolution: How many turns does it take to solve a user’s problem? This should decrease with better context management.

- Router Efficiency: What percentage of queries were resolved by the conversation cache or graph layer alone, avoiding a costly vector search?
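Several of these metrics reduce to simple ratios over a query log. A sketch, assuming a hypothetical log record in which SME review marks each answer as correct or not (a boolean simplification of the 90% bar) and the router records which layer resolved the query. The turns-to-resolution helper further assumes each logged session ends when the user's problem is resolved.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class QueryLog:
    """One logged query; the field names are hypothetical."""
    session_id: str
    correct: bool       # answer validated as correct by an SME
    resolved_by: str    # "cache", "graph", or "vector"


def business_accuracy(logs):
    return sum(q.correct for q in logs) / len(logs) if logs else 0.0


def cost_per_successful_query(infra_cost, llm_cost, logs):
    successes = sum(q.correct for q in logs)
    return (infra_cost + llm_cost) / successes if successes else float("inf")


def router_efficiency(logs):
    """Share of queries resolved by the cache or graph without a vector search."""
    if not logs:
        return 0.0
    return sum(q.resolved_by in ("cache", "graph") for q in logs) / len(logs)


def mean_turns_to_resolution(logs):
    """Average logged turns per session (assumes sessions end when resolved)."""
    turns = {}
    for q in logs:
        turns[q.session_id] = turns.get(q.session_id, 0) + 1
    return mean(list(turns.values())) if turns else 0.0


logs = [
    QueryLog("s1", True, "cache"),
    QueryLog("s1", True, "graph"),
    QueryLog("s2", False, "vector"),
    QueryLog("s2", True, "vector"),
]
print(business_accuracy(logs), cost_per_successful_query(1500, 3000, logs),
      router_efficiency(logs), mean_turns_to_resolution(logs))
```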

The Cost Reality: Where Your AI Budget Is Actually Bleeding

The push for DCR isn’t just about accuracy. It’s a fundamental cost optimization strategy. Follow the money.

The Token Waste of Redundant Retrieval

Every chunk retrieved and sent to the LLM costs money. In a static system handling a five-turn series of questions about a single project, it’s common to see 60-80% overlap in the retrieved chunks across turns. You’re paying, repeatedly, for the same information to be processed. A DCR system with an effective conversation cache and graph can cut this redundancy sharply, directly lowering your per-conversation token bill.
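You can measure this overlap directly from retrieval logs. A minimal sketch, assuming you log the chunk IDs retrieved at each turn and know each chunk's token count. For each turn after the first, it reports the share of chunks that were already retrieved earlier in the conversation. It also totals the tokens spent on those repeats, an upper bound on what a cache could avoid re-sending. The chunk IDs and token counts below are made up.

```python
def redundancy_report(turns, tokens_per_chunk):
    """For each turn after the first, the share of retrieved chunks already
    retrieved earlier in the conversation, plus the total tokens spent on them.

    turns: list of lists of chunk IDs, one list per conversational turn.
    tokens_per_chunk: dict mapping chunk ID -> token count.
    """
    seen = set()
    overlap_per_turn = []
    repeated_tokens = 0
    for index, chunk_ids in enumerate(turns):
        ids = set(chunk_ids)
        repeats = ids & seen
        if index > 0 and ids:
            overlap_per_turn.append(len(repeats) / len(ids))
        repeated_tokens += sum(tokens_per_chunk[c] for c in repeats)
        seen |= ids
    return overlap_per_turn, repeated_tokens


# Hypothetical five-turn conversation about one project, 500 tokens per chunk.
turns = [["a", "b", "c"], ["b", "c", "d"], ["a", "b", "c"], ["c", "d", "e"], ["a", "b", "e"]]
tokens = {c: 500 for c in "abcde"}
overlaps, repeated = redundancy_report(turns, tokens)
print([round(x, 2) for x in overlaps], repeated)  # [0.67, 1.0, 0.67, 1.0] 5000
```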

Infrastructure Overhead vs. Accuracy Gains

There’s a fair concern here: aren’t graph databases and routing engines just more infrastructure to manage? The key is total cost of ownership (TCO). Yes, a Neo4j cluster has a cost. But compare it to the alternative: scaling your vector database and LLM inference capacity to handle the much larger, less efficient query load a naive system needs to reach the same accuracy. DCR infrastructure cost tends to be fixed or to grow roughly linearly, while the LLM cost savings from reduced tokens and fewer failed queries grow with scale.
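A back-of-the-envelope TCO check makes the trade-off concrete. The sketch below treats DCR infrastructure as a fixed monthly cost and computes the conversation volume at which per-conversation token savings cover it. The dollar figures are hypothetical placeholders, and the 34% reduction echoes the case study above. The model ignores savings from fewer failed queries, so it is conservative on that axis.

```python
import math


def breakeven_volume(fixed_monthly_infra, baseline_cost_per_conv, token_reduction):
    """Monthly conversations needed for token savings to cover fixed DCR infra."""
    saving_per_conv = baseline_cost_per_conv * token_reduction
    if saving_per_conv <= 0:
        return math.inf
    return math.ceil(fixed_monthly_infra / saving_per_conv)


def net_monthly_saving(volume, fixed_monthly_infra, baseline_cost_per_conv, token_reduction):
    """Positive once volume passes the break-even point."""
    return volume * baseline_cost_per_conv * token_reduction - fixed_monthly_infra


# Hypothetical inputs: $6,000/month for the graph DB and router, $0.50 per conversation.
print(breakeven_volume(6000, 0.50, 0.34))            # 35295
print(net_monthly_saving(100_000, 6000, 0.50, 0.34))
```

Below roughly 35,000 conversations a month in this made-up scenario, the extra infrastructure costs more than it saves; above it, savings grow linearly with volume.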

The ROI Timeline for Migration

Based on observed deployments, the ROI for implementing DCR follows a clear pattern:

- Months 1-2 (Pilot): Initial setup and tuning cost. Some added complexity.

- Month 3 (Scale): Token costs for piloted use cases drop by 20-30%. Accuracy metrics stabilize.

- Months 4-6 (Production): Full deployment. Monthly inference savings surpass the operational cost of the new DCR infrastructure. Net positive ROI achieved.

- Ongoing: Fewer support tickets, higher user trust, and the ability to support more complex, valuable agentic workflows become the primary long-term returns.

What Enterprise AI Teams Should Do Next

The gap between a working RAG proof-of-concept and a production-ready, scalable AI assistant isn’t filled with more powerful retrieval. It’s filled with more intelligent context management. The teams that will win in 2026 and beyond are those that stop fine-tuning the fetch and start mastering the flow. They’ll build systems that remember, reason, and route, turning fragmented data into coherent, trustworthy dialogue.

Start with an audit. We’ve compiled the essential checks into a Context Routing Audit Checklist. This downloadable framework walks you through the four-step playbook: from measuring your current pipeline’s decay to designing your first hybrid router. Stop letting context decay eat into your ROI. [Download the checklist here] and lay the foundation for the dynamic, cost-effective enterprise RAG system your business actually needs.