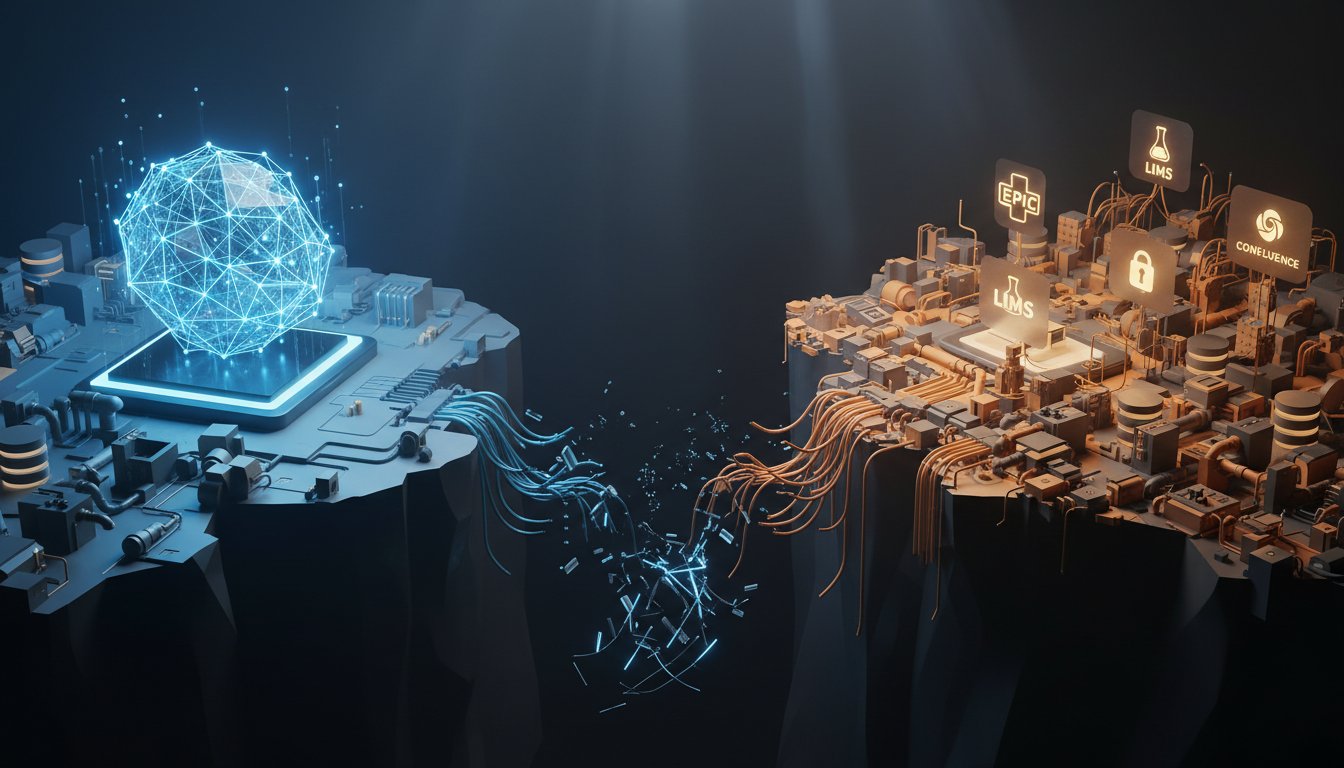

A Fortune 500 healthcare client came to us recently with a problem that’s becoming terrifyingly common. Their new retrieval-augmented generation (RAG) system, built on a leading cloud platform, could answer questions about their public-facing documentation with impressive accuracy. But when they tried to connect it to their core enterprise systems, things fell apart fast. The secure patient records in Epic, the clinical trial data locked in a proprietary LIMS, the internal research wikis on Confluence: none of it worked. The AI either hallucinated wildly or returned a polite “I don’t have information on that.”

For three months, their data engineering team wrote custom API connectors, bespoke data loaders, and fragile synchronization scripts. What they ended up with was a brittle, expensive, and nearly impossible-to-audit pipeline that took weeks to update whenever a source system changed. The project was declared a failure weeks before launch. Not because the AI was unintelligent, but because the bridge between the AI and the enterprise data simply couldn’t be built with traditional tools.

This isn’t a story about a flawed LLM or poor vector search. It’s the story of a silent infrastructure crisis playing out across enterprise AI: the gap between large language models and the messy, siloed reality of corporate data. Most teams spend 80% of their RAG effort here, wrestling with custom integrations that become technical debt on day one.

The solution emerging from this chaos isn’t another proprietary API or a new vector database. It’s a standardized protocol. The Model Context Protocol (MCP) represents a fundamental architectural shift, moving from one-off, brittle integrations to plug-and-play, auditable, and secure data bridges. If you’re building enterprise RAG, this isn’t a feature to consider later. It’s the foundational layer you need to get right now.

Why Your Custom RAG Integrations Are Failing

Enterprise RAG systems aren’t monolithic applications. They’re complex federations. A single query might need context from a SQL database, a ticketing system like Jira, a document repository like SharePoint, and a real-time analytics dashboard. The traditional approach of writing a custom Python script or Lambda function for each source creates a house of cards.

The Hidden Costs of Connector Sprawl

Every custom connector you write is a liability. First, there’s development velocity. As one AI Infrastructure Lead at Kanerika put it, “If your retrieval layer can’t trace back to exact document boundaries with RBAC enforcement, you’re building a liability, not an asset.” Each connector requires unique authentication logic, rate-limiting handling, error recovery, and data transformation code. A team might start with three connectors but quickly finds itself maintaining twenty.

Then there’s the audit and compliance nightmare. When an AI provides an answer, compliance officers need to know: which source system provided this data? When was it last updated? Was the user who asked the question authorized to see this specific record? In a custom integration sprawl, answering those questions means tracing through layers of bespoke code, making compliance audits slow, expensive, and often inconclusive.

The Performance and Accuracy Trap

Beyond maintenance, ad-hoc integrations destroy performance and accuracy. A connector that works well for batch-loading documents from a file server might completely fail when asked for real-time query results from a customer database. Without a standard way to describe a data source’s capabilities, like whether it can be searched, filtered, or queried live, the RAG system can’t intelligently route requests. The result is high latency, timeouts, and incomplete context that leads directly to AI hallucinations.

What Model Context Protocol Solves (And Why It’s Different)

MCP isn’t a product or a vendor platform. It’s an open standard, a set of specifications for how any tool (an LLM, an IDE, an analytics dashboard) can securely discover, query, and interact with any data source or service. Think of it as USB-C for enterprise AI: a universal plug that allows any compliant “device” (your data source) to work smoothly with any compliant “host” (your LLM application).

The Core Shift: From Integration to Orchestration

MCP fundamentally changes the relationship between your AI and your data. Instead of your RAG application containing hardcoded logic to talk to Salesforce, the Salesforce environment hosts an MCP server, a small, standardized service that speaks the MCP language. Your RAG application, acting as an MCP client, simply discovers this server and asks it questions using a universal protocol.
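
To make the "universal protocol" concrete: MCP messages are framed as JSON-RPC 2.0, and a client discovers and invokes tools with the `tools/list` and `tools/call` methods. The sketch below only builds those request envelopes with the standard library. The tool name `query_accounts` and its arguments are hypothetical, and a real session would begin with an `initialize` handshake and run over a transport that is omitted here.

```python
import json
from itertools import count
from typing import Optional

# JSON-RPC request ids must be unique within a session.
_request_ids = count(1)


def jsonrpc_request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request envelope, the framing MCP uses."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


# Discovery: ask the server which tools it exposes.
list_tools_request = jsonrpc_request("tools/list")

# Invocation: call a discovered tool by name.
# "query_accounts" and its arguments are hypothetical placeholders.
call_tool_request = jsonrpc_request(
    "tools/call",
    {"name": "query_accounts", "arguments": {"region": "EMEA", "limit": 5}},
)

print(json.dumps(call_tool_request, indent=2))
```

The point of the inversion is visible in the payload: nothing in it is specific to Salesforce except the tool name and arguments, which the client learned from the server at runtime.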

This inversion is powerful. The team that owns the Salesforce instance, who best understands its data model, security policies, and quirks, owns the MCP server. They can update it without breaking the RAG system. The AI team can now focus on prompt engineering and evaluation, not maintaining brittle data pipes.

Real-World Capabilities: Beyond Simple Fetching

MCP servers aren’t just fancy query endpoints. The protocol defines rich interactions:

– Tools: Servers can expose specific functions. A GitHub server might expose “search_issues,” “get_file,” and “create_pull_request.”

– Resources: Servers can make data streams or documents available, like “readme.md” or “daily_sales_report.”

– Prompts: Servers can provide pre-built prompt templates tailored to their data, like “summarize_this_pull_request.”

This allows your RAG system to do more than retrieve text. It can ask a database server to run an aggregation query, request a BI tool to generate this week’s KPI chart, or instruct a CRM to update a contact record. The LLM becomes a true orchestrator of enterprise workflows.
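
The three primitives above each have a listing method in the protocol: `tools/list`, `resources/list`, and `prompts/list`. As a rough sketch of what a server advertises, here is a toy in-memory catalog for the GitHub example. The `ServerCatalog` class, the `repo://` URI, and the descriptions are illustrative assumptions; only the method names and the top-level result keys follow the protocol.

```python
from dataclasses import dataclass, field


@dataclass
class ServerCatalog:
    """Toy stand-in for what an MCP server advertises to clients."""
    tools: list = field(default_factory=list)
    resources: list = field(default_factory=list)
    prompts: list = field(default_factory=list)

    def handle_list(self, method: str) -> dict:
        """Answer tools/list, resources/list, or prompts/list."""
        kind, _, verb = method.partition("/")
        if verb != "list" or kind not in ("tools", "resources", "prompts"):
            raise ValueError(f"unsupported method: {method}")
        return {kind: getattr(self, kind)}


# Hypothetical catalog mirroring the GitHub example in the text.
github = ServerCatalog(
    tools=[{
        "name": "search_issues",
        "description": "Search issues in a repository.",
        "inputSchema": {
            "type": "object",
            "properties": {"query": {"type": "string"}},
            "required": ["query"],
        },
    }],
    resources=[{"uri": "repo://example/readme.md", "name": "readme.md"}],
    prompts=[{
        "name": "summarize_this_pull_request",
        "description": "Summarize a pull request for reviewers.",
    }],
)

for method in ("tools/list", "resources/list", "prompts/list"):
    print(method, "->", github.handle_list(method))
```

Because each tool carries a JSON Schema for its inputs, the orchestrator can hand the catalog to the LLM and let it choose which capability fits the question.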

5 Strategies to Cut RAG Costs with MCP Architecture

Adopting MCP isn’t just about cleaner architecture. It has a direct, measurable impact on the total cost of ownership of your RAG system. Here’s how a standardized protocol drives real efficiency.

1. Eliminate Custom Connector Development

The most immediate saving is developer time. Instead of spending weeks building and testing a connector for ServiceNow, you can deploy an existing open-source MCP server for it or, where none exists, implement a thin one. Development effort shifts from “how do we talk to this system?” to “how do we best use the capabilities this system exposes?” This can cut the data integration phase of a RAG project by 60-70%, allowing teams to move from proof-of-concept to production in weeks, not quarters.

2. Implement Intelligent, Cost-Aware Routing

With MCP, every data source describes its own capabilities: each tool advertises a name, a description, and an input schema. The core specification does not define a cost field, but a server can state in a tool’s description, or in custom metadata your team agrees on, that a query is computationally expensive or that it accesses premium, metered data. Your RAG orchestrator can use this metadata to make smarter decisions. For example, it can avoid hitting a costly commercial API for simple fact-checks that a cheaper internal wiki could answer. AWS Machine Learning Engineering teams have observed that “hallucination rates drop by up to 60% when enterprises implement LLM-based semantic similarity detectors alongside traditional vector search.” MCP provides the framework to build such hybrid, cost-optimized retrieval strategies on top of a common interface.
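
A minimal sketch of what cost-aware routing could look like in the orchestrator. Everything here is illustrative: the `Source` record, the topic tags, and especially `cost_per_call` are assumptions of this example, a convention a team would layer on top of the tool metadata rather than a field the protocol itself provides.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Source:
    server: str            # name of the MCP server (hypothetical)
    tool: str              # tool to call on that server (hypothetical)
    topics: frozenset      # topics this source can answer
    cost_per_call: float   # team-defined convention, not an MCP field


def route(topic: str, sources: Iterable[Source], budget: float) -> Optional[Source]:
    """Pick the cheapest source that covers the topic within budget."""
    candidates = [
        s for s in sources
        if topic in s.topics and s.cost_per_call <= budget
    ]
    if not candidates:
        return None
    return min(candidates, key=lambda s: s.cost_per_call)


sources = [
    Source("internal_wiki", "search_pages", frozenset({"pricing", "policy"}), 0.0),
    Source("market_api", "lookup", frozenset({"pricing", "market_data"}), 0.25),
]

cheap_choice = route("pricing", sources, budget=1.0)
metered_choice = route("market_data", sources, budget=1.0)
over_budget = route("market_data", sources, budget=0.10)
print(cheap_choice, metered_choice, over_budget, sep="\n")
```

A production router would also weigh freshness and expected answer quality. The sketch only shows that once sources are described uniformly, the routing logic itself stays small.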

3. Reduce Token Consumption with Structured Data

A major hidden cost in RAG is context window inflation. Dumping raw API JSON or entire document chunks into a prompt wastes tokens and money. MCP servers can handle data transformation at the source. A database server can return a concise natural language summary instead of a raw table. An analytics server can return “Q3 sales grew 15% YoY” instead of a massive CSV. This source-side preprocessing, guided by the LLM’s needs via the protocol, can reduce context token counts by 30-50% per query, which directly lowers LLM inference costs.
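
Here is a small sketch of that source-side preprocessing, using the sales example. The row layout and the `summarize_sales` helper are hypothetical. The idea is simply that the server computes the figure and returns one sentence instead of shipping every row into the prompt.

```python
import json


def summarize_sales(rows: list, quarter: str, year: int) -> str:
    """Collapse raw sales rows into a one-line year-over-year summary."""
    current = sum(r["amount"] for r in rows
                  if r["quarter"] == quarter and r["year"] == year)
    previous = sum(r["amount"] for r in rows
                   if r["quarter"] == quarter and r["year"] == year - 1)
    if previous == 0:
        return f"{quarter} sales were {current:,.0f}; no prior-year baseline."
    growth = (current - previous) * 100 / previous
    direction = "grew" if growth >= 0 else "fell"
    return f"{quarter} sales {direction} {abs(growth):.0f}% YoY"


# Hypothetical rows a database or analytics server might hold.
rows = [
    {"region": "East", "quarter": "Q3", "year": 2023, "amount": 100},
    {"region": "West", "quarter": "Q3", "year": 2023, "amount": 100},
    {"region": "East", "quarter": "Q3", "year": 2024, "amount": 115},
    {"region": "West", "quarter": "Q3", "year": 2024, "amount": 115},
]

summary = summarize_sales(rows, "Q3", 2024)
raw_payload = json.dumps(rows)
print(summary)
print(f"summary: {len(summary)} chars, raw rows: {len(raw_payload)} chars")
```

The trade-off is that a summary discards detail, so a server would typically offer both a summary tool and a raw-data tool and let the orchestrator choose.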

4. Simplify Auditing and Lower Compliance Risk

As noted in the AugmentCode Production Systems Guide, “Multimodal RAG isn’t a luxury anymore; it’s a compliance requirement.” MCP makes audit trails far easier to build into the system. Every tool call is a structured request that names the server and the tool, so the client can tag each piece of retrieved context with its source. Written to an append-only log, those records give compliance a clear trail: “Answer X was generated using data from the Epic MCP server via the ‘get_patient_summary’ tool.” The cost of manual compliance verification drops significantly, and the risk of costly compliance failures is contained.
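
One way a client might record that provenance is sketched below. The record fields, the hash chaining, and the `audit_record` and `verify_chain` helpers are assumptions of this example, not something the protocol supplies. The chain makes tampering detectable, and the record stores a hash of the result rather than the result itself so that sensitive content stays out of the log.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(payload: dict) -> str:
    return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()


def audit_record(user, server, tool, arguments, result_text, prev_hash=""):
    """Describe one tool call and link it to the previous log entry."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "server": server,
        "tool": tool,
        "arguments": arguments,
        # Hash only: the log proves what was returned without storing it.
        "result_sha256": hashlib.sha256(result_text.encode()).hexdigest(),
        "prev_hash": prev_hash,
    }
    entry["entry_hash"] = _digest(entry)
    return entry


def verify_chain(entries) -> bool:
    """Check that no entry was altered, removed, or reordered."""
    prev = ""
    for entry in entries:
        body = {k: v for k, v in entry.items() if k != "entry_hash"}
        if entry["prev_hash"] != prev or entry["entry_hash"] != _digest(body):
            return False
        prev = entry["entry_hash"]
    return True


# Hypothetical calls; the user, ids, and result text are placeholders.
first = audit_record("dr_lee", "epic", "get_patient_summary",
                     {"patient_id": "example-123"}, "summary text")
second = audit_record("dr_lee", "epic", "get_patient_summary",
                      {"patient_id": "example-456"}, "another summary",
                      prev_hash=first["entry_hash"])
print(verify_chain([first, second]))
```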

5. Enable Dynamic, Serverless Data Pipelines

Static vector indexes are expensive to rebuild and keep fresh. MCP enables a more dynamic, cost-effective pattern. Instead of batch-dumping data into a vector store, your RAG client can query live MCP servers on demand. For rapidly changing data, this eliminates re-indexing costs entirely. For stable reference data, you can still use a vector cache, but the MCP server acts as the single source of truth for cache invalidation and updates. This hybrid approach, championed by AWS and Oracle’s serverless vector search initiatives, improves both cost and data freshness.
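
The hybrid pattern can be sketched as a small time-bounded cache in front of a live lookup. `FreshnessCache` and the `fetch_live` callable are hypothetical. In a real deployment `fetch_live` would wrap a call to an MCP server, and `invalidate` could be triggered by the server’s update notifications where it supports them.

```python
import time


class FreshnessCache:
    """Serve cached context while it is fresh; otherwise query the live source."""

    def __init__(self, fetch_live, ttl_seconds, clock=time.monotonic):
        self._fetch_live = fetch_live
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries = {}  # key -> (stored_at, value)

    def get(self, key):
        now = self._clock()
        hit = self._entries.get(key)
        if hit is not None and now - hit[0] < self._ttl:
            return hit[1]
        value = self._fetch_live(key)  # stands in for a live MCP call
        self._entries[key] = (now, value)
        return value

    def invalidate(self, key):
        """Drop an entry, e.g. when the source reports a change."""
        self._entries.pop(key, None)


# Demonstration with a fake source and a controllable clock.
calls = []
fake_now = [0.0]


def fake_fetch(key):
    calls.append(key)
    return f"live:{key}:{len(calls)}"


cache = FreshnessCache(fake_fetch, ttl_seconds=60, clock=lambda: fake_now[0])
first = cache.get("leave_policy")    # miss: goes to the source
second = cache.get("leave_policy")   # hit: served from cache
fake_now[0] = 61.0
third = cache.get("leave_policy")    # expired: goes to the source again
cache.invalidate("leave_policy")
fourth = cache.get("leave_policy")   # invalidated: goes to the source again
print(first, second, third, fourth, len(calls))
```

Setting `ttl_seconds` to zero gives the fully live behavior described for rapidly changing data. A long TTL plus explicit invalidation suits stable reference data.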

Building Your First MCP-Enabled RAG System: A Practical Start

Transitioning doesn’t require a full rewrite. Start with a single, high-value data source.

Step 1: Identify and Isolate a Pilot Source

Choose an internal system with a well-defined API that’s critical to business queries but painful to integrate, like your company’s internal IT knowledge base (a Confluence instance, for example) or customer support ticket system (Zendesk). The goal is to prove the pattern on a manageable scope.

Step 2: Deploy or Build an MCP Server

First, check the growing ecosystem of open-source MCP servers (for tools like GitHub, PostgreSQL, Slack, and others). If one exists for your source, deploy it. If not, use an MCP SDK (available in Python, TypeScript, and Go, among other languages) to build a simple server. Your first server might only expose one tool: search_knowledge_base(query: str). The implementation is just a wrapper around the source system’s existing API.
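
To show how thin that wrapper can be, here is a standard-library sketch of the request handling behind a single `search_knowledge_base` tool. It hand-rolls the JSON-RPC dispatch purely for illustration; in practice an MCP SDK would handle the handshake, transport, and schema generation for you. The in-memory `KNOWLEDGE_BASE` is a hypothetical stand-in for the source system’s real API.

```python
# Hypothetical stand-in for the source system's existing search API.
KNOWLEDGE_BASE = {
    "vpn": "To reset VPN access, open a ticket in the IT portal.",
    "laptop": "Laptop refreshes are scheduled every three years.",
}

TOOLS = [{
    "name": "search_knowledge_base",
    "description": "Search the internal IT knowledge base.",
    "inputSchema": {
        "type": "object",
        "properties": {"query": {"type": "string"}},
        "required": ["query"],
    },
}]


def search_knowledge_base(query: str) -> str:
    """Naive keyword match; a real server would call the source API here."""
    hits = [text for key, text in KNOWLEDGE_BASE.items() if key in query.lower()]
    return "\n".join(hits) if hits else "No matching articles."


def _ok(request_id, result):
    return {"jsonrpc": "2.0", "id": request_id, "result": result}


def _error(request_id, code, message):
    return {"jsonrpc": "2.0", "id": request_id,
            "error": {"code": code, "message": message}}


def handle(request: dict) -> dict:
    """Dispatch the two methods a one-tool server needs."""
    method = request.get("method")
    params = request.get("params") or {}
    request_id = request.get("id")
    if method == "tools/list":
        return _ok(request_id, {"tools": TOOLS})
    if method == "tools/call":
        if params.get("name") != "search_knowledge_base":
            return _error(request_id, -32602, "Unknown tool")
        text = search_knowledge_base(params.get("arguments", {}).get("query", ""))
        return _ok(request_id, {"content": [{"type": "text", "text": text}],
                                "isError": False})
    return _error(request_id, -32601, "Method not found")


response = handle({
    "jsonrpc": "2.0", "id": 1, "method": "tools/call",
    "params": {"name": "search_knowledge_base",
               "arguments": {"query": "How do I reset my VPN?"}},
})
print(response["result"]["content"][0]["text"])
```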

Step 3: Integrate an MCP Client into Your RAG Orchestrator

Modify your existing RAG application, or build a new one using a framework like LangChain or LlamaIndex (both are adding MCP support), to act as an MCP client. Configure it to connect to your new server. Then replace the custom code that called the knowledge base API with a call to the MCP server’s tool.
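
On the client side, the swap can be as small as the sketch below. The retrieval function depends only on a `call_tool(name, arguments)` callable, which is an assumption of this example: in a real system it would be backed by your framework’s or SDK’s MCP client session, while here a fake is injected so the flow can run on its own.

```python
from typing import Callable


def retrieve_context(question: str,
                     call_tool: Callable[[str, dict], dict]) -> str:
    """Fetch context through an MCP tool instead of a bespoke API client."""
    result = call_tool("search_knowledge_base", {"query": question})
    if result.get("isError"):
        return ""
    # Tool results carry a list of content blocks; keep the text ones.
    return "\n".join(
        block["text"] for block in result.get("content", [])
        if block.get("type") == "text"
    )


def build_prompt(question: str, context: str) -> str:
    """Assemble a grounded prompt; the wording is illustrative only."""
    if not context:
        return f"Question: {question}\nNo supporting context was found."
    return f"Context:\n{context}\n\nQuestion: {question}"


def fake_call_tool(name: str, arguments: dict) -> dict:
    """Hypothetical stand-in for a real MCP client session."""
    return {
        "content": [{"type": "text",
                     "text": f"Article matching '{arguments['query']}'"}],
        "isError": False,
    }


question = "How do I reset my VPN?"
prompt = build_prompt(question, retrieve_context(question, fake_call_tool))
print(prompt)
```

Keeping the transport behind a callable also makes the old direct API call and the new MCP call interchangeable, which helps when comparing the two side by side.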

Step 4: Evaluate and Iterate

Measure the difference. Track:

– Developer Hours Saved: In maintenance and future modifications.

– Query Latency: Compare the MCP-mediated call to the old direct API call.

– Token Efficiency: Is the server returning more concise, useful data?

– Operational Clarity: Is logging and debugging easier?

Use this data to build a business case for expanding the pattern to your next three data sources.
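
A rough harness for the latency and token-efficiency comparison might look like the following. The two retrieval functions are fakes standing in for the old direct call and the new MCP-mediated call, and word count is only a crude proxy for tokens. For real numbers, substitute your actual retrieval paths and your model’s tokenizer.

```python
import statistics
import time


def measure(retrieve, queries, repeats=3):
    """Time a retrieval function and estimate the size of what it returns."""
    latencies, sizes = [], []
    for query in queries:
        payload = ""
        for _ in range(repeats):
            start = time.perf_counter()
            payload = retrieve(query)
            latencies.append(time.perf_counter() - start)
        sizes.append(len(payload.split()))  # crude token proxy
    return {
        "median_latency_s": statistics.median(latencies),
        "mean_words": statistics.mean(sizes),
    }


def fake_direct_api(query):
    """Hypothetical legacy path that returns a verbose raw payload."""
    return " ".join(["raw_field"] * 200) + " " + query


def fake_mcp_tool(query):
    """Hypothetical MCP path that returns a concise summary."""
    return f"Summary for {query}"


queries = ["vpn reset", "laptop refresh"]
direct_report = measure(fake_direct_api, queries)
mcp_report = measure(fake_mcp_tool, queries)
print("direct:", direct_report)
print("mcp:   ", mcp_report)
```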

The Future of Enterprise RAG Is Federated, Not Centralized

The trajectory is clear. The enterprise RAG market, projected to hit $1.94B, is being driven by demands for granular access control, dynamic content adaptation, and production-grade evaluation. The monolithic RAG application that tries to own all data integration is a dead-end model.

The future belongs to federated systems where business units own their data interfaces via MCP servers, and AI teams orchestrate intelligence across them. That’s how you build systems that are secure, auditable, cost-effective, and able to evolve as fast as your business does.

As that healthcare client discovered when it hit the integration wall, an enterprise’s most valuable data is locked behind the silos of legacy systems and modern SaaS applications. The choice is no longer between building custom bridges and going without. MCP offers a third path: a standardized, secure, and sustainable way to connect your AI to the heart of your business. Start by bridging one silo. You’ll quickly find it’s the architecture that finally makes enterprise RAG not just possible, but genuinely powerful.

Ready to move beyond brittle integrations? Explore our implementation guides and architecture reviews to see how leading teams are deploying MCP to turn their data silos into connected intelligence. The bridge is built. You just need to start using it.