Your enterprise RAG system retrieves documents flawlessly. It returns precise answers from your knowledge base. Your retrieval metrics look solid. Then someone asks about the diagram in last quarter’s engineering review. Or wants to analyze customer service call recordings. Or needs insights from product demo videos.

Your RAG system goes silent.

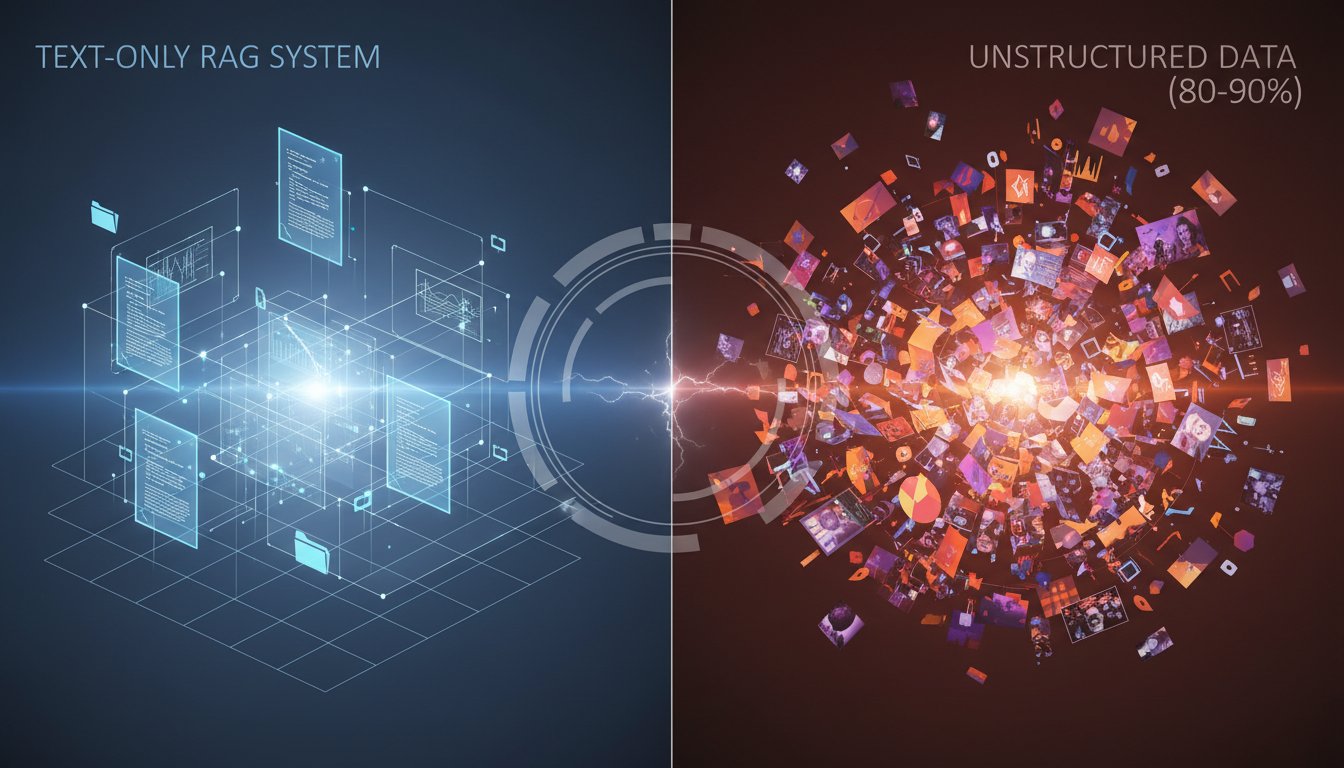

This isn’t a failure of your implementation. It’s a fundamental architectural limitation that an estimated 80% of enterprise RAG deployments share: you built a text-only retrieval system for a world where an estimated 80-90% of enterprise data is unstructured, much of it multimodal content such as images, audio, video, and complex documents that blend all three.

Terminology matters here. A “text-only RAG” system can only embed, index, and retrieve textual information. It might handle PDFs or Word documents, but it’s extracting and processing only the words, ignoring charts, diagrams, images, formatting, and visual context. A “multimodal RAG” system can natively process, embed, and retrieve across text, images, audio, and video within a unified architecture.

On March 9, 2026, Teradata announced capabilities that make this limitation impossible to ignore. Their Enterprise Vector Store now enables AI agents to autonomously process text, images, and audio at enterprise scale, with general availability planned for April 2026. The announcement highlights what many RAG architects already know but haven’t prioritized: the retrieval gap isn’t just about finding the right document anymore. It’s about finding the right information regardless of format.

This matters because the data composition in your enterprise has fundamentally shifted. Text-only architectures made sense when documents were predominantly text. They don’t make sense when your customer service insights live in call recordings, your product knowledge exists in demo videos, and your institutional knowledge is captured in slide decks with more diagrams than words.

The Hidden Cost of Format Fragmentation

Most enterprise teams don’t start with a multimodal RAG requirement. They start with a clear use case: “We need to retrieve information from our documentation.” The initial implementation works. Retrieval accuracy hits acceptable thresholds. The project moves to production.

Then the scope expands. Marketing wants to search presentation archives. Customer success needs to query support call recordings. Product teams want to find specific features demonstrated in video walkthroughs. Engineering needs to locate diagrams buried in technical specifications.

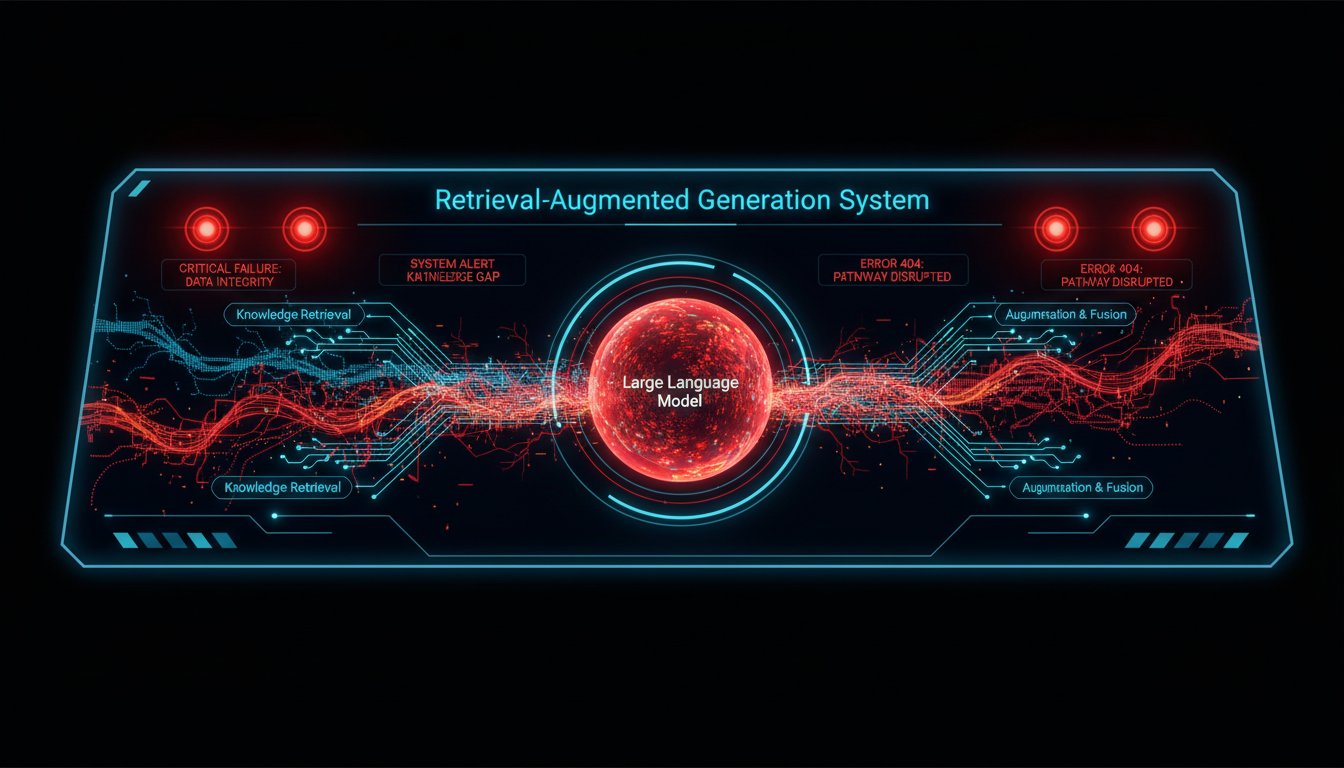

The typical response is to build separate systems. A text RAG system for documents. An image search tool for visual assets. A speech-to-text pipeline feeding a different retrieval system for audio. Video gets its own workflow. Each system has its own vector database, its own embedding model, its own infrastructure, and its own maintenance burden.

The cost implications are substantial. Industry analyses suggest that maintaining separate systems for different data types costs 15-30% more than unified multimodal approaches, due to duplicated infrastructure, separate maintenance cycles, and integration complexity. But the real cost isn’t just financial. It’s the lost connections between modalities.

When your systems are fragmented by format, you lose the ability to reason across modalities. You can’t connect what was said in a customer call with what’s shown in the product documentation diagram. You can’t link the audio from an engineering standup with the architecture diagram in the follow-up email. Your retrieval becomes siloed by format, not by semantic relevance.

This fragmentation creates what we call “format-specific blind spots.” A user query like “Show me how our authentication flow handles multi-factor scenarios” should return the written specification, the architecture diagram, the implementation walkthrough video, and the security audit recording. In a text-only RAG system, you get the specification. Everything else requires manual searching across disconnected systems.

What Multimodal Actually Means in Practice

The term “multimodal RAG” gets thrown around without much clarity about what it actually requires at an architectural level. The distinction matters because there are fundamentally different approaches, each with different tradeoffs.

Approach 1: Text Conversion Pipeline

The simplest multimodal approach converts everything to text. Images get captioned via image-to-text models. Audio gets transcribed via speech-to-text. Videos get frame-sampled and captioned. Everything flows into a traditional text-only vector database.

This works for simple retrieval tasks. If someone searches for “quarterly revenue discussion,” transcribed meeting audio will surface. But this approach fundamentally limits what you can retrieve. You can only find what the conversion pipeline captured in text form. Complex diagrams reduce to simple captions. Nuanced audio tones and emphasis disappear into flat transcripts. Visual relationships in images are lost.

This is sometimes called “multimodal input” rather than true multimodal RAG, because the retrieval mechanism itself remains text-only. You’re accepting multiple input formats but reducing them to a single modality for processing.
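To make the lossy step concrete, here is a minimal sketch of a text conversion pipeline. Everything in it is an assumption for illustration: `caption_image` and `transcribe_audio` are hypothetical stubs standing in for real captioning and speech-to-text models, and a bag-of-words cosine score stands in for a text embedding model.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class Asset:
    asset_id: str
    modality: str  # "text", "image", or "audio"
    payload: str   # stand-in for raw bytes or a file path


def caption_image(payload: str) -> str:
    """Hypothetical stub for an image-captioning model."""
    return f"diagram showing {payload}"


def transcribe_audio(payload: str) -> str:
    """Hypothetical stub for speech-to-text; the payload plays the spoken words."""
    return payload


def to_text(asset: Asset) -> str:
    """Reduce any asset to text. This is where non-textual detail is lost."""
    if asset.modality == "image":
        return caption_image(asset.payload)
    if asset.modality == "audio":
        return transcribe_audio(asset.payload)
    return asset.payload


def tokenize(text: str) -> Counter:
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def build_index(assets):
    # One text-only index, regardless of the original modality.
    return {a.asset_id: tokenize(to_text(a)) for a in assets}


def search(index, query, k=3):
    q = tokenize(query)
    scored = [(asset_id, round(cosine(q, vec), 3)) for asset_id, vec in index.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]


index = build_index([
    Asset("notes", "text", "Notes on the login page redesign"),
    Asset("call-17", "audio", "we discussed quarterly revenue and the revenue forecast"),
    Asset("arch-diagram", "image", "authentication flow"),
])

print(search(index, "quarterly revenue discussion", k=1))  # the transcript surfaces
print(search(index, "token refresh arrow"))  # nothing matches: the caption never said it
```

The second query is the point: if the diagram showed a token refresh arrow but the caption didn't mention it, no amount of retrieval tuning will find it.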

Approach 2: Separate Modality Stores

A more sophisticated approach maintains separate vector stores for each modality. Text goes into one vector database with text embeddings. Images go into another with vision embeddings, such as those produced by CLIP’s image encoder. Audio gets its own store with audio-specific embeddings.

Retrieval happens across all stores in parallel, then results get re-ranked and merged based on relevance scores. This preserves modality-specific information that conversion pipelines lose. An image search can find visually similar diagrams, not just textually similar captions.

The challenge is integration complexity. You’re managing multiple embedding models, multiple vector databases, and complex re-ranking logic. Query performance depends on the slowest modality store. And most critically, you still lose cross-modality semantic connections. The relationship between what’s written in a document and what’s shown in a related diagram exists only in your re-ranking logic, not in the embeddings themselves.
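A rough sketch of the fan-out-and-merge pattern shows where that re-ranking logic lives. The three `search_*_store` functions are hypothetical stand-ins returning canned results on deliberately different score scales, and min-max normalization is just one possible merge heuristic, not a recommendation.

```python
from concurrent.futures import ThreadPoolExecutor


# Hypothetical per-modality stores. Each returns (doc_id, raw_score)
# on its own scale, which is what makes merging awkward.
def search_text_store(query):
    return [("spec.md", 0.82), ("faq.md", 0.61), ("changelog.md", 0.40)]


def search_image_store(query):
    return [("auth-diagram.png", 31.0), ("network.png", 25.0), ("logo.png", 12.0)]


def search_audio_store(query):
    return [("standup.wav", 0.15), ("allhands.wav", 0.05)]


STORES = {
    "text": search_text_store,
    "image": search_image_store,
    "audio": search_audio_store,
}


def min_max(results):
    """Rescale one store's scores to [0, 1] so stores become comparable."""
    if not results:
        return []
    scores = [score for _, score in results]
    lo, hi = min(scores), max(scores)
    span = hi - lo
    return [(doc, (score - lo) / span if span else 1.0) for doc, score in results]


def federated_search(query, stores, k=3):
    # Query every store in parallel; total latency tracks the slowest one.
    with ThreadPoolExecutor(max_workers=max(1, len(stores))) as pool:
        futures = {name: pool.submit(fn, query) for name, fn in stores.items()}
        per_store = {name: future.result() for name, future in futures.items()}

    merged = [
        (doc, name, score)
        for name, results in per_store.items()
        for doc, score in min_max(results)
    ]
    merged.sort(key=lambda row: row[2], reverse=True)
    return merged[:k]


for doc, modality, score in federated_search("authentication flow", STORES):
    print(f"{score:.2f}  {modality:5s}  {doc}")
```

Notice that every store's best hit normalizes to 1.0, whether or not it is actually relevant to the query. The cross-modality ranking is an artifact of the merge heuristic, which is exactly the weakness described above.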

Approach 3: Unified Multimodal Embeddings

The most advanced approach uses models that create unified embeddings across modalities. These models map text, images, and audio into a shared vector space where semantic similarity works across format boundaries.

A text query like “authentication architecture” can retrieve textually similar documents, visually similar architecture diagrams, and audio discussions about authentication, all ranked by semantic relevance in a single unified retrieval operation.
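As a toy illustration of that single retrieval operation, the sketch below ranks items of three modalities with one cosine-similarity pass. The three-dimensional vectors are hand-made assumptions standing in for the output of a real multimodal embedding model, and `QUERY_VEC` stands in for the embedded query “authentication architecture”.

```python
import math

# Hypothetical pre-computed embeddings in ONE shared vector space.
# Real embeddings would have hundreds or thousands of dimensions.
CORPUS = [
    ("auth-spec.md", "text", [0.9, 0.1, 0.0]),
    ("auth-architecture.png", "image", [0.8, 0.2, 0.1]),
    ("security-review.wav", "audio", [0.7, 0.1, 0.3]),
    ("q3-revenue.pptx", "image", [0.0, 0.9, 0.2]),
]

# Stand-in for embed("authentication architecture").
QUERY_VEC = [1.0, 0.1, 0.1]


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def search(query_vec, corpus, k=3):
    """One ranking pass over all modalities; no per-store merge step."""
    scored = [(name, modality, cosine(query_vec, vec)) for name, modality, vec in corpus]
    scored.sort(key=lambda row: row[2], reverse=True)
    return scored[:k]


for name, modality, score in search(QUERY_VEC, CORPUS):
    print(f"{score:.3f}  {modality:5s}  {name}")
```

Because all items share one space, the scores are directly comparable and the top three results here span text, image, and audio without any normalization heuristics.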

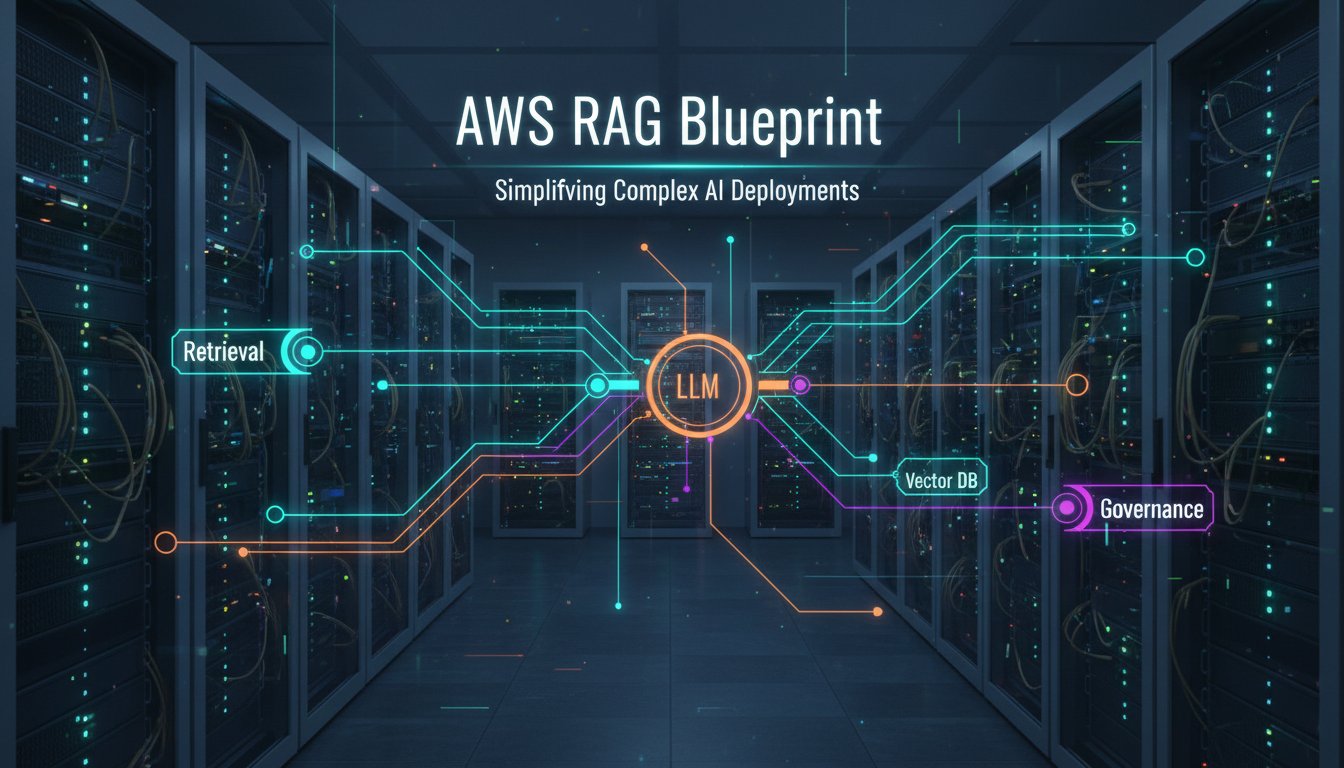

This is what Teradata’s announcement enables. Their Enterprise Vector Store supports multi-modal embeddings for text, image, and audio with up to 8K dimensions each, combined with hybrid search that integrates semantic, lexical, and metadata-driven retrieval within a unified platform. The integration with LangChain allows these capabilities to plug directly into existing RAG pipelines without requiring complete architectural rewrites.

The technical specification matters here: 8K-dimension embeddings provide substantially more representational capacity than typical 1536-dimension text embeddings. This additional dimensionality enables richer semantic capture across modalities, though it also increases computational requirements for similarity search at scale.
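A back-of-the-envelope calculation makes the tradeoff tangible. This sketch assumes “8K” means 8192 dimensions, that vectors are stored as uncompressed 32-bit floats, and it ignores index overhead, quantization, and replication, all of which change the real numbers.

```python
BYTES_PER_FLOAT32 = 4
TEXT_DIMS = 1536
MULTIMODAL_DIMS = 8192  # assumption: "8K" read as 8192


def raw_index_bytes(num_vectors, dims, bytes_per_value=BYTES_PER_FLOAT32):
    """Raw vector payload only, with no index structures or metadata."""
    return num_vectors * dims * bytes_per_value


per_vector_text = raw_index_bytes(1, TEXT_DIMS)
per_vector_multimodal = raw_index_bytes(1, MULTIMODAL_DIMS)
ratio = per_vector_multimodal / per_vector_text

ONE_BILLION = 10**9
text_tb = raw_index_bytes(ONE_BILLION, TEXT_DIMS) / 1e12
multimodal_tb = raw_index_bytes(ONE_BILLION, MULTIMODAL_DIMS) / 1e12

print(f"per vector: {per_vector_text} B vs {per_vector_multimodal} B ({ratio:.2f}x)")
print(f"1B vectors: {text_tb:.3f} TB vs {multimodal_tb:.3f} TB")
```

Under these assumptions each vector grows from about 6 KB to about 32 KB, roughly 5.3x, and a brute-force similarity scan does proportionally more arithmetic per comparison.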

The Performance Equation Nobody Discusses

Multimodal RAG introduces performance considerations that don’t exist in text-only systems. The computational cost of processing images, audio, and video is orders of magnitude higher than text. A typical text document might be a few hundred kilobytes. A single high-resolution image is several megabytes. A five-minute audio recording ranges from a few megabytes compressed to tens of megabytes uncompressed. A ten-minute video is hundreds of megabytes to gigabytes.

This isn’t just a storage question. It’s a fundamental retrieval performance question. When your vector database needs to search across billions of high-dimensional multimodal embeddings, query latency becomes critical.

This is where Teradata’s massively parallel processing architecture becomes relevant. According to their technical specifications, the platform handles billions of vectors and supports thousands of concurrent queries while scaling linearly without performance degradation. For enterprise teams used to vector databases that slow down as data grows, this represents a fundamentally different performance profile.

But here’s the performance consideration most teams miss: retrieval latency in multimodal systems depends not just on vector search speed, but on embedding generation latency. When a user submits a query, you need to generate embeddings for that query, which is fast for text, slower for images, and substantially slower for audio and video.

Smart multimodal RAG architectures pre-compute embeddings for all stored content, so retrieval speed depends only on vector search, not on real-time embedding generation. But this requires substantial upfront processing. Ingesting a new video into your RAG system might require hours of embedding computation, compared to seconds for a text document.
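The pattern can be sketched in a few lines. The `embed` function below is a hypothetical stand-in: it hashes the content into a small vector so the example runs without a model, which means the vectors carry no semantic meaning. The call counter is the part to watch, since it shows where the expensive work happens.

```python
import hashlib
import math

EMBED_CALLS = {"count": 0}  # counts how often the "model" runs


def embed(content: str, dims: int = 8) -> list:
    """Hypothetical stand-in for an expensive embedding model.

    Deterministic and hash-based, so it has no semantic meaning.
    """
    EMBED_CALLS["count"] += 1
    digest = hashlib.sha256(content.encode("utf-8")).digest()
    return [byte / 255 for byte in digest[:dims]]


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class PrecomputedIndex:
    def __init__(self):
        self._vectors = {}

    def ingest(self, doc_id: str, content: str) -> None:
        # The slow step runs once per item, at ingestion time.
        self._vectors[doc_id] = embed(content)

    def search(self, query: str, k: int = 1) -> list:
        # Only the query is embedded at request time.
        query_vec = embed(query)
        ranked = sorted(
            self._vectors.items(),
            key=lambda item: cosine(query_vec, item[1]),
            reverse=True,
        )
        return [doc_id for doc_id, _ in ranked[:k]]


index = PrecomputedIndex()
index.ingest("demo-video", "product demo walkthrough")
index.ingest("support-call", "customer reports login failure")
index.ingest("design-doc", "authentication flow specification")
calls_after_ingest = EMBED_CALLS["count"]

top = index.search("product demo walkthrough")
calls_after_query = EMBED_CALLS["count"]

print(calls_after_ingest, calls_after_query, top)
```

Three embedding calls happen at ingestion and exactly one more at query time, no matter how large the corpus grows.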

The partnership Teradata announced with Unstructured addresses this ingestion challenge. Unstructured specializes in multimodal data ingestion and processing, handling the complex pipeline of extracting, processing, and preparing diverse document types, images, and audio for embedding generation. This integration means enterprises don’t need to build custom ingestion pipelines for every document format and media type they encounter.

When Text-Only Is Actually Good Enough

Despite the compelling case for multimodal RAG, there are legitimate scenarios where text-only architectures remain the right choice. Knowing when not to invest in multimodal capabilities is just as important as knowing when to invest.

If your enterprise data is genuinely text-heavy (legal documents, contracts, policies, written technical specifications) and your retrieval use cases focus on textual information, the added complexity and cost of multimodal processing may not be justified. Converting occasional diagrams or images to text descriptions might be sufficient.

If your team lacks the expertise to manage multimodal embedding models, vector stores, and complex ingestion pipelines, starting with text-only reduces implementation risk. You can always evolve to multimodal later as your team’s capabilities mature.

If latency requirements are extremely strict, say sub-100ms retrieval, text-only systems offer simpler performance optimization paths. Multimodal retrieval introduces additional latency sources that are harder to fine-tune.

But here’s the critical question: are you choosing text-only because it genuinely fits your requirements, or because it’s what you know how to build? Many enterprise RAG projects default to text-only not because of thoughtful architectural analysis, but because it’s the path of least resistance. The teams building these systems have text embedding expertise. They know how to implement text retrieval. Multimodal feels like unknown territory.

The Teradata announcement matters because it lowers the barrier to multimodal capability. Integration with LangChain means teams already using LangChain for RAG pipelines can add multimodal retrieval without abandoning their existing architecture. Native Python and SQL support means developers can work with familiar tools rather than learning entirely new frameworks.

Real-World Multimodal Use Cases from the Teradata Announcement

The Teradata press release highlighted four specific enterprise applications that illustrate where multimodal RAG delivers value text-only systems simply can’t match.

Healthcare Visual Q&A: Medical professionals query patient imaging alongside textual clinical notes and diagnostic audio recordings. A cardiologist asks, “Show me cases similar to this echocardiogram with elevated troponin levels.” The system retrieves visually similar echocardiogram images, textual case notes mentioning troponin, and audio recordings of case discussions, all ranked by clinical relevance.

This use case fails completely in text-only RAG. Image captions like “echocardiogram showing cardiac activity” lose all the visual diagnostic information that makes the image valuable. Radiologists and specialists rely on visual pattern recognition that can’t be reduced to text descriptions.

Insurance Claims Automation: Claims processors query damage photos, written incident reports, and recorded customer statements simultaneously. A query like “Find claims involving rear-end collision with frame damage under $10K” returns photos showing frame damage, textual damage assessments, customer audio describing the accident, and repair estimates.

The business value here is claims processing speed. Instead of manually searching through photos, reading reports, and listening to recordings, adjusters get ranked relevant information across all formats in a single retrieval operation. Industry estimates suggest this type of multimodal retrieval can reduce claims processing time by 30-40%.

Defense Intelligence: Intelligence analysts correlate satellite imagery, mission reports, and intercepted communications. A query like “Show activity near this facility in the last 30 days” retrieves satellite images showing vehicle movements, textual intelligence reports mentioning the facility, and audio intercepts from the area.

This is a scenario where cross-modality semantic connections are critical. The satellite image might show something suspicious, the intelligence report might provide context, and the audio intercept might confirm intent. Text-only RAG breaks this analysis into separate manual steps. Multimodal RAG enables unified reasoning across intelligence sources.

Business Loyalty Agents: Customer service agents query purchase history, product images, customer service call recordings, and chat transcripts to understand customer context. When a customer calls about a product issue, the agent’s RAG-powered assistant retrieves the product image, previous calls about similar issues, chat transcripts showing troubleshooting steps already attempted, and relevant knowledge base articles.

The customer experience difference is dramatic. Instead of asking the customer to repeat information they’ve already provided in previous calls or chats, the agent has complete multimodal context. That’s the kind of experience that distinguishes world-class customer service from frustrating repeated explanations.

The Migration Path from Text-Only to Multimodal

For enterprise teams currently running text-only RAG systems, the question isn’t whether to eventually support multimodal retrieval. It’s when and how. Ripping out a working production RAG system to rebuild with multimodal capability is a non-starter for most organizations. The migration path needs to be incremental and low-risk.

Phase 1: Assess Your Data Composition

Start by understanding what percentage of your enterprise data is actually non-text. Many teams assume their data is text-heavy without validating that assumption. Run an analysis of your document repositories, shared drives, and content management systems. Categorize by format: text documents, presentations with heavy visual content, images, audio recordings, video.

If more than 30% of your data is non-text, multimodal capability will likely deliver measurable value. If less than 10% is non-text, text-only might remain appropriate. The 10-30% range requires deeper analysis of specific use cases.
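A first pass at this assessment can be as simple as bucketing files by extension. The sketch below makes several assumptions worth stating: it measures share by file count rather than bytes, the extension map is illustrative and incomplete, PDFs are counted as text even though they often contain figures, and presentations are counted as non-text.

```python
from collections import Counter
from pathlib import Path

# Illustrative mapping; extend it for your own repositories.
MODALITY_BY_EXT = {
    ".txt": "text", ".md": "text", ".docx": "text", ".pdf": "text",
    ".pptx": "presentation", ".key": "presentation",
    ".png": "image", ".jpg": "image", ".jpeg": "image",
    ".mp3": "audio", ".wav": "audio",
    ".mp4": "video", ".mov": "video",
}


def classify(path) -> str:
    return MODALITY_BY_EXT.get(Path(path).suffix.lower(), "other")


def non_text_share(paths):
    """Return (counts per modality, non-text fraction of recognized files)."""
    counts = Counter(classify(p) for p in paths)
    known = sum(n for modality, n in counts.items() if modality != "other")
    if known == 0:
        return counts, 0.0
    return counts, (known - counts["text"]) / known


def band(share: float) -> str:
    """Apply the 10% and 30% thresholds from the text above."""
    if share > 0.30:
        return "multimodal likely valuable"
    if share < 0.10:
        return "text-only may remain appropriate"
    return "analyze specific use cases"


def scan(root):
    """Collect every file path under a directory tree."""
    return [p for p in Path(root).rglob("*") if p.is_file()]


sample = ["a.md", "b.txt", "c.docx", "d.png", "e.mp3",
          "f.mp4", "g.pptx", "h.md", "i.txt", "j.xyz"]
counts, share = non_text_share(sample)
print(dict(counts), f"{share:.0%}", band(share))
```

To run it against a real repository, pass `scan("/path/to/share")` instead of the sample list. Weighting by bytes or by query volume rather than file count may give a very different picture, so treat the result as a starting point.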

Phase 2: Identify High-Value Multimodal Use Cases

Not all multimodal data is equally valuable. Focus on use cases where retrieval across formats directly impacts business outcomes. Customer service scenarios where agents need visual context. Product development workflows where engineers need to find specific features in demo videos. Sales enablement where reps need to locate specific slides from presentation libraries.

Prioritize use cases where the current workaround is particularly painful, whether that’s manual searching across multiple systems, repeated requests to colleagues who “know where that diagram is,” or abandoned searches because finding the right video takes too long.

Phase 3: Pilot with a Single Additional Modality

Don’t try to go from text-only to fully multimodal overnight. Add one additional modality to your existing RAG system. If presentations with diagrams are a pain point, add image retrieval. If customer call analysis is valuable, add audio.

This incremental approach lets you learn multimodal embedding models, tune retrieval parameters, and understand performance implications without the risk of a complete architectural overhaul. The Teradata-LangChain integration is specifically designed for this incremental approach. You can add multimodal retrieval to existing LangChain RAG pipelines without rewriting from scratch.

Phase 4: Evaluate Unified vs. Separate Stores

Once you’ve validated multimodal retrieval value with a pilot, decide whether to continue with separate modality stores or migrate to unified multimodal embeddings. This decision depends on your specific performance requirements, data volumes, and cross-modality retrieval importance.

If cross-modality semantic connections are critical, as in the defense intelligence use case, unified embeddings deliver better results. If modalities are largely independent, so that image search is always about visual similarity and text search is always about textual similarity, separate stores might perform better.

Phase 5: Scale Infrastructure Appropriately

Multimodal RAG at enterprise scale requires infrastructure that can handle substantially higher computational and storage requirements than text-only systems. This is where the performance specifications of platforms like Teradata’s become critical, covering billions of vectors, thousands of concurrent queries, and linear scaling without performance degradation.

Many teams underestimate infrastructure requirements and hit performance walls after initial pilots succeed but production scaling fails. Plan for 5-10x higher storage requirements and 3-5x higher compute requirements compared to equivalent text-only RAG systems.
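For planning conversations, it can help to turn those multipliers into explicit ranges. This is a trivial sketch that applies the 5-10x and 3-5x rules of thumb from the paragraph above to a text-only baseline; the baseline figures and the "compute units" label are placeholders, not measurements.

```python
STORAGE_MULTIPLIER = (5, 10)  # rule of thumb from the text
COMPUTE_MULTIPLIER = (3, 5)   # rule of thumb from the text


def multimodal_estimate(text_storage_tb, text_compute_units):
    """Return (low, high) planning ranges scaled from a text-only baseline."""
    return {
        "storage_tb": tuple(text_storage_tb * m for m in STORAGE_MULTIPLIER),
        "compute_units": tuple(text_compute_units * m for m in COMPUTE_MULTIPLIER),
    }


# Placeholder baseline: 2 TB of storage and 16 compute units for text-only RAG.
estimate = multimodal_estimate(2, 16)
print(estimate)
```

Budgeting against the high end of each range leaves room for the gap between a successful pilot and production load.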

What the Teradata Announcement Signals About Enterprise RAG Evolution

Beyond the specific technical capabilities, the Teradata announcement signals a broader shift in how enterprise platforms are approaching RAG infrastructure. Several aspects deserve attention.

Autonomous Agent Processing: The announcement emphasizes AI agents autonomously processing multimodal data, not just retrieving it. This suggests a shift from RAG as a retrieval tool to RAG as an agent capability platform. Agents don’t just answer questions. They process images, analyze audio, correlate across formats, and take action based on multimodal understanding.

This is consistent with broader industry movement from RAG-as-retrieval to RAG-as-infrastructure for agentic AI. When agents need to understand context to make decisions, that context increasingly exists across multiple formats.

Hybrid Search Architecture: The Teradata platform combines semantic, lexical, and metadata-driven search. This matters because pure semantic search, or vector similarity, doesn’t always return the best results. Sometimes users want exact keyword matches. Sometimes they want to filter by metadata like creation date, author, or document type.

Mature enterprise RAG systems need all three retrieval mechanisms working together. The hybrid search approach suggests the industry is moving beyond pure vector search to more sophisticated retrieval architectures.
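Here is one way the three mechanisms can combine, as a minimal sketch rather than a description of how Teradata implements it. Metadata acts as a hard filter, then a weighted sum blends a semantic score (cosine over toy vectors) with a lexical score (query-term overlap). The `alpha` weight, the documents, and the vectors are all illustrative assumptions.

```python
import math
from dataclasses import dataclass


@dataclass
class Doc:
    doc_id: str
    text: str
    vector: list    # stand-in for a real embedding
    metadata: dict


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def lexical_score(query: str, text: str) -> float:
    """Fraction of query terms that appear verbatim in the text."""
    q_terms = set(query.lower().split())
    t_terms = set(text.lower().split())
    return len(q_terms & t_terms) / len(q_terms) if q_terms else 0.0


def hybrid_search(query, query_vec, docs, filters=None, alpha=0.6, k=3):
    filters = filters or {}
    # 1. Metadata: hard filter before any scoring.
    candidates = [
        d for d in docs
        if all(d.metadata.get(key) == value for key, value in filters.items())
    ]
    # 2. Blend semantic and lexical relevance.
    scored = [
        (d.doc_id,
         alpha * cosine(query_vec, d.vector)
         + (1 - alpha) * lexical_score(query, d.text))
        for d in candidates
    ]
    scored.sort(key=lambda row: row[1], reverse=True)
    return scored[:k]


DOCS = [
    Doc("mfa-spec", "multi-factor authentication flow specification",
        [0.9, 0.1], {"type": "spec", "year": 2025}),
    Doc("sso-notes", "single sign-on meeting notes",
        [0.8, 0.3], {"type": "notes", "year": 2025}),
    Doc("mfa-legacy", "multi-factor authentication legacy design",
        [0.9, 0.1], {"type": "spec", "year": 2019}),
]

results = hybrid_search(
    "multi-factor authentication", [1.0, 0.1], DOCS, filters={"year": 2025}
)
print(results)
```

The legacy design is semantically and lexically a strong match, but the metadata filter removes it before scoring. Among the remaining candidates, the exact keyword match lifts the specification well above the semantically similar meeting notes.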

Massively Parallel Processing for Vector Search: Traditional vector databases are purpose-built for vector search workloads. Teradata is bringing massively parallel processing architecture, originally designed for analytical database workloads, to vector search. This is a different architectural approach that prioritizes enterprise-scale concurrent query performance over pure single-query latency.

For enterprise RAG deployments serving thousands of users simultaneously, this architectural choice potentially matters more than raw vector search speed.

April 2026 General Availability: The short gap between the March 9, 2026 announcement and the planned April 2026 GA suggests these capabilities are aimed at production use, not research projects. For enterprise teams evaluating multimodal RAG options, this means the capabilities can be included in 2026 roadmap planning rather than treated as a speculative future consideration.

Making the Multimodal Decision for Your RAG Architecture

Every enterprise RAG team needs to answer this question: does multimodal capability matter for our use cases, or is text-only sufficient?

Here’s a practical decision framework.

Choose text-only if:

– More than 90% of your retrieval queries target textual information

– Your enterprise data is genuinely text-heavy, such as legal documents, contracts, and written specifications

– Your team lacks multimodal embedding and processing expertise

– Your performance requirements demand sub-100ms retrieval latency

– Your infrastructure budget is constrained and text-only meets requirements

Choose multimodal if:

– More than 30% of your enterprise data is non-text, including images, audio, and video

– Your use cases require cross-format retrieval, such as diagrams plus specifications or calls plus transcripts

– You’re building agent-based systems that need to understand visual and audio context

– Current workarounds (separate systems per format) create friction for users

– You’re seeing user complaints about not being able to find non-text content

Pilot multimodal incrementally if:

– You’re in the 10-30% non-text data range

– You have specific high-value use cases but limited broader requirements

– You want to build team expertise before full commitment

– You need to validate ROI before infrastructure investment

– Your existing RAG architecture can extend to multimodal, for example through LangChain integration

The Teradata announcement makes the incremental pilot path more viable. Teams using LangChain can add Teradata’s Enterprise Vector Store as a retriever without architectural rewrites. The April 2026 availability means you can evaluate in Q2 2026 for potential Q3-Q4 implementation.

The Broader Implication: Format Shouldn’t Determine Findability

The deeper issue multimodal RAG addresses isn’t just technical. It’s a fundamental user experience problem. In text-only RAG systems, whether users can find information depends on what format that information happens to be stored in. If it’s in a text document, retrieval works. If it’s in a diagram, an audio recording, or a video, retrieval fails or requires different tools.

This creates arbitrary limitations based on format rather than semantic relevance. Users shouldn’t need to think about where information might be in terms of file type. They should be able to ask questions and get relevant answers regardless of whether those answers exist as text, images, audio, or video.

Multimodal RAG moves toward format-agnostic retrieval. The user asks a question. The system retrieves semantically relevant information across all formats. The barrier between “text search” and “image search” and “video search” disappears from the user’s perspective.

This is particularly important as AI agents become more prevalent. Agents don’t have the human ability to intuitively know “this information is probably in a presentation, so I should look at slide decks.” They need retrieval systems that work uniformly across formats.

The enterprises that figure out multimodal RAG in 2026 will have infrastructure that supports increasingly sophisticated agent-based workflows. The enterprises that stay text-only will find their agents arbitrarily limited by format boundaries that humans can work around but agents cannot.

For enterprise RAG architects, the Teradata announcement isn’t just about new technical capabilities. It’s a signal that the industry is moving decisively toward multimodal as the expected baseline for enterprise RAG infrastructure. Text-only will increasingly be seen as a legacy limitation rather than a reasonable architectural choice.

The question isn’t whether your RAG system will eventually need to handle multiple modalities. The question is whether you’re planning that transition now, or whether you’ll be forced into it later when the gap between your capabilities and your requirements becomes impossible to ignore. If you’re ready to evaluate where multimodal RAG fits in your 2026 roadmap, the tools and platforms to do it incrementally are available now.

Your enterprise data is already multimodal. Your RAG system needs to catch up.