The Pentagon handles some of the most sensitive data on the planet. Classified intelligence, operational plans, personnel records — the kind of information that, if compromised, could have consequences far beyond a corporate data breach. So when defense officials started looking at AI to help analysts work faster and smarter, one question immediately surfaced: how do you get the benefits of large language models without exposing classified data to the open internet?

That’s the problem a handful of AI startups are now racing to solve. Their answer centers on Retrieval-Augmented Generation, or RAG: a technical approach that lets AI systems ground their answers in a controlled, internal knowledge base rather than relying only on what a model absorbed from public training data. Instead of sending queries out to a cloud server somewhere, the system retrieves relevant documents from a secure, air-gapped environment and generates responses entirely within that closed network.

It sounds straightforward in theory. In practice, building something that actually works inside classified networks — where connectivity is restricted, hardware is tightly controlled, and the margin for error is essentially zero — is a different challenge entirely. These companies aren’t just writing software. They’re engineering systems that have to meet the security standards of some of the most demanding institutions in the world.

This post breaks down who’s doing this work, how their technical approaches differ, and what the security architecture actually looks like when you’re building AI for the Pentagon. With key developments expected to wrap up by April 2026, the window for understanding this space — and what it means for defense AI more broadly — is right now.

Why the Pentagon Turned to RAG in the First Place

Large language models are genuinely useful for analysts. They can summarize long documents, surface connections across large datasets, and answer questions in plain language. But the standard way these models work creates an obvious problem for classified environments: they’re trained on public data, they often rely on cloud infrastructure, and they can inadvertently expose sensitive inputs through logging, telemetry, or model updates.

RAG sidesteps a lot of these issues. Rather than baking all knowledge into the model’s weights during training, RAG systems retrieve relevant information at query time from a curated document store. The model itself doesn’t need to know classified information — it just needs to be able to read and synthesize documents that are retrieved securely from within the network.
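To make the retrieve-then-generate flow concrete, here is a minimal sketch. It assumes a toy in-memory document store and uses bag-of-words cosine similarity as a stand-in for a real embedding model. The names `DOCS`, `retrieve`, and `build_prompt` are hypothetical, the document text is invented for illustration, and the generation step is left out.

```python
import math
from collections import Counter

# Hypothetical in-network document store (illustrative content only).
DOCS = {
    "doc-1": "Quarterly logistics report for the northern supply route",
    "doc-2": "Maintenance schedule for the vehicle fleet",
    "doc-3": "Supply route risk assessment and weather outlook",
}

def vectorize(text):
    """Bag-of-words term counts; a stand-in for a real embedding model."""
    return Counter(text.lower().split())

def cosine(a, b):
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query, k=2):
    """Return the ids of the k documents most similar to the query."""
    q = vectorize(query)
    ranked = sorted(DOCS, key=lambda d: cosine(q, vectorize(DOCS[d])), reverse=True)
    return ranked[:k]

def build_prompt(query, doc_ids):
    """Assemble the prompt a locally hosted model would receive."""
    context = "\n".join(f"[{d}] {DOCS[d]}" for d in doc_ids)
    return f"Answer using only the sources below.\n{context}\n\nQuestion: {query}"

if __name__ == "__main__":
    question = "supply route risk"
    print(build_prompt(question, retrieve(question)))
```

The point of the sketch is that the knowledge lives in `DOCS`, not in the model: updating the store changes the answers without any retraining.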

For the Pentagon, this architecture has a few key advantages. First, the underlying model can be smaller and more controllable, since it doesn’t need to memorize everything. Second, the document store can be updated without retraining the model, which matters when intelligence changes fast. Third, and most critically, the entire retrieval and generation process can happen inside a classified network with no external calls.

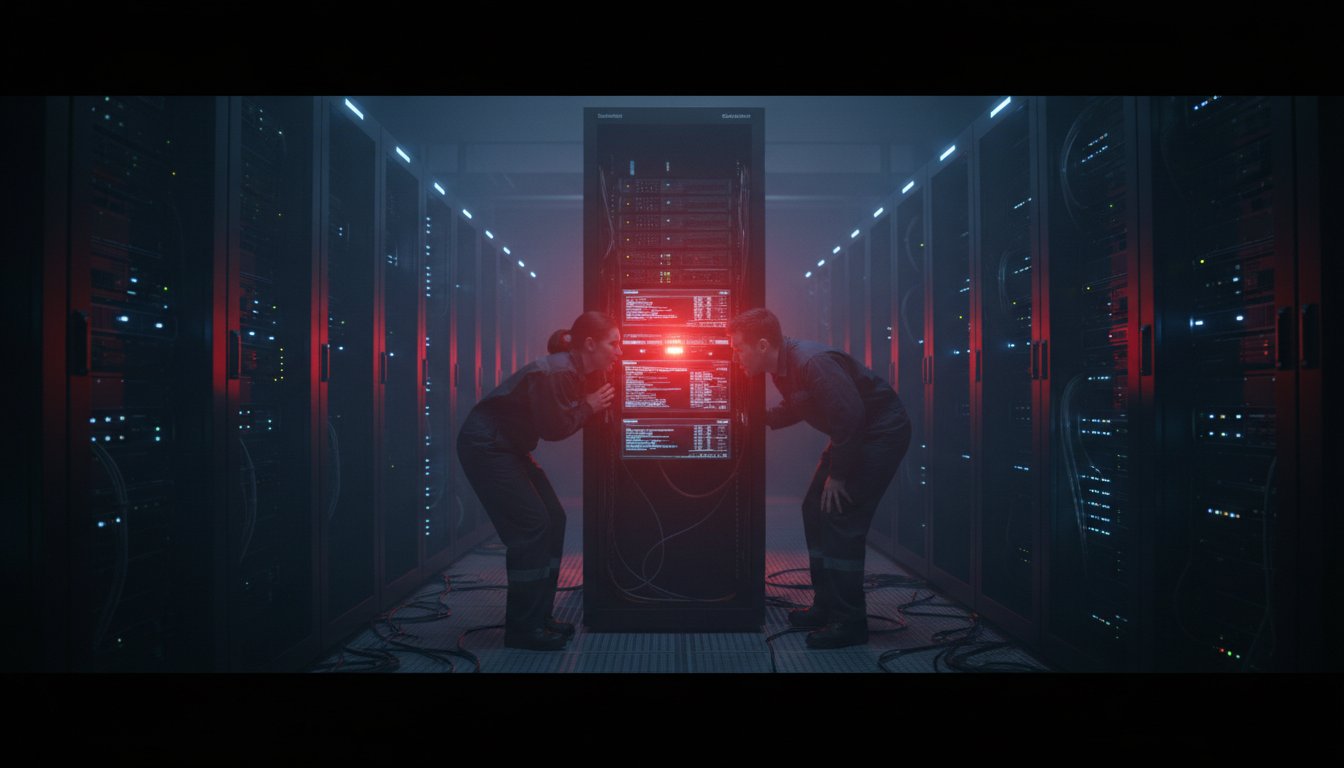

The Air-Gap Requirement

Air-gapped networks — systems physically isolated from the public internet — are standard in classified defense environments. Building AI that works in these conditions isn’t just a software problem. It requires models that can run on local hardware, retrieval systems that don’t depend on external APIs, and deployment pipelines that can operate without cloud connectivity.

Several startups working in this space have built their entire product around this constraint. Their models are optimized to run on on-premise GPU clusters, their vector databases are self-hosted, and their update mechanisms are designed to work through secure, offline transfer processes rather than live network connections.
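One piece of an offline update process can be sketched as an integrity check run after a model bundle crosses the air gap. This assumes the bundle ships with a manifest of SHA-256 digests produced on the trusted source side; the manifest format and the `verify_bundle` name are hypothetical, and a real process would also verify a signature over the manifest itself.

```python
import hashlib
import hmac
from pathlib import Path

def sha256_of(path, chunk_size=1 << 20):
    """Hash a file in chunks so large model weights do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def verify_bundle(manifest, base_dir):
    """Return the names of files that are missing or whose digest does not match.

    `manifest` maps relative file names to expected hex digests (assumed format).
    """
    failures = []
    for name, expected in manifest.items():
        path = Path(base_dir) / name
        if not path.is_file() or not hmac.compare_digest(sha256_of(path), expected):
            failures.append(name)
    return failures
```

An empty return value means every file in the manifest arrived intact; anything else should block the update.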

The Companies Leading This Work

A small group of AI startups has moved into this space, each with a slightly different angle on the core problem.

Some are coming from a pure AI background, adapting existing RAG frameworks to meet defense security requirements. Others started in defense contracting and are building AI capabilities on top of existing classified infrastructure relationships. A few are taking a more vertical approach, building end-to-end systems specifically for intelligence analysis workflows rather than trying to adapt general-purpose tools.

What they share is a focus on compliance from the ground up. These aren’t companies trying to retrofit a consumer AI product for government use. They’re building with FedRAMP and the DoD Impact Levels IL4, IL5, and in some cases IL6 (the level that covers classified information up to Secret) in mind from day one, which shapes everything from how data is stored to how model outputs are logged and audited.

Technical Differentiation

The RAG architecture itself has several components where companies are making different technical bets. The retrieval layer, which covers how documents are indexed, searched, and ranked, is one area of active differentiation. Some companies use dense vector search alone. Others combine vector search with traditional keyword retrieval in hybrid approaches that tend to perform better on specialized domain content.
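One common way to combine a dense ranking with a keyword ranking is reciprocal rank fusion (RRF). The sketch below is illustrative only: nothing here confirms which fusion method any particular vendor uses, and the document ids are made up. Each document scores the sum of `1 / (k + rank)` across the rankings it appears in, with `k = 60` as a conventional default.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document ids into one ranking.

    Ties are broken by document id so the output is deterministic.
    """
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda d: (-scores[d], d))

if __name__ == "__main__":
    dense_hits = ["a", "b", "c"]    # hypothetical vector-search ranking
    keyword_hits = ["c", "a", "d"]  # hypothetical keyword-search ranking
    print(reciprocal_rank_fusion([dense_hits, keyword_hits]))
    # prints ['a', 'c', 'b', 'd']
```

Because RRF only looks at rank positions, it does not need the two retrievers to produce comparable scores, which is convenient when mixing a vector index with a keyword engine.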

The generation layer is another. Smaller, fine-tuned models that run efficiently on local hardware are generally preferred over large frontier models that require significant compute. Some startups are fine-tuning open-source base models on declassified defense documents to improve domain relevance without touching classified data during training.

Context window management — how the system decides which retrieved documents to include in a given prompt — is also a meaningful technical challenge. In intelligence analysis, the most relevant document isn’t always the most semantically similar one. Building retrieval systems that account for recency, source credibility, and classification level adds real complexity.
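A rough sketch of what such a selection step could look like: blend similarity with recency and source credibility, then pack the best candidates into a token budget. The weights, the 30-day half-life, and the `Candidate` fields are all assumptions chosen for illustration. Classification level is left out because it is better treated as a hard filter than as a score.

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    doc_id: str
    similarity: float   # 0..1, from the retriever
    age_days: int
    credibility: float  # 0..1, assumed to come from an upstream rating process
    tokens: int

def score(c, half_life_days=30.0, w_sim=0.6, w_rec=0.2, w_cred=0.2):
    """Weighted blend; recency decays by half every `half_life_days`."""
    recency = 0.5 ** (c.age_days / half_life_days)
    return w_sim * c.similarity + w_rec * recency + w_cred * c.credibility

def select_context(candidates, token_budget):
    """Greedily take the highest-scoring candidates that still fit the budget."""
    chosen, used = [], 0
    for c in sorted(candidates, key=score, reverse=True):
        if used + c.tokens <= token_budget:
            chosen.append(c.doc_id)
            used += c.tokens
    return chosen
```

With these weights, a year-old report with similarity 0.9 can rank below a fresh, well-sourced report with similarity 0.8, which is the behavior the paragraph above describes.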

Security Architecture Inside Classified Networks

The security requirements for these systems go well beyond standard enterprise data protection. When you’re operating inside a classified network, every component of the stack has to be evaluated and approved, and the approval process itself can take months or years.

Access controls are granular. Not every analyst has access to every classification level, and the RAG system has to enforce those distinctions at query time — making sure that a user cleared for Secret doesn’t inadvertently retrieve Top Secret documents through a poorly scoped search.
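In its simplest form, query-time enforcement is a filter applied to retrieval results before anything reaches the model. The sketch below assumes each hit carries a `level` label and uses a simplified four-level hierarchy; real systems also deal with compartments and need-to-know, which this ignores. The `Level` enum and the hit format are hypothetical.

```python
from enum import IntEnum

class Level(IntEnum):
    UNCLASSIFIED = 0
    CONFIDENTIAL = 1
    SECRET = 2
    TOP_SECRET = 3

def filter_by_clearance(hits, user_clearance):
    """Keep only hits labeled at or below the user's clearance.

    Hits with a missing or malformed label are dropped (fail closed).
    """
    allowed = []
    for hit in hits:
        label = hit.get("level")
        if isinstance(label, Level) and label <= user_clearance:
            allowed.append(hit)
    return allowed
```

Failing closed matters here: an unlabeled document is treated as inaccessible rather than as unclassified.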

Audit logging is non-negotiable. Every query, every retrieved document, every generated response has to be logged in a way that’s tamper-resistant and reviewable. This isn’t just a compliance checkbox — it’s how you detect misuse and investigate incidents after the fact.
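One widely used building block for tamper-evident logs is a hash chain, where each entry's hash covers the previous entry's hash. The sketch below is an illustration under that assumption, not a description of any specific product; the record fields are made up. Note that a chain makes edits detectable rather than impossible, so the latest hash would still need to be anchored somewhere an attacker cannot rewrite.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

def _entry_hash(prev_hash, record):
    payload = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()

def append_entry(log, record):
    """Append a record whose hash depends on everything logged before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev_hash, "hash": _entry_hash(prev_hash, record)})

def verify_chain(log):
    """Recompute every hash; any edited, removed, or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _entry_hash(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True
```

A reviewer can rerun `verify_chain` at any time; changing a single logged query after the fact causes it to return `False`.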

Handling Multi-Level Security

One of the harder technical problems in this space is multi-level security, or MLS. In a simple enterprise RAG system, every document in the knowledge base is often accessible to every user. In a classified environment, documents exist at different classification levels, and the system has to prevent lower-cleared users from accessing higher-classified content, even indirectly through model outputs that might synthesize information from documents they shouldn’t see.

Solving this properly requires more than just filtering documents before retrieval. It requires thinking carefully about how the model itself might leak information through its responses, and building guardrails that operate at the output level as well as the retrieval level. Some startups are addressing this with separate model instances for different classification levels. Others are building classification-aware retrieval pipelines that tag and filter at every step.
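As one illustration of an output-level guardrail, a response can inherit the highest classification among the documents that fed it (a "high-water mark") and be withheld if that exceeds the user's level. This is a conservative sketch under simplified assumptions: the four-level list and function names are hypothetical, and the check does nothing about subtler leaks such as inference from aggregated lower-level material.

```python
LEVELS = ["UNCLASSIFIED", "CONFIDENTIAL", "SECRET", "TOP SECRET"]

def high_water_mark(source_levels):
    """Highest level among the sources; the most restrictive level if none are recorded."""
    if not source_levels:
        return LEVELS[-1]  # fail closed when provenance is unknown
    return max(source_levels, key=LEVELS.index)

def release(response, source_levels, user_level):
    """Return the marked response, or None if it must be withheld from this user."""
    mark = high_water_mark(source_levels)
    if LEVELS.index(mark) > LEVELS.index(user_level):
        return None
    return {"marking": mark, "text": response}
```

This check runs after generation, so it still catches a response built from a document that slipped past an earlier retrieval filter.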

On-Premise Deployment and Hardware Constraints

Running AI on classified networks also means working with whatever hardware is available in those environments, which isn’t always the latest generation of GPUs. Startups in this space have had to optimize their models and inference pipelines to run efficiently on older or more constrained hardware — a very different engineering challenge than building for cloud-scale infrastructure.

This has pushed some companies toward smaller, more efficient model architectures, and toward inference optimization techniques like quantization that reduce memory and compute requirements without significantly degrading output quality.
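To show why quantization helps on constrained hardware, here is a small sketch of symmetric int8 quantization plus a back-of-the-envelope memory estimate. It is a simplified per-tensor scheme in plain Python, assumed for illustration; production toolchains use more elaborate methods, and the estimate counts weights only.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    return [round(w / scale) for w in weights], scale

def dequantize(quantized, scale):
    """Recover approximate float weights from the integers and the scale."""
    return [v * scale for v in quantized]

def weight_memory_gb(num_params, bits_per_weight):
    """Approximate memory for the weights alone, in gigabytes (10^9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9

if __name__ == "__main__":
    q, scale = quantize_int8([0.8, -1.0, 0.1])
    print(q, dequantize(q, scale))
    print(weight_memory_gb(7e9, 16), weight_memory_gb(7e9, 4))
```

For a hypothetical 7-billion-parameter model, the weights take about 14 GB at 16 bits per weight and about 3.5 GB at 4 bits, which can be the difference between fitting on an older GPU and not fitting at all.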

What April 2026 Means for This Space

Several of the key programs and contracts in this area are expected to reach significant milestones by April 2026. For some companies, that means completing the authorization process for operating at higher classification levels. For others, it means moving from pilot deployments to full operational capability within specific Pentagon programs.

The April 2026 timeline also matters because it coincides with broader defense AI policy developments. The Department of Defense has been working through its AI adoption framework, and the outcomes of these early RAG deployments will likely shape how the Pentagon approaches AI procurement and integration going forward.

If these systems perform well — if they demonstrably help analysts work faster and more accurately without creating new security risks — it opens the door for much wider adoption across the intelligence community. If they run into problems, whether technical or security-related, it could slow the whole field down.

Implications for Defense AI Procurement

The companies succeeding in this space are building something more than a product. They’re building a track record and a set of relationships that are genuinely hard to replicate. Getting a system authorized to operate on classified networks can take months or years. Maintaining that authorization requires ongoing compliance work. The companies that get through that process first have a real structural advantage.

For defense AI procurement more broadly, the RAG approach these startups are pioneering could become a template. It offers a way to bring AI capabilities into classified environments without the risks associated with training models on sensitive data or connecting classified systems to external infrastructure. That’s a model that could extend well beyond the Pentagon to other parts of the intelligence community and allied defense organizations.

Where This Is Headed

The work these startups are doing right now is genuinely hard. Building AI that’s useful enough to change how analysts work, secure enough to operate in classified environments, and reliable enough to meet defense-grade standards is a narrow target to hit. Most companies attempting it won’t get there.

But the ones that do are building something with long-term strategic value. The Pentagon’s data problems aren’t going away. The volume of information that analysts need to process keeps growing, and the pressure to make faster, better-informed decisions keeps increasing. AI systems that can help with that — securely, inside classified networks — are going to be in demand for a long time.

The RAG approach isn’t perfect. It has limitations around context length, retrieval accuracy, and the challenge of keeping knowledge bases current. But it’s currently the most practical path to bringing AI into classified environments without creating unacceptable security risks. And the startups working on it are learning things about secure AI deployment that nobody else knows yet.

By April 2026, we’ll have a much clearer picture of which approaches actually work at scale and which companies have built something that the Pentagon trusts enough to rely on. That’s the real test — and it’s coming up fast.

If you’re evaluating AI solutions for secure or regulated environments and want to understand how RAG architectures can work within your specific compliance requirements, now is the time to get into the details. The technical decisions made at the architecture stage determine what’s possible later — and the companies getting this right are making those decisions now. Reach out to explore how these approaches apply to your organization’s needs.