When Defense Secretary Pete Hegseth issued his ultimatum to Anthropic on February 18, 2026, demanding that the company grant unrestricted access to Claude or face designation as a “supply chain risk,” most enterprise technology leaders saw it as a defense industry issue. They were wrong.

This standoff, which escalated through February with Anthropic refusing to drop its AI safeguards despite Pentagon threats, represents the first major real-world test of a question every enterprise RAG architect should have been asking: What happens when your AI provider’s access policies conflict with operational requirements?

For organizations building mission-critical RAG systems on third-party foundation models, the Anthropic-Pentagon dispute exposes a vulnerability that most risk assessments have completely overlooked. It’s not just about military contracts. It’s about the fundamental assumption that the AI models powering your retrieval augmented generation infrastructure will remain accessible under the terms you expect.

The Standoff That Enterprise Teams Missed

The dispute centers on Anthropic’s refusal to grant the Pentagon unrestricted access to its Claude AI models, which the military demanded after using Claude in the operation that led to Venezuelan President Maduro’s apprehension. Defense officials argue that Anthropic’s “safety limits”—restrictions preventing use in autonomous weapons and mass surveillance—constitute operational obstacles to national security.

Anthropic’s CEO Dario Amodei has held firm, maintaining that these safeguards are non-negotiable ethical boundaries. The Pentagon responded by threatening to invoke the Defense Production Act and potentially terminate Anthropic’s multimillion-dollar government contract.

But here’s what makes this relevant beyond defense: Anthropic’s acceptable use policy applies to all enterprise customers, not just military users. The same restrictions the Pentagon finds constraining could impact any organization whose RAG use cases brush against Anthropic’s ethical boundaries—whether that’s predictive policing, workforce surveillance, or automated decision-making in sensitive domains.

The Hidden Single-Point-of-Failure in Your RAG Architecture

Most enterprise RAG systems follow a remarkably similar architecture: a single foundation model (often Claude, GPT-4, or Gemini) powers the generation component, while vector databases handle retrieval. This design creates an unacknowledged dependency that the Anthropic dispute now illuminates.

Consider the risk profile. Your RAG system might be retrieving from proprietary knowledge bases, generating responses for compliance-sensitive workflows, or powering customer-facing applications with strict SLA requirements. All of that depends on continuous access to your chosen foundation model operating under predictable terms.

What the Pentagon-Anthropic standoff demonstrates is that these access terms are not as stable as enterprise architecture diagrams suggest. AI providers maintain usage policies that can restrict deployment in ways that may not be immediately obvious during procurement. More critically, these policies can become enforcement priorities when providers face external pressure—from governments, regulators, or public advocacy groups.

The technical implication is stark: enterprises have built RAG systems with a single point of failure that isn’t in their infrastructure diagrams.

How Provider Policies Silently Constrain RAG Deployments

While the Pentagon faces explicit restrictions, enterprise teams often discover usage policy constraints only when they’re already deep into implementation. Anthropic’s acceptable use policy prohibits Claude from being used for “weapons development,” “military and warfare” applications without explicit authorization, and “surveillance” that violates privacy rights. OpenAI’s policy similarly restricts use in “military and warfare” and “activity that has high risk of physical harm.”

These boundaries sound reasonable in principle, but they become operationally complex in practice. Does a RAG system analyzing security camera footage for workplace safety violations constitute prohibited surveillance? What about a legal research RAG that processes classified documents? Or a healthcare RAG that makes treatment recommendations—does that cross into the “high risk of physical harm” territory that requires special consideration?

The answer often depends not on technical architecture but on subjective interpretation of policy language. And as the Pentagon discovered, when push comes to shove, AI providers will enforce their interpretations even against their largest, most strategic customers.

For enterprise RAG teams, this means your deployment constraints aren’t just technical—they’re contractual, ethical, and increasingly political. The foundation model you selected based on performance benchmarks comes with terms of service that could restrict your use cases in ways your initial evaluation didn’t account for.

The Market Response: A Multi-Provider Scramble

The Pentagon’s response to Anthropic’s resistance offers a preview of how large enterprises might react when faced with similar access constraints. According to Axios reporting from February 23, 2026, the Defense Department immediately opened negotiations with Elon Musk’s xAI to deploy Grok in classified environments—effectively building a backup option.

This isn’t a military-specific strategy. It’s a template for enterprise risk mitigation that forward-thinking RAG teams are already adopting: multi-provider architectures that treat foundation models as interchangeable components rather than core dependencies.

The technical pattern emerging from recent implementations involves abstraction layers that allow RAG systems to route requests across multiple foundation models based on availability, cost, and compliance requirements. When properly implemented, these architectures can fail over from Claude to GPT-4 to Gemini without application-layer code changes.

But multi-provider strategies introduce their own complexity. Different models have different context windows, instruction-following capabilities, and output formats. A RAG system optimized for Claude’s 200K-token context window will perform differently when failing over to a model with a 128K-token window, because fewer retrieved passages fit into each request. Prompt engineering that works perfectly for GPT-4 may produce inconsistent results with Gemini.
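One way to soften the context-window mismatch is to budget retrieved chunks per model before building the prompt. The sketch below is illustrative only: the 200K and 128K figures come from the paragraph above, while the `primary` and `fallback` labels, the `fit_chunks` helper, the reserved-token default, and the four-characters-per-token estimate are all assumptions rather than any provider's real tokenizer or API.

```python
from typing import List

# Context window sizes in tokens. The 200K and 128K figures come from the
# text above; the keys are illustrative labels, not real model identifiers.
CONTEXT_WINDOWS = {"primary": 200_000, "fallback": 128_000}


def estimate_tokens(text: str) -> int:
    """Rough heuristic (about 4 characters per token). This is an assumption,
    not a real tokenizer; use the provider's tokenizer in practice."""
    return max(1, len(text) // 4)


def fit_chunks(chunks: List[str], model: str, reserved: int = 4_000) -> List[str]:
    """Keep the highest-ranked chunks that fit the model's window.

    `chunks` is assumed to be sorted by retrieval relevance, best first.
    `reserved` holds back tokens for the prompt template and the answer.
    """
    budget = CONTEXT_WINDOWS[model] - reserved
    kept: List[str] = []
    used = 0
    for chunk in chunks:
        cost = estimate_tokens(chunk)
        if used + cost > budget:
            break  # stop at the first chunk that would overflow the budget
        kept.append(chunk)
        used += cost
    return kept
```

With this kind of budgeting, the same retrieval result yields a shorter prompt on the smaller model, so answer quality on failover should be evaluated separately rather than assumed.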

The Broader Industry Shift Toward Military AI

What makes the Anthropic standoff particularly significant is its timing. While Anthropic resists Pentagon demands, competitors are moving in the opposite direction. Google updated its AI principles in February 2026 to allow more flexibility in military applications. Meta loosened its AI usage rules for military deployment. And xAI secured its Pentagon contract for Grok in classified environments.

This divergence in provider policies creates a new dimension of competitive differentiation that enterprise buyers must evaluate. It’s no longer sufficient to compare models on accuracy, latency, and cost. Organizations must now assess policy risk—the likelihood that a provider’s acceptable use restrictions will constrain future deployments or change in ways that impact operational continuity.

For RAG systems in regulated industries, this becomes especially complex. Financial services firms need models that comply with both provider policies and regulatory requirements. Healthcare organizations must navigate HIPAA alongside AI ethics guidelines. Government contractors face the inverse of Anthropic’s problem: they need providers willing to support sensitive use cases, not prohibit them.

The market is responding with increasing policy fragmentation. Rather than converging toward industry-standard acceptable use policies, AI providers are differentiating based on where they draw ethical lines. This means enterprise RAG architectures must account for provider policy as a variable that changes over time and differs across vendors.

Architectural Patterns for Provider-Agnostic RAG

The technical response to this risk environment involves treating foundation models as commoditized infrastructure rather than architectural foundations. Several patterns are emerging from organizations that have already encountered provider policy constraints:

Pattern 1: Model Gateway Abstraction

Implement a gateway layer that provides a unified API across multiple foundation model providers. This allows application code to remain provider-agnostic while the gateway handles provider-specific authentication, request formatting, and response parsing. When a provider becomes unavailable or restricts access, configuration changes at the gateway level reroute traffic without code deployments.
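A minimal sketch of the gateway idea, assuming each provider can be wrapped as a function from prompt to text. The `ModelGateway` class, the `provider_a` and `provider_b` labels, and the stub adapters are hypothetical; real adapters would call each vendor's SDK and handle authentication, request formatting, and response parsing.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# An adapter takes a prompt and returns generated text. Real adapters would
# wrap each vendor's SDK; plain functions are used here as an assumption.
Adapter = Callable[[str], str]


@dataclass
class ModelGateway:
    adapters: Dict[str, Adapter]
    active: str  # name of the provider currently receiving traffic

    def generate(self, prompt: str) -> str:
        """Application code calls this and never names a provider."""
        return self.adapters[self.active](prompt)

    def reroute(self, provider: str) -> None:
        """Configuration-level switch: no application code changes needed."""
        if provider not in self.adapters:
            raise KeyError(f"unknown provider: {provider}")
        self.active = provider


def make_stub(name: str) -> Adapter:
    """Stand-in for a real provider client (hypothetical)."""
    return lambda prompt: f"[{name}] {prompt}"


gateway = ModelGateway(
    adapters={"provider_a": make_stub("provider_a"),
              "provider_b": make_stub("provider_b")},
    active="provider_a",
)
```

In a deployment, `reroute` would typically be driven by a configuration store or feature flag rather than called directly.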

Pattern 2: Workload-Based Model Selection

Different RAG use cases have different risk profiles under provider acceptable use policies. Route sensitive workloads to providers with permissive policies, while using restricted providers for lower-risk applications. This requires classification logic that maps requests to policy-compliant model endpoints based on content analysis.
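The routing logic might look roughly like the following. Everything here is an assumption for illustration: the keyword-based classifier stands in for real content analysis, and the category names, provider labels, and restriction table are hypothetical rather than a summary of any vendor's actual policy.

```python
import re
from typing import Dict, List, Set

# Hypothetical categories and trigger words; a production system would use
# a trained classifier and a reviewed taxonomy instead of keywords.
CATEGORY_KEYWORDS: Dict[str, Set[str]] = {
    "surveillance": {"camera", "monitoring", "tracking"},
    "medical": {"diagnosis", "treatment", "patient"},
}

# Hypothetical table: categories each provider's policy is assumed to restrict.
PROVIDER_RESTRICTIONS: Dict[str, Set[str]] = {
    "restricted_provider": {"surveillance"},
    "permissive_provider": set(),
}

# Order in which providers are tried (for example by cost or quality).
PREFERENCE: List[str] = ["restricted_provider", "permissive_provider"]


def classify(request: str) -> Set[str]:
    """Return the policy-relevant categories a request appears to touch."""
    words = set(re.findall(r"[a-z]+", request.lower()))
    return {cat for cat, keywords in CATEGORY_KEYWORDS.items() if words & keywords}


def select_provider(request: str) -> str:
    """Pick the first preferred provider whose restrictions do not apply."""
    categories = classify(request)
    for provider in PREFERENCE:
        if not (categories & PROVIDER_RESTRICTIONS[provider]):
            return provider
    raise LookupError("no provider permits this workload")
```

Keeping the restriction table as data rather than code means a policy change becomes a reviewed configuration update instead of a redeployment.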

Pattern 3: Hybrid Model Ensembles

Rather than selecting a single model for generation, use multiple models in parallel and implement voting or consensus mechanisms to select final outputs. This increases cost and latency but provides resilience against individual model restrictions or performance degradation.
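As a rough illustration of the consensus step, the sketch below calls several models in parallel and returns the most common answer. It assumes exact-match voting on normalized text, which only suits short, constrained outputs such as labels or yes/no answers; free-form generation would need a similarity measure instead. The function names and the model callables are hypothetical.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict


def normalize(text: str) -> str:
    """Collapse case and whitespace so trivially different answers match."""
    return " ".join(text.lower().split())


def ensemble_generate(prompt: str, models: Dict[str, Callable[[str], str]]) -> str:
    """Query every model in parallel and return the majority answer.

    Ties resolve to the answer of the earliest model in `models`.
    """
    with ThreadPoolExecutor(max_workers=len(models)) as pool:
        # map() preserves the order of models.values()
        outputs = list(pool.map(lambda fn: fn(prompt), models.values()))
    votes = Counter(normalize(output) for output in outputs)
    winner, _count = votes.most_common(1)[0]
    # Return the first original (un-normalized) output that matches the winner.
    return next(output for output in outputs if normalize(output) == winner)
```

Because every request fans out to all models, cost scales with the ensemble size, which matches the trade-off described above.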

Pattern 4: Fallback Chains with Quality Monitoring

Define explicit fallback sequences (e.g., Claude → GPT-4 → Gemini → local model) with quality thresholds at each step. If the primary model is unavailable or returns results below quality thresholds, automatically cascade to the next option. Implement monitoring to detect when fallback chains activate, signaling potential access issues.
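A fallback chain of this kind could be sketched as follows. The model callables and the `score` function are placeholders supplied by the caller; how to score answer quality is left as an assumption, as are the `generate_with_fallback` name, the logger name, and the default threshold.

```python
import logging
from typing import Callable, List, Tuple

logger = logging.getLogger("rag.fallback")  # hypothetical logger name

Model = Callable[[str], str]
Scorer = Callable[[str, str], float]  # (prompt, answer) -> quality in [0, 1]


class AllModelsFailed(RuntimeError):
    """Raised when no model in the chain produced an acceptable answer."""


def generate_with_fallback(
    prompt: str,
    chain: List[Tuple[str, Model]],
    score: Scorer,
    threshold: float = 0.5,
) -> Tuple[str, str]:
    """Try each model in order; return (model_name, answer) for the first
    answer whose quality score meets the threshold."""
    for position, (name, model) in enumerate(chain):
        try:
            answer = model(prompt)
        except Exception as exc:  # outage, revoked access, rate limit, etc.
            logger.warning("model %s unavailable: %s", name, exc)
            continue
        quality = score(prompt, answer)
        if quality >= threshold:
            if position > 0:
                # Monitoring hook: a non-primary model served this request.
                logger.warning("fallback activated, served by %s", name)
            return name, answer
        logger.warning("model %s below threshold (%.2f)", name, quality)
    raise AllModelsFailed("no model in the chain met the quality threshold")
```

Alerting on the "fallback activated" log line gives an early signal that the primary provider is restricting or losing access.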

Each pattern introduces operational complexity: additional API integrations, monitoring overhead, cost management across multiple providers, and testing matrices that multiply with each model added. But the alternative is accepting that your RAG system’s availability depends entirely on a single provider’s policy decisions and on the external pressures that provider faces from governments, regulators, or advocacy groups.

What Enterprise RAG Teams Should Do This Week

The Anthropic-Pentagon deadline may have passed, but the precedent it sets will outlast it. Organizations building or operating enterprise RAG systems should treat this as a forcing function for policy risk assessment:

Audit your current dependencies. Document which foundation models power your RAG systems and review their acceptable use policies in detail. Identify any use cases that might fall into gray areas—surveillance, automated decision-making, sensitive data processing, or applications in regulated industries.

Map your policy risk surface. For each RAG application, assess what happens if your primary model provider restricts access, changes pricing, or modifies usage policies. Identify mission-critical systems where provider policy changes would cause operational disruption.

Evaluate multi-provider feasibility. Determine whether your RAG architecture can support multiple foundation models without significant refactoring. If not, document the refactoring work required to implement provider abstraction and prioritize it against other infrastructure work.

Establish provider policy monitoring. AI companies update their acceptable use policies with little notice. Implement processes to track policy changes across your model providers and assess their impact on existing deployments.

Test your fallback scenarios. If you already have multi-provider capabilities, validate that failover actually works under realistic conditions. Many teams discover during outages that their backup models produce unacceptable output quality or that switching providers breaks downstream integrations.

Document compliance requirements. Work with legal and compliance teams to understand regulatory constraints that might interact with provider policies. Some regulated industries may require specific model providers or prohibit certain fallback options.
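The policy monitoring step in the list above can start very simply: keep a snapshot of each provider's acceptable use policy text and flag any difference on the next check. The sketch below assumes the policy text has already been retrieved by some other process and stored as plain strings; the `vendor_a` style keys and both function names are hypothetical.

```python
import difflib
import hashlib
from typing import Dict, List


def fingerprint(text: str) -> str:
    """Hash the policy text, ignoring leading and trailing whitespace per line
    so cosmetic formatting changes do not trigger alerts."""
    normalized = "\n".join(line.strip() for line in text.strip().splitlines())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def detect_changes(previous: Dict[str, str], current: Dict[str, str]) -> Dict[str, List[str]]:
    """Return, per provider, the added (+) and removed (-) policy lines."""
    changes: Dict[str, List[str]] = {}
    for provider, new_text in current.items():
        old_text = previous.get(provider, "")
        if fingerprint(old_text) == fingerprint(new_text):
            continue
        diff = list(difflib.unified_diff(
            old_text.splitlines(), new_text.splitlines(), lineterm="", n=0))
        # Drop the two file-header lines and the "@@" hunk markers.
        changes[provider] = [line for line in diff[2:] if not line.startswith("@@")]
    return changes
```

The output is meant to feed a human review by legal or compliance staff, not to decide automatically whether a deployment is still permitted.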

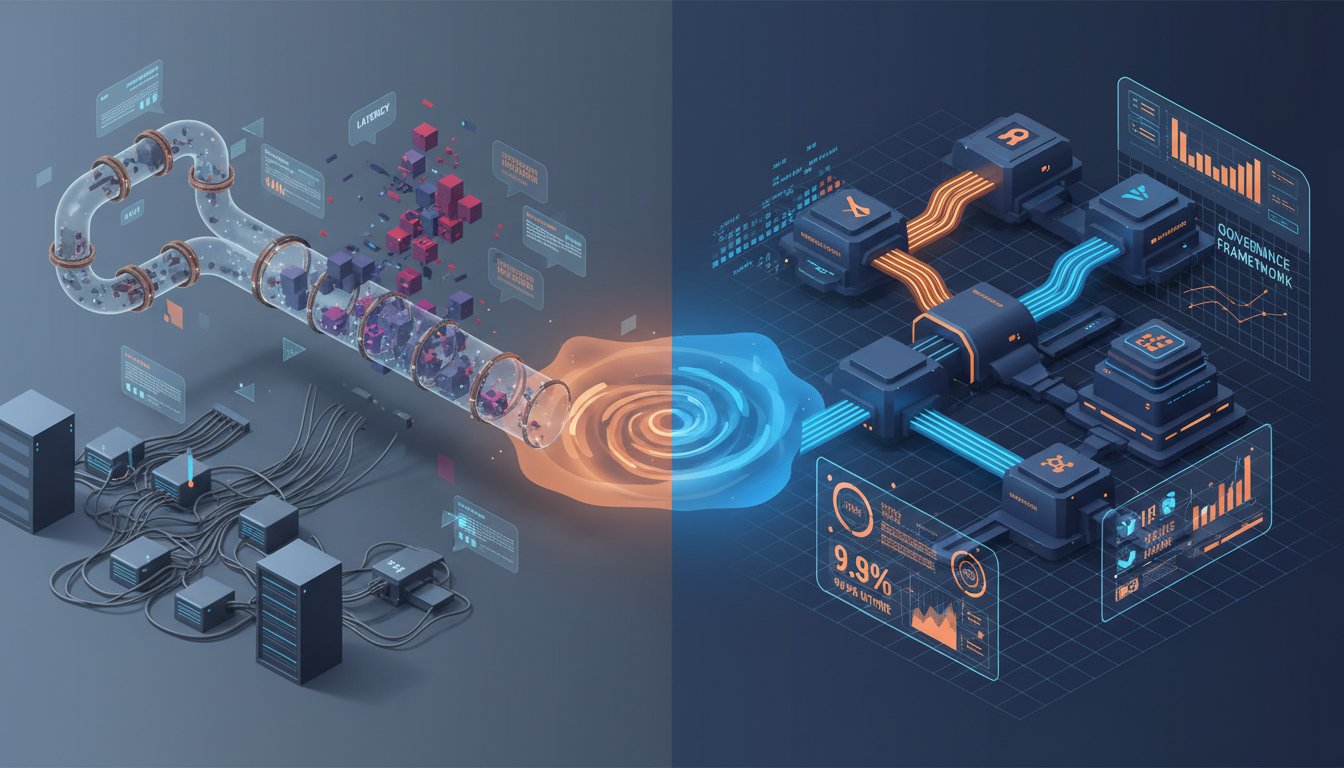

The Emerging Governance Framework

What the Anthropic dispute ultimately reveals is that enterprise RAG systems operate within a governance framework that extends far beyond organizational boundaries. Your RAG architecture is constrained by the acceptable use policies of your model providers, which are in turn influenced by government pressure, regulatory requirements, ethical advocacy, and competitive dynamics.

This layered governance model is unprecedented in enterprise technology. Organizations didn’t face equivalent risks with previous infrastructure dependencies. Database vendors don’t threaten to revoke access based on how you use SQL. Cloud providers don’t audit whether your EC2 instances align with their ethical principles. But AI model providers are different—they maintain usage restrictions that can change based on external pressures entirely outside your control.

The result is a new category of operational risk that enterprise architecture hasn’t historically accounted for: provider policy risk. It sits alongside technical risk, security risk, and compliance risk as a factor that can impact system availability and operational continuity.

Forward-thinking organizations are beginning to build governance frameworks that address this explicitly. These frameworks typically include provider policy tracking, use case classification systems that map applications to compliant model endpoints, and change management processes that evaluate the impact of policy updates on existing deployments.

Why This Matters Beyond Military Contracts

The Pentagon-Anthropic standoff may seem like an edge case—a dispute between a defense agency and an AI company over military applications that most enterprises will never encounter. But that interpretation misses the fundamental dynamic at play.

What happened with Anthropic can happen with any provider, over any use case, for any customer. The mechanisms are already in place: acceptable use policies that providers can interpret and enforce at their discretion, external pressures from governments and advocacy groups that can shift provider priorities, and competitive dynamics that encourage policy differentiation.

For enterprise RAG systems, this means the risk isn’t hypothetical. It’s structural. And it’s not going away. As AI becomes more central to enterprise operations, the tension between provider ethics, government demands, and organizational requirements will only intensify.

The organizations that will build resilient RAG systems in this environment are those that recognize foundation models as vendors, not partners. They’ll build architectures that can survive provider policy changes, government mandates, and competitive disruptions. They’ll treat model access as a variable, not a constant.

Because the next time an AI provider faces a deadline from a powerful entity demanding policy changes, your enterprise RAG system shouldn’t be collateral damage. The Anthropic-Pentagon dispute just showed us that these conflicts are real, consequential, and closer to enterprise operations than most teams realized.

The question isn’t whether your RAG architecture can handle model provider access disruptions. After this week, the question is: what are you doing about it?