Every enterprise AI team celebrates the same milestone: their RAG proof-of-concept works beautifully. Queries return relevant context. LLMs generate accurate responses. Stakeholders nod approvingly at the demo. Then comes the mandate to scale to production, and that’s when the infrastructure reality hits like a freight train.

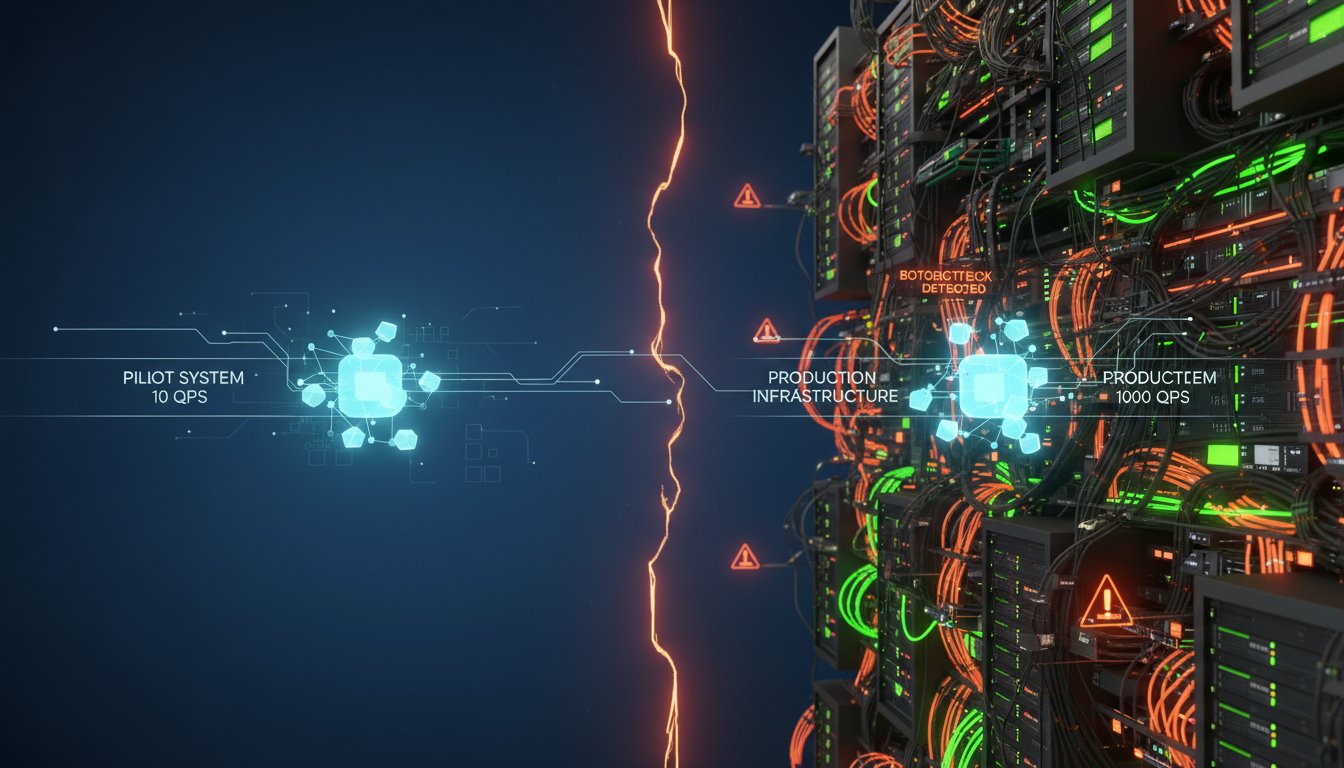

The uncomfortable truth that emerged from recent enterprise deployments in early 2026 is that the infrastructure assumptions baked into successful RAG pilots actively sabotage production systems. Your CPU-based vector search that handles 10 queries per second becomes a bottleneck at 1,000. Your elegant single-agent architecture crumbles when you need multi-agent coordination with persistent memory. Your cost projections, based on pilot-scale data volumes, explode when production workloads hit.

This isn’t a software problem masquerading as an infrastructure challenge; it’s an infrastructure crisis that most organizations discover too late. The late-February 2026 announcement of VAST Data and NVIDIA’s CNode-X platform, billed as the first fully CUDA-accelerated AI data stack, exposed this hidden fault line in enterprise RAG deployments. The reported 44% reduction in query time and 80% reduction in query cost compared to traditional architectures are more than impressive marketing: they are a quantified signal that infrastructure, not retrieval algorithms, determines production RAG success.

The shift from simple retrieval-augmented generation to agentic workflows with persistent memory has fundamentally changed the infrastructure equation. When NVIDIA CEO Jensen Huang stated that CNode-X enables “AI agents with persistent memory, allowing them to work on complex problems over extended periods without forgetting,” he articulated the architectural gap that pilot systems never expose: stateless RAG versus stateful agent orchestration.

The CPU-to-GPU Infrastructure Reckoning

Most RAG pilots run on CPU-based infrastructure because the initial requirements seem modest. A vector database on standard servers, a few API calls to an LLM provider, and basic retrieval logic can demonstrate value quickly. But this approach makes three catastrophic assumptions that only become visible at scale.

First, it assumes linear scaling. If 100 documents work well, surely 100,000 will too: just add more servers. Reality disagrees. Exact (brute-force) similarity search scores every stored vector, so its per-query cost grows in proportion to corpus size times embedding dimensionality, and approximate indexes buy back some of that cost at the price of recall, memory, and build time. Under concurrent load, queueing turns those growing per-query costs into non-linear latency spikes that cascade through multi-agent systems.
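To make the scaling argument concrete, here is a minimal, stdlib-only sketch of exact (brute-force) cosine search. The function names are illustrative, not from any library. It scores every vector in the corpus, so the dot-product work alone is one multiply-add per dimension per vector. Production systems avoid this full scan with approximate indexes (HNSW, IVF, or GPU graph indexes such as CAGRA in NVIDIA’s cuVS), which is one of the places GPU acceleration enters the picture.

```python
import heapq
import math
from typing import List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def brute_force_top_k(
    query: Sequence[float], corpus: Sequence[Sequence[float]], k: int
) -> List[Tuple[int, float]]:
    """Exact top-k search: scores every vector, so work grows as N * d."""
    scored = ((cosine(query, vec), idx) for idx, vec in enumerate(corpus))
    best = heapq.nlargest(k, scored)  # highest similarity first
    return [(idx, score) for score, idx in best]


def flops_per_query(num_vectors: int, dim: int) -> int:
    """Multiply-adds for the dot products alone: one per dimension per vector."""
    return num_vectors * dim
```

At 1 million 1,024-dimensional vectors, `flops_per_query(1_000_000, 1024)` is about a billion multiply-adds for a single exact query, before any concurrency.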

VAST Data’s CNode-X platform addresses this with GPU-native vector search acceleration using NVIDIA’s cuVS libraries. When every layer of the data stack—from SQL queries to vector operations—runs on CUDA-accelerated infrastructure, the performance profile transforms. This isn’t incremental improvement; it’s architectural transformation. According to the technical specifications released with the CNode-X announcement, the platform integrates AMD EPYC 9005 processors with multiple NVIDIA RTX PRO 6000 Blackwell GPUs specifically to eliminate the CPU bottleneck that pilot systems never encounter.

Second, CPU-based pilots assume stateless operations. Each query arrives, gets processed, returns results, and disappears. Agents don’t remember previous interactions because the pilot doesn’t require it. But production agentic workflows demand persistent memory across sessions, context retention across multi-turn conversations, and coordination state across agent clusters.
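The difference between the two patterns fits in a few lines. In this hypothetical sketch, `stateless_handle` forgets everything, while `stateful_handle` reads and extends a per-session history. The in-memory `SessionStore` is an assumption for illustration; a production system would need durable, shared storage so any agent replica can resume a session, which is where the infrastructure pressure comes from.

```python
from collections import defaultdict
from typing import Dict, List


def stateless_handle(query: str) -> str:
    """Pilot-style handler: nothing survives the call."""
    return f"answer({query})"


class SessionStore:
    """Hypothetical in-process store keyed by session id.

    A dict only illustrates the shape; real deployments need durable,
    shared storage that every agent replica can reach.
    """

    def __init__(self) -> None:
        self._turns: Dict[str, List[str]] = defaultdict(list)

    def append(self, session_id: str, turn: str) -> None:
        self._turns[session_id].append(turn)

    def history(self, session_id: str, last_n: int = 5) -> List[str]:
        return self._turns[session_id][-last_n:]


def stateful_handle(store: SessionStore, session_id: str, query: str) -> str:
    """Agent-style handler: reads prior turns, then records the new one."""
    context = store.history(session_id)
    store.append(session_id, query)
    return f"answer({query} | prior_turns={len(context)})"
```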

The infrastructure implications are profound. Persistent memory requires high-speed access to context that spans gigabytes per agent, multiplied across potentially hundreds of concurrent agents. CPU-based architectures struggle because they’re optimized for request-response patterns, not stateful orchestration. GPU-accelerated platforms like CNode-X integrate NVIDIA BlueField-4 DPUs specifically to handle the Context Memory (CMX) Platform that enables agents to maintain state without the memory bottleneck.

Third, pilot systems assume cost scales proportionally with value. If the pilot costs $5,000 monthly and delivers measurable value, production at 100x scale should cost $500,000 monthly and deliver proportional returns. This assumption collapses when infrastructure inefficiencies compound at scale. Inefficient vector search multiplied by thousands of queries per second doesn’t just cost more; it costs superlinearly more, because each server you add to compensate for architectural limitations delivers less additional throughput than the one before it.
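One standard way to reason about why adding servers stops paying off is Neil Gunther’s Universal Scalability Law, C(N) = N / (1 + α(N − 1) + βN(N − 1)), where α models contention and β models coherency (cross-node coordination) cost. The sketch below, with hypothetical parameter values, finds how many servers a target throughput needs. With β > 0, capacity peaks and then declines, so some targets can’t be reached at any cluster size. Fit α and β to your own load-test data rather than trusting these numbers.

```python
from typing import Optional


def usl_capacity(n: int, alpha: float, beta: float) -> float:
    """Relative throughput of n servers under the Universal Scalability Law."""
    return n / (1 + alpha * (n - 1) + beta * n * (n - 1))


def servers_needed(target: float, alpha: float, beta: float, max_n: int = 10_000) -> Optional[int]:
    """Smallest n whose capacity reaches `target` (in single-server units)."""
    for n in range(1, max_n + 1):
        if usl_capacity(n, alpha, beta) >= target:
            return n
    return None  # unreachable: capacity peaks below the target, then declines


# Hypothetical parameters: no overhead, contention only, contention plus coherency.
for alpha, beta in ((0.0, 0.0), (0.05, 0.0), (0.05, 0.001)):
    print(alpha, beta, servers_needed(10, alpha, beta))
```

With no overhead, ten units of throughput need ten servers; contention alone roughly doubles that, and adding a coherency term makes the target unreachable.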

The 80% cost reduction that VAST Data reports for CNode-X deployments compared to traditional infrastructure isn’t about cheaper hardware. It’s about eliminating the compounding inefficiencies that only manifest at production scale. When your infrastructure is purpose-built for GPU-accelerated AI workloads rather than retrofitted from general-purpose servers, the economics fundamentally change.

The Agentic Memory Architecture Gap

The transition from simple RAG to multi-agent systems with persistent memory represents the second infrastructure discontinuity that pilots rarely expose. A stateless RAG system retrieves context, passes it to an LLM, returns a response, and forgets everything. An agentic system maintains conversation state, coordinates with other agents, learns from interactions, and persists knowledge across sessions.

Gartner’s identification of “multiagent systems” as a top technology trend for 2026 reflects the enterprise shift already underway. Organizations aren’t deploying single-purpose RAG systems anymore—they’re building agent ecosystems that require fundamentally different infrastructure.

Consider the memory hierarchy an agentic system requires. Short-term memory holds the current conversation context—typically the last few turns in a dialogue. Working memory maintains task state across a session—the steps completed, decisions made, and intermediate results. Long-term memory persists learned patterns, user preferences, and historical interactions across sessions. Coordination memory enables multiple agents to share state and avoid duplicating work.
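A hypothetical data structure makes the four tiers easier to compare. This is an illustration, not any framework’s API: short-term memory is a bounded deque of recent turns, working memory is a per-session dict, long-term memory survives `end_session`, and coordination memory lives outside any single agent so several agents can see it. A real deployment would keep the shared tier in a store with atomic compare-and-set rather than a module-level dict.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Any, Deque, Dict


@dataclass
class AgentMemory:
    """Hypothetical per-agent container for the short-term, working, and long-term tiers."""
    short_term: Deque[str] = field(default_factory=lambda: deque(maxlen=6))  # last few turns
    working: Dict[str, Any] = field(default_factory=dict)    # task state for this session
    long_term: Dict[str, Any] = field(default_factory=dict)  # persists across sessions

    def add_turn(self, turn: str) -> None:
        self.short_term.append(turn)  # the oldest turn drops off once the deque is full

    def end_session(self) -> None:
        """Promote anything marked as learned, then clear session-scoped tiers."""
        self.long_term.update(self.working.get("learned", {}))
        self.working.clear()
        self.short_term.clear()


# Coordination memory is shared by all agents, so it lives outside any one agent.
# In-process only: cross-process sharing needs an atomic compare-and-set store.
coordination: Dict[str, str] = {}


def claim_task(agent_id: str, task_id: str) -> bool:
    """True if this agent now owns the task; False if another agent claimed it first."""
    owner = coordination.setdefault(task_id, agent_id)
    return owner == agent_id
```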

CPU-based infrastructures struggle with this hierarchy because memory access patterns are radically different from stateless retrieval. A traditional RAG query might access a vector database once, retrieve 10-20 chunks, and terminate. An agentic workflow might access working memory dozens of times per second, update coordination state continuously, and persist learning incrementally throughout a session.

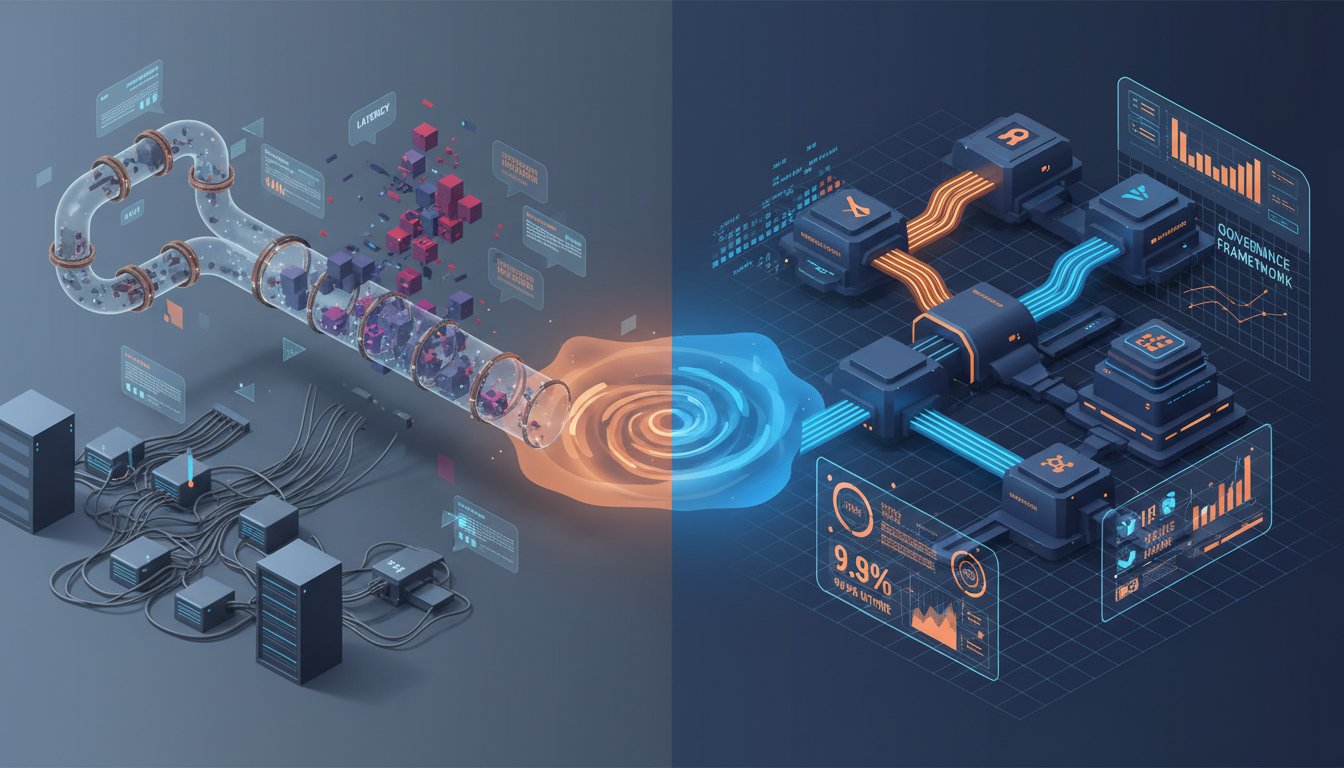

VAST CEO Renen Hallak described CNode-X as enabling “customers to operationalize retrieval, analytics, and agentic workflows as one coherent pipeline.” The critical phrase is “coherent pipeline.” Pilot systems cobble together disparate components—a vector database here, an LLM API there, some state management somewhere else. Production agentic systems require integrated infrastructure where memory, compute, and coordination operate as a unified architecture.

The technical implementation matters enormously. CNode-X integrates NVIDIA NIM microservices for agent deployment alongside the GPU-native SQL engine using cuDF libraries. This means an agent can query structured data, perform vector similarity search, update persistent state, and coordinate with other agents, all within the same CUDA-accelerated environment. The latency reduction from eliminating cross-system data movement enables interaction patterns that are impractical on traditional infrastructure.

Deloitte’s 2026 prediction that “AI agents will outpace their regulatory guardrails” reflects this capability gap. Agents running on infrastructure optimized for stateful orchestration can perform complex multi-turn reasoning at speeds that traditional systems can’t match. The infrastructure doesn’t just make agents faster—it makes entirely new agent architectures feasible.

The Hidden Cost Explosion Nobody Warns You About

Pilot ROI calculations are seductive because they’re based on constrained workloads that hide infrastructure inefficiencies. A pilot might process 1,000 queries daily with average response times under 500 milliseconds at a cost of $0.10 per query. Extrapolate to production at 1 million queries daily, and the financial case looks compelling: at the same $0.10 per query, roughly $100,000 daily in infrastructure costs for perhaps $150,000 in value delivered.

Then production launches and the actual costs arrive. Query response times spike to 3-5 seconds during peak load, forcing you to add compute capacity. The additional servers increase costs but don’t proportionally improve performance because the bottleneck is architectural, not a shortage of resources. Agent coordination overhead can grow quadratically as you add more agents to handle load. Suddenly you’re spending $400,000 daily to deliver $200,000 in value, and stakeholders are questioning the entire initiative.
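The two growth patterns in that story are easy to compare side by side. This sketch uses the pilot’s own $0.10-per-query figure for the linear projection and assumes all-to-all coordination for the agent count; hierarchical or hub-and-spoke topologies grow more slowly, so treat the quadratic curve as the worst case rather than a law.

```python
def linear_projection(cost_per_query: float, queries_per_day: int) -> float:
    """Pilot-style extrapolation: daily cost scales exactly with query volume."""
    return cost_per_query * queries_per_day


def pairwise_channels(num_agents: int) -> int:
    """Coordination links when every agent may need to sync with every other agent."""
    return num_agents * (num_agents - 1) // 2


print(f"${linear_projection(0.10, 1_000_000):,.0f} per day, projected linearly")
for agents in (10, 100):
    print(agents, "agents ->", pairwise_channels(agents), "pairwise channels")
```

Ten times as many agents means 110 times as many potential coordination links (4,950 versus 45), which a linear cost projection never captures.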

The VAST-NVIDIA announcement quantified this gap explicitly: 44% shorter query times and 80% lower costs. These aren’t separate achievements; they’re causally linked. Faster queries mean fewer resources needed to handle the same load, and lower resource requirements mean lower costs. But the key insight is that these improvements come from infrastructure architecture, not optimization tweaks.

A GPU-native AI data stack processes queries faster because data stays in GPU memory between pipeline stages. Traditional architectures shuttle data from CPU memory to GPU memory for embedding generation, back to CPU for vector search, potentially to GPU again for reranking, and then out to a separate serving cluster or an LLM provider’s API for inference. Each transfer adds latency and resource overhead.
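A back-of-envelope model shows why the hops matter. Everything here is an assumption to replace with measurements: the payload (10,000 candidate float32 embeddings of 1,024 dimensions), the hop count, and an effective host-to-device bandwidth of 25 GB/s, somewhat below the theoretical peak of a PCIe 4.0 x16 link. The model ignores serialization and network transfers, which are usually slower still.

```python
BYTES_PER_FLOAT32 = 4


def payload_bytes(num_vectors: int, dim: int) -> int:
    """Size of a batch of float32 embeddings."""
    return num_vectors * dim * BYTES_PER_FLOAT32


def transfer_ms(num_bytes: int, gb_per_s: float) -> float:
    """Time to move num_bytes over a link with the given effective bandwidth."""
    return num_bytes / (gb_per_s * 1e9) * 1_000


def pipeline_transfer_ms(num_bytes: int, hops: int, gb_per_s: float) -> float:
    """Total copy time if the same payload crosses the CPU/GPU boundary `hops` times."""
    return hops * transfer_ms(num_bytes, gb_per_s)


batch = payload_bytes(10_000, 1_024)  # hypothetical candidate batch, about 41 MB
print(f"{pipeline_transfer_ms(batch, hops=4, gb_per_s=25.0):.2f} ms per query in copies alone")
```

Under these assumptions, 1,000 queries per second would imply about 6.5 seconds of copy time every second, work that has to be spread across several links before any useful computation happens.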

When the entire pipeline, from data ingestion through vector search to agent orchestration, runs on CUDA-accelerated infrastructure, most of the data movement overhead disappears. The cost reduction isn’t about buying cheaper GPUs; it’s about eliminating the architectural tax that traditional systems impose.

This becomes existential for agentic workflows because agents generate far more internal queries than user-facing requests. A simple RAG system might process one vector search per user query. An agentic system might perform dozens: retrieving relevant context, checking working memory, coordinating with other agents, updating persistent state, and validating results. If each operation carries the overhead of cross-system data movement, the cost multiplier is catastrophic.
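The multiplier is simple arithmetic, sketched here with hypothetical numbers: a fixed per-operation overhead (5 ms, an assumption standing in for cross-system data movement) paid once per internal operation. A pilot that does one retrieval per request and an agent that performs two dozen internal operations differ by that operation count, and the factor carries straight through to daily compute time.

```python
def overhead_ms_per_request(ops_per_request: int, overhead_ms_per_op: float) -> float:
    """Data-movement overhead paid by one user request."""
    return ops_per_request * overhead_ms_per_op


def overhead_hours_per_day(
    requests_per_day: int, ops_per_request: int, overhead_ms_per_op: float
) -> float:
    """Aggregate overhead across a day's traffic, in compute-hours."""
    total_ms = requests_per_day * overhead_ms_per_request(ops_per_request, overhead_ms_per_op)
    return total_ms / 3_600_000  # milliseconds per hour


# Hypothetical comparison: one vector search per request versus 24 internal operations.
for label, ops in (("stateless RAG", 1), ("agentic workflow", 24)):
    hours = overhead_hours_per_day(1_000_000, ops, overhead_ms_per_op=5.0)
    print(f"{label}: {hours:.1f} compute-hours/day of pure overhead")
```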

Supermicro’s integration with the CNode-X platform, providing GPU-accelerated servers with their SuperCloud Suite for lifecycle management, addresses the operational cost dimension. Infrastructure that’s difficult to deploy, monitor, and maintain imposes hidden costs that pilot systems never reveal. When you’re managing hundreds of servers supporting production agentic workflows, operational overhead becomes a significant cost driver.

The Production Migration Decision Tree

The question facing enterprise AI teams isn’t whether to invest in production-grade infrastructure—it’s when and how. The VAST-NVIDIA announcement creates a clear decision point, but the right choice depends on your specific deployment context.

For organizations still in pilot phase, the decision tree is straightforward. If your roadmap includes scaling beyond simple stateless RAG to multi-agent systems with persistent memory, starting with GPU-native infrastructure avoids the painful migration later. The incremental cost of GPU-accelerated infrastructure at pilot scale is modest compared to the re-architecture cost of migrating a production system.

If your pilot is demonstrating value and stakeholders are demanding production deployment, the infrastructure decision becomes urgent. Scaling your pilot architecture to production volumes will expose the bottlenecks discussed earlier. The question is whether to invest in incremental improvements to your existing architecture or make the architectural leap to GPU-native infrastructure.

The decision factors include query volume, latency requirements, agentic complexity, and cost tolerance. If you’re processing fewer than 10,000 queries daily with simple stateless RAG and sub-second latency isn’t critical, traditional infrastructure might suffice. But if any of those constraints tighten—higher volume, stricter latency requirements, multi-agent coordination, or cost pressure—GPU-native infrastructure shifts from nice-to-have to necessity.
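The rule of thumb in that paragraph can be written as a small function. It is a hypothetical encoding of the paragraph’s criteria, with the 10,000-queries-per-day threshold taken from the text; it is not a sizing tool, and real thresholds depend on your latency budget and costs.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    queries_per_day: int
    agentic: bool  # multi-agent coordination or persistent memory required
    needs_sub_second: bool
    cost_pressure: bool


def recommend(w: Workload) -> str:
    """Traditional infrastructure only when every constraint stays loose."""
    simple = (
        w.queries_per_day < 10_000
        and not w.agentic
        and not w.needs_sub_second
        and not w.cost_pressure
    )
    return "traditional infrastructure may suffice" if simple else "evaluate GPU-native infrastructure"


print(recommend(Workload(5_000, agentic=False, needs_sub_second=False, cost_pressure=False)))
print(recommend(Workload(5_000, agentic=True, needs_sub_second=False, cost_pressure=False)))
```

Tightening any single constraint flips the answer, mirroring the paragraph’s point that one changed requirement is enough to move the decision.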

For organizations already running production RAG systems on traditional infrastructure, the migration calculation is more complex. You have working systems delivering value, but you’re likely experiencing the scaling challenges described earlier. The migration cost—both financial and operational—must be weighed against the ongoing inefficiency tax.

The 80% cost reduction metric from VAST Data provides a starting point for a quantifiable decision framework. Suppose your current infrastructure costs $500,000 annually and migration to CNode-X would cost $200,000 in effort plus $100,000 annually in ongoing costs. The resulting $400,000 in annual savings recovers the migration cost in about six months. More importantly, the performance improvements enable capabilities your current architecture can’t support, creating new value opportunities.
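Here is the payback arithmetic, using the paragraph’s hypothetical figures. Going from $500,000 to $100,000 a year is exactly the 80% reduction; if your realized savings are smaller, rerun the numbers with your own inputs.

```python
from typing import Optional


def payback_years(current_annual: float, migration_cost: float, new_annual: float) -> Optional[float]:
    """Years until cumulative savings cover the one-time migration cost."""
    annual_savings = current_annual - new_annual
    if annual_savings <= 0:
        return None  # migration never pays for itself on cost alone
    return migration_cost / annual_savings


def net_savings(current_annual: float, migration_cost: float, new_annual: float, years: int) -> float:
    """Cumulative savings after `years`, net of the migration cost."""
    return years * (current_annual - new_annual) - migration_cost


print(payback_years(500_000, 200_000, 100_000))          # 0.5
print(net_savings(500_000, 200_000, 100_000, years=3))   # 1000000
```

If the realized reduction were only 40% ($300,000 a year), the same migration would take a full year to pay back.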

The agentic roadmap question is perhaps most critical. If your strategy includes deploying multi-agent systems with persistent memory—and Gartner’s inclusion of multiagent systems in their top 2026 trends suggests most enterprises are heading this direction—then your infrastructure decision should account for future requirements, not just current needs.

CNode-X’s integration of NVIDIA BlueField-4 DPUs for context memory specifically enables persistent agent memory at scale. If your roadmap includes agents that “work on complex problems over extended periods without forgetting,” as Jensen Huang described, then infrastructure capable of supporting that architecture becomes a strategic enabler, not just a cost center.

The Coherent Pipeline Imperative

Renen Hallak’s phrase “coherent pipeline” captures the fundamental shift in how we should think about RAG infrastructure. Pilot systems succeed despite being cobbled together from disparate components because the workload doesn’t stress the integration points. Production agentic systems fail when the pipeline fragments under load.

A coherent pipeline means data flows through retrieval, analytics, and agentic workflows without architectural discontinuities. Vector search happens in the same execution environment as SQL analytics. Agent memory updates happen in the same context as LLM inference. Coordination state persists in the same infrastructure as long-term knowledge.

This integration enables optimizations that are impractical in fragmented architectures. When your SQL engine (cuDF), vector search (cuVS), and agent orchestration (NIM microservices) all run on CUDA-accelerated infrastructure, the platform can optimize across the entire pipeline rather than one component at a time. Data structures stay in GPU memory. Execution plans account for the full workflow, not just individual components.

The performance implications compound in agentic workflows. An agent might execute a workflow like: query SQL database for customer history, perform vector search for relevant product documentation, update working memory with findings, coordinate with another agent to validate recommendations, generate a response, and persist the interaction to long-term memory. If each step requires marshaling data between different execution environments, the latency accumulates catastrophically.
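A toy latency budget for that workflow shows how boundary costs accumulate. The step names mirror the paragraph; every millisecond value is a made-up placeholder, including the per-boundary marshaling cost, so substitute traces from your own system. The useful output is the share of end-to-end time spent crossing boundaries rather than doing work.

```python
from typing import List, Tuple

# (step, compute_ms): hypothetical placeholder timings, not measurements.
WORKFLOW: List[Tuple[str, float]] = [
    ("query_sql_customer_history", 8.0),
    ("vector_search_product_docs", 5.0),
    ("update_working_memory", 1.0),
    ("coordinate_validation_agent", 4.0),
    ("generate_response", 400.0),
    ("persist_long_term_memory", 2.0),
]


def end_to_end_ms(steps: List[Tuple[str, float]], marshal_ms_per_boundary: float) -> float:
    """Step compute plus one marshaling cost at each hand-off between steps."""
    compute = sum(ms for _, ms in steps)
    boundaries = len(steps) - 1
    return compute + boundaries * marshal_ms_per_boundary


def boundary_share(steps: List[Tuple[str, float]], marshal_ms_per_boundary: float) -> float:
    """Fraction of total latency spent moving data between execution environments."""
    total = end_to_end_ms(steps, marshal_ms_per_boundary)
    return (len(steps) - 1) * marshal_ms_per_boundary / total


for marshal in (15.0, 0.5):
    print(f"marshal={marshal} ms: total={end_to_end_ms(WORKFLOW, marshal):.1f} ms, "
          f"boundary share={boundary_share(WORKFLOW, marshal):.0%}")
```

In this particular made-up profile, generation dominates, so the boundary share matters most for workflows with many short steps or for agents that loop through the sequence repeatedly.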

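On coherent infrastructure, the data-movement overhead between those steps shrinks to a small fraction of the total, because intermediate data stays in GPU memory and the execution plan is optimized end-to-end. What remains is the compute each step genuinely needs, with LLM generation usually the largest share. The performance difference isn’t incremental: it’s the difference between agents that feel responsive and agents that feel broken.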

The Infrastructure-First Mindset

The most important lesson from the VAST-NVIDIA announcement isn’t about specific products; it’s about recognizing infrastructure as a first-order concern for enterprise RAG success. Too many organizations treat infrastructure as an afterthought: build the application logic, demonstrate value, then figure out how to deploy it at scale.

This approach worked in the pre-AI era when application performance was primarily software-determined. Optimize your algorithms, add some caching, scale horizontally, and you could usually achieve acceptable performance. AI workloads, particularly agentic systems with persistent memory, break this assumption.

Infrastructure determines what’s architecturally possible. You can’t build agents with rich persistent memory on infrastructure optimized for stateless request-response patterns. Holding sub-100ms query latency on CPU-based vector search gets progressively harder and more expensive as query volume and corpus size grow. You can’t economically support thousands of concurrent agents on traditional architectures.

The infrastructure-first mindset means evaluating infrastructure capabilities before finalizing application architecture. If your infrastructure can’t support sub-second vector search across 10 million documents, don’t design an agent workflow that requires it. If your infrastructure can’t maintain gigabytes of agent working memory with microsecond access latency, don’t architect complex multi-agent coordination that depends on it.

Conversely, investing in infrastructure that enables new capabilities opens design space. When you know your infrastructure can support persistent agent memory with minimal latency overhead, you can architect agents that learn continuously from interactions. When your infrastructure provides coherent pipelines from data ingestion through agent orchestration, you can design workflows that would be infeasible on fragmented architectures.

The market data supports this shift. MarketsandMarkets projects the RAG market reaching $9.86 billion by 2030, while ResearchAndMarkets forecasts the broader market surpassing $40 billion by 2035 with multimodal RAG growing at 25.7% CAGR. These projections reflect enterprise adoption moving from pilots to production at scale—exactly the inflection point where infrastructure decisions become critical.

Looking Forward: The Agent Infrastructure Evolution

The VAST-NVIDIA partnership represents the first wave of purpose-built infrastructure for agentic AI workflows. The technical architecture—CUDA-accelerated at every layer, integrated context memory, coherent data pipelines—will likely become the template for next-generation AI infrastructure.

But this is just the beginning. As multi-agent systems become more sophisticated, infrastructure requirements will evolve in predictable directions. Agent coordination will require distributed consensus mechanisms with latency measured in microseconds, not milliseconds. Persistent memory will need to scale to terabytes per agent cluster while maintaining consistent access patterns. Learning from interactions will require real-time model updates integrated directly into the agent execution environment.

The infrastructure pattern of bringing compute to data, rather than moving data to compute, will intensify. GPU-native data stacks minimize data movement between storage and compute. Future architectures will likely push this further, integrating learning and inference directly into storage controllers to eliminate even intra-system data movement.

The regulatory implications Deloitte highlighted—agents outpacing their guardrails—will create new infrastructure requirements. When agents operate at speeds where human oversight is impractical, the infrastructure must enforce constraints: rate limiting, approval workflows, audit logging, and safety checks integrated at the execution layer, not bolted on externally.
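A minimal sketch of what enforcement at the execution layer can mean: every agent action passes through one gate that applies a token-bucket rate limit, requires approval for designated actions, and writes an audit record whatever the outcome. The `Guardrail` and `TokenBucket` classes and the approval callback are hypothetical illustrations, not part of any product mentioned in this article.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable, List, Optional, Set


@dataclass
class TokenBucket:
    """Token-bucket rate limiter: holds up to `capacity` tokens, refills continuously."""
    capacity: float
    refill_per_s: float
    last: float = field(default_factory=time.monotonic)
    tokens: float = field(init=False)

    def __post_init__(self) -> None:
        self.tokens = self.capacity  # start with a full bucket

    def allow(self, now: Optional[float] = None) -> bool:
        now = time.monotonic() if now is None else now
        # Refill for the time elapsed since the last check, capped at capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.refill_per_s)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


@dataclass
class Guardrail:
    """Single choke point that every agent action must pass through."""
    bucket: TokenBucket
    needs_approval: Set[str]
    approver: Callable[[str, str], bool]  # (agent_id, action) -> approved?
    audit_log: List[str] = field(default_factory=list)

    def execute(self, agent_id: str, action: str, run: Callable[[], Any],
                now: Optional[float] = None) -> Any:
        # Rate limit first: a denied approval still consumes a token,
        # which also throttles agents that keep retrying blocked actions.
        if not self.bucket.allow(now):
            self.audit_log.append(f"{agent_id} {action} DENIED rate_limit")
            return None
        if action in self.needs_approval and not self.approver(agent_id, action):
            self.audit_log.append(f"{agent_id} {action} DENIED approval")
            return None
        self.audit_log.append(f"{agent_id} {action} ALLOWED")
        return run()


# Usage: bursts of two actions, refilling one per second; transfers are never auto-approved.
gate = Guardrail(TokenBucket(capacity=2, refill_per_s=1.0, last=0.0),
                 needs_approval={"wire_transfer"},
                 approver=lambda agent, action: False)
print(gate.execute("agent-1", "search", lambda: "results", now=0.0))      # results
print(gate.execute("agent-1", "wire_transfer", lambda: "sent", now=0.0))  # None
print(gate.execute("agent-1", "search", lambda: "results", now=0.0))      # None (rate limited)
print(gate.audit_log)
```

Because the gate sits in the call path rather than in a separate monitoring system, an agent cannot act faster than the bucket allows or skip the audit record.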

Physical AI, which Gartner identifies as a key 2026 trend, will stress infrastructure in novel ways. Agents controlling physical systems require guaranteed response times, fail-safe architectures, and real-time coordination across sensors and actuators. The infrastructure patterns emerging for enterprise RAG will need to extend to edge deployments with radically different latency and reliability constraints.

Conclusion: The Infrastructure Clarity Moment

Every enterprise AI team faces the same infrastructure decision, whether they recognize it or not. You can scale your pilot architecture and discover its limitations in production, or you can architect for production requirements from the start. The VAST-NVIDIA CNode-X announcement provides a clarity moment: GPU-native, CUDA-accelerated, coherent pipeline infrastructure isn’t a luxury for organizations with unlimited budgets—it’s the baseline for production agentic AI.

The metrics tell the story: 44% lower query time and 80% lower query cost, with capabilities like persistent agent memory that traditional architectures simply can’t support. But the deeper insight is about recognizing infrastructure as the foundation that enables or constrains everything else. Your retrieval algorithms, agent architectures, and orchestration patterns all operate within the possibility space your infrastructure defines.

The organizations that will successfully deploy production agentic RAG systems at scale in 2026 and beyond aren’t necessarily those with the most sophisticated ML teams or the largest training budgets. They’re the ones that recognized infrastructure as a strategic enabler and invested accordingly. They understood that the infrastructure decisions made during pilot phase determine production feasibility far more than the retrieval algorithms they chose.

Your RAG pilot’s success created momentum and stakeholder buy-in. The infrastructure choices you make now determine whether that momentum carries through to production success or crashes into the architectural limitations that pilots never expose. The GPU-native, coherent pipeline infrastructure pattern is emerging as the production standard. The question is whether you’ll adopt it proactively or reactively—after your pilot architecture fails at scale.

The infrastructure awakening is happening across enterprise AI. The teams that embrace it early, building on foundations designed for agentic workflows with persistent memory, will define what’s possible. The teams that defer the infrastructure decision, hoping to scale pilot architectures through incremental optimization, will discover that some problems can’t be optimized away—they require architectural transformation. Make your infrastructure choices with production requirements in mind, and your agents will have the foundation they need to work on complex problems over extended periods without forgetting—exactly as Jensen Huang envisioned.