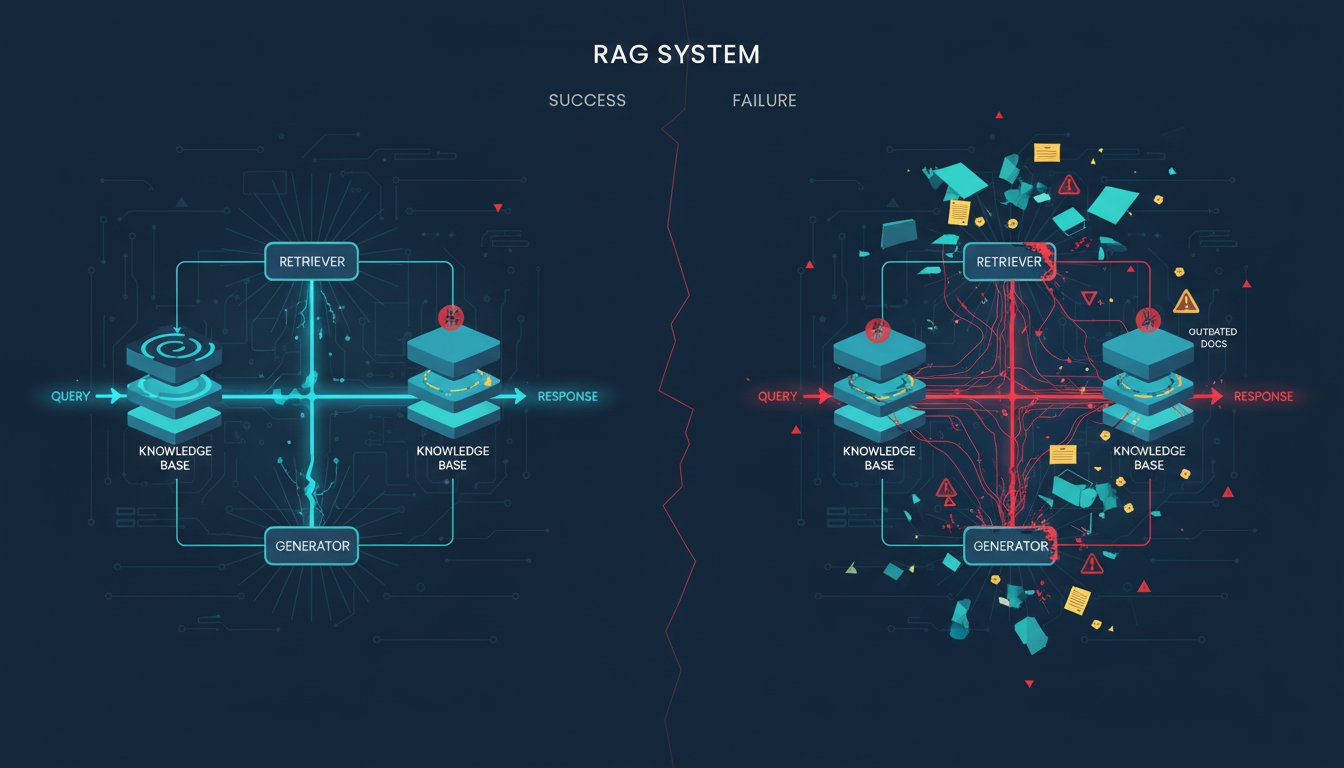

The gap between a working RAG prototype and a production system that actually delivers business value is wider than most organizations realize. You can build something that works beautifully in a demo environment—retrieving documents, generating responses, passing internal tests—and still watch it fail spectacularly when enterprises put real data through it at scale.

Here’s the uncomfortable truth: it’s not the AI models failing. It’s the engineering. Approximately 73-80% of enterprise RAG deployments fail in production, and research consistently shows that these failures stem not from model quality but from infrastructure decisions made in the first few weeks of development. The teams that succeed aren’t the ones with the smartest algorithms—they’re the ones who understood that RAG is fundamentally a data engineering problem dressed up in AI language.

This is where most technical leaders get it wrong. They evaluate RAG implementations the way they evaluate traditional software: Does it work? Is the latency acceptable? Does it scale to 10,000 queries per day? But enterprise RAG systems fail for reasons that don’t show up in those metrics. A system can handle 10,000 queries per day and still fail because it can’t reliably index new documents as they arrive. A retrieval system can achieve sub-second latency and still fail because it returns results from outdated or irrelevant documents 15% of the time. A production RAG pipeline can run without errors for three months and then silently degrade when it encounters documents in a different format from the ones it was built and tested on.

The organizations that are winning in production RAG have recognized that success requires rethinking the entire architecture stack—not just the retrieval model, but how documents flow into the system, how they’re indexed, how results are ranked, and critically, how the system alerts you when something goes wrong. This post breaks down exactly where those 73% of systems fail and what the successful 27% are doing differently.

The Infrastructure Blind Spot: Why Your Document Pipeline Is Your Biggest Liability

Every enterprise RAG failure that looks like a retrieval problem is actually a data ingestion problem.

Consider this scenario: A financial services firm deploys a RAG system to answer regulatory questions. The system works perfectly for three weeks. Compliance officers love it. Then, someone uploads a new quarterly report in a slightly different format—maybe the headers changed, or the footnotes are structured differently. The system continues to run without errors. No alerts fire. No exceptions are thrown. But retrieval quality quietly drops by 22% because the document processor was designed for one specific format.

This is the silent failure that characterizes 40% of production RAG degradation. The system doesn’t crash. It doesn’t report errors. It just slowly stops being useful while everyone assumes it’s working fine.

The engineering gap appears in four critical places:

Document Processing Bottlenecks. Most teams build RAG systems to handle 5,000 documents in initial testing, then wonder why the system can’t scale to 50,000 documents without exploding memory usage or retrieval latency. The issue: they never designed the document processing pipeline with scale in mind. Chunking strategies that work for technical manuals fail for financial reports. Metadata extraction that handles PDFs breaks on images embedded in Word documents. Vector embedding batching that’s efficient at 1,000 documents becomes the bottleneck at 100,000.

Enterprise teams that succeed build abstraction layers into their document pipelines. Instead of one monolithic “process and embed” pipeline, they create modular processing chains: one path for PDFs, one for structured data, one for images. They implement incremental indexing so new documents don’t require reprocessing the entire corpus. They build observability into the pipeline so they know exactly which document formats are causing latency or processing failures.
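As a rough illustration of the modular, incremental pipeline described above, here is a minimal sketch. The processor functions, the `PROCESSORS` table, and the `IncrementalIndexer` class are all hypothetical names invented for this example; a real pipeline would plug in actual parsers and a persistent store for the hashes.

```python
import hashlib
from pathlib import Path
from typing import Callable, Dict, List

# Hypothetical per-format processors. Real ones would call a PDF parser, OCR, etc.
def process_pdf(raw: bytes) -> List[str]:
    return [f"pdf-chunk:{len(raw)}"]

def process_structured(raw: bytes) -> List[str]:
    return [line for line in raw.decode("utf-8").splitlines() if line.strip()]

# One processing path per format instead of a single monolithic function.
PROCESSORS: Dict[str, Callable[[bytes], List[str]]] = {
    ".pdf": process_pdf,
    ".csv": process_structured,
}

class IncrementalIndexer:
    """Skips documents whose content hash is unchanged since the last run."""

    def __init__(self) -> None:
        self.seen: Dict[str, str] = {}      # document name -> content hash
        self.failures: Dict[str, int] = {}  # file extension -> failure count

    def ingest(self, name: str, raw: bytes) -> List[str]:
        digest = hashlib.sha256(raw).hexdigest()
        if self.seen.get(name) == digest:
            return []  # unchanged: nothing to reprocess
        ext = Path(name).suffix.lower()
        processor = PROCESSORS.get(ext)
        if processor is None:
            # Count unsupported formats so they show up in dashboards
            # instead of disappearing silently.
            self.failures[ext] = self.failures.get(ext, 0) + 1
            return []
        chunks = processor(raw)
        self.seen[name] = digest
        return chunks
```

Only new or changed documents produce chunks to embed, and the `failures` counter gives a per-format signal about which inputs the pipeline cannot handle.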

Indexing Strategy Failures. You need two indexes in production, a keyword index (BM25) for precise term matching and a vector index for semantic search, and most teams only build one. But here’s where the engineering gap kills systems: the teams that succeed recognize that these aren’t interchangeable. BM25 excels at finding documents containing specific terms, such as “regulation 10.2.3” or “product SKU 457-B”, while vector search excels at conceptual retrieval, such as “rules about financial disclosure.” Hybrid search that combines both typically improves recall and precision over either index alone.

The failure pattern: Teams build a beautiful vector search system, watch it work perfectly on semantic queries, then get hit with production queries that need exact term matching. The system retrieves documents that contain semantically related information but miss the specific requirement the user asked for. They add BM25 as an afterthought, implement poor weighting between the two search types, and end up with a system that’s slower and no more accurate than either approach alone.
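One common way to sidestep fragile score weighting between the two search types is reciprocal rank fusion (RRF), which combines results by rank position rather than by raw score. This is a technique swapped in for illustration, not something the teams described here are known to use. The document IDs are made up, and `k = 60` is the constant commonly used with RRF.

```python
from collections import defaultdict
from typing import Dict, List

def reciprocal_rank_fusion(rankings: List[List[str]], k: int = 60) -> List[str]:
    """Fuse several ranked lists of document IDs into one ranking."""
    scores: Dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            # Each list contributes 1 / (k + rank) for every document it returns.
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID so output is deterministic.
    return sorted(scores, key=lambda d: (-scores[d], d))

# Hypothetical results from the two indexes for the same query.
bm25_hits = ["doc_cfr", "doc_a", "doc_b"]
vector_hits = ["doc_a", "doc_c", "doc_cfr"]
fused = reciprocal_rank_fusion([bm25_hits, vector_hits])
# fused == ["doc_a", "doc_cfr", "doc_c", "doc_b"]
```

Documents that both indexes rank highly rise to the top, and no tuning is needed to make BM25 scores and cosine similarities comparable.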

The right approach requires deciding upfront which queries your system will face. Will compliance officers search for “regulations affecting underwriting”? That’s vector search territory. Will they search for “12 CFR 1026.2”? That’s pure BM25. Enterprise teams that win spend weeks analyzing actual production queries before they finalize their indexing strategy.
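To make the query-type distinction concrete, here is a minimal sketch of a router that sends identifier-style queries to the keyword index and everything else to vector search. The regular expressions are assumptions written for the examples in this post; in practice they would come from the analysis of real production queries.

```python
import re

# Assumed patterns for "exact identifier" queries: CFR citations, dotted
# section numbers, and SKU-like codes.
EXACT_PATTERNS = [
    re.compile(r"\b\d+\s+CFR\s+\d+(\.\d+)*\b", re.IGNORECASE),
    re.compile(r"\b\d+(\.\d+){2,}\b"),
    re.compile(r"\bSKU\s+[\w-]+\b", re.IGNORECASE),
]

def route_query(query: str) -> str:
    """Return 'keyword' for identifier-style queries, otherwise 'vector'."""
    if any(pattern.search(query) for pattern in EXACT_PATTERNS):
        return "keyword"
    return "vector"

# route_query("12 CFR 1026.2")                      -> "keyword"
# route_query("regulations affecting underwriting") -> "vector"
```

A hybrid system might use the same signal to adjust weighting rather than to pick a single index outright.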

Latency Degradation Under Load. Sub-second retrieval latency looks impressive in a pilot. Then your organization deploys it across 500 users. Suddenly, average latency creeps to 2.3 seconds. Then 3.8 seconds. The system isn’t crashing, but users are abandoning queries before they complete.

The culprit is almost never the language model. It’s database query contention, insufficient caching, or retrieval logic that wasn’t designed for concurrent access patterns. Vector database performance degrades non-linearly as query volume increases if you haven’t implemented proper caching strategies or query optimization.

Successful systems implement strategic caching at multiple levels: cache the 100 most-queried documents, cache embedding vectors for frequently accessed chunks, cache reranking scores. They profile their RAG pipelines to identify exactly which stage causes latency under load: document retrieval, embedding computation, reranking, or LLM inference. Once that stage is identified, they optimize the actual bottleneck instead of applying blanket changes that make every stage incrementally slower.
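A minimal sketch of both ideas, per-stage timing and a cache in front of an expensive step, might look like the following. The `embed` and `answer` functions are placeholders standing in for a real embedding model, vector database, and LLM call; only the timing and caching mechanics are the point.

```python
import time
from collections import defaultdict
from contextlib import contextmanager
from functools import lru_cache
from typing import Dict, List, Tuple

stage_timings: Dict[str, List[float]] = defaultdict(list)

@contextmanager
def timed(stage: str):
    """Record wall-clock seconds spent in one pipeline stage."""
    start = time.perf_counter()
    try:
        yield
    finally:
        stage_timings[stage].append(time.perf_counter() - start)

@lru_cache(maxsize=10_000)
def embed(text: str) -> Tuple[float, ...]:
    # Placeholder embedding; a real system would call its embedding model here.
    return tuple(float(ord(c)) for c in text[:8])

def answer(query: str) -> str:
    with timed("embedding"):
        vector = embed(query)  # repeated queries are served from the cache
    with timed("retrieval"):
        docs = [f"doc-{int(sum(vector)) % 3}"]  # stand-in for a vector DB call
    with timed("generation"):
        return f"answer from {docs[0]}"  # stand-in for LLM inference

def slowest_stage() -> str:
    """Stage with the highest mean latency recorded so far."""
    return max(
        stage_timings,
        key=lambda s: sum(stage_timings[s]) / len(stage_timings[s]),
    )
```

After a load test, `slowest_stage()` points at the stage worth optimizing, and `embed.cache_info()` shows how many calls the cache absorbed.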

Silent Data Drift. Enterprise documents evolve. Formatting changes. New document types appear. Terminology shifts. Your RAG system, built on documents from three months ago, silently becomes less accurate as the document corpus changes. No errors. No alerts. Just gradual quality degradation that nobody notices until users start complaining.

This requires RAG-specific monitoring that goes beyond traditional system metrics. You need to track:

– Retrieval quality degradation over time (are results becoming less relevant?)

– Document format consistency (are new documents matching the expected structure?)

– Embedding quality drift (are new documents being represented differently in vector space?)

– Result relevance changes (do users still find results helpful?)

Successful enterprise systems implement automated quality checks that run daily: sample random retrieved documents, verify they contain relevant information, flag when quality drops below threshold, alert the team.
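One way such a daily check could be sketched is shown below. It assumes a small hand-labeled "golden set" that maps queries to the document IDs known to be relevant, which is an assumption of this example rather than something the post prescribes. The `retrieve` callable and the 0.9 threshold are likewise placeholders.

```python
import random
from typing import Callable, Dict, List, Set

def hit_rate_at_k(
    golden: Dict[str, Set[str]],
    retrieve: Callable[[str], List[str]],
    k: int = 5,
    sample_size: int = 50,
    seed: int = 0,
) -> float:
    """Fraction of sampled queries with at least one relevant doc in the top k."""
    if not golden:
        raise ValueError("golden set is empty")
    rng = random.Random(seed)
    queries = sorted(golden)
    sample = rng.sample(queries, min(sample_size, len(queries)))
    hits = sum(1 for q in sample if set(retrieve(q)[:k]) & golden[q])
    return hits / len(sample)

def check_quality(score: float, threshold: float = 0.9) -> str:
    # A real system would page someone or post to a channel instead.
    if score >= threshold:
        return "ok"
    return f"ALERT: hit rate {score:.2f} < {threshold:.2f}"
```

Run on a schedule, the returned score becomes a time series, and a drop below the threshold is the alert that the silent-failure scenario otherwise lacks.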

The Scale Question: Why 20,000 Documents Changes Everything

RAG systems fail predictably at scale. The failure isn’t gradual—it’s a cliff.

You build a beautiful system with 2,000 documents. Latency: 450ms. Retrieval accuracy: 94%. You scale to 5,000 documents. Still working. 10,000 documents. Still working. Then you hit 20,000 documents and everything breaks at once.

Why? Because vector search performance degrades non-linearly with corpus size, and you’re now hitting the actual constraints of your chosen infrastructure. If you’re using an in-memory vector database, you just ran out of memory. If you’re using a cloud service with default settings, you’ve exceeded the query quota. If you haven’t built an approximate nearest-neighbor index, each query now performs a comparison against every stored vector, which means millions of comparisons instead of thousands.

Enterprise teams that succeed plan for this cliff from day one. They don’t pilot with 2,000 documents and assume linear scaling. They load 50,000 documents from the beginning, run performance tests, identify where latency starts breaking down, and build their infrastructure to handle it.

This requires understanding your vector database’s actual performance characteristics:

– How does query latency change as corpus size increases? (Hint: it’s not linear.)

– What’s the maximum throughput your database can handle at your target corpus size?

– How do you partition or shard the index if a single database instance can’t handle your scale?

– What’s the memory footprint of your stored embeddings (and the index built over them) at scale?
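The memory question in particular can be answered with back-of-the-envelope arithmetic before any load test. The sketch below counts only raw float32 vectors. The chunks-per-document figure and the embedding dimension are assumed values for illustration, and real indexes add their own overhead on top.

```python
def raw_vector_bytes(num_chunks: int, dim: int, bytes_per_value: int = 4) -> int:
    """Bytes needed to hold the raw vectors (float32 by default)."""
    return num_chunks * dim * bytes_per_value

# Assumed workload: 100,000 documents, about 20 chunks each, 1024-dim embeddings.
docs = 100_000
chunks_per_doc = 20
dim = 1024

total = raw_vector_bytes(docs * chunks_per_doc, dim)
gib = total / 2**30
print(f"{total:,} bytes = {gib:.2f} GiB")  # 8,192,000,000 bytes = 7.63 GiB
```

Under these assumptions the vectors alone need about 7.6 GiB, which is the kind of number that decides whether an in-memory database on a single instance is viable.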

Successful implementations often use tiered retrieval: broad keyword search first (BM25 over an inverted index stays cheap as the corpus grows), then vector search on the filtered results. This reduces the vector search problem from “search 100,000 documents” to “search 500 documents.”
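Tiered retrieval can be sketched in a few lines. In this example a simple term-overlap count stands in for BM25 and a plain cosine similarity stands in for the vector database; the function names and the toy data are hypothetical.

```python
import math
from typing import Dict, List, Sequence

def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def tiered_search(
    query_terms: List[str],
    query_vec: Sequence[float],
    texts: Dict[str, str],
    vectors: Dict[str, Sequence[float]],
    prefilter_size: int = 500,
    top_n: int = 5,
) -> List[str]:
    # Stage 1: cheap keyword filter (term-overlap count stands in for BM25).
    terms = {t.lower() for t in query_terms}
    overlap = {
        doc_id: len(terms & set(text.lower().split()))
        for doc_id, text in texts.items()
    }
    candidates = sorted(
        (d for d in overlap if overlap[d] > 0),
        key=lambda d: (-overlap[d], d),
    )[:prefilter_size]
    # Stage 2: vector similarity computed only over the surviving candidates.
    return sorted(
        candidates, key=lambda d: (-cosine(query_vec, vectors[d]), d)
    )[:top_n]

texts = {
    "a": "disclosure rules for lenders",
    "b": "lunch menu",
    "c": "lender disclosure timing",
}
vectors = {"a": [1.0, 0.0], "b": [0.9, 0.1], "c": [0.6, 0.8]}
result = tiered_search(["disclosure"], [1.0, 0.0], texts, vectors)
# result == ["a", "c"]
```

Note the trade-off this makes visible: document "b" is never scored by the vector stage because it shares no keyword with the query, so the prefilter has to be generous enough not to discard semantically relevant documents.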

The Reranking Reality: Why Your First-Pass Retrieval Isn’t Good Enough

Here’s a fact that separates production RAG systems from prototypes: you need a reranking step, and most teams don’t implement it.

Your retrieval system (whether BM25, vector search, or hybrid) is designed to maximize recall—find all potentially relevant documents. It’s not optimized for precision. A hybrid search might retrieve 50 documents that could be relevant to a query. Your LLM can only meaningfully process the top 3-5.

Reranking takes those 50 documents and orders them by actual relevance to your specific query. A lightweight reranker (far cheaper than an LLM) can review those 50 documents and confidently rank them. This does two critical things:

First, it improves answer quality. Your LLM sees the most relevant documents first, so it generates better responses. You’re no longer playing retrieval roulette where sometimes the best information appears 30th in the list.

Second, it dramatically reduces token consumption. Instead of feeding your LLM 50 documents hoping one contains the answer, you feed it 5 carefully selected documents, roughly a tenfold cut in context tokens per query. At scale, that is the difference between a $50 daily API bill and a $500 one.

Production systems implement reranking as a standard pipeline stage. The most common approach uses a dedicated reranking model (like Cohere Rerank or open-source alternatives like mxbai-rerank-large) that’s optimized for this specific task. You send your top-K retrieved documents to the reranker, get back a relevance score for each, and keep only the top-N for LLM processing.
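The shape of that pipeline stage can be sketched as follows. The scoring function is deliberately pluggable: `overlap_score` is a toy stand-in written for this example, and in production the `score` argument would wrap whichever reranking model you use. No specific vendor API is assumed here.

```python
from typing import Callable, List, Tuple

def rerank(
    query: str,
    docs: List[str],
    score: Callable[[str, str], float],
    top_n: int = 5,
) -> List[Tuple[str, float]]:
    """Score every retrieved doc against the query and keep the best top_n."""
    scored = [(doc, score(query, doc)) for doc in docs]
    # Python's sort is stable, so equal scores keep their retrieval order.
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_n]

def overlap_score(query: str, doc: str) -> float:
    """Toy scorer: fraction of query words that appear in the doc."""
    q = set(query.lower().split())
    return len(q & set(doc.lower().split())) / len(q) if q else 0.0

docs = [
    "refund policy for enterprise plans",
    "office holiday schedule",
    "enterprise refund timeline and policy",
]
top = rerank("enterprise refund policy", docs, overlap_score, top_n=2)
# The holiday schedule is dropped; only the two refund documents reach the LLM.
```

Keeping the scorer behind a simple callable also makes it easy to swap reranking models later without touching the rest of the pipeline.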

The teams that win have learned this lesson: basic retrieval plus LLM is not a production-grade RAG system. You need orchestration—multiple retrieval passes, filtering, reranking, and intelligent result composition.

The Compliance Nightmare: Building RAG Systems That Enterprises Can Actually Audit

Enterprise RAG systems must be auditable. Not just theoretically—actually auditable in a way that satisfies compliance teams, legal departments, and regulators.

This changes everything about how you build the system. You can’t just return an answer. You must return the answer alongside the specific documents that generated it, the exact spans within those documents that were used, confidence scores, and a complete audit trail of what retrieval was attempted and what was returned.

Compliance-grade RAG systems require:

Source Attribution. Every response must be traceable to the documents that generated it. Not “this came from the customer service manual.” Specifically: “this came from page 3, section 2.1, paragraphs 2-4 of the customer service manual from October 2024.”

Document Lineage. You need to know the entire journey of each document through the system: when it was uploaded, who uploaded it, what processing it underwent, when it was indexed, how it’s been accessed.

Hallucination Prevention. The system must be configured to refuse to answer questions when it doesn’t have confident information. A chatbot RAG system might say “I don’t know.” A compliance-grade RAG system must say “I don’t know and here’s the confidence threshold that triggered this refusal.”

Access Control Integration. If a document is restricted (only accessible to certain roles), the RAG system must respect those boundaries. A retrieval system can find a document, but if the current user doesn’t have access to it, the system must not return it.

The engineering required for this is substantial. You’re no longer building a simple retrieval + LLM pipeline. You’re building a secure, auditable system with sophisticated access control, source tracking, and confidence estimation.
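To give a sense of what these requirements mean in code, here is a minimal sketch that combines access control, a confidence-based refusal, and passage-level citations. The `Passage` and `AuditedAnswer` types, the role names, and the 0.7 threshold are all hypothetical, and the LLM call is replaced by a placeholder.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Optional, Set

@dataclass(frozen=True)
class Passage:
    doc_id: str
    page: int
    section: str
    text: str
    allowed_roles: FrozenSet[str]
    score: float  # retrieval or rerank confidence in [0, 1]

@dataclass
class AuditedAnswer:
    answer: Optional[str]
    citations: List[str]
    refused: bool
    reason: str = ""

def answer_with_audit(
    passages: List[Passage], user_roles: Set[str], min_score: float = 0.7
) -> AuditedAnswer:
    # Enforce access control before anything can reach the LLM.
    visible = [p for p in passages if p.allowed_roles & user_roles]
    confident = [p for p in visible if p.score >= min_score]
    if not confident:
        # Refuse, and record the threshold that triggered the refusal.
        return AuditedAnswer(
            None, [], True, f"no accessible passage scored >= {min_score}"
        )
    citations = [
        f"{p.doc_id}, page {p.page}, section {p.section}" for p in confident
    ]
    # Placeholder for the LLM call: a real system would generate from `confident`.
    return AuditedAnswer(confident[0].text, citations, False)
```

Every response object carries either its citations or the reason it was refused, which is the raw material an audit trail is built from.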

Successful enterprise implementations add a retrieval verification layer: after retrieving documents, verify that the LLM’s response actually matches the retrieved content. Did it hallucinate by adding information not in the documents? Did it misrepresent what the documents said? This verification catches failures before users see them.
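A very crude version of such a verification layer is sketched below: it flags response sentences whose words are mostly absent from every retrieved source. Lexical overlap is only a stand-in chosen to keep the example self-contained, and the 0.6 threshold is an assumption. A production check would more likely rely on a stronger faithfulness test, such as an entailment model.

```python
import re
from typing import List, Set

def _tokens(text: str) -> Set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def unsupported_sentences(
    response: str, sources: List[str], min_overlap: float = 0.6
) -> List[str]:
    """Return response sentences whose words are mostly absent from every source."""
    source_tokens = [_tokens(s) for s in sources]
    flagged = []
    # Split on whitespace that follows sentence-ending punctuation.
    for sentence in re.split(r"(?<=[.!?])\s+", response.strip()):
        words = _tokens(sentence)
        if not words:
            continue
        best = max(
            (len(words & st) / len(words) for st in source_tokens), default=0.0
        )
        if best < min_overlap:
            flagged.append(sentence)
    return flagged

sources = ["Refunds are issued within 5 business days of approval."]
response = (
    "Refunds are issued within 5 business days. "
    "Customers also receive a free upgrade."
)
flagged = unsupported_sentences(response, sources)
# flagged == ["Customers also receive a free upgrade."]
```

Anything flagged can be stripped, regenerated, or routed to a human before the user sees it.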

The RAGOps Imperative: You’re Building an Operations Problem, Not Just a Model Problem

The final gap most teams miss is treating RAG as a one-time deployment instead of an ongoing operations problem.

A RAG system deployed today has a shelf life. Documents change. Terminology evolves. New document types appear. User expectations shift. Your retrieval quality degrades without active monitoring and intervention.

This is where RAGOps enters: the practice of continuously evaluating, monitoring, and improving RAG systems in production. This includes:

Automated Evaluation. You can’t manually evaluate the quality of thousands of daily queries. You need automated evaluation metrics that answer: Are retrieved documents actually relevant? Are LLM responses faithful to the retrieved documents? Are there systematic failure patterns?

Monitoring & Alerting. Track retrieval quality, latency, and system health continuously. Alert when metrics degrade. Implement canary testing: route 5% of traffic through experimental retrieval strategies and measure whether they improve quality.

Iterative Improvement. Use production data to identify failure modes and fix them. Did queries about “compliance requirements” systematically fail? Adjust your indexing strategy specifically for that query pattern. Do reranking scores not correlate with user feedback? Retrain or swap reranking models.
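The 5% canary split mentioned under Monitoring & Alerting can be sketched with deterministic hashing, so a given user always lands in the same arm and quality metrics stay comparable over time. The salt string and the function names are assumptions made for this example.

```python
import hashlib

def in_canary(
    user_id: str, percent: float = 5.0, salt: str = "retrieval-exp-1"
) -> bool:
    """Deterministically assign about `percent` of users to the canary arm."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # bucket in 0..9999
    return bucket < percent * 100

def pick_strategy(user_id: str) -> str:
    return "experimental" if in_canary(user_id) else "baseline"
```

Changing the salt reshuffles the assignment for the next experiment, so the same users are not always the ones exposed to untested retrieval strategies.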

The organizations winning in production RAG treat it like a machine learning system—because it is one. You need data pipelines for continuous improvement. You need monitoring for production performance. You need rapid iteration cycles to address failures.

Successful systems implement feedback loops: when users find results unhelpful, capture that feedback, use it to identify retrieval failures, and automatically test potential fixes.

Most of your competitors aren’t doing this. They built a RAG system, deployed it, and now they’re wondering why quality degrades over time. The 27% that are winning have recognized that production RAG is a continuous engineering challenge that demands active operations, monitoring, and iteration.

The path to production-grade RAG success isn’t more sophisticated models or more complex architectures. It’s treating RAG as an engineering discipline with infrastructure, scale planning, observability, compliance requirements, and ongoing operations. Build those foundations correctly, and you’ll be in the small percentage of organizations whose RAG systems actually deliver sustained value.