Enterprise AI leaders are confronting an uncomfortable truth in early 2026: the same RAG architectures delivering breakthrough accuracy are simultaneously hemorrhaging budgets through inefficient API routing. While your team celebrates reduced hallucination rates and improved retrieval precision, finance departments are flagging AI infrastructure costs that have ballooned 15% year-over-year—and the culprit isn’t the models themselves.

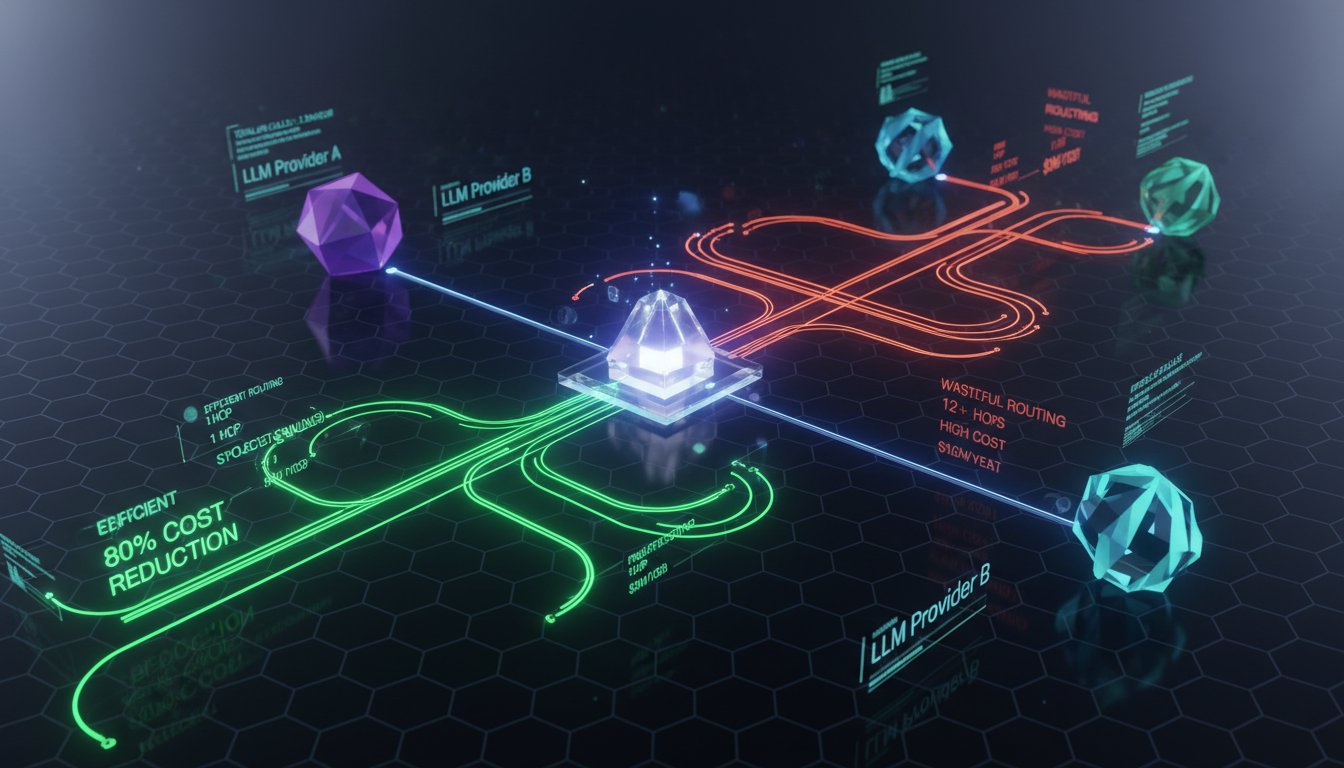

The problem lies in how most enterprise RAG systems connect to LLM providers. Static API configurations—where engineering teams hardcode connections to GPT-4, Claude, or Gemini at deployment—create hidden cost multipliers that compound with every query. As one API aggregation platform announced this week, enterprises are overpaying by up to 80% simply because their RAG architectures lack intelligent routing logic.

This isn’t just a procurement problem. It’s an architectural blindspot that’s transforming RAG from a cost-effective solution into a budget liability, especially as enterprises scale from pilot projects to production workloads processing millions of queries monthly. The March 1st announcement of platforms promising 80% savings isn’t hyperbole—it’s a market correction for a systemic inefficiency most RAG architects haven’t even diagnosed yet.

The Hidden Economics of Static RAG Routing

Most enterprise RAG implementations follow a deceptively simple architecture: retrieval component pulls relevant context, then forwards it to a predetermined LLM provider for generation. The LLM selection happens once—during system design—and remains fixed regardless of query complexity, provider pricing fluctuations, or model availability.

This static approach creates three compounding cost inefficiencies:

Provider Pricing Volatility Ignorance: LLM API pricing changes frequently. GPT-4 tokens cost differently than Claude 3 Opus, and both providers adjust pricing based on demand, new model releases, and competitive pressure. A RAG system locked to GPT-4 in January 2026 might be paying 40% more per query than an equivalent Claude 3.5 Sonnet call in March—but static routing can’t adapt.

Context Length Cost Multipliers: RAG queries with retrieved context often push token counts into the 4K-8K range per request. At $0.03 per 1K tokens (typical GPT-4 pricing), a system processing 100K queries daily with 6K average tokens burns $18,000 monthly on a single model. If 30% of those queries could route to a cheaper model without quality loss, that’s $5,400 in preventable monthly costs.

Task-Model Mismatch Waste: Not every RAG query requires frontier model capabilities. Simple factual retrievals, data extraction, or summarization tasks perform equivalently on mid-tier models costing 70% less. Static routing treats all queries identically, using expensive models for tasks that don’t justify the cost.

The financial impact scales brutally. An enterprise RAG system handling 10 million queries monthly with 5K average tokens at $0.03/1K costs $1.5 million annually. Intelligent routing reducing average cost-per-query by 50% saves $750,000—enough to fund three additional AI initiatives or absorb the 15% enterprise software spending increase reported across industries in 2026.

How API Aggregation Platforms Engineer Cost Intelligence

The emerging API aggregation category solves static routing inefficiencies by inserting an intelligent middleware layer between RAG systems and LLM providers. Rather than hardcoding model endpoints, enterprises route all requests through a single aggregation API that applies real-time decision logic.

Dynamic Model Selection Algorithms

Aggregation platforms analyze each incoming RAG query across multiple dimensions:

- Query complexity scoring: Natural language processing determines whether the query requires advanced reasoning (route to GPT-4/Claude Opus) or simple completion (route to GPT-3.5/Claude Haiku)

- Context length optimization: Queries with minimal retrieved context route to models with lower per-token costs

- Real-time pricing arbitrage: The platform maintains live pricing feeds from all providers, routing to the cheapest option meeting quality thresholds

- Performance-cost balancing: Machine learning models predict quality outcomes for each provider-model combination, optimizing for cost within acceptable accuracy bounds

Platforms like AI.cc’s One API have consolidated 300+ LLM models into unified endpoints, enabling granular routing decisions impossible with static configurations. A single RAG query might route through different models based on time-of-day pricing, provider API availability, or detected query intent.

Bulk Negotiation and Volume Discounting

Aggregation platforms pool API consumption across hundreds of enterprise customers, generating volume sufficient for bulk pricing negotiations. Where individual companies might process 10 million tokens monthly (insufficient for preferential rates), aggregators handle billions—unlocking tier pricing unavailable to direct API consumers.

This creates cost advantages even for large enterprises. A company consuming 100 million tokens monthly might negotiate 15% discounts directly with OpenAI. The same consumption routed through an aggregator benefiting from 10 billion token pooled volume could access 40% discounts, with the aggregator passing through 25-30% savings while retaining margin.

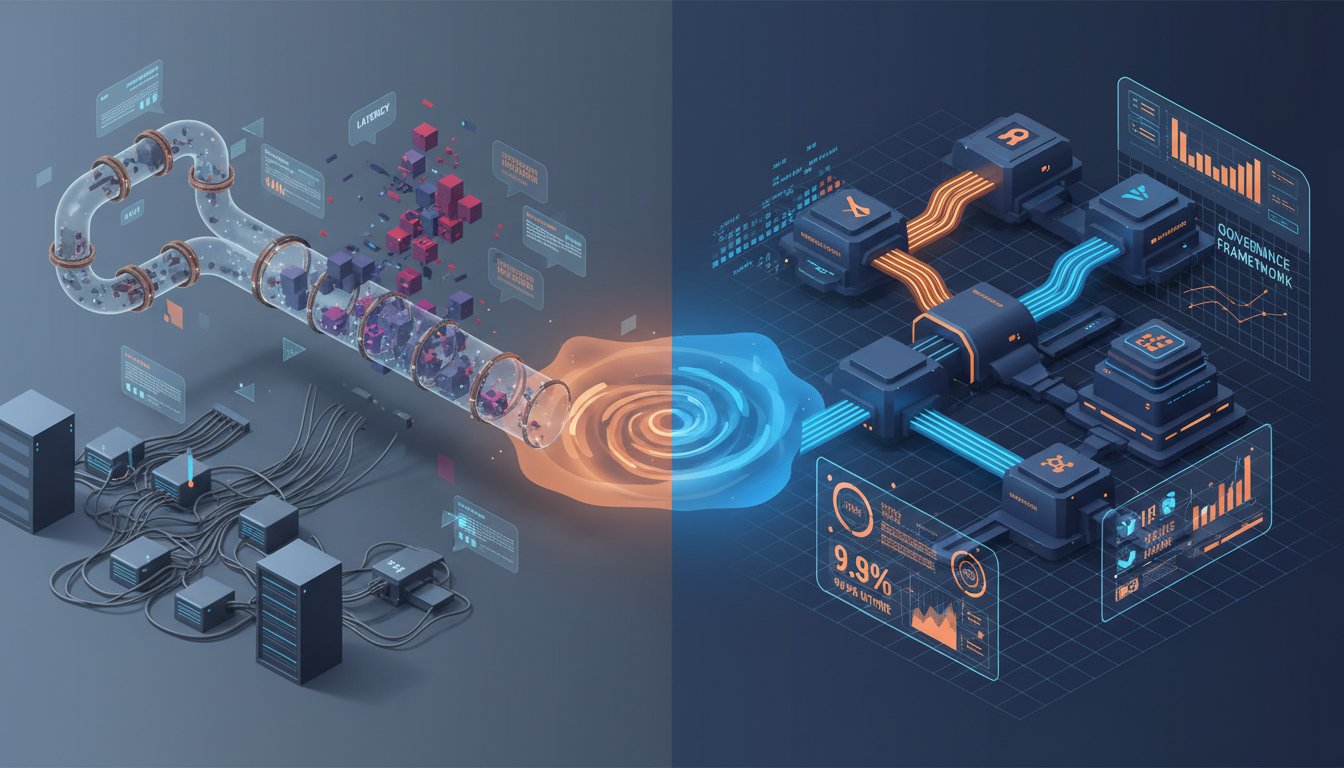

Latency-Cost Trade-Off Management

Intelligent routing must balance cost savings against latency penalties. Aggregation platforms introduce additional network hops—query travels from RAG system to aggregator to LLM provider, then reverses. Poorly designed routing adds 100-200ms per request, unacceptable for real-time applications.

Sophisticated platforms address this through:

- Geographic routing: Maintaining aggregation endpoints in multiple regions to minimize distance to both enterprise RAG systems and LLM provider APIs

- Predictive pre-routing: Analyzing query patterns to pre-establish connections with likely target models, reducing connection overhead

- Latency-aware fallbacks: If the cheapest provider exhibits high latency, automatically routing to slightly more expensive but faster alternatives

Bifrost by Maxim AI, recognized among the top AI gateways for 2026, implements sophisticated latency monitoring that dynamically adjusts routing when provider response times exceed SLA thresholds—ensuring cost optimization doesn’t degrade user experience.

The RAG Architecture Rethink: Implications for System Design

Adopting API aggregation requires more than swapping API endpoints. It fundamentally changes how enterprise RAG systems should be architected, introducing new considerations around observability, security, and failure modes.

Observability Becomes Multi-Dimensional

With static routing, RAG observability focuses on single-provider metrics: OpenAI API latency, error rates, token consumption. Aggregation introduces routing decisions as a critical observability dimension. Teams need visibility into:

- Routing decision rationale: Why did query X route to Claude instead of GPT-4?

- Cost attribution by routing rule: Which routing policies generate the most savings?

- Quality variance across routes: Are cheaper models producing lower-quality outputs for specific query types?

- Provider health correlation: How do routing decisions correlate with provider API stability?

Platforms like CloudZero offer integrated cost analytics that break down AI API expenses by routing decision, enabling enterprises to identify which cost optimization rules deliver ROI versus which degrade quality unacceptably.

Security and Compliance Complexity

Introducing aggregation middleware creates new security considerations. Enterprise data now flows through three tiers: RAG system → aggregation platform → LLM provider. Each hop represents potential exposure, especially for regulated industries handling sensitive data.

Key security requirements:

- Data residency controls: Ensuring routing decisions respect geographic restrictions (e.g., EU data must stay within GDPR-compliant providers)

- Encryption in transit: End-to-end encryption across all hops, not just RAG-to-aggregator

- Audit logging: Complete request tracing showing which queries routed where, essential for compliance investigations

- Provider certification verification: Automated checks that routed providers maintain required certifications (SOC2, HIPAA, etc.)

AI.cc’s One API addresses this with zero-data training commitments and dedicated server options, preventing aggregated data from being used for model improvement or exposing proprietary information across customer boundaries.

Failure Mode Diversification

Static routing creates single points of failure: if your chosen LLM provider experiences an outage, your entire RAG system fails. Aggregation distributes this risk across multiple providers, but introduces new failure modes:

- Routing logic failures: Bugs in model selection algorithms could route all queries to expensive models, eliminating cost benefits

- Aggregator platform outages: If the aggregation layer fails, no queries process regardless of provider availability

- Provider heterogeneity challenges: Different providers return responses in varying formats, requiring robust normalization logic

Enterprises implementing aggregation need comprehensive fallback strategies: local caching for common queries, direct provider connections as emergency backups, and circuit breakers that detect routing anomalies before they impact costs catastrophically.

Enterprise Adoption Realities: Integration Complexity and Vendor Risk

Despite clear cost benefits, API aggregation adoption faces practical barriers that slow enterprise implementation, particularly for RAG systems already in production.

Integration Tax for Legacy Systems

RAG architectures built before aggregation platforms emerged often embed provider-specific logic deep within application code. Retrieval components might format context specifically for GPT-4’s prompt structure, or post-processing might expect Claude’s particular response formatting. Switching to aggregation requires:

- Abstraction layer development: Building provider-agnostic interfaces that work regardless of routing destination

- Regression testing across models: Ensuring quality remains consistent when the same query routes to different providers

- Prompt engineering multiplication: Optimizing prompts for multiple potential target models, not just the original static choice

One enterprise case study noted 6-8 weeks of engineering effort migrating a production RAG system to aggregation, even with platform-provided SDKs. The migration window created risk: any bugs introduced could impact customer-facing applications.

The New Vendor Lock-In Paradox

API aggregation platforms promise to eliminate LLM provider lock-in by enabling seamless switching between OpenAI, Anthropic, Google, and others. However, they create a different dependency: lock-in to the aggregation platform itself.

Enterprises integrating deeply with an aggregator’s routing API, cost analytics, and proprietary optimization algorithms face migration costs if they later want to switch aggregators or return to direct provider relationships. The routing logic, observability integrations, and cost models become tightly coupled to the chosen platform.

Mitigation strategies include:

- Standardized routing interfaces: Using emerging industry standards for LLM routing rather than proprietary APIs

- Multi-aggregator strategies: Routing different RAG workloads through different platforms to maintain competitive pressure

- Escape hatches: Maintaining direct provider integrations as backup paths, accepting higher costs for reduced dependency risk

Cost Monitoring and Governance Challenges

Aggregation platforms shift cost responsibility from engineering teams (who selected the static provider) to automated algorithms (which select dynamically). This creates accountability gaps: when AI infrastructure costs spike unexpectedly, who owns the investigation?

Successful aggregation adoption requires new governance frameworks:

- Routing policy approval processes: Changes to cost-quality trade-off parameters shouldn’t deploy without review

- Cost anomaly alerting: Real-time detection when routing decisions generate unexpected expenses

- Quality gates: Automated checks that prevent cost optimizations from degrading RAG output below acceptable thresholds

- Regular audits: Quarterly reviews of routing effectiveness, comparing actual savings to projections

The 15% increase in enterprise software spending reported in early 2026 includes many cases where aggregation platforms were adopted without corresponding governance, leading to cost optimization algorithms making poor trade-offs that executives later had to reverse manually.

The 2026 Inflection Point: Why Aggregation Becomes Mandatory

Three converging trends make 2026 the year API aggregation transitions from optional optimization to required infrastructure for enterprise RAG systems.

Model Proliferation Accelerates Choice Paralysis

OpenAI, Anthropic, Google, Meta, Mistral, Cohere, and dozens of others now release new models quarterly. Each release changes the performance-cost calculation for RAG applications. GPT-4 might be optimal for complex queries in January, but Claude 3.7 released in March could deliver equivalent quality at 60% of the cost.

Static routing requires manual reevaluation after every model release: engineering teams must benchmark new options, update configurations, and redeploy. This cycle consumes weeks per iteration and lags market opportunities. Companies locked to GPT-4 might overpay for months before completing migration to a cheaper alternative.

Aggregation platforms automate this continuous optimization. When Anthropic releases Claude 3.7, the platform benchmarks it against existing routing rules, identifies queries that could route to the new model cost-effectively, and adjusts algorithms—often within days of release, capturing savings immediately.

AI Cost Scrutiny Intensifies with Scale

RAG systems that processed 10,000 queries monthly in 2024 now handle 10 million as enterprises scale from pilots to production. This 1,000x growth transforms AI from experimental line item to material budget category, attracting CFO-level scrutiny.

Finance teams applying traditional IT cost management expect utilization optimization, volume discounting, and competitive bidding—all impossible with static provider relationships. Aggregation platforms provide the cost transparency and optimization analytics finance departments demand, translating technical routing decisions into financial outcomes leadership understands.

The shift is evident in enterprise software spending patterns: the 15% increase in AI-driven software purchases correlates with growing expectations that AI infrastructure demonstrates ROI as rigorously as traditional IT investments.

Regulatory Compliance Demands Provider Diversity

Emerging AI regulations in the EU, US states, and other jurisdictions increasingly require enterprises to demonstrate they’re not overly dependent on single AI providers, particularly for critical applications. The EU AI Act’s transparency requirements and risk classifications create compliance advantages for systems that can route across multiple providers, showing regulators that no single vendor controls critical decision-making.

API aggregation platforms naturally generate compliance artifacts: audit logs showing queries distributed across providers, routing policies demonstrating non-discrimination, and fallback mechanisms proving resilience. These capabilities address regulatory requirements while delivering cost benefits, making aggregation a rare investment that satisfies both compliance and finance stakeholders.

Strategic Implementation: From Pilot to Production

Enterprises convinced of aggregation’s value still face tactical questions: which RAG workloads to migrate first, how to measure success, and what metrics justify broader rollout.

Workload Prioritization Framework

Not all RAG systems benefit equally from aggregation. Ideal initial candidates share these characteristics:

High Query Volume: Systems processing 1M+ queries monthly generate sufficient scale for meaningful savings. Lower-volume applications might not justify integration effort.

Cost-Sensitive Use Cases: Internal productivity tools where quality requirements are less stringent than customer-facing applications, allowing more aggressive cost-quality trade-offs.

Query Diversity: RAG systems handling varied query types (simple retrievals, complex reasoning, summarization) benefit most from dynamic routing. Single-purpose systems with homogeneous queries see limited gains.

Non-Latency-Critical: Applications where 50-100ms routing overhead doesn’t impact user experience, avoiding the need for premium low-latency aggregation tiers.

One enterprise began with an internal knowledge base RAG system processing 2M queries monthly with 6K average tokens. Static GPT-4 routing cost $360K annually. Migration to aggregation with 60% query routing to cheaper models reduced costs to $160K annually—$200K savings with minimal quality impact on an internal tool.

Success Metrics Beyond Cost

While cost reduction drives aggregation adoption, comprehensive measurement includes:

- Cost per quality-adjusted query: Factoring output quality into cost calculations prevents gaming metrics by routing everything to cheap, low-quality models

- Routing decision latency: Ensuring aggregation overhead stays within acceptable bounds

- Provider diversity index: Measuring how effectively the system distributes across multiple providers, reducing concentration risk

- Optimization velocity: Time from new model release to routing integration, showing how quickly the system captures market opportunities

Successful pilots demonstrate 40-60% cost reductions while maintaining quality metrics within 5% of static routing baselines, with routing decisions adding less than 75ms latency.

Scaling from Pilot to Enterprise Standard

After successful pilots, enterprises face the question of whether to mandate aggregation for all new RAG deployments. Leading organizations are establishing architecture standards that:

- Require aggregation for all RAG systems exceeding 500K monthly queries: Below this threshold, integration costs may exceed savings

- Provide reference architectures: Pre-built templates showing aggregation integration with common RAG frameworks

- Centralize platform relationships: Single enterprise agreement with chosen aggregator, simplifying procurement and governance

- Build internal expertise: Dedicated teams managing routing policies, cost analytics, and continuous optimization across all RAG workloads

The goal is making aggregation the default path, with static routing requiring special justification—inverting the current norm where aggregation is the exception.

The Path Forward: Architectural Principles for Cost-Intelligent RAG

The API aggregation trend reveals a broader principle: enterprise RAG systems must architect for cost optimization from inception, not retrofit it after deployment. Five architectural principles emerge:

Provider Abstraction from Day One: Design RAG systems with provider-agnostic interfaces, even if initially deploying with static routing. This future-proofs for aggregation migration.

Cost as a First-Class Metric: Instrument RAG systems to track cost-per-query alongside latency and quality, making financial impact visible in real-time operational dashboards.

Quality Thresholds, Not Maximization: Define minimum acceptable quality levels rather than always optimizing for maximum quality, creating cost-optimization headroom.

Routing Logic as Configuration: Treat model selection as externalized configuration, not hardcoded logic, enabling experimentation and rapid adaptation to market changes.

Multi-Provider Strategy: Even without aggregation, maintain relationships and integration paths with multiple LLM providers, avoiding dependency that limits negotiation leverage.

These principles position RAG architectures to capitalize on aggregation platforms’ benefits while maintaining optionality if platforms fail to deliver promised savings.

Conclusion: The Cost Optimization Imperative

The 80% cost reduction promised by API aggregation platforms isn’t just marketing—it’s a mathematical inevitability when static routing meets dynamic pricing and model proliferation. As enterprises scale RAG systems from pilots processing thousands of queries to production deployments handling millions, inefficient API routing transforms from minor waste to material budget drain.

The March 2026 announcements around aggregation platforms mark an inflection point where cost optimization transitions from optional to mandatory. Finance teams experiencing 15% year-over-year increases in AI software spending are demanding the same utilization efficiency and competitive sourcing applied to traditional IT infrastructure. RAG architects who dismiss aggregation as premature optimization will find themselves defending cost structures that overpay by 50-80% compared to competitors who embrace intelligent routing.

The question isn’t whether your enterprise RAG systems should adopt API aggregation—it’s whether you’ll lead the migration proactively or be forced to react when finance flags AI infrastructure as a cost control priority. The technical integration challenges are real, the vendor risk considerations are valid, but the financial math is irrefutable: static routing is an architectural luxury enterprise AI can no longer afford.

For organizations running production RAG systems today, the immediate action is assessing your current cost structure. Calculate your monthly LLM API spend, analyze query diversity, and model potential savings from dynamic routing. If the numbers show six-figure annual savings potential—and for most enterprise RAG deployments at scale, they will—initiating an aggregation platform evaluation becomes a fiscal responsibility, not just a technical optimization.

The RAG systems that define enterprise AI success in 2026 and beyond won’t just be the most accurate or fastest—they’ll be the ones architected to deliver quality sustainably, at costs finance teams can justify. API aggregation platforms provide the infrastructure to make that vision achievable, but only for organizations willing to rethink their routing architectures before market pressure forces their hand.