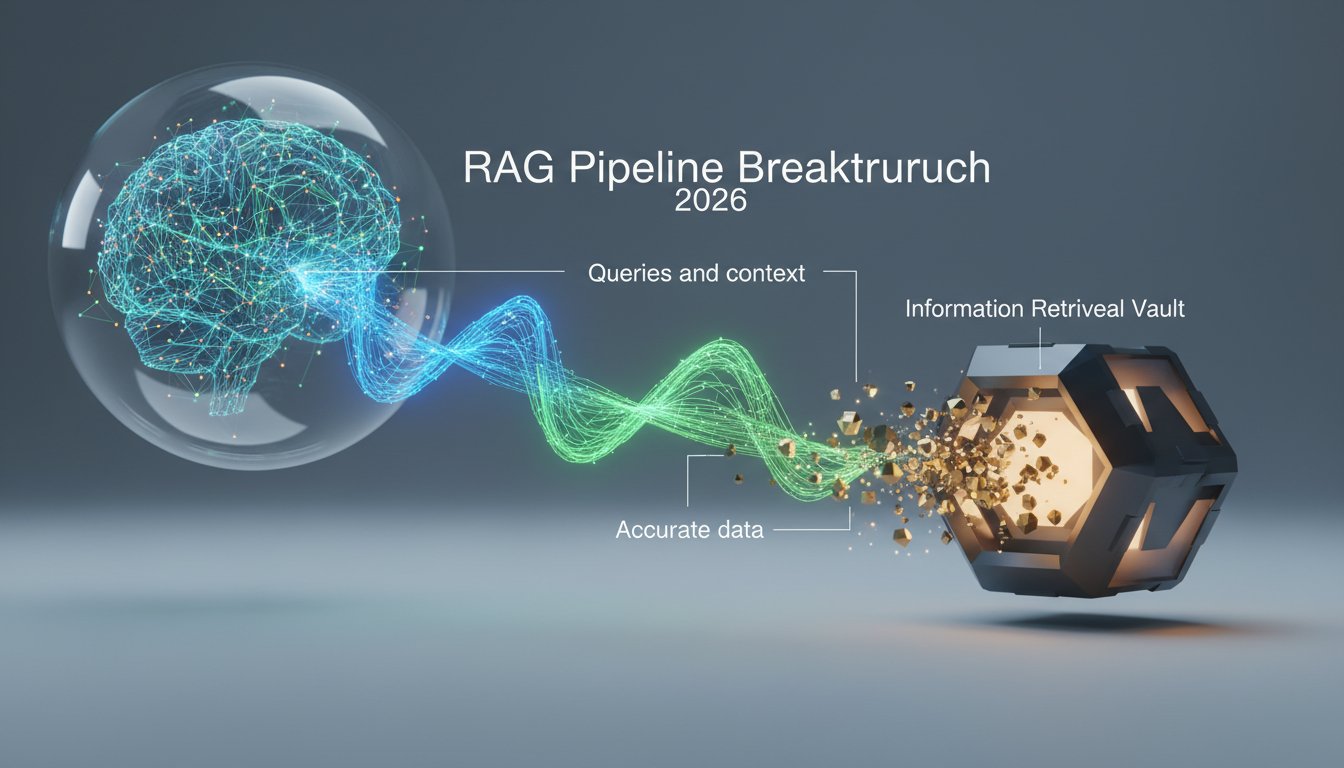

Imagine asking an AI a question and getting an answer that’s not just plausible-sounding, but actually accurate, sourced, and up to date. That’s the promise of Retrieval-Augmented Generation (RAG), and in early 2026, that promise is starting to look a lot more real.

For years, one of the biggest knocks on large language models was their tendency to hallucinate. They’d sound confident while being completely wrong. RAG was proposed as a fix: instead of relying purely on what a model memorized during training, you give it the ability to pull in real information at query time. Think of it like the difference between asking someone to recall a fact from memory versus letting them look it up first.

But RAG has had its own growing pains. Early implementations were clunky. Retrieval quality was inconsistent. Latency was a problem. And getting the retrieved content to actually integrate well with generated output? That took real engineering effort.

Something shifted in March 2026. A cluster of new research, product releases, and real-world deployments started showing what mature RAG systems actually look like. This post breaks down the key breakthroughs, what they mean in practice, and why they matter if you’re building with or evaluating AI systems today.

Retrieval Quality Finally Got Serious

The weakest link in most RAG pipelines has always been the retrieval step. If you pull the wrong chunks of text, the model has nothing useful to work with, and the output suffers regardless of how good the generator is.

Dense Retrieval Gets a Rethink

Traditional dense retrieval relied on embedding similarity, which works well when the query and the relevant document use similar language. But real-world queries are messy. Users phrase things differently than documents do, and synonyms, abbreviations, and domain jargon all create gaps.

Recent work has focused on training retrieval models that are more robust to this kind of mismatch. New fine-tuning approaches, particularly those using synthetic query generation to expand training data, have pushed retrieval accuracy up significantly on standard benchmarks. Some teams are reporting 15-20% improvements in top-k recall compared to baseline dense retrievers from just 18 months ago.
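To make “top-k recall” concrete: for each query, recall@k is the fraction of that query’s relevant documents that show up among the first k retrieved results, averaged over all queries. The sketch below is a minimal illustration; the function names and toy document IDs are hypothetical and not taken from any particular benchmark.

```python
from typing import Sequence, Set, Tuple


def recall_at_k(retrieved: Sequence[str], relevant: Set[str], k: int) -> float:
    """Fraction of the relevant document IDs that appear in the top-k results."""
    if not relevant:
        raise ValueError("recall is undefined when no documents are relevant")
    top_k = set(retrieved[:k])
    return len(top_k & relevant) / len(relevant)


def mean_recall_at_k(runs: Sequence[Tuple[Sequence[str], Set[str]]], k: int) -> float:
    """Average recall@k over (retrieved, relevant) pairs, one pair per query."""
    return sum(recall_at_k(retrieved, relevant, k) for retrieved, relevant in runs) / len(runs)


if __name__ == "__main__":
    runs = [
        (["d3", "d1", "d7", "d2"], {"d1", "d2"}),  # d1 is in the top 3, d2 only at rank 4
        (["d5", "d4", "d9", "d8"], {"d4"}),        # d4 is at rank 2
    ]
    print(mean_recall_at_k(runs, k=3))  # (0.5 + 1.0) / 2 = 0.75
```

Some evaluations report a looser “hit rate” instead (1 if any relevant document is in the top k, else 0), so it’s worth checking which definition a reported improvement uses.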

Hybrid Search Becomes the Default

One of the clearest trends in March 2026 is that hybrid search, combining dense vector retrieval with traditional keyword-based methods like BM25, has stopped being an advanced technique and started being the expected baseline.

The reason is simple: keyword search catches exact matches that embedding models sometimes miss, while dense retrieval handles semantic similarity that keyword search can’t touch. Together, they cover more ground. Most production RAG systems worth deploying now use some form of hybrid retrieval, often with learned rerankers sitting on top to sort results before they hit the generator.
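The post doesn’t say how production systems merge the keyword and vector result lists, but one widely used option is reciprocal rank fusion (RRF): each document scores 1 / (k + rank) in every list it appears in, and the scores are summed. The sketch below assumes both retrievers return ranked lists of document IDs; the IDs are made up, and k = 60 is simply a commonly used default.

```python
from collections import defaultdict
from typing import Dict, List, Sequence


def reciprocal_rank_fusion(rankings: Sequence[Sequence[str]], k: int = 60) -> List[str]:
    """Merge several ranked lists of document IDs into one fused ranking.

    Each document earns 1 / (k + rank) from every list it appears in
    (rank is 1-based); documents are then sorted by total score.
    """
    scores: Dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest score first; ties broken by document ID so the output is deterministic.
    return sorted(scores, key=lambda d: (-scores[d], d))


if __name__ == "__main__":
    bm25_hits = ["policy-7", "faq-2", "policy-3"]   # keyword (BM25) results
    dense_hits = ["faq-2", "guide-9", "policy-7"]   # embedding-similarity results
    print(reciprocal_rank_fusion([bm25_hits, dense_hits]))
    # ['faq-2', 'policy-7', 'guide-9', 'policy-3']
```

Because RRF looks only at ranks, it sidesteps the fact that BM25 scores and cosine similarities live on different scales. A learned reranker would then reorder the top of the fused list before it reaches the generator.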

Generation Quality: Closing the Gap Between Retrieved and Generated

Even with good retrieval, getting the model to actually use what it retrieved, rather than defaulting to its parametric memory, has been a persistent challenge.

Context Utilization Training

A growing body of research is specifically targeting this problem. Models are being fine-tuned not just on general tasks, but on learning to prioritize retrieved context over internal knowledge when the two conflict. This sounds straightforward, but it requires careful dataset construction and training signal design.

Early results are promising. Models trained with explicit context-faithfulness objectives show measurably lower hallucination rates on knowledge-intensive tasks, even when the retrieved content is imperfect or only partially relevant.

Long-Context RAG

Another shift worth noting: as context windows have grown, some teams are experimenting with passing much larger retrieved sets to the model rather than aggressively filtering down to a handful of chunks. This trades precision in retrieval for coverage, betting that the model can sort out what’s relevant internally.

It doesn’t always work. Long-context models still struggle with the “lost in the middle” problem, where information buried in the center of a long context gets underweighted. But for certain use cases, particularly those where missing a relevant piece of information is more costly than processing extra tokens, the tradeoff makes sense.
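One mitigation some teams apply to the lost-in-the-middle problem (the post itself doesn’t prescribe it) is to reorder retrieved chunks so the strongest matches sit at the start and end of the context and the weakest land in the middle. A minimal sketch, assuming the input list is already sorted from most to least relevant:

```python
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def edge_first_order(ranked: Sequence[T]) -> List[T]:
    """Place the best-ranked items at both ends and the weakest in the middle.

    `ranked` is assumed to be sorted from most to least relevant. Items
    alternate between the front and the back of the output, so rank 1
    lands first, rank 2 lands last, rank 3 second, and so on.
    """
    front: List[T] = []
    back: List[T] = []
    for i, item in enumerate(ranked):
        if i % 2 == 0:
            front.append(item)
        else:
            back.append(item)
    # `back` was filled best-first, so reverse it to finish on rank 2.
    return front + back[::-1]


if __name__ == "__main__":
    print(edge_first_order(["r1", "r2", "r3", "r4", "r5"]))
    # ['r1', 'r3', 'r5', 'r4', 'r2']
```

This doesn’t remove the underlying weakness; it only makes sure that whatever gets underweighted is the least relevant material.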

Evaluation: We’re Finally Measuring the Right Things

For a long time, RAG evaluation was a mess. Teams would report ROUGE scores or perplexity numbers that didn’t actually tell you whether the system was useful. That’s changing.

Task-Specific Benchmarks

New benchmarks released in early 2026 are designed around realistic use cases rather than academic proxies. They test things like: does the system correctly refuse to answer when the retrieved context doesn’t support an answer? Does it accurately attribute claims to sources? Does it handle conflicting information across retrieved documents without just picking one arbitrarily?
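The first of those checks, whether the system declines when the context doesn’t support an answer, can be scored once each test case is labeled answerable or not. The harness below is a sketch: the `EvalCase` fields and the fixed refusal phrase are hypothetical conventions, and real benchmarks typically detect refusals with a classifier or judge model rather than string matching.

```python
import math
from dataclasses import dataclass
from typing import Dict, Sequence

# Hypothetical convention: the system signals abstention with this phrase.
REFUSAL_MARKER = "i don't know"


@dataclass
class EvalCase:
    answerable: bool    # does the retrieved context actually support an answer?
    system_output: str  # what the RAG system returned for this case


def is_refusal(text: str) -> bool:
    return REFUSAL_MARKER in text.lower()


def _rate(hits: int, total: int) -> float:
    # NaN rather than 0.0 when a bucket is empty, so it isn't mistaken for a real score.
    return hits / total if total else math.nan


def refusal_metrics(cases: Sequence[EvalCase]) -> Dict[str, float]:
    """Score abstention on unanswerable cases and over-abstention on answerable ones."""
    unanswerable = [c for c in cases if not c.answerable]
    answerable = [c for c in cases if c.answerable]
    return {
        # Share of unsupported questions the system correctly declined (higher is better).
        "refusal_recall": _rate(sum(is_refusal(c.system_output) for c in unanswerable), len(unanswerable)),
        # Share of supported questions the system wrongly declined (lower is better).
        "over_refusal_rate": _rate(sum(is_refusal(c.system_output) for c in answerable), len(answerable)),
    }


if __name__ == "__main__":
    cases = [
        EvalCase(False, "I don't know based on the provided documents."),
        EvalCase(False, "The fee is $40."),         # unsupported answer: a miss
        EvalCase(True, "The fee is $25 [doc 3]."),
        EvalCase(True, "I don't know."),            # needless refusal
    ]
    print(refusal_metrics(cases))  # {'refusal_recall': 0.5, 'over_refusal_rate': 0.5}
```

Tracking both numbers matters: a system that refuses everything scores perfectly on the first and terribly on the second.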

These are harder to score automatically, which is part of why they took longer to develop. But they give a much clearer picture of whether a RAG system is actually ready for deployment.

LLM-as-Judge Matures

Using a separate language model to evaluate RAG outputs has become more common and, importantly, more reliable. Better prompting strategies, calibration against human judgments, and purpose-built evaluation models have all contributed. It’s not perfect, but it’s now a credible part of the evaluation toolkit rather than a shortcut people feel vaguely guilty about using.
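Calibrating a judge against human judgments usually starts with measuring how often the two agree on the same outputs. Raw agreement looks inflated when one label dominates, so a chance-corrected statistic such as Cohen’s kappa is a common choice. A minimal sketch, assuming both the judge model and the human raters assign one categorical label (here pass/fail) per output; the labels are invented for illustration.

```python
from collections import Counter
from typing import Sequence


def cohens_kappa(judge: Sequence[str], human: Sequence[str]) -> float:
    """Chance-corrected agreement between two raters labeling the same items."""
    if len(judge) != len(human) or not judge:
        raise ValueError("need two equal-length, non-empty label sequences")
    n = len(judge)
    observed = sum(j == h for j, h in zip(judge, human)) / n
    judge_counts, human_counts = Counter(judge), Counter(human)
    # Agreement expected if both raters labeled independently at their own base rates.
    expected = sum(judge_counts[label] * human_counts[label] for label in judge_counts) / n**2
    if expected == 1.0:
        return 1.0  # both raters used one identical label throughout; kappa is degenerate
    return (observed - expected) / (1 - expected)


if __name__ == "__main__":
    judge = ["pass", "pass", "fail", "pass", "fail", "pass"]
    human = ["pass", "fail", "fail", "pass", "fail", "pass"]
    # Observed agreement 5/6, chance agreement 0.5, so kappa = (5/6 - 0.5) / 0.5 = 2/3.
    print(round(cohens_kappa(judge, human), 3))  # 0.667
```

A judge with high raw agreement but low kappa is mostly echoing the majority label, which is exactly the failure mode calibration is meant to catch.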

Real-World Deployments: What’s Actually Working

Benchmarks are one thing. Production is another. Some of the most useful signals from March 2026 come from teams sharing what’s actually working at scale.

Enterprise Knowledge Bases

RAG has found a strong foothold in enterprise settings where companies need AI systems that can answer questions grounded in internal documentation, policies, and data. The appeal is obvious: you get the fluency of a large language model without having to retrain it every time your documentation changes.

The practical challenges are real, though. Document quality matters enormously. Chunking strategy (how you split documents before indexing) has a bigger impact on output quality than most people expect going in. And access control (making sure the system only retrieves documents a given user is allowed to see) adds meaningful engineering complexity.
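Two of those challenges are easy to sketch. Below, documents are split into fixed-size, overlapping word windows (only one chunking strategy among many; sentence- or heading-aware splitting often works better), and each chunk inherits its source document’s access list so retrieved results can be filtered per user. The `Chunk` type, the `allowed_groups` field, and the default window sizes are hypothetical, not any specific product’s schema.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List


@dataclass(frozen=True)
class Chunk:
    doc_id: str
    text: str
    allowed_groups: FrozenSet[str]  # copied from the source document's access list


def chunk_document(doc_id: str, text: str, allowed_groups: Iterable[str],
                   size: int = 200, overlap: int = 40) -> List[Chunk]:
    """Split text into windows of `size` words, each sharing `overlap` words with the previous one."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    words = text.split()
    if not words:
        return []
    groups = frozenset(allowed_groups)
    step = size - overlap
    # Stop once a window would contain only words the previous window already covered.
    return [
        Chunk(doc_id, " ".join(words[start:start + size]), groups)
        for start in range(0, max(len(words) - overlap, 1), step)
    ]


def filter_for_user(results: Iterable[Chunk], user_groups: Iterable[str]) -> List[Chunk]:
    """Keep only retrieved chunks that share at least one group with the user."""
    groups = set(user_groups)
    return [c for c in results if c.allowed_groups & groups]


if __name__ == "__main__":
    chunks = chunk_document("hr-policy", "one two three four five six seven eight nine ten",
                            {"hr"}, size=4, overlap=1)
    print([c.text for c in chunks])
    # ['one two three four', 'four five six seven', 'seven eight nine ten']
    print(filter_for_user(chunks, {"engineering"}))  # []
```

Filtering after retrieval is the simplest version but can leave fewer than k usable results; many systems push the permission check into the retrieval query itself as a metadata filter so unauthorized chunks never compete for the top-k slots.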

Teams that have gotten this right report strong user satisfaction scores and measurable reductions in time spent searching for information internally.

Customer-Facing Applications

Deploying RAG in customer-facing contexts raises the stakes. Errors are more visible, and the cost of a confident wrong answer is higher. The teams doing this well tend to invest heavily in retrieval quality, build in explicit uncertainty signals so the system can say “I’m not sure” when appropriate, and maintain human review loops for edge cases.
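One simple form of explicit uncertainty signal is to abstain when the retrieved evidence is weak. The sketch below gates generation on per-chunk relevance scores (for example, reranker outputs); the threshold, the `generate` callable, and the fallback message are hypothetical placeholders that would need tuning against labeled data, and score gating is only one of several possible approaches.

```python
from typing import Callable, List, Sequence, Tuple

FALLBACK = "I'm not sure. Let me connect you with a support agent."


def answer_or_abstain(
    query: str,
    scored_chunks: Sequence[Tuple[str, float]],
    generate: Callable[[str, List[str]], str],
    min_score: float = 0.5,
    min_supporting: int = 1,
) -> Tuple[str, bool]:
    """Return (answer, abstained).

    Only chunks whose relevance score meets `min_score` count as evidence;
    if fewer than `min_supporting` qualify, the system abstains instead of
    calling the generator.
    """
    evidence = [text for text, score in scored_chunks if score >= min_score]
    if len(evidence) < min_supporting:
        return FALLBACK, True  # caller can route this case to human review
    return generate(query, evidence), False


if __name__ == "__main__":
    def fake_generate(query: str, evidence: List[str]) -> str:  # stand-in for a real LLM call
        return f"Answer based on {len(evidence)} passage(s)."

    print(answer_or_abstain("refund window?", [("Refunds within 30 days.", 0.82)], fake_generate))
    # ('Answer based on 1 passage(s).', False)
    print(answer_or_abstain("refund window?", [("Shipping rates vary.", 0.21)], fake_generate))
    # ("I'm not sure. Let me connect you with a support agent.", True)
```

Returning the abstention flag alongside the text makes it straightforward to log how often the system declines and to feed those cases into the human review loop.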

It’s not a plug-and-play situation. But the teams that treat it as a real engineering problem rather than a demo are seeing it work.

What This Means If You’re Building or Evaluating AI Systems

The RAG breakthroughs of early 2026 aren’t a single dramatic leap. They’re more of a maturation: a field that was promising but rough around the edges is becoming significantly more polished on several fronts at once.

If you’re evaluating AI vendors or platforms, retrieval quality and context faithfulness should be near the top of your checklist. Ask how they handle retrieval, whether they use hybrid approaches, and how they measure hallucination rates on knowledge-intensive tasks.

If you’re building, the good news is that the tooling has improved substantially. Open-source retrieval libraries, better embedding models, and more mature orchestration frameworks mean you’re not starting from scratch. The bad news is that the details still matter a lot. Chunking, reranking, and evaluation are still far from fully automated.

The field is moving fast. What looked like a hard problem 18 months ago is becoming standard practice. And the problems that remain hard today, such as reliable multi-hop reasoning across retrieved documents and handling truly adversarial or contradictory sources, are getting serious research attention.

Where RAG Goes From Here

The trajectory is clear: RAG is becoming a foundational layer in how AI systems access and use information, not a niche technique for specialized applications. The breakthroughs in March 2026 are significant not because any single one is revolutionary, but because they represent a field reaching a level of maturity where real, reliable deployment is increasingly achievable.

For anyone working in AI, whether you’re building systems, evaluating vendors, or just trying to understand where the technology is headed, RAG deserves your attention. The gap between what these systems could theoretically do and what they actually do in practice is closing faster than most people expected.
