Something significant is happening in enterprise AI right now. Companies that once treated retrieval-augmented generation as an experimental side project are now betting their data strategies on it. And the tools driving that shift, particularly LlamaIndex and Google’s Gemini, are evolving fast enough that what was true six months ago barely applies today.

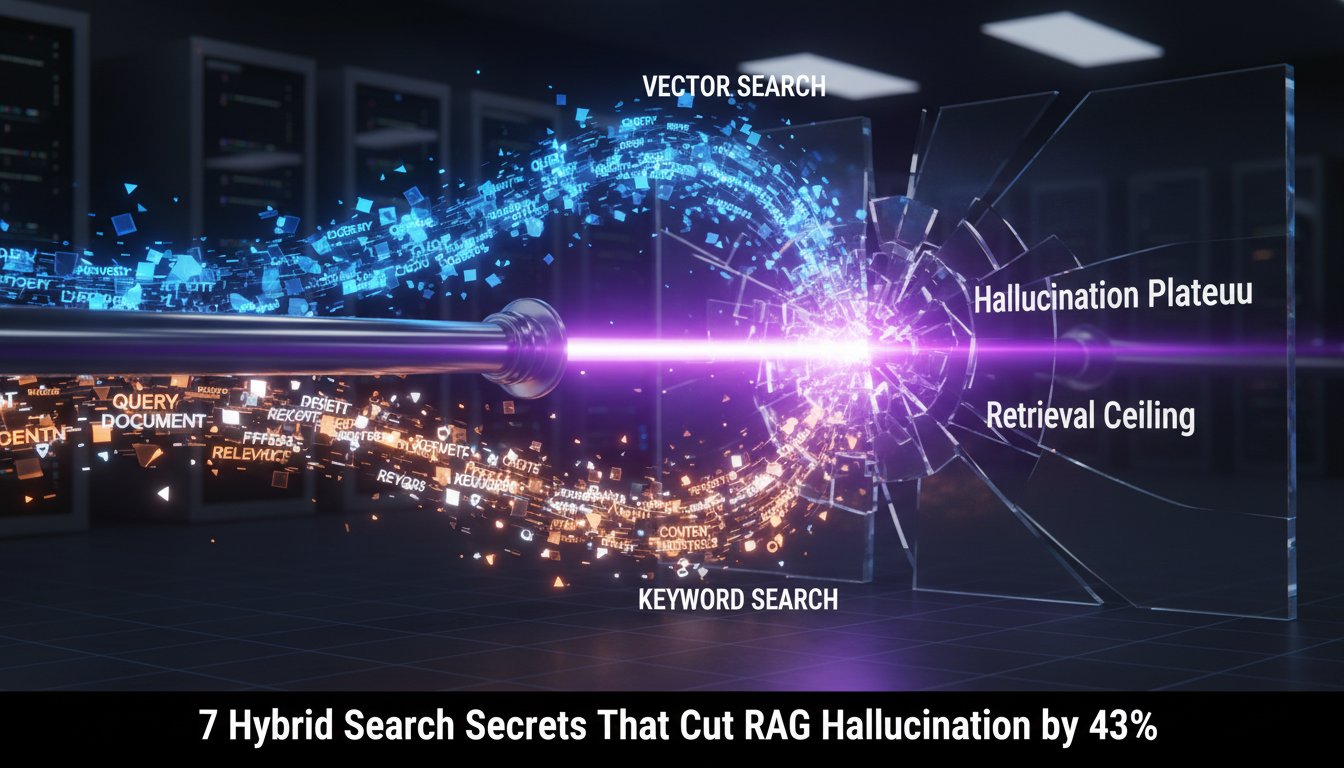

If you’ve been watching the RAG space, you already know the basic promise: instead of relying solely on a model’s training data, RAG systems pull in fresh, relevant information at query time. That means more accurate answers, fewer hallucinations, and outputs that actually reflect what’s happening in your business right now, not what was true when the model was trained.

But here’s where it gets interesting. The enterprise version of this story is a lot more complex than the demos suggest. You’re not just connecting a model to a database. You’re dealing with access controls, latency requirements, data freshness, cost at scale, and the very real challenge of making all of this work reliably across teams that have wildly different technical backgrounds.

That’s the gap April 2026 is starting to close. The latest developments in RAG infrastructure, particularly around vector databases and multimodal retrieval, are addressing the messy middle ground between proof-of-concept and production. Tools are getting more opinionated about enterprise needs. Integrations are getting tighter. And the conversation is shifting from “can we build this?” to “how do we run this well?”

This post breaks down what’s actually moving in the RAG space right now, what LlamaIndex and Gemini are doing that matters for enterprise teams, and what you should be paying attention to if you’re building or evaluating RAG systems in 2026.

LlamaIndex and the Vector Database Evolution

From Prototype to Production Infrastructure

LlamaIndex has quietly become one of the most important pieces of the enterprise RAG stack. What started as a data framework for connecting language models to external sources has grown into something closer to a full orchestration layer. The April 2026 updates reflect that maturity.

The core shift is in how LlamaIndex handles vector storage at scale. Earlier versions were great for getting something working quickly, but enterprise teams kept running into the same wall: performance degraded as data volumes grew, and managing multiple indexes across different data sources became a real operational headache.

The latest releases address this directly. Improved indexing strategies mean retrieval stays fast even as your corpus grows into the hundreds of millions of documents. And the expanded connector ecosystem means you’re not hand-rolling integrations for every internal data source your team relies on.

Structured and Unstructured Data, Together

One of the more practical improvements is better handling of mixed data types. Most enterprise knowledge isn’t neatly structured. You’ve got PDFs, Slack threads, database exports, code repositories, and internal wikis all sitting in the same retrieval pipeline.

LlamaIndex’s updated query engines are getting better at treating these as a unified source rather than forcing you to build separate retrieval paths for each format. That’s a meaningful reduction in complexity for teams trying to build systems that actually cover their organization’s knowledge base.

Gemini’s Role in Multimodal Enterprise RAG

Beyond Text-Only Retrieval

Google’s Gemini models are pushing enterprise RAG into territory that text-only systems simply can’t reach. The multimodal capabilities, specifically the ability to reason across text, images, and structured data simultaneously, open up use cases that weren’t practical before.

Think about what that means for industries like manufacturing, healthcare, or financial services. A RAG system that can pull a technical diagram, cross-reference it with a maintenance log, and surface a relevant procedure from a PDF is genuinely useful in ways that a text-only system isn’t. Gemini’s integration with enterprise RAG pipelines is making that kind of retrieval more accessible.

Context Windows and Retrieval Strategy

Gemini’s large context windows also change how you think about retrieval strategy. When a model can handle significantly more context per query, you have more flexibility in what you retrieve and how you chunk your documents. You’re less constrained by the need to be surgical about what goes into the prompt.

That said, bigger context windows don’t eliminate the need for good retrieval. They change the tradeoffs. Enterprise teams are finding that the combination of precise vector retrieval with Gemini’s ability to reason over longer contexts produces better results than either approach alone.

What’s Actually Changing for Enterprise Teams

The Reliability Question

The biggest shift in enterprise RAG conversations right now isn’t about capabilities. It’s about reliability. Can you build a RAG system that performs consistently across different query types, different users, and different data states? That’s the question driving most of the tooling improvements in April 2026.

Evaluation frameworks are getting more sophisticated. Teams are moving beyond simple accuracy metrics and building pipelines that test for retrieval quality, answer faithfulness, and response consistency over time. This is unglamorous work, but it’s what separates systems that impress in demos from systems that hold up in production.

Cost and Latency at Scale

Enterprise RAG at scale is expensive if you’re not careful. Every query involves embedding generation, vector search, and model inference. Multiply that across thousands of users and the costs add up fast.

The tooling improvements in this cycle are paying attention to this. Better caching strategies, smarter retrieval that avoids unnecessary embedding calls, and more efficient indexing all contribute to making RAG economically viable at the scale enterprise teams actually need. It’s not the most exciting part of the story, but it’s one of the most important.

Security and Access Control

This one doesn’t get enough attention in the general RAG discourse. Enterprise data isn’t public. Different users should see different information, and a RAG system that ignores access controls is a liability, not an asset.

The April 2026 developments include better tooling for building access-aware retrieval pipelines. That means the system can respect the same permissions that govern your underlying data sources, so a query from a junior analyst doesn’t surface documents that should only be visible to the finance team. Getting this right is a prerequisite for enterprise adoption, and the ecosystem is finally treating it that way.

Where This Is All Heading

Agentic RAG Is Getting Real

One of the more significant trends emerging in early 2026 is the move toward agentic RAG systems. Instead of a single retrieve-then-generate cycle, these systems can plan multi-step retrieval strategies, decide when to search again based on what they find, and synthesize information across multiple sources before producing an answer.

LlamaIndex’s agent frameworks and Gemini’s reasoning capabilities are both pointing in this direction. For enterprise teams, this means RAG systems that can handle more complex queries without requiring the user to break the question down manually. It’s a meaningful step toward AI that actually works the way people think, not the way databases are organized.

The Integration Layer Matters More Than the Model

Here’s something that’s becoming clearer as enterprise RAG matures: the model is often not the bottleneck. The quality of your retrieval pipeline, the freshness of your data, the accuracy of your chunking strategy, and the reliability of your integrations matter just as much, sometimes more.

This is good news for teams that don’t have access to the latest frontier models. A well-built RAG system on a solid model will outperform a poorly built system on the best model available. The tooling improvements in April 2026 are making it easier to get the fundamentals right, which raises the floor for what enterprise teams can build.

Wrapping Up

The RAG space in April 2026 looks meaningfully different from where it was a year ago. LlamaIndex is maturing into serious production infrastructure. Gemini is expanding what’s possible with multimodal retrieval. And the enterprise conversation has shifted from experimentation to execution.

The challenges that remain, reliability at scale, cost management, access control, and the complexity of mixed data environments, are real. But the tools to address them are catching up. Teams that invest in getting the fundamentals right now are building on a foundation that will hold up as the technology continues to move.

If you’re evaluating RAG for your organization or trying to take an existing system from prototype to production, the developments happening right now are worth paying close attention to. The gap between what’s possible and what’s practical is closing faster than most people expected.

Want to stay current on enterprise AI developments as they happen? Subscribe to our newsletter for weekly breakdowns of what’s actually moving in the RAG and enterprise AI space, no hype, just the stuff that matters.