Every enterprise RAG deployment faces the same critical question: How do you ensure your retrieval system respects user permissions without sacrificing performance at scale?

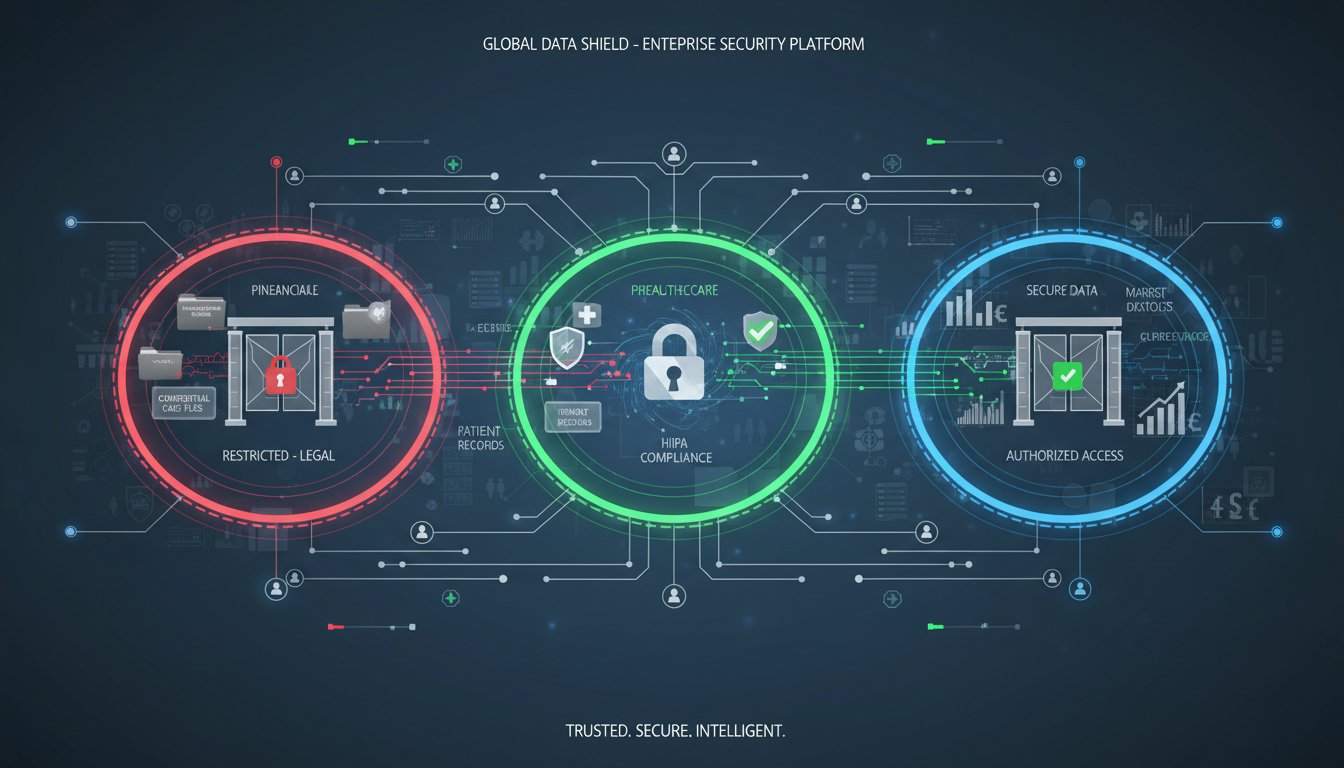

The stakes couldn’t be higher. A financial services firm builds a sophisticated RAG system to synthesize market data, earnings reports, and regulatory filings. It works perfectly—until a junior analyst accidentally gets access to confidential client portfolios that should require executive clearance. A healthcare organization deploys RAG for clinical decision support, only to discover it’s surfacing patient records across organizational boundaries that HIPAA explicitly forbids. A legal tech startup’s RAG system returns precedent cases from client-confidential matters, triggering potential malpractice liability.

These aren’t theoretical problems. They’re happening now, in production systems, because most enterprise RAG implementations treat permission management as an afterthought. The architecture that works brilliantly for general knowledge retrieval completely breaks down when you add the constraint: “This user can only see data they have explicit access to.”

The complexity multiplies when you consider scale. A small RAG system serving 20 users with simple role-based access is manageable. But enterprise systems serving thousands of users across dozens of permission domains, with data classifications changing weekly, require a fundamentally different approach. Most teams discover this the hard way—after building something that works until it doesn’t.

This guide reveals three architectural patterns that solve permission-based retrieval at enterprise scale. You’ll see how leading organizations handle the intersection of speed, security, and compliance, and which pattern matches your specific constraints. More importantly, you’ll understand why naive approaches fail and what design decisions prevent costly rebuilds down the line.

The Permission Problem Nobody Talks About

Traditional RAG systems operate on a dangerous assumption: all documents in the knowledge base are accessible to all users. The retriever simply finds the most relevant documents, and the LLM generates an answer. Permission management, if it exists at all, happens as a post-processing step—filter results after retrieval.

This approach has three catastrophic failure modes in enterprise settings.

First, it’s a security liability. With post-retrieval filtering, restricted documents are fetched from the index before anyone checks whether the user may see them. They sit in application memory, and often in logs, traces, and caches, and a single bug or skipped step in the filter passes them straight into the LLM’s context. Security then depends on every downstream code path applying the filter correctly, rather than on the retriever never returning the data in the first place. Regulated industries (finance, healthcare, law) can’t accept this risk.

Second, it destroys retrieval quality. Imagine your vector database contains 1 million documents, but a specific user can access only 50,000 of them due to organizational permissions. A naive post-filter approach retrieves the top 50 most relevant documents across all 1 million, then discards those the user can’t access. If access is unrelated to relevance, only about 5% of the hits survive: two or three documents on average, and sometimes none. The system either answers from that thin context or has to re-query deeper into the ranking, where documents are far less relevant. Retrieval quality collapses when a user’s accessible subset is small or permissions are granular.
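To put rough numbers on this failure mode, here is a back-of-the-envelope sketch. It assumes, as a simplification rather than a measured fact, that a document's relevance rank is independent of who can access it, so each retrieved hit is accessible with probability accessible/total. The function name `post_filter_stats` is illustrative only.

```python
def post_filter_stats(total_docs: int, accessible_docs: int, top_k: int) -> tuple[float, float]:
    """Estimate what survives a naive post-filter.

    Assumes each retrieved document is accessible independently with
    probability accessible_docs / total_docs (a simplifying assumption).
    Returns (expected number of survivors, probability that none survive).
    """
    p = accessible_docs / total_docs
    expected_survivors = top_k * p
    prob_none_survive = (1 - p) ** top_k
    return expected_survivors, prob_none_survive


expected, p_empty = post_filter_stats(1_000_000, 50_000, 50)
print(f"expected survivors: {expected:.1f}")
print(f"chance of an empty result: {p_empty:.1%}")
```

Under that assumption, the user in the example keeps about 2.5 of the 50 hits on average, and a bit under 8% of queries return nothing at all. Real corpora can be better or worse, since permissions and relevance are often correlated.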

Third, it scales poorly. Every retrieval operation must check permissions for every candidate document. With thousands of users, complex permission hierarchies, and documents changing access classifications weekly, this becomes a computational bottleneck that either slows down retrieval or requires expensive caching that becomes stale.

Enterprises need permission enforcement integrated into the retrieval architecture itself, not bolted on afterward.

Pattern 1: Pre-Retrieval Filtering (The Simple Foundation)

The simplest pattern enforces permissions before the retriever even executes.

How it works: Before sending a query to your vector database or search engine, you build a permission filter based on the current user’s access rights. This filter is passed to the retriever, which only searches within the user’s accessible documents. The LLM then generates an answer from documents that have already been pre-filtered for permission compliance.

Architecture:

User Query → Permission Resolver → Filtered Index/Vector DB → Retriever → LLM → Response

The Permission Resolver is the critical component. It takes the authenticated user and returns a list of accessible document IDs or a permission filter (like “department:finance AND classification:public”). This filter is then passed to your retriever.
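As a rough illustration of what a Permission Resolver might return, the sketch below turns a user's attributes into a metadata filter. The `User` fields, the `department` and `classification` metadata keys, and the `$and` / `$in` operator style are all assumptions made for the example; the exact filter syntax depends on the vector database or search engine in use.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class User:
    """Hypothetical authenticated user record."""
    user_id: str
    departments: frozenset[str] = field(default_factory=frozenset)
    clearances: frozenset[str] = field(default_factory=lambda: frozenset({"public"}))


def resolve_permission_filter(user: User) -> dict:
    """Translate a user's attributes into a metadata filter.

    The "$and" / "$in" operators mirror a common filter-dict style;
    adapt them to whatever your retriever actually accepts.
    """
    return {
        "$and": [
            {"department": {"$in": sorted(user.departments)}},
            {"classification": {"$in": sorted(user.clearances)}},
        ]
    }


analyst = User("u-17", frozenset({"finance"}), frozenset({"public", "internal"}))
permission_filter = resolve_permission_filter(analyst)
print(permission_filter)
```

An attribute filter like this stays small no matter how many documents the user can see, which is one reason to prefer it over an explicit list of document IDs when the permission model allows it.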

Strengths:

– Simplest to implement. Most vector databases and search engines support filters.

– Guaranteed security. Documents the user can’t access are never even considered.

– Better retrieval quality than post-filtering. You search within the user’s accessible subset, so top results are genuinely relevant to that user’s universe.

– Fast when permission sets are relatively static.

Weaknesses:

– Permission resolution becomes a bottleneck. If your permission system is slow or complex, every query slows down.

– Doesn’t handle dynamic permissions well. If a user loses access to a document mid-conversation, the system won’t immediately reflect that.

– Doesn’t work for hierarchical or attribute-based permissions that change frequently.

– Fails when access is granted document by document and a user can reach many thousands of individual documents. The ID list in the filter becomes massive.

When to use it: Pre-retrieval filtering is your starting point for most enterprise RAG systems. Use it when:

– Permission sets are relatively stable (monthly or quarterly changes)

– Most users have access to a reasonable percentage of the knowledge base (>10%)

– Your permission system has sub-second query latency

– Compliance requirements are strict (healthcare, finance, law)

Implementation pattern:

Pseudo-code for a financial services RAG:

    def retrieveWithPermissions(user_id, query):
        # Load the user's permissions from the cache (fetched on a miss)
        user_permissions = permissionCache.getOrFetch(user_id)
        accessible_doc_ids = user_permissions.getDocumentAccessList()

        # Build filter for vector database
        permission_filter = {"doc_id": {"$in": accessible_doc_ids}}

        # Retrieve only from accessible documents
        results = vectorDB.search(
            query_embedding=embedModel.encode(query),
            filter=permission_filter,
            top_k=10
        )
        return results

The permission cache is crucial. Don’t call your permission system for every query. Cache user permissions with an appropriate TTL (time-to-live). For most organizations, 5-15 minute caches are acceptable. For highly sensitive environments, shorter caches (1-2 minutes) might be necessary.
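A permission cache along these lines could look like the following minimal sketch. The class and method names (`PermissionCache`, `get_or_fetch`, `invalidate`) and the injectable `clock` parameter are assumptions for illustration, not part of any particular library; a production system would more likely sit on a shared cache such as Redis.

```python
import time
from typing import Callable


class PermissionCache:
    """Per-user permission cache with TTL expiry and explicit invalidation."""

    def __init__(self, fetch: Callable[[str], frozenset[str]],
                 ttl_seconds: float = 300.0,
                 clock: Callable[[], float] = time.monotonic):
        self._fetch = fetch    # slow call into the real permission system
        self._ttl = ttl_seconds
        self._clock = clock    # injectable so tests can control time
        self._entries: dict[str, tuple[float, frozenset[str]]] = {}

    def get_or_fetch(self, user_id: str) -> frozenset[str]:
        now = self._clock()
        entry = self._entries.get(user_id)
        if entry is not None and now - entry[0] < self._ttl:
            return entry[1]    # still fresh, no call to the permission system
        doc_ids = self._fetch(user_id)
        self._entries[user_id] = (now, doc_ids)
        return doc_ids

    def invalidate(self, user_id: str) -> None:
        """Drop a user's entry, e.g. when their access changes."""
        self._entries.pop(user_id, None)
```

The default of 300 seconds sits at the low end of the 5-15 minute range mentioned above. The `invalidate` method is there so that a revocation does not have to wait for the TTL to run out.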

Pattern 2: Hybrid Filtering (The Performance Optimizer)

When pre-retrieval filtering becomes a bottleneck—either because permission resolution is slow or users have highly granular, frequently-changing permissions—hybrid filtering bridges the gap.

How it works: You perform retrieval in two stages. First, retrieve a larger candidate set (100-200 documents) by relevance alone, with no permission filter. Second, filter that candidate set by permissions and rerank the survivors.

The key difference from naive post-filtering: you don’t filter a small set of top results and hope some pass the permission check. You retrieve a larger candidate pool, filter it, then rerank by relevance within the permission-compliant set.

Architecture:

User Query → Vector DB (no filter) → Retrieve Top 200 → Permission Filter → Reranker → Top 10 → LLM → Response

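This pattern relies on a simple numbers argument: the larger the candidate pool, the more permitted documents it contains. If a user can access roughly 5% of the corpus, a pool of 200 holds about 10 permitted documents on average, against two or three in a pool of 50. This is a likelihood, not a guarantee, so users with very narrow access may still come up short.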
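One way to size the candidate pool is sketched below. It assumes that accessibility is independent of relevance rank (a simplification), and the helper name `candidates_needed` is hypothetical. It returns the smallest pool whose expected number of permitted documents reaches the target.

```python
def candidates_needed(target_k: int, accessible_docs: int, total_docs: int) -> int:
    """Smallest pool size whose expected count of accessible documents is >= target_k.

    Assumes accessibility is independent of relevance rank (a simplification).
    Uses integer ceiling division to avoid floating-point rounding.
    """
    return -(-target_k * total_docs // accessible_docs)


pool = candidates_needed(target_k=10, accessible_docs=50_000, total_docs=1_000_000)
print(pool)  # 200
```

Because this is only an expected value, individual queries will land above and below it. Padding the pool, or re-querying with a larger one when too few documents survive, are both reasonable hedges.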

Strengths:

– Decouples retrieval speed from permission complexity. Your vector database doesn’t need permission-aware queries.

– Handles dynamic permissions well. Users can gain/lose access without affecting retrieval performance.

– Works with complex attribute-based permissions that are expensive to compute.

– Reranking recovers much of the quality lost to permission filtering.

Weaknesses:

– More complex to implement. Requires semantic reranking (which adds latency).

– Security consideration: the initial retrieval sees unrestricted data, though it doesn’t reach the LLM or user interface.

– Reranking adds computational cost, and if document permissions change constantly, the filter step may run on stale permission metadata.

– Slightly higher latency than pre-filtering due to the reranking step.

When to use it: Hybrid filtering is your choice when:

– Permission queries are slow or complex (>100ms latency)

– Permissions change frequently (daily or more)

– You need attribute-based access control (ABAC) rather than simple role-based access control (RBAC)

– Users have highly granular permissions (they can access specific documents, not just categories)

Implementation pattern:

For a legal tech platform with client-confidential documents:

    def retrieveWithHybridFiltering(user_id, query):
        # Stage 1: Retrieve candidate set without permission filtering
        candidates = vectorDB.search(
            query_embedding=embedModel.encode(query),
            top_k=200  # Larger candidate pool
        )

        # Stage 2: Apply permissions (getOrFetch loads them on a cache miss)
        user_permissions = permissionCache.getOrFetch(user_id)
        permission_compliant = [doc for doc in candidates
                                if user_permissions.canAccess(doc.id)]

        # Stage 3: Rerank by relevance, using only permitted documents
        reranked = crossEncoderModel.rerank(
            query,
            permission_compliant,
            top_k=10
        )
        return reranked

The cross-encoder reranker is critical here. It provides fine-grained relevance scoring that recovers quality after permission filtering. Models like BGE-Reranker or LLM-based rerankers work well.
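For readers who want something they can run end to end, here is a self-contained toy version of the same three stages. Everything in it is a stand-in: the `Doc` type, the two-dimensional embeddings, and especially `word_overlap`, which only imitates the role a cross-encoder would play.

```python
import math
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Doc:
    doc_id: str
    text: str
    embedding: tuple[float, ...]


def cosine(a: tuple[float, ...], b: tuple[float, ...]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def hybrid_retrieve(query: str, query_embedding: tuple[float, ...], corpus: list[Doc],
                    can_access: Callable[[str], bool],
                    rerank_score: Callable[[str, str], float],
                    pool_size: int = 200, top_k: int = 10) -> list[Doc]:
    # Stage 1: relevance-only candidate pool (no permission filter).
    pool = sorted(corpus, key=lambda d: cosine(query_embedding, d.embedding),
                  reverse=True)[:pool_size]
    # Stage 2: drop everything the user may not see before any further processing.
    allowed = [d for d in pool if can_access(d.doc_id)]
    # Stage 3: rerank only the permitted documents.
    allowed.sort(key=lambda d: rerank_score(query, d.text), reverse=True)
    return allowed[:top_k]


def word_overlap(query: str, text: str) -> float:
    """Toy stand-in for a cross-encoder: counts shared words."""
    return float(len(set(query.split()) & set(text.split())))


corpus = [
    Doc("a", "quarterly earnings summary", (1.0, 0.0)),
    Doc("b", "confidential client portfolio", (0.9, 0.1)),
    Doc("c", "office parking policy", (0.0, 1.0)),
]
hits = hybrid_retrieve("earnings summary", (1.0, 0.0), corpus,
                       can_access=lambda doc_id: doc_id != "b",
                       rerank_score=word_overlap, pool_size=3, top_k=2)
print([d.doc_id for d in hits])
```

Note the ordering: the permission filter runs before the reranker, so the reranking model never scores a document the user is not allowed to see.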

Pattern 3: Federated Retrieval (The Enterprise Scale Solution)

For organizations with complex permission hierarchies, multiple data sources with different permission systems, or requirements for real-time permission enforcement, federated retrieval is the gold standard.

How it works: Instead of a single centralized retrieval system, you maintain separate indexes for different permission domains. When a user queries, you determine which domain(s) they have access to, then run parallel retrieval against only those domain-specific indexes. Results are merged and reranked.

Architecture:

User Query → Permission Router → [Index A (user accessible), Index B (user accessible), Index C (blocked)]

Index A → Retrieve ─┐
Index B → Retrieve ─┴→ Merge & Rerank → Top 10 → LLM → Response

Domain separation is the key. You might have:

– Index A: Public documents, accessible to all users

– Index B: Finance department documents, accessible only to finance

– Index C: Executive-only strategic documents, accessible only to C-suite

– Index D: Legal documents, with fine-grained client-based access

A finance analyst querying the system retrieves from indexes A and B in parallel, never touching C or D.

Strengths:

– Handles massive scale elegantly. Each domain index is optimized for its permission model.

– Real-time permission changes are simple. User loses access to a domain? Remove them from that domain’s retrieval path.

– Clear security boundaries. Sensitive domains are completely isolated from lower-sensitivity ones.

– Supports heterogeneous permission systems. Finance might use RBAC, legal might use client-based access, compliance might use attribute-based access.

– Performance is actually better at scale. Searching 500K documents in a focused index is faster than searching 5M across all domains.

Weaknesses:

– Complex operational overhead. You’re managing multiple indexes, each requiring updates, monitoring, and backup.

– Cross-domain queries require explicit permission grants. Some organizations need users to search “across everything they can access,” which requires careful router logic.

– Duplicate documents across indexes consume more storage.

– Complex permissions that don’t fit neatly into domain boundaries require custom router logic.

When to use it: Federated retrieval is your choice when:

– You have clear organizational or data boundaries (departments, client groups, security classifications)

– Different permission systems govern different data domains

– You’re dealing with massive knowledge bases (>10M documents)

– You have strict compliance requirements with strong data compartmentalization (heavily regulated industries)

– Multiple teams need to independently manage their domain’s access controls

Implementation pattern:

For a multi-tenant SaaS healthcare platform:

    import asyncio

    async def federatedRetrieve(user_id, query):
        # Determine which domains user can access
        user_permissions = permissionService.getPermissions(user_id)
        accessible_domains = user_permissions.getAccessibleDomains()
        # e.g., ["clinical_data", "public_guidelines", "patient_records"]

        # Embed the query once and reuse it for every domain
        query_embedding = embedModel.encode(query)

        # Parallel retrieval from accessible domain indexes
        # (assumes each index exposes an async search method)
        retrieval_tasks = [
            vectorDBDomains[domain].search(
                query_embedding=query_embedding,
                top_k=10  # Top 10 from each domain
            )
            for domain in accessible_domains
        ]
        per_domain_results = await asyncio.gather(*retrieval_tasks)

        # Collect results from all domains, tagged with their source domain
        all_results = []
        for domain, results in zip(accessible_domains, per_domain_results):
            all_results.extend([(r, domain) for r in results])

        # Merge and rerank across all domains
        merged = crossEncoderModel.rerank(query, all_results, top_k=10)
        return merged

The async/parallel execution is crucial. You’re not querying domains sequentially; you fire all domain queries in parallel and merge results. This keeps latency low even with many domains.
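The fan-out and merge can be tried in isolation with the toy sketch below. `DomainIndex` is a hypothetical in-memory stand-in with hard-coded relevance scores, and sorting by score stands in for the rerank step. Raw scores from separate real indexes are often not directly comparable, which is why the pattern calls for a reranker.

```python
import asyncio


class DomainIndex:
    """Toy in-memory index; stands in for one per-domain vector index."""

    def __init__(self, docs: dict[str, float]):
        self._docs = docs  # doc_id -> precomputed relevance score (toy data)

    async def search(self, top_k: int) -> list[tuple[str, float]]:
        await asyncio.sleep(0)  # yield control, as a real network call would
        ranked = sorted(self._docs.items(), key=lambda item: item[1], reverse=True)
        return ranked[:top_k]


async def federated_retrieve(indexes: dict[str, DomainIndex],
                             accessible_domains: set[str],
                             top_k: int = 10) -> list[tuple[str, str, float]]:
    # Only domains the user may access are ever queried.
    domains = sorted(d for d in accessible_domains if d in indexes)
    per_domain = await asyncio.gather(*(indexes[d].search(top_k) for d in domains))
    merged = [(domain, doc_id, score)
              for domain, hits in zip(domains, per_domain)
              for doc_id, score in hits]
    # Stand-in for the rerank step: sort by score across domains.
    merged.sort(key=lambda row: row[2], reverse=True)
    return merged[:top_k]


indexes = {
    "public": DomainIndex({"p1": 0.4, "p2": 0.7}),
    "finance": DomainIndex({"f1": 0.9}),
    "executive": DomainIndex({"e1": 0.99}),
}
results = asyncio.run(federated_retrieve(indexes, {"public", "finance"}, top_k=2))
print(results)
```

The executive index holds the highest-scoring document, yet it never appears in the results because the router never sends it a query.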

Common Pitfalls and How to Avoid Them

Pitfall 1: Stale Permission Caches

You implement pre-retrieval filtering with a 1-hour permission cache. A user’s access is revoked, but the system continues retrieving “their” documents for another 45 minutes. This is a compliance violation in regulated industries.

How to avoid it: Implement cache invalidation, not just TTL expiration. When permissions change, immediately invalidate that user’s cache. Use event-driven architecture: when your permission system updates a user’s access, it publishes an event that invalidates their cache in your RAG system.

Permission System → Event (User X lost access to Document Y)
    → Cache Invalidation Service
    → Remove cached permissions for User X
    → User's next query uses fresh permissions
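The flow above can be sketched with a minimal in-process publish/subscribe mechanism. The `EventBus` class, the `permission.changed` topic, and the event fields are all invented for illustration; in production a message broker or the permission system's own webhooks would play this role.

```python
from collections import defaultdict
from typing import Callable


class EventBus:
    """Minimal in-process publish/subscribe (stand-in for a real message broker)."""

    def __init__(self) -> None:
        self._handlers: dict[str, list[Callable[[dict], None]]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Callable[[dict], None]) -> None:
        self._handlers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> None:
        for handler in self._handlers[topic]:
            handler(event)


# Cached permissions on the RAG side: user_id -> accessible doc_ids.
cached_permissions: dict[str, set[str]] = {"user-x": {"doc-y", "doc-z"}}


def on_permission_changed(event: dict) -> None:
    # Drop the whole entry; the next query re-fetches fresh permissions.
    cached_permissions.pop(event["user_id"], None)


bus = EventBus()
bus.subscribe("permission.changed", on_permission_changed)
bus.publish("permission.changed",
            {"user_id": "user-x", "doc_id": "doc-y", "action": "revoke"})
print("user-x" in cached_permissions)
```

Dropping the user's whole entry is deliberately blunt. It costs one extra permission fetch, but it avoids having to reason about partial updates to a cached access list.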

Pitfall 2: Over-Filtering Leading to Empty Results

A user’s permissions are so granular that after filtering, the retriever returns zero documents. The LLM generates a response saying “I don’t have access to information about this,” but the user doesn’t know if that’s accurate or just a permission issue.

How to avoid it: When filtering leaves few or no results, widen the search within the documents the user is allowed to see (for example, with a larger candidate pool), never outside them, or tell the user explicitly that permissions limited the results. For high-stakes queries, you might ask the user to confirm that they want an answer based on a permission-limited result set.
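One possible shape for this is to return a structured outcome instead of a bare document list, so the calling code can tell "nothing relevant exists" apart from "relevant material exists but is restricted". The names `RetrievalOutcome` and `summarize_outcome`, the `min_results` threshold, and the notice wording are all assumptions for the sketch.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class RetrievalOutcome:
    documents: list[str]
    permission_limited: bool  # True when filtering removed most or all candidates
    notice: Optional[str] = None


def summarize_outcome(candidates: list[str], permitted: list[str],
                      min_results: int = 3) -> RetrievalOutcome:
    """Flag the case where candidates existed but too few survived the permission filter."""
    limited = len(candidates) > 0 and len(permitted) < min_results
    notice = None
    if limited:
        notice = ("Some potentially relevant documents are outside your access rights. "
                  "Contact the data owner if you need them.")
    return RetrievalOutcome(documents=permitted, permission_limited=limited, notice=notice)


outcome = summarize_outcome(candidates=["d1", "d2", "d3"], permitted=[])
print(outcome.permission_limited, outcome.notice)
```

Whether to show such a notice is a policy decision: in some environments, confirming that restricted documents exist on a topic is itself a disclosure, so the flag may be better logged than displayed.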

Pitfall 3: Permission Checks Happening at Query Time Are Too Slow

Your permission system queries a complex graph database to determine if User A can access Document B. This takes 50ms per document. With 100 candidate documents, you’re adding 5 seconds of latency just to permission checks.

How to avoid it: Pre-compute and cache permission matrices. Instead of querying permissions at retrieval time, periodically (every few minutes) compute “User X can access documents [list]” and cache it. This moves the expensive computation out of the critical path.
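A minimal sketch of that pre-computation, assuming a simple role-based model in which each document lists the roles allowed to read it. The function name `build_access_index` and the data shapes are illustrative; a graph-based permission system would need its own traversal in place of the role lookup.

```python
from collections import defaultdict


def build_access_index(document_acl: dict[str, set[str]],
                       user_roles: dict[str, set[str]]) -> dict[str, frozenset[str]]:
    """Precompute user_id -> accessible doc_ids from role-based document ACLs."""
    # Invert the ACL once: role -> documents that role may read.
    docs_by_role: dict[str, set[str]] = defaultdict(set)
    for doc_id, roles in document_acl.items():
        for role in roles:
            docs_by_role[role].add(doc_id)

    # Union the per-role document sets for each user.
    access: dict[str, frozenset[str]] = {}
    for user_id, roles in user_roles.items():
        docs: set[str] = set()
        for role in roles:
            docs |= docs_by_role.get(role, set())
        access[user_id] = frozenset(docs)
    return access


access_index = build_access_index(
    document_acl={"d1": {"finance"}, "d2": {"finance", "legal"}, "d3": {"exec"}},
    user_roles={"alice": {"finance"}, "bob": {"legal", "exec"}},
)
print(sorted(access_index["alice"]), sorted(access_index["bob"]))
```

At query time a permission check becomes a set membership test instead of a 50ms graph query. The trade-off is that the index is only as fresh as its last rebuild.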

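Alternatively, use Bloom filters or other probabilistic data structures as a fast first-pass check. A Bloom filter never gives a false negative, so a “no” can be trusted and the expensive lookup skipped. It does give occasional false positives, so every “maybe” must still be confirmed against the authoritative permission store; otherwise a false positive grants access the user doesn’t have.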
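Below is a small sketch of a Bloom filter used as a fast first-pass permission check. The `BloomFilter` class is a hand-rolled illustration built on `hashlib`, not a library API, and the sizes are arbitrary. The important detail is in `can_access`: a negative answer from the filter is treated as final, while a positive answer is only a "maybe" and is confirmed against the authoritative grant set, because a Bloom filter false positive would otherwise grant access that does not exist.

```python
import hashlib


class BloomFilter:
    """Small Bloom filter: may report false positives, never false negatives."""

    def __init__(self, size_bits: int = 8192, num_hashes: int = 4):
        self._size = size_bits
        self._num_hashes = num_hashes
        self._bits = bytearray(size_bits // 8 + 1)

    def _positions(self, item: str):
        # Derive num_hashes bit positions by salting SHA-256 with a seed.
        for seed in range(self._num_hashes):
            digest = hashlib.sha256(f"{seed}:{item}".encode()).digest()
            yield int.from_bytes(digest[:8], "big") % self._size

    def add(self, item: str) -> None:
        for pos in self._positions(item):
            self._bits[pos // 8] |= 1 << (pos % 8)

    def might_contain(self, item: str) -> bool:
        return all(self._bits[pos // 8] & (1 << (pos % 8))
                   for pos in self._positions(item))


def can_access(user_id: str, doc_id: str, bloom: BloomFilter,
               authoritative: set[tuple[str, str]]) -> bool:
    if not bloom.might_contain(f"{user_id}|{doc_id}"):
        return False                           # definite "no": skip the slow lookup
    return (user_id, doc_id) in authoritative  # "maybe": confirm with the source of truth


grants = {("alice", "d1"), ("alice", "d2")}
bloom = BloomFilter()
for granted_user, granted_doc in grants:
    bloom.add(f"{granted_user}|{granted_doc}")

print(can_access("alice", "d1", bloom, grants), can_access("alice", "d9", bloom, grants))
```

The speed-up comes from the denied pairs, which are usually the vast majority and never reach the slow permission system. Revocations need care as well: a plain Bloom filter cannot remove entries, so it has to be rebuilt periodically, and until then the authoritative check is what keeps revoked users out.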

Pitfall 4: Document Classification Changes Breaking Retrieval

A document starts as “public” and is later classified as “confidential.” Your pre-filter is built on stale metadata, so it still retrieves the document for non-executive users.

How to avoid it: Separate document content from document metadata (including permissions). Store permissions in a mutable, fast-to-query system (Redis, DynamoDB, or a dedicated permission service), not in the vector database or search index. Update permissions without re-indexing content.

Ideally:

– Vector database stores: document ID, embedding, content, basic metadata

– Permission service stores: document ID → list of users/roles that can access it

When you retrieve documents, you query the permission service separately, not the vector database.
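The separation can be illustrated with a toy permission store that is consulted at query time. `PermissionStore` and `filter_hits` are hypothetical names, and the dictionary stands in for Redis, DynamoDB, or a dedicated permission service. The point of the example is that reclassifying a document takes effect on the next check without touching the index.

```python
class PermissionStore:
    """Stand-in for a mutable permission service keyed by document ID."""

    def __init__(self) -> None:
        self._allowed_roles: dict[str, set[str]] = {}

    def set_roles(self, doc_id: str, roles: set[str]) -> None:
        self._allowed_roles[doc_id] = set(roles)

    def is_allowed(self, doc_id: str, user_roles: set[str]) -> bool:
        # Documents with no entry are denied by default.
        return bool(self._allowed_roles.get(doc_id, set()) & user_roles)


def filter_hits(hit_ids: list[str], user_roles: set[str],
                store: PermissionStore) -> list[str]:
    """Check each retrieved ID against the live permission store, preserving rank order."""
    return [doc_id for doc_id in hit_ids if store.is_allowed(doc_id, user_roles)]


store = PermissionStore()
store.set_roles("memo-1", {"staff", "exec"})   # initially broadly readable
before = filter_hits(["memo-1"], {"staff"}, store)
store.set_roles("memo-1", {"exec"})            # reclassified as confidential, no re-indexing
after = filter_hits(["memo-1"], {"staff"}, store)
print(before, after)
```

Denying documents that have no entry in the store is a deliberate default in this sketch: a document that was indexed but never classified stays invisible rather than public.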

Building Your Permission Strategy

Choosing the right pattern depends on answering these questions:

1. How frequently do permissions change?

– Rarely (monthly or less): Pre-retrieval filtering is sufficient

– Often (daily or more): Hybrid or federated retrieval

2. What’s your permission model complexity?

– Simple role-based (sales, finance, engineering): Pre-retrieval filtering

– Attribute-based or client-based (healthcare, legal): Hybrid or federated

– Highly complex with many permission domains: Federated

3. What’s your knowledge base size?

– <1M documents: Pre-retrieval or hybrid

– >5M documents with clear domains: Federated

4. What are your compliance requirements?

– Strict audit requirements (healthcare, finance): Federated (clean separation)

– Standard compliance: Pre-retrieval or hybrid

5. What’s your acceptable latency?

– <500ms: Pre-retrieval filtering

– <1s: Hybrid filtering

– <2s: Federated retrieval with 10+ domains

Most enterprises start with Pattern 1 (pre-retrieval) and graduate to Pattern 2 (hybrid) or Pattern 3 (federated) as their knowledge base and permission complexity grow.
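As a rough way to make the questions above concrete, the heuristic below folds four of them into a single function. The parameter names, the order of the checks, and the "30 changes a month is roughly daily" cutoff are assumptions layered on top of the lists; the latency question is left out because it depends on measurements rather than on properties of the data. Treat the output as a starting point for discussion, not a verdict.

```python
def suggest_pattern(permission_changes_per_month: int, uses_abac: bool,
                    num_documents: int, has_clear_domains: bool,
                    strict_compartmentalization: bool) -> str:
    """Rough heuristic over the decision questions; thresholds follow the lists above."""
    # Strict compartmentalization or a very large, cleanly partitioned corpus.
    if strict_compartmentalization or (has_clear_domains and num_documents > 5_000_000):
        return "federated"
    # Attribute-based access or permissions changing roughly daily or more often.
    if uses_abac or permission_changes_per_month >= 30:
        return "hybrid"
    return "pre-retrieval"


print(suggest_pattern(1, False, 500_000, False, False))
print(suggest_pattern(60, True, 500_000, False, False))
print(suggest_pattern(1, False, 12_000_000, True, False))
```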

The Real-World Test

Before deploying any permission-based retrieval pattern, test these scenarios:

– Permission revocation test: Revoke a user’s access to a document. Within 2 minutes, verify that user can no longer retrieve that document. Don’t rely on TTL expiration alone.

– Scale test: With your full permission matrix (all users, all documents), measure retrieval latency. If it exceeds your SLA, you need Pattern 2 or 3.

– Compliance audit: Audit every document retrieved over a week. Verify that no user accessed a document they shouldn’t have. This catches subtle bugs in permission logic.

– Permission boundary test: For federated systems, verify that users cannot retrieve documents from restricted domains, even if they construct creative queries.

– Empty result test: Deliberately create a scenario where a user’s permissions are so restrictive that retrieval returns zero documents. Verify the system handles this gracefully.
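Two of these scenarios, revocation and empty results, are easy to express as automated tests. The sketch below runs them against `TinyRag`, a toy system invented for the example whose retriever pre-filters by an in-memory ACL. Against a real deployment the same assertions would be pointed at the actual retrieval endpoint, with the revocation check polling until the two-minute deadline.

```python
class TinyRag:
    """Toy system under test: an ACL plus a retriever that pre-filters by it."""

    def __init__(self, acl: dict[str, set[str]]):
        self._acl = acl  # doc_id -> user_ids allowed to read it

    def revoke(self, user_id: str, doc_id: str) -> None:
        self._acl.get(doc_id, set()).discard(user_id)

    def retrieve(self, user_id: str) -> list[str]:
        return sorted(doc_id for doc_id, users in self._acl.items() if user_id in users)


def test_revocation_takes_effect() -> None:
    rag = TinyRag({"doc-1": {"alice"}, "doc-2": {"alice", "bob"}})
    assert "doc-1" in rag.retrieve("alice")
    rag.revoke("alice", "doc-1")
    assert "doc-1" not in rag.retrieve("alice")
    assert "doc-2" in rag.retrieve("alice")  # unrelated access is untouched


def test_empty_result_is_handled() -> None:
    rag = TinyRag({"doc-1": {"alice"}})
    assert rag.retrieve("mallory") == []


test_revocation_takes_effect()
test_empty_result_is_handled()
print("permission tests passed")
```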

The enterprise RAG systems that survive production—that actually deliver value without creating security incidents—are built on permission-aware architectures from day one. Permission management isn’t a feature to add later; it’s a foundational design decision.

The cost of getting it wrong—compliance violations, data breaches, rebuilding the entire retrieval system—is far too high. Start with Pattern 1. Plan for Pattern 2. Design for Pattern 3. Your future self (and your compliance team) will thank you.