Category: RAG Evaluation

-

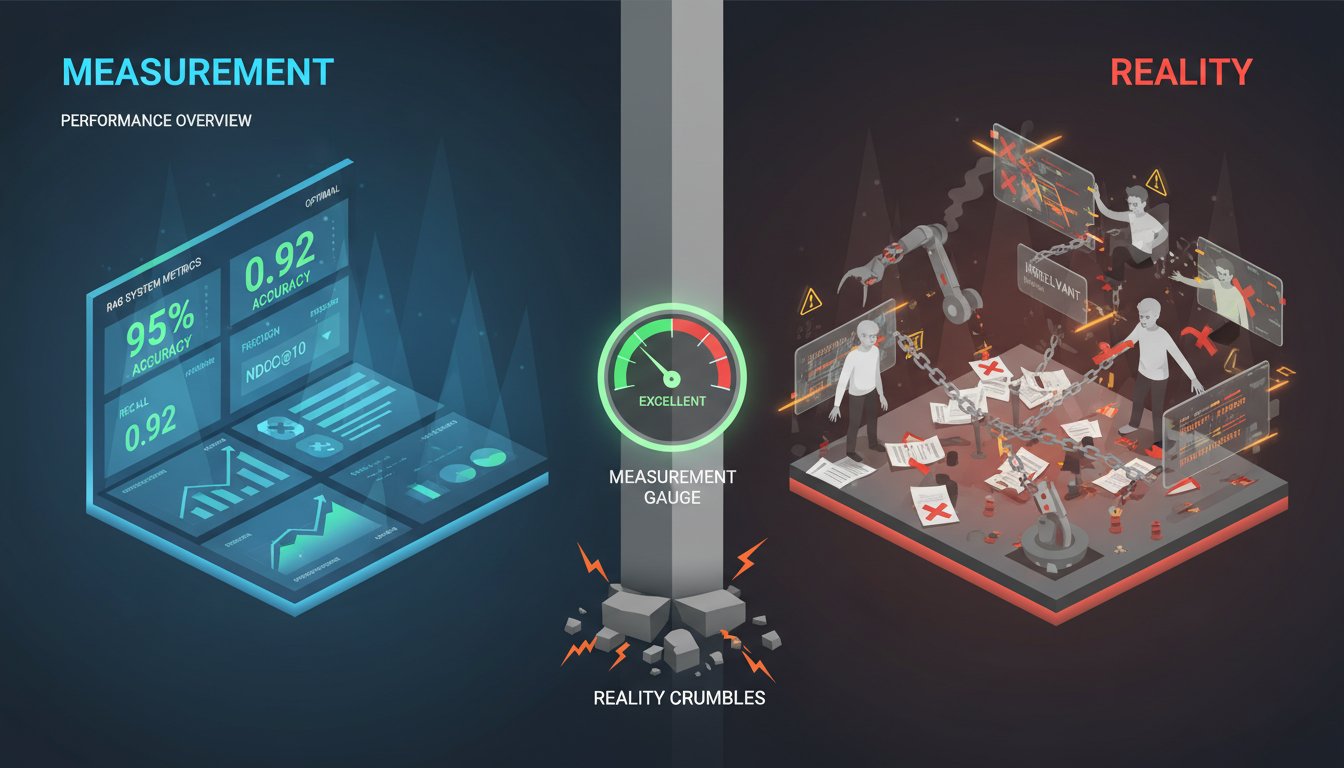

The Measurement Paradox: Why Your RAG Metrics Are Tracking Success While Your System Fails

Your RAG system shows 95% retrieval accuracy in testing. Your precision metrics are stellar. Your NDCG scores would make any ML engineer proud. Yet three months into production, your enterprise RAG deployment is quietly collapsing, and your dashboards never saw it coming. This is the measurement paradox plaguing enterprise RAG deployments in 2024: the…

-

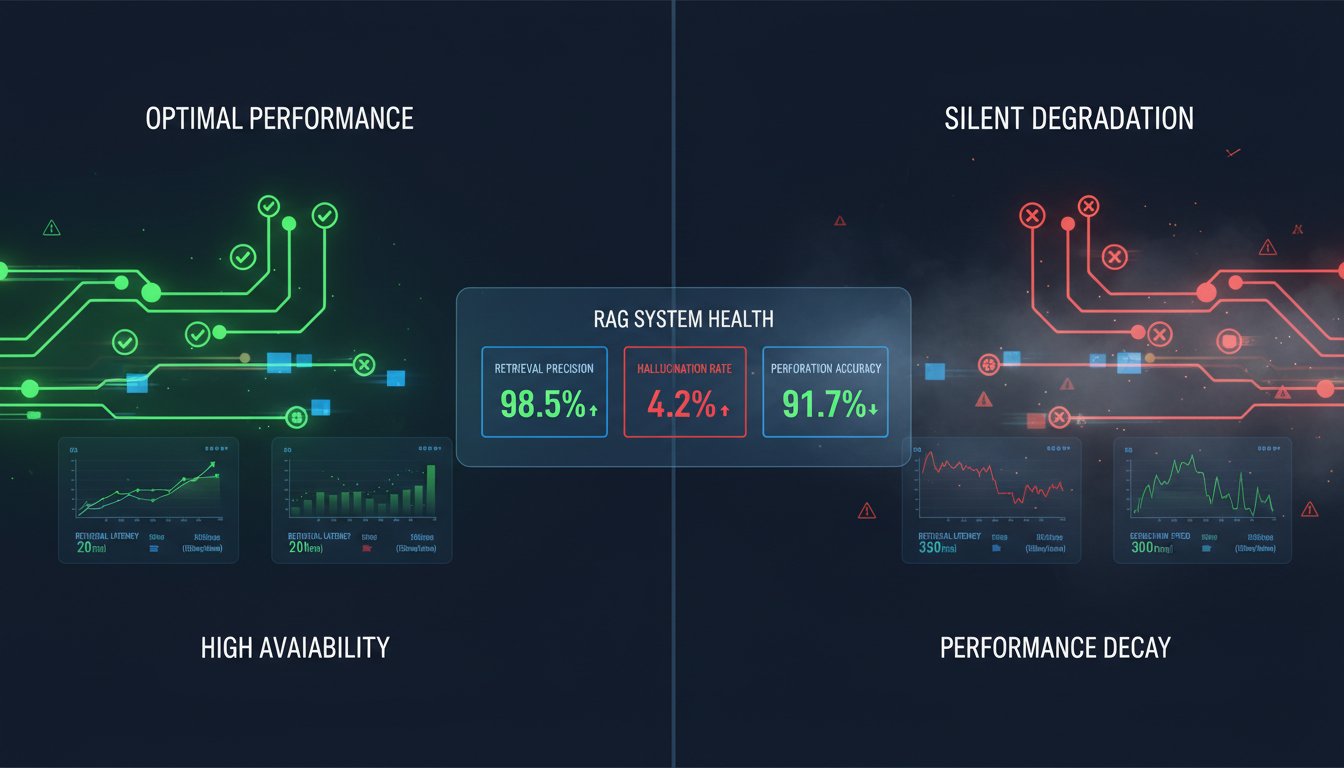

The RAG Measurement Framework: How to Evaluate What Actually Matters in Production

Imagine deploying a RAG system that appears to work perfectly: your LLM generates coherent responses, your retrieval returns relevant documents, and your team ships it to production confident in the outcome. Six months later, you discover a silent degradation: retrieval precision has dropped 15%, hallucination rates are climbing, and your users are quietly getting worse…