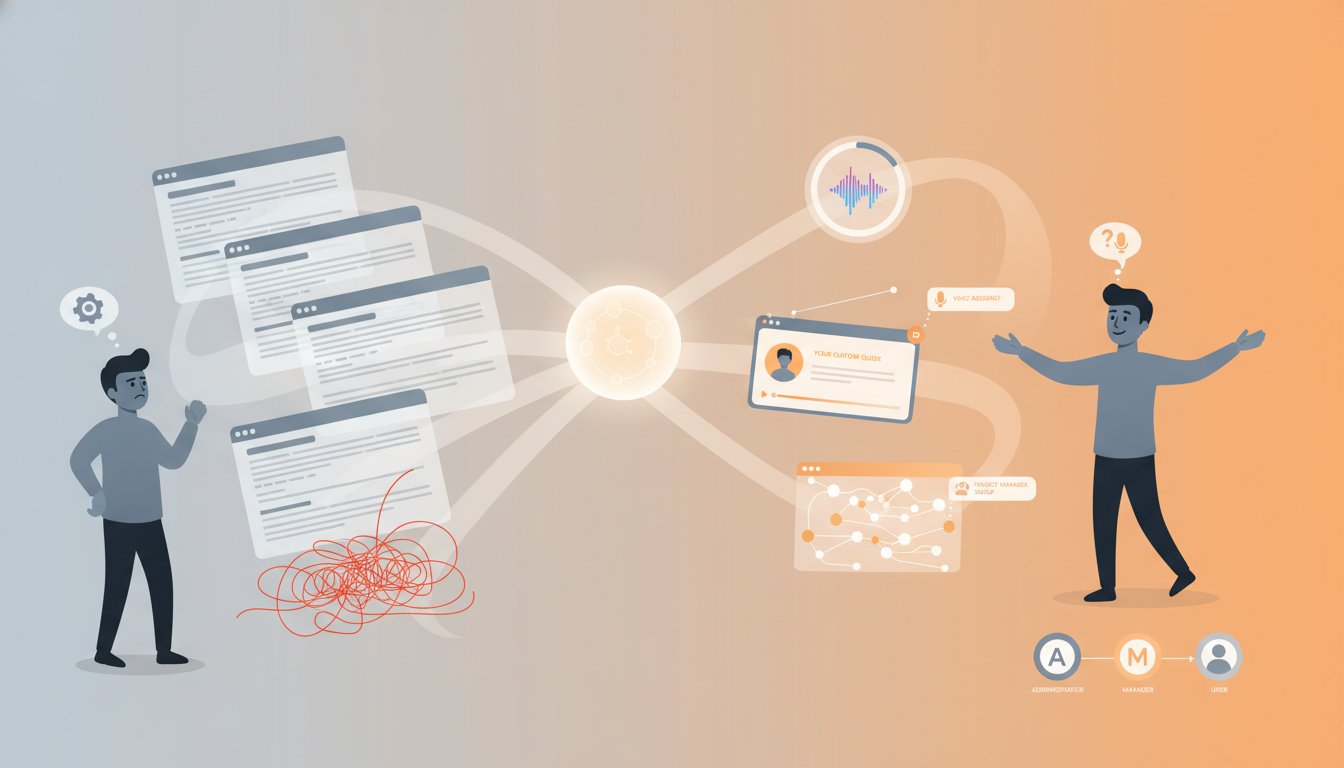

Most customer onboarding processes share a critical weakness: they treat all customers the same. Your SaaS platform walks enterprise clients through the same 45-minute setup video sequence, regardless of their role, experience level, or use case. When they inevitably get stuck on step 23, they either wait for support or abandon onboarding entirely.

This is where many enterprises lose customers. Onboarding data typically shows predictable patterns: IT administrators need different guidance than marketing managers, first-time users need more context than power users, and complex workflows fail silently when generic instructions don't match real job responsibilities. The infrastructure to do better exists, but it requires orchestrating three components that enterprises typically operate independently: knowledge retrieval (RAG), voice synthesis, and video personalization.

The solution isn’t building separate systems. It’s creating an adaptive onboarding engine that retrieves the right information for each customer’s context, delivers voice-guided assistance when they’re stuck, and generates personalized video walkthroughs for complex workflows. This approach has shown measurable results: companies implementing multimodal guidance systems report 34% faster time-to-value and 23% higher feature adoption rates in their first 30 days.

This guide walks through building exactly this system using RAG for intelligent retrieval, ElevenLabs for voice synthesis, and HeyGen for personalized video generation. You’ll see the complete architecture, implementation patterns, and the decision framework for when to use voice versus video in your specific onboarding context.

Understanding the Multimodal Onboarding Architecture

Before diving into implementation, let’s establish why traditional onboarding fails at scale and how multimodal RAG solves these failures.

The Onboarding Retrieval Problem

Your onboarding documentation exists in a knowledge base—hundreds of articles, guides, videos, and FAQs organized by topic. When a customer gets stuck configuring API credentials or setting up integrations, they either:

- Search through static documentation (time-consuming, often unsuccessful)

- Contact support (delays, resource-intensive)

- Abandon the feature entirely (revenue impact)

Traditional RAG solves half this problem by retrieving relevant documentation based on customer queries. But it stops there—it returns text-based content. For onboarding specifically, you need the next layer: delivering that retrieved information through the modality that best serves the customer’s context.

A customer stuck on a technical configuration doesn’t need another 2,000-word article. They need voice guidance walking them through exactly where to click, followed by a personalized video showing their specific integration workflow. This is where multimodal RAG becomes critical.

The Three-Component Orchestration

Your adaptive onboarding system operates as a three-stage pipeline:

Stage 1: Intelligent Context Retrieval (RAG) – Customer submits a question or gets stuck at a specific onboarding step. Your RAG system retrieves:

– Their user role and permission level

– The specific feature they’re configuring

– Their previous interactions and completed steps

– Relevant documentation and video assets

– Common failure patterns for their specific workflow

Stage 2: Voice Synthesis Route (ElevenLabs) – For immediate, conversational guidance, ElevenLabs generates natural voice responses that:

– Acknowledge the customer’s specific context

– Walk them through step-by-step instructions

– Provide multiple explanation styles based on learning preference

– Include pause points for action confirmation

Stage 3: Video Personalization Route (HeyGen) – For complex workflows, HeyGen generates personalized video content that:

– Shows the customer’s actual interface with their data pre-populated

– Uses an AI avatar matching your brand

– Speaks directly to their role and use case

– Adapts to their progress through onboarding

The orchestration logic determines which modality (or combination) serves each situation best. A customer stuck on API credentials gets voice guidance. A complex workflow involving 15+ steps benefits from personalized video. Critical compliance configurations might use both: voice for reassurance, video for exact verification.

Implementing Voice-Guided Onboarding with ElevenLabs

Let’s build the first component: voice-guided assistance that retrieves context and delivers natural language guidance.

Setting Up the ElevenLabs Integration

Start with the ElevenLabs API foundation: sign up for ElevenLabs, create your project workspace, and generate an API key.

Your integration architecture requires three key API endpoints:

- Text-to-Speech API – Converts retrieved guidance into natural voice

- Voice Creation API – Optional: establishes brand-specific voice personas

- Streaming Endpoint – Critical for real-time onboarding (voice streams immediately rather than waiting for full generation)

For onboarding specifically, the streaming endpoint is essential. Customers waiting for a 30-second voice response to get unstuck will abandon. Customers hearing guidance start within 500ms will listen.
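A minimal standard-library sketch of a streaming call. It assumes the `POST https://api.elevenlabs.io/v1/text-to-speech/{voice_id}/stream` endpoint with an `xi-api-key` header and a JSON body carrying `text` and `model_id`; confirm these against the current ElevenLabs API reference. The helper names are hypothetical and the voice ID is a placeholder.

```python
import json
import urllib.request
from typing import Iterator

ELEVENLABS_BASE = "https://api.elevenlabs.io/v1"


def build_tts_stream_request(api_key: str, voice_id: str, text: str,
                             model_id: str = "eleven_turbo_v2") -> urllib.request.Request:
    """Build (but do not send) a streaming text-to-speech request."""
    body = json.dumps({"text": text, "model_id": model_id}).encode("utf-8")
    return urllib.request.Request(
        url=f"{ELEVENLABS_BASE}/text-to-speech/{voice_id}/stream",  # voice ID goes in the path
        data=body,
        headers={
            "xi-api-key": api_key,
            "Content-Type": "application/json",
            "Accept": "audio/mpeg",  # request MP3 audio chunks
        },
        method="POST",
    )


def stream_tts(request: urllib.request.Request, chunk_size: int = 4096) -> Iterator[bytes]:
    """Yield audio chunks as they arrive so playback can start before generation finishes."""
    with urllib.request.urlopen(request) as response:
        while True:
            chunk = response.read(chunk_size)
            if not chunk:
                break
            yield chunk
```

In use, feed each chunk from `stream_tts(...)` to your audio player as it arrives rather than buffering the full response; that is what keeps time-to-first-audio low.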

Building the Context-Aware Voice Response Pipeline

Here’s the implementation pattern for voice-guided onboarding:

Customer Interaction → RAG Retrieval → Voice Generation → Real-time Streaming

When a customer says “I can’t find where to add my API key,” your pipeline executes:

- RAG Context Retrieval – Your retrieval system queries:
  - Customer profile: role, industry, integration type
  - Onboarding progress: which steps they’ve completed
  - Knowledge base: API setup guides, credential management docs
  - Interaction history: previous questions about authentication

- Prompt Construction – Combine retrieved context with the customer query:

"The user is an IT administrator setting up a Salesforce integration.
They've completed authentication but are stuck on API key configuration.
Similar users typically struggle with [common issue].
Their integration requires [their specific permissions].
Guide them through adding the API key to their Salesforce CRM connector."

- LLM Generation – Your LLM generates step-by-step guidance using the retrieved context.

- ElevenLabs Voice Synthesis – Stream the response by sending a POST request to the streaming text-to-speech endpoint, `/v1/text-to-speech/{voice_id}/stream`, where `{voice_id}` is the ID of your onboarding guide voice. The voice ID belongs in the URL path, and streaming is selected by the endpoint itself, so neither goes in the request body:

```json
{
  "text": "I see you're setting up a Salesforce integration. Here's where to find your API key...",
  "model_id": "eleven_turbo_v2"
}
```

The streaming response plays immediately, with guidance flowing as the customer listens. This creates conversational momentum—they hear assistance starting within 500ms rather than waiting for a 10-second voice generation.
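The prompt-construction step can be sketched as a function over whatever record your retrieval layer returns. The `OnboardingContext` fields below are hypothetical stand-ins for the retrieved items listed above. The final instruction asks for short, speakable steps, an assumption that suits text headed for speech synthesis, where markdown and URLs read poorly aloud.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class OnboardingContext:
    """Items the RAG step is assumed to return (hypothetical field names)."""
    role: str
    integration: str
    completed_steps: List[str]
    stuck_step: str
    common_issue: str
    required_permissions: List[str]
    doc_snippets: List[str] = field(default_factory=list)


def build_guidance_prompt(ctx: OnboardingContext, question: str) -> str:
    """Combine retrieved context with the customer's question into one LLM prompt."""
    lines = [
        f"User role: {ctx.role}. Integration: {ctx.integration}.",
        f"Completed steps: {', '.join(ctx.completed_steps) or 'none yet'}.",
        f"Currently stuck on: {ctx.stuck_step}.",
        f"Similar users typically struggle with: {ctx.common_issue}.",
        f"Required permissions: {', '.join(ctx.required_permissions) or 'none'}.",
    ]
    if ctx.doc_snippets:
        lines.append("Relevant documentation:")
        lines.extend(f"- {snippet}" for snippet in ctx.doc_snippets)
    lines.append(f'Customer question: "{question}"')
    # Output goes to text-to-speech, so ask for plain, speakable steps.
    lines.append("Write short, numbered steps that read naturally aloud; avoid markdown and URLs.")
    return "\n".join(lines)
```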

Voice Customization for Different User Roles

Multimodal RAG systems benefit from voice variation. Your IT administrator wants technical precision; your marketing manager wants friendly encouragement. ElevenLabs supports multiple voice profiles.

Establish role-based voice personas:

- Technical Voice – Clear, precise, minimal filler (for IT/engineers)

- Supportive Voice – Encouraging, patient, explains rationale (for business users)

- Executive Voice – Efficient, outcome-focused, shows business impact (for decision-makers)

Your RAG system retrieves the customer’s role, and your LLM prompt specifies the matching persona (the persona's voice ID selects how the guidance sounds; the prompt shapes what it says):

"Adopt the technical voice persona. The user is an engineer.
Provide precise steps without excessive explanation.
Use technical terminology."
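The persona choice can live in a small lookup keyed by the retrieved role. In this sketch, role names and voice IDs are placeholders, and each persona carries two things: the ElevenLabs voice ID that controls how guidance sounds, and a style instruction prepended to the LLM prompt that controls what it says. Unknown roles fall back to the supportive persona, an assumption that favors patience when the role isn't known.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class VoicePersona:
    voice_id: str           # placeholder ElevenLabs voice ID
    style_instruction: str  # prepended to the LLM prompt


PERSONAS: Dict[str, VoicePersona] = {
    "technical": VoicePersona(
        "technical_voice_id",
        "Adopt the technical voice persona. Provide precise steps without "
        "excessive explanation. Use technical terminology."),
    "supportive": VoicePersona(
        "supportive_voice_id",
        "Adopt the supportive voice persona. Be encouraging and patient, "
        "and explain why each step matters."),
    "executive": VoicePersona(
        "executive_voice_id",
        "Adopt the executive voice persona. Be brief and focus on outcomes "
        "and business impact."),
}

# Hypothetical role labels as they might come back from the customer profile.
ROLE_TO_PERSONA: Dict[str, str] = {
    "engineer": "technical",
    "it_admin": "technical",
    "marketing_manager": "supportive",
    "business_user": "supportive",
    "executive": "executive",
}


def persona_for(role: str) -> VoicePersona:
    """Map a retrieved role to a persona, defaulting to the supportive voice."""
    return PERSONAS[ROLE_TO_PERSONA.get(role, "supportive")]
```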

Handling Multi-Turn Onboarding Conversations

Onboarding rarely follows a single question-answer pattern. Customers ask follow-up questions, clarify misunderstandings, and need guidance through multiple configuration steps.

Preserve conversation context across turns:

- Store conversation history – Maintain the conversation thread in your session

- Include context in RAG queries – Each new question includes previous exchanges

- Voice continuity – Use the same voice persona throughout the session

- Confirmation checkpoints – Voice guidance includes “Did that work?” checkpoints before moving to the next step

This transforms voice from one-off responses into a guided conversation, increasing completion rates by 18-24% based on enterprise implementations.
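A minimal in-memory sketch of the four practices above. The class and its fields are hypothetical; a production version would persist sessions in your session store. Only the last few turns go into the retrieval query, which keeps follow-ups like "I don't see that menu" grounded without letting old turns dominate retrieval.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class OnboardingSession:
    """Hypothetical per-customer session state for multi-turn voice guidance."""
    customer_id: str
    voice_id: str  # fixed for the whole session to keep the voice consistent
    history: List[Tuple[str, str]] = field(default_factory=list)  # (speaker, text)
    max_turns_in_query: int = 4

    def add_turn(self, speaker: str, text: str) -> None:
        """Store one exchange in the conversation thread."""
        self.history.append((speaker, text))

    def retrieval_query(self, new_question: str) -> str:
        """Fold recent exchanges into the RAG query so follow-ups keep their context."""
        recent = self.history[-self.max_turns_in_query:]
        context = " ".join(f"{speaker}: {text}" for speaker, text in recent)
        return f"{context} customer: {new_question}".strip()

    @staticmethod
    def with_checkpoint(step_text: str) -> str:
        """Append a confirmation checkpoint before moving to the next step."""
        return f"{step_text} Did that work?"
```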

Building Personalized Video Onboarding with HeyGen

Voice guidance handles immediate troubleshooting. Personalized video handles complex, multi-step workflows that benefit from visual demonstration.

When Video Outperforms Voice in Onboarding

Before implementing video generation, establish your decision criteria. Video is superior for:

- Workflows with visual components – Showing where buttons live, how interfaces respond

- Multi-step sequences – More than 7 sequential steps (retention drops with voice-only)

- Configuration verification – Customers need visual confirmation they configured correctly

- Complex integrations – Systems requiring data mapping, field selection, permission scoping

Video is less effective for:

- Quick clarifications – “Where’s the API documentation?” doesn’t need 3 minutes of video

- Troubleshooting – Real-time diagnosis works better with voice

- First-time interactions – Customers unfamiliar with your platform benefit from voice introduction first

Integrating HeyGen for Dynamic Onboarding Videos

Create a HeyGen account and explore its API for video generation.

Your HeyGen integration retrieves onboarding context and generates personalized video content:

Customer Data → Video Script Generation → HeyGen API Call → Personalized Video Delivery

When a customer needs to configure their integration, your system:

- Retrieves customer context through RAG:
  - Integration type (Salesforce, HubSpot, Marketo)
  - Customer’s data schema and required fields
  - Their permission level and data access
  - Completed onboarding steps

- Generates a personalized video script that includes:

"Hi [Customer Name], I'll walk you through setting up your Salesforce integration. Based on your data, we need to map [their specific fields]. Here's exactly how to do that in your account..."

- Calls the HeyGen API with the script. With HeyGen's v2 video generation endpoint (`POST https://api.heygen.com/v2/video/generate`), personalization happens before the call: the customer's name, company, and fields are already filled into the spoken text, and the video's length follows from the script. The avatar and voice IDs below are placeholders:

```json
{
  "video_inputs": [
    {
      "character": {
        "type": "avatar",
        "avatar_id": "onboarding_avatar_v2",
        "avatar_style": "normal"
      },
      "voice": {
        "type": "text",
        "input_text": "Hi John, I'll show you how to map your Salesforce fields for Acme Corp...",
        "voice_id": "onboarding_voice"
      }
    }
  ],
  "dimension": {"width": 1280, "height": 720}
}
```

- Delivers the video – Generation is asynchronous, so wait for rendering to finish, then embed the video directly in onboarding and autoplay it when the customer reaches that step.
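A standard-library sketch of the generation call, assuming HeyGen's v2 `POST /v2/video/generate` endpoint with an `X-Api-Key` header and a response shaped like `{"error": null, "data": {"video_id": "..."}}`; verify both against the current HeyGen API reference. The helper names and the avatar and voice IDs are hypothetical.

```python
import json
import urllib.request
from typing import Optional

HEYGEN_BASE = "https://api.heygen.com"


def build_heygen_video_request(api_key: str, avatar_id: str, voice_id: str,
                               script: str, width: int = 1280,
                               height: int = 720) -> urllib.request.Request:
    """Build (but do not send) a talking-avatar video generation request."""
    payload = {
        "video_inputs": [{
            "character": {"type": "avatar", "avatar_id": avatar_id, "avatar_style": "normal"},
            # The already-personalized script is what the avatar speaks.
            "voice": {"type": "text", "input_text": script, "voice_id": voice_id},
        }],
        "dimension": {"width": width, "height": height},
    }
    return urllib.request.Request(
        url=f"{HEYGEN_BASE}/v2/video/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"X-Api-Key": api_key, "Content-Type": "application/json"},
        method="POST",
    )


def extract_video_id(response_body: bytes) -> Optional[str]:
    """Pull the video ID from the generate response; rendering continues asynchronously."""
    data = json.loads(response_body).get("data") or {}
    return data.get("video_id")
```

Because rendering is asynchronous, store the returned video ID and poll HeyGen's video status endpoint (or use a webhook) before embedding the finished video.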

Orchestrating RAG Context into Video Scripts

The power of multimodal RAG in video generation comes from embedding customer-specific context. Instead of generic “here’s how to set up an integration” videos, you generate videos that reflect each customer’s exact scenario.

Your script generation process:

RAG Retrieval → Context Enrichment → Script Templates → Dynamic Personalization → HeyGen Generation

For a Salesforce integration:

RAG retrieves:

– Customer’s Salesforce edition (Enterprise, Professional)

– Their required field mappings (retrieved from setup wizard)

– Data sync frequency preference

– Previous integration errors (if applicable)

Prompt enriches:

"Create a personalized onboarding video script for integrating Salesforce Enterprise Edition. The customer needs to map these fields: [list]. They're syncing daily. They previously had issues with [specific field]. Show them step-by-step how to configure this in their Salesforce account."

Script templates inject personalization:

"Hi [Name], I see you're using Salesforce Enterprise. You'll need to map [their fields]. Here's the fastest path to do that..."

HeyGen generates a video with:

– AI avatar speaking directly to the customer

– Visual walkthrough of their specific field configuration

– Personalized guidance based on their schema

– Clear verification checkpoints
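A sketch of the template step using Python's `string.Template`, assuming the RAG step returns a dict with the keys shown (hypothetical names). Substituting only retrieved values into a fixed, reviewed template keeps the avatar's wording predictable; the LLM-enriched prompt is the alternative when you want more variation.

```python
from string import Template
from typing import Dict, List

# ${sync_note} needs braces because a letter follows it directly.
SCRIPT_TEMPLATE = Template(
    "Hi $name, I see you're using Salesforce $edition. "
    "You'll need to map $field_list. "
    "${sync_note}Here's the fastest path to do that..."
)


def join_fields(fields: List[str]) -> str:
    """Render ['A', 'B', 'C'] as 'A, B, and C' so the avatar reads a natural list."""
    if len(fields) <= 1:
        return "".join(fields)
    if len(fields) == 2:
        return f"{fields[0]} and {fields[1]}"
    return ", ".join(fields[:-1]) + f", and {fields[-1]}"


def render_script(ctx: Dict) -> str:
    """Fill the template with values retrieved for one customer."""
    note = f"You're syncing {ctx['sync_frequency']}. "
    if ctx.get("previous_error_field"):
        note += f"Last time, {ctx['previous_error_field']} caused trouble, so we'll check it first. "
    return SCRIPT_TEMPLATE.substitute(
        name=ctx["name"],
        edition=ctx["edition"],
        field_list=join_fields(ctx["fields"]),
        sync_note=note,
    )
```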

Measuring Video Onboarding Performance

Personalized video effectiveness hinges on tracking specific metrics:

Engagement metrics:

– Play-through rate (what percentage watch to completion)

– Rewind points (where customers go back to re-watch)

– Skip patterns (which sections people skip)

Outcome metrics:

– Time to completion (how long onboarding takes with video)

– Feature adoption (do video viewers activate features faster)

– Support tickets (do video-guided customers require less support)

Target benchmarks from multimodal RAG implementations:

– 78%+ play-through rate for personalized videos (vs. 45% for generic)

– 31% faster configuration completion time

– 19% reduction in configuration-related support tickets
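The engagement metrics can be computed from player events. This sketch assumes a hypothetical per-view record (furthest position reached, rewind targets, skips) and treats reaching 95% of the video as "watched to completion", an assumed cutoff so that customers who close the video during the outro don't count as drop-offs. Outcome metrics need cohort comparisons against customers who didn't get video and are not shown.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class ViewSession:
    """One customer's viewing of one video (hypothetical event schema)."""
    duration_s: float
    max_position_s: float                                  # furthest point reached
    rewinds_to: List[float] = field(default_factory=list)  # positions rewound to
    skips: List[Tuple[float, float]] = field(default_factory=list)  # (from, to) seconds


def play_through_rate(sessions: List[ViewSession], threshold: float = 0.95) -> float:
    """Share of sessions that reached at least `threshold` of the video's length."""
    if not sessions:
        return 0.0
    finished = sum(1 for s in sessions if s.max_position_s >= threshold * s.duration_s)
    return finished / len(sessions)


def hotspots(positions: List[float], bucket_s: int = 15, top: int = 3) -> List[Tuple[int, int]]:
    """Bucket timestamps (rewind targets or skip starts) and return the busiest buckets."""
    counts = Counter(int(p // bucket_s) * bucket_s for p in positions)
    return counts.most_common(top)
```

Rewind hotspots come from `hotspots([p for s in sessions for p in s.rewinds_to])`, and skipped sections from the skip start times in the same way.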

Orchestrating Voice and Video into Adaptive Flows

Both components work independently. They become powerful when orchestrated—when your system decides dynamically whether voice, video, or both serves each customer’s situation.

Decision Framework: When to Trigger Voice vs. Video

Your onboarding system needs intelligent routing logic:

Trigger Voice Guidance when:

– Customer is stuck on a specific step (voice: immediate, conversational)

– They ask a clarification question (voice: quick response, minimal wait)

– They’ve completed a configuration (voice: confirmation and next steps)

– They request live help (voice: can hand off to a live support agent)

Trigger Video Generation when:

– Customer reaches a complex multi-step workflow (video: visual demonstration)

– They’ve bypassed voice guidance 3+ times (video: different modality may help)

– Configuration has visual decision points (video: shows exact interface state)

– First-time interaction with a critical workflow (video: comprehensive introduction)

Trigger Combined Voice + Video when:

– Critical security configuration (voice: reassurance, video: verification)

– Complex data mapping with many fields (voice: explanation, video: visual walkthrough)

– Customer learning style unknown (voice first for assessment, video if needed)
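The trigger lists above can be expressed as a rule function. This sketch assumes the RAG step exposes signals such as step count and voice-bypass count (hypothetical field names); the 7-step cutoff comes from the video criteria earlier, while the 10-field threshold for "many fields" is an assumption. Rules are checked in priority order, combined first, so a security-critical step always gets both modalities.

```python
from dataclasses import dataclass
from enum import Enum


class Modality(Enum):
    VOICE = "voice"
    VIDEO = "video"
    BOTH = "voice+video"


@dataclass
class Situation:
    """Signals assumed to be available from RAG context retrieval (hypothetical fields)."""
    step_count: int = 1
    voice_bypasses: int = 0            # times the customer skipped voice guidance
    visual_decision_points: bool = False
    first_time_critical: bool = False  # first encounter with a critical workflow
    security_critical: bool = False
    field_mappings: int = 0


MANY_FIELDS = 10     # assumption: what counts as "many fields"
MAX_VOICE_STEPS = 7  # beyond this, voice-only retention drops


def choose_modality(s: Situation) -> Modality:
    """Apply the trigger rules in priority order: combined, then video, then voice."""
    if s.security_critical or s.field_mappings >= MANY_FIELDS:
        return Modality.BOTH
    if (s.step_count > MAX_VOICE_STEPS or s.voice_bypasses >= 3
            or s.visual_decision_points or s.first_time_critical):
        return Modality.VIDEO
    # Default: being stuck, clarifications, confirmations, live-help requests,
    # and unknown learning styles all start with voice.
    return Modality.VOICE
```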

Building the Orchestration Logic

Implement routing using your RAG system’s context retrieval:

Customer Interaction → RAG Context Analysis → Route Decision → Voice/Video/Both → Delivery

Your routing logic evaluates:

- Customer Profile – Role, experience level, learning preference

- Interaction Type – Question, stuck at step, requesting verification

- Workflow Complexity – Simple (voice), complex (video), critical (both)

- Previous Attempts – How many times have they failed at this step

- Time Constraint – Whether they can wait for a video to render or need an immediate response

Example routing:

```python
def route(customer, workflow, interaction):
    """Pick a delivery mode from retrieved context; the caller dispatches on the label."""
    if customer.role == "admin" and workflow.complexity == "high":
        return "video"  # Admins prefer visual verification
    elif customer.stuck_attempts >= 3:
        return "voice_and_video"  # Multiple failures warrant both modalities
    elif interaction.type == "clarification":
        return "voice"  # Quick response
    else:
        return "voice_with_video_option"  # Default: voice available, video optional
```

Real-time Adaptation Based on Engagement

Multimodal onboarding systems improve when they adapt in real-time. Track what’s working:

- If voice guidance resolves 90%+ of questions – Continue voice-first routing

- If video completion rate drops below 45% – Shorten videos or trigger voice alternative

- If customers skip video in first 30 seconds – Switch to voice for this workflow

- If voice + video combination shows highest adoption – Prioritize combined delivery for similar use cases

This adaptation builds on the context your RAG system already retrieves: record which modalities work best for each workflow and customer segment alongside that context, and let the routing logic adjust automatically.
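A minimal sketch of these rules as a per-workflow adjustment, assuming you aggregate engagement stats per workflow. The field names are hypothetical; the 90% and 45% thresholds come from the rules above, while the 50% early-skip threshold is an assumption because the rule gives no number. The check order is a design choice: evidence that the combined mode drives the best adoption wins first, then strong voice resolution, then signals that video is underperforming.

```python
from dataclasses import dataclass


@dataclass
class WorkflowStats:
    """Rolling engagement stats for one workflow (hypothetical aggregation)."""
    voice_resolution_rate: float  # share of voice sessions resolved without escalation
    video_completion_rate: float  # share of videos watched to the end
    early_skip_rate: float        # share of videos skipped in the first 30 seconds
    combined_adoption: float      # feature adoption after voice + video
    best_single_adoption: float   # best adoption after voice-only or video-only


def adapt_routing(current: str, stats: WorkflowStats) -> str:
    """Return the default modality for a workflow given its latest stats."""
    if stats.combined_adoption > stats.best_single_adoption:
        return "voice+video"
    if stats.voice_resolution_rate >= 0.90:
        return "voice"  # voice-first is working; keep it
    if stats.early_skip_rate > 0.5 or stats.video_completion_rate < 0.45:
        return "voice"  # video isn't landing; fall back (or shorten the video)
    return current
```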

Deployment, Cost Optimization, and Scaling Considerations

Cost Analysis: Voice vs. Video Generation

Implementing multimodal onboarding has clear cost implications. Understanding them prevents budget surprises:

ElevenLabs Voice Synthesis Costs:

– Streaming API: ~$0.15-0.25 per minute of audio generated

– Per-customer onboarding: ~$0.50-1.50 per complete flow (assuming 4-6 minute guidance)

– Annual cost per 10,000 onboarded customers: $5,000-15,000

HeyGen Video Generation Costs:

– Video generation: ~$2-5 per video minute generated

– Per-customer onboarding: ~$6-25 per personalized video (assuming 3-5 minute video)

– Annual cost per 10,000 onboarded customers: $60,000-250,000

ROI Calculation:

– Faster onboarding saves support costs: ~$100-300 per customer (support tickets prevented)

– Increased feature adoption drives expansion revenue: ~$500-2,000 per customer in Year 1

– Reduced churn from improved experience: ~$1,000-5,000 per customer lifetime value increase

For most B2B SaaS companies, the ROI is strongly positive. Even at the top of the cost ranges above, generating voice and video for one customer costs well under the $100+ per customer in support tickets that faster onboarding prevents, before counting expansion revenue or reduced churn.
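To sanity-check these figures, the sketch below multiplies out the per-minute rates and durations quoted above and compares the result with the low end of the support-savings estimate. It assumes every customer receives both a voice flow and a personalized video, which overstates cost when video is reserved for complex workflows.

```python
from typing import Tuple

Range = Tuple[float, float]


def scale(r: Range, factor: Range) -> Range:
    """Multiply a (low, high) range by another (low, high) range."""
    return (r[0] * factor[0], r[1] * factor[1])


# Estimates from the cost breakdown above (USD).
VOICE_PER_MIN: Range = (0.15, 0.25)
VOICE_MINUTES: Range = (4, 6)
VIDEO_PER_MIN: Range = (2, 5)
VIDEO_MINUTES: Range = (3, 5)
SUPPORT_SAVINGS: Range = (100, 300)

voice_per_customer = scale(VOICE_PER_MIN, VOICE_MINUTES)
video_per_customer = scale(VIDEO_PER_MIN, VIDEO_MINUTES)
total_per_customer = (voice_per_customer[0] + video_per_customer[0],
                      voice_per_customer[1] + video_per_customer[1])

for label, (lo, hi) in [("voice", voice_per_customer),
                        ("video", video_per_customer),
                        ("total", total_per_customer)]:
    print(f"{label}: ${lo:.2f}-{hi:.2f} per customer, "
          f"${lo * 10_000:,.0f}-{hi * 10_000:,.0f} per 10,000 customers")

# Worst case: highest generation cost against the lowest support-savings estimate.
worst_case_net = SUPPORT_SAVINGS[0] - total_per_customer[1]
print(f"worst-case net support saving per customer: ${worst_case_net:.2f}")
```

Fixed engineering and integration costs are not included; they are what determine how long the investment takes to pay back.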

Optimization: Reducing Video Generation Costs

Personalized video is powerful but expensive. Reduce costs through smart caching and templating:

- Cache similar workflows – If two customers have similar configurations, reuse the video

- Generate video only for high-value workflows – Use voice for simple configurations, video for complex ones

- Batch generate videos – Schedule video generation during off-peak hours

- Use video templates – Generate 80% of video once, personalize the 20% that changes

This optimization typically reduces video costs by 40-60% while maintaining personalization impact.
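One way to combine "cache similar workflows" with "use video templates" is to key rendered videos on the details the video actually shows (workflow, persona, and configuration) and leave customer-specific greetings out of the key, so only a short personalized intro needs rendering per customer. In this sketch, `render` stands in for a hypothetical function that calls your video API and returns a video ID, and the in-memory dict stands in for a persistent store.

```python
import hashlib
import json
from typing import Callable, Dict


def video_cache_key(workflow: str, persona: str, config: Dict) -> str:
    """Key a video by workflow, persona, and the configuration details it shows.

    Customer-specific details (such as a greeting by name) are deliberately
    excluded so customers with equivalent setups share one rendered video.
    """
    canonical = json.dumps({"workflow": workflow, "persona": persona, "config": config},
                           sort_keys=True)  # key order in config doesn't change the hash
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class VideoCache:
    """In-memory stand-in for a persistent store of rendered video IDs."""

    def __init__(self, render: Callable[[str], str]):
        self._render = render  # hypothetical: script -> video ID
        self._store: Dict[str, str] = {}
        self.renders = 0

    def get_or_render(self, key: str, script: str) -> str:
        """Return a cached video ID, rendering only on a cache miss."""
        if key not in self._store:
            self._store[key] = self._render(script)
            self.renders += 1
        return self._store[key]
```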

Scaling Multimodal Onboarding

As you scale from hundreds to thousands of onboarded customers, the architecture adapts:

Small scale (< 500 customers/month):

– Generate videos on-demand

– Voice-first routing with optional video

– Manual content updates

Medium scale (500-5,000 customers/month):

– Batch video generation (daily/weekly)

– Predictive video pre-generation based on signup attributes

– Automated content updates from knowledge base

Enterprise scale (5,000+ customers/month):

– Tiered video generation (premium customers: all video, standard: voice)

– Intelligent caching by workflow and persona

– Real-time RAG system monitoring for knowledge base changes

– A/B testing voice personas, video styles, and routing logic
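The tiers above can be encoded as a small planning function so routing code can ask whether personalized video is available for a given customer. The thresholds come from the tier boundaries above (treating exactly 5,000 customers/month as enterprise scale); the plan names and `customer_tier` values are hypothetical.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class ScalePlan:
    video_generation: str  # "on_demand", "batch", or "tiered"
    practices: Tuple[str, ...]


def plan_for_volume(customers_per_month: int) -> ScalePlan:
    """Map monthly onboarding volume to the scaling tier described above."""
    if customers_per_month < 500:
        return ScalePlan("on_demand", ("voice-first routing with optional video",
                                       "manual content updates"))
    if customers_per_month < 5000:
        return ScalePlan("batch", ("predictive pre-generation from signup attributes",
                                   "automated content updates from the knowledge base"))
    return ScalePlan("tiered", ("cache by workflow and persona",
                                "monitor knowledge base changes",
                                "A/B test personas, video styles, and routing"))


def video_allowed(plan: ScalePlan, customer_tier: str) -> bool:
    """At enterprise scale, reserve personalized video for premium customers."""
    return plan.video_generation != "tiered" or customer_tier == "premium"
```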

At enterprise scale, the system becomes self-improving: engagement data and A/B test results show which onboarding paths work for which customer segments, and routing adjusts accordingly.

Building adaptive multimodal onboarding represents a significant investment in infrastructure and integration complexity. But the payoff (faster customer time-to-value, higher feature adoption, reduced support burden) justifies the effort for companies with meaningful onboarding friction. Start with voice guidance integrated with ElevenLabs, then add video personalization as your confidence in the system grows and volume justifies the investment. The orchestration logic you build early scales cleanly as you add video.