The promise of Retrieval-Augmented Generation (RAG) is straightforward: answers grounded in your enterprise data, free from the creative confabulations of raw LLMs. Yet across countless proof-of-concepts, a frustrating pattern keeps showing up. The system performs well on simple, predictable queries during the demo, then falls apart under the nuanced, high-stakes questions real users ask in production.

The most dangerous failures aren’t complete nonsense. They’re subtle distortions: confident assertions built on a misinterpreted sliver of context, or critical omissions masked by fluent prose. These are the hallucinations that slip past cursory review, eroding user trust and making RAG feel like a liability rather than a solution.

This persistent gap between controlled testing and real-world reliability comes down to a fundamental architectural oversight. Most RAG pipelines are built as passive retrieval engines, tuned to find the “most similar” text but not to understand the intent and constraints behind a real question. The fix isn’t chasing higher recall scores or more powerful embeddings. It’s building active validation directly into the retrieval flow.

By moving beyond similarity search to a system of layered checkpoints that interrogates both the query and the proposed evidence, you can build RAG that doesn’t just find information. It reasons about it. What follows covers the core architectural flaw driving production hallucinations and a practical, deterministic framework for eliminating them.

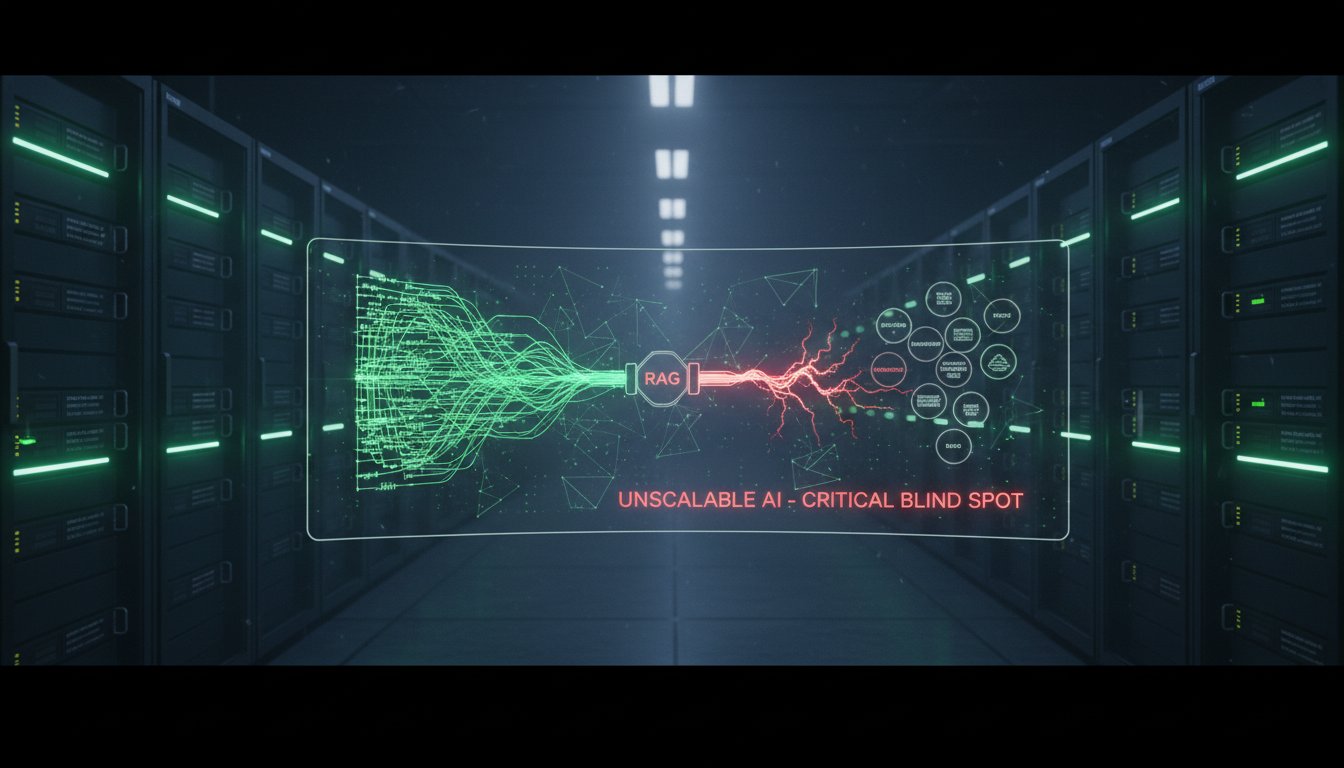

The Core Architectural Flaw: Passive Retrieval

Most enterprise RAG systems rest on a deceptively simple premise: translate a user’s question into a vector, find the most similar chunks of text in a database, and hand those chunks to an LLM for synthesis. This pipeline treats retrieval as a one-way street, a fetch operation. There’s no mechanism to ask, “Does this retrieved information actually answer the question?” or “Is this evidence complete and consistent?” That passivity is the root cause of hallucinations that persist even when vector similarity scores look great.

The Similarity Trap

The core metric driving most RAG systems, cosine similarity between query and chunk vectors, measures semantic relatedness, not answerability. A document chunk can be highly related to the query’s topic while being entirely irrelevant to its specific intent.

Take a query like “What is the escalation protocol for a Severity-1 data breach?” The system might retrieve a chunk that defines a Severity-1 incident and scores a cosine similarity of 0.95. The LLM, conditioned to use whatever context it’s given, will confidently summarize the definition and then hallucinate an escalation protocol, because no protocol was in the retrieved evidence. The system retrieved something, just not the right thing, and had no checkpoint to catch the mismatch.
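To make the trap concrete, here is a minimal sketch that scores two chunks against the escalation query. It assumes a toy bag-of-words vector in place of a real embedding model, and both chunk texts are invented for illustration. The point is only the ordering: the definition chunk shares more vocabulary with the query than the chunk that actually contains a procedure.

```python
import math
import re
from collections import Counter


def bow(text: str) -> Counter:
    """Toy bag-of-words vector; a stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9-]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[term] * b[term] for term in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


query = "What is the escalation protocol for a Severity-1 data breach?"

# Hypothetical chunks, invented for this example.
definition_chunk = "A Severity-1 data breach is a breach that exposes regulated customer data."
procedure_chunk = "Notify the on-call security lead, then page the incident commander."

sim_definition = cosine(bow(query), bow(definition_chunk))
sim_procedure = cosine(bow(query), bow(procedure_chunk))

# The on-topic definition outranks the chunk that holds the actual steps.
print(f"definition: {sim_definition:.2f}, procedure: {sim_procedure:.2f}")
```

Dense embeddings behave less crudely than word overlap, but the failure mode is the same in kind: the score rewards topical closeness, not whether the chunk contains the requested answer.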

The Fragmentation Problem

Chunking strategies, necessary as they are for manageability, often sever critical logical connections. A key piece of information needed to fully answer a question may sit in a separate chunk that falls just below the similarity threshold. The LLM, working with an incomplete picture, fills in the gaps, producing an answer that’s partially correct but ultimately flawed or misleading.

A passive system retrieves the top-K chunks and assumes that’s enough. An active system has to verify completeness before moving forward.

The Solution: Active Retrieval with Deterministic Checkpoints

Building reliable RAG means transforming the pipeline from a passive fetcher into an active investigator. That requires embedding deterministic checkpoints (discrete, rule-based validation steps) that interrogate the relationship between the query and the candidate evidence before synthesis happens. These checkpoints act as circuit breakers, stopping incomplete or irrelevant context from ever reaching the final answer generation step.

Checkpoint 1: Intent-to-Context Alignment

The first validation happens immediately after initial retrieval. Before passing chunks to the LLM for answer synthesis, a lightweight, fast model (or a rule-based classifier) analyzes the query and the retrieved set. Its job is to answer one binary question: Does this retrieved context contain the specific information needed to fulfill the user’s explicit intent?

This isn’t another similarity score. It’s a task-oriented evaluation. For the escalation protocol query, the checkpoint parses the query for key required entities: “escalation protocol,” “Severity-1,” “data breach.” It then scans the retrieved chunks for the presence of a procedure or steps related to those entities. If the chunks only contain definitions or background, the checkpoint triggers a corrective action, typically a query reformulation or a secondary targeted search, instead of proceeding to synthesis with flawed evidence.
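A rule-based version of this checkpoint could look roughly like the sketch below. Everything in it is an assumption made for illustration: the `AlignmentResult` type, the `check_alignment` function, and the `PROCEDURE_CUES` list are hypothetical, and a production system would likely use a richer entity extractor and a more careful test for procedural content.

```python
from dataclasses import dataclass

# Hypothetical cue phrases suggesting that a chunk describes steps to follow.
PROCEDURE_CUES = ("step", "first", "then", "notify", "escalate to")


@dataclass
class AlignmentResult:
    passed: bool
    reason: str


def check_alignment(
    required_entities: list[str], needs_procedure: bool, chunks: list[str]
) -> AlignmentResult:
    """Pass only if the evidence mentions every entity and, when the
    query asks for a procedure, contains at least one procedural cue."""
    text = " ".join(chunks).lower()
    missing = [e for e in required_entities if e.lower() not in text]
    if missing:
        return AlignmentResult(False, f"missing entities: {missing}")
    if needs_procedure and not any(cue in text for cue in PROCEDURE_CUES):
        return AlignmentResult(False, "no procedural content found")
    return AlignmentResult(True, "ok")


entities = ["Severity-1", "data breach"]

# Invented chunks: one definition-only, one containing actual steps.
definition_only = ["A Severity-1 data breach is any breach exposing regulated customer data."]
with_steps = [
    "For a Severity-1 data breach, first notify the on-call security lead, "
    "then escalate to the incident commander."
]

blocked = check_alignment(entities, needs_procedure=True, chunks=definition_only)
cleared = check_alignment(entities, needs_procedure=True, chunks=with_steps)
print(blocked)
print(cleared)
```

The definition-only evidence is on topic, so a similarity threshold would accept it; the checkpoint rejects it because the answer type the query asks for is absent. The `reason` string is what a failure handler would use to pick a corrective action.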

As an AI Infrastructure Lead from a major cloud provider put it: “The teams seeing sub-2% hallucination rates are those who’ve stopped trusting cosine similarity alone. They’ve added an intent-matching layer that acts as a gatekeeper before the generative LLM even gets involved.”

Checkpoint 2: Evidence Consistency and Conflict Resolution

Real enterprise knowledge bases are living documents. Policies get updated, product specifications change, and contradictory information can exist across different versions or departments. A passive RAG system will happily retrieve conflicting chunks, leaving the LLM to arbitrarily choose or blend contradictions, producing a hallucinated “average” of the truth.

An active system uses a second checkpoint for evidence consistency. When multiple chunks are retrieved, this step runs a cross-comparison. Are there direct factual conflicts, like “maximum file size is 5GB” in one chunk and “maximum file size is 10GB” in another? Are there temporal contradictions?

If conflicts are detected, the checkpoint doesn’t pass the messy bundle to the LLM. Instead, it can implement a resolution strategy: trigger a search for clarifying metadata like document date, apply a pre-defined rule such as “most recent wins,” or route the query to a human-in-the-loop escalation path with the conflict clearly flagged. This turns a source of error into a mechanism for data quality discovery.
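As a rough sketch of the cross-comparison and the “most recent wins” rule, the example below extracts simple “maximum X is N” claims with a regular expression and groups them by attribute. The `Chunk` type, its `updated` field, the claim pattern, and the dates are all assumptions for illustration; real documents would need a far more tolerant extractor.

```python
import re
from dataclasses import dataclass
from datetime import date


@dataclass
class Chunk:
    text: str
    updated: date  # assumed document metadata


# Matches claims shaped like "maximum file size is 5GB" (illustrative only).
CLAIM = re.compile(r"(maximum [a-z ]+?) is (\d+(?:\.\d+)?\s?[A-Za-z]+)", re.IGNORECASE)


def extract_claims(chunk: Chunk) -> dict[str, str]:
    """Map attribute name -> normalized value for each claim in the chunk."""
    return {
        m.group(1).lower(): m.group(2).replace(" ", "").upper()
        for m in CLAIM.finditer(chunk.text)
    }


def find_conflicts(chunks: list[Chunk]) -> dict[str, list[tuple[str, Chunk]]]:
    """Return attributes for which the chunks assert different values."""
    seen: dict[str, list[tuple[str, Chunk]]] = {}
    for chunk in chunks:
        for attribute, value in extract_claims(chunk).items():
            seen.setdefault(attribute, []).append((value, chunk))
    return {a: vs for a, vs in seen.items() if len({v for v, _ in vs}) > 1}


def most_recent_wins(claims: list[tuple[str, Chunk]]) -> str:
    """Resolution rule: keep the value from the most recently updated chunk."""
    return max(claims, key=lambda pair: pair[1].updated)[0]


# Invented chunks and dates.
chunks = [
    Chunk("The maximum file size is 5GB.", date(2022, 3, 1)),
    Chunk("Maximum file size is 10GB for all plans.", date(2024, 6, 15)),
]
conflicts = find_conflicts(chunks)
resolved = {attr: most_recent_wins(claims) for attr, claims in conflicts.items()}
print(resolved)
```

When no date metadata is available, the same `conflicts` dictionary can be handed to a human reviewer instead of being resolved automatically.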

Checkpoint 3: Completeness Verification

The final pre-synthesis checkpoint asks: Is the evidence sufficient to construct a complete answer? This goes beyond keyword presence to assess whether the logical structure of an answer is actually supported by the retrieved context.

For procedural queries (“How do I…?”), it checks for sequential steps. For comparative queries (“What’s the difference between X and Y?”), it verifies that attributes for both X and Y are present. For conditional queries (“What happens if…?”), it looks for if-then logic.

If the completeness check fails, the system can reformulate the query or kick off a recursive retrieval process, explicitly searching for the missing components. This breaks the cycle of the LLM inventing the missing steps or conditions. A benchmark study of production RAG systems found that teams implementing a completeness verification layer reduced user-reported “incomplete answer” tickets by over 60%, with a direct, measurable drop in hallucination-based errors.
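The per-intent checks described above can be approximated with simple heuristics. The sketch below is one possible shape, not a reference implementation: the function names, the intent labels, and the cue words are assumptions, and the regular expressions will miss many real phrasings.

```python
import re


def has_sequential_steps(context: str) -> bool:
    """Procedural: look for at least two numbered items or ordering words."""
    numbered = re.findall(r"^\s*\d+[.)]\s", context, re.MULTILINE)
    ordinals = re.findall(
        r"\b(first|second|third|then|next|finally)\b", context, re.IGNORECASE
    )
    return len(numbered) >= 2 or len(ordinals) >= 2


def covers_both(context: str, x: str, y: str) -> bool:
    """Comparative: both items being compared must appear in the evidence."""
    lowered = context.lower()
    return x.lower() in lowered and y.lower() in lowered


def has_conditional(context: str) -> bool:
    """Conditional: look for a condition word followed by a consequence word."""
    pattern = r"\b(if|when|unless)\b.*\b(then|will|must)\b"
    return re.search(pattern, context, re.IGNORECASE | re.DOTALL) is not None


def check_completeness(intent: str, context: str, entities: tuple[str, ...] = ()) -> bool:
    """Dispatch on the intent label assigned earlier in the pipeline."""
    if intent == "procedural":
        return has_sequential_steps(context)
    if intent == "comparative":
        return len(entities) == 2 and covers_both(context, entities[0], entities[1])
    if intent == "conditional":
        return has_conditional(context)
    return bool(context.strip())  # factual lookup: any non-empty evidence


print(check_completeness("procedural", "1. Open settings.\n2. Click reset."))
print(check_completeness("comparative", "Plan A includes SSO.", ("Plan A", "Plan B")))
```

A failed comparative check also says what is missing (here, anything about “Plan B”), which gives the recursive retrieval step a concrete target to search for.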

Implementing the Framework: A Practical Architecture

Building this active validation framework doesn’t mean scrapping your existing stack. It’s an architectural pattern that layers on top of your core retrieval components.

Step 1: Augment Your Query Router. Before the vector search, implement a query classifier that categorizes intent, such as factual lookup, procedural, comparative, or conditional. This intent label travels through the pipeline and informs the validation logic at each checkpoint.
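A first version of the intent classifier can be a handful of ordered patterns. The sketch below assumes the four labels named above; the `INTENT_RULES` table and the trigger phrases are illustrative guesses, and a small trained classifier could replace them behind the same function signature.

```python
import re

# Ordered rules: the first matching pattern decides the label (assumed phrases).
INTENT_RULES = [
    ("comparative", re.compile(r"\b(difference between|compare|versus)\b", re.IGNORECASE)),
    ("conditional", re.compile(r"\b(what happens if|what if)\b", re.IGNORECASE)),
    ("procedural", re.compile(r"\b(how do i|how to|steps to|protocol|procedure)\b", re.IGNORECASE)),
]


def classify_intent(query: str) -> str:
    """Return one of: comparative, conditional, procedural, factual."""
    for label, pattern in INTENT_RULES:
        if pattern.search(query):
            return label
    return "factual"  # default when no rule fires


for q in [
    "How do I reset my password?",
    "What is the difference between Plan A and Plan B?",
    "What happens if a payment fails?",
    "What is the maximum file size?",
]:
    print(f"{classify_intent(q):12s} {q}")
```

Because the label is a plain string, it can be attached to the request and read by each later checkpoint without any shared state.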

Step 2: Implement the Checkpoint Microservices. Each checkpoint (Alignment, Consistency, Completeness) should be a discrete, independently callable service. This keeps things modular, allows for different implementations like rules, small ML models, or heuristics, and makes precise monitoring and tuning possible. The Consistency Checkpoint might rely primarily on rule-based pattern matching, while the Completeness Verifier could use a fine-tuned small model.

Step 3: Design the Control Flow. The pipeline logic becomes a directed graph. Primary retrieval runs first, then the evidence set flows through Checkpoint 1 (Alignment). A pass sends it to Checkpoint 2 (Consistency). Another pass sends it to Checkpoint 3 (Completeness). A fail at any point diverts the flow to a dedicated failure handler module, which decides on the corrective action: query reformulation, hybrid search with added keyword search, metadata-aware re-retrieval, or human escalation. Only evidence that clears all three checkpoints reaches the final answer synthesis LLM.
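The control flow in Step 3 can be sketched as a short loop over named checkpoints. All names here (`CheckResult`, `Outcome`, `run_pipeline`, `CORRECTIVE_ACTIONS`) are hypothetical, the mapping from failed checkpoint to corrective action is an assumption, and the checkpoints are stubs. A fuller version would loop back into retrieval after the corrective action instead of returning.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class CheckResult:
    passed: bool
    reason: str = ""


@dataclass
class Outcome:
    answer: Optional[str]
    failed_checkpoint: Optional[str] = None
    corrective_action: Optional[str] = None


# Assumed policy: which corrective action follows which failure.
CORRECTIVE_ACTIONS = {
    "alignment": "reformulate_query",
    "consistency": "metadata_aware_re_retrieval",
    "completeness": "recursive_retrieval",
}

Checkpoint = Callable[[str, list[str]], CheckResult]


def run_pipeline(
    query: str,
    retrieve: Callable[[str], list[str]],
    checkpoints: list[tuple[str, Checkpoint]],
    synthesize: Callable[[str, list[str]], str],
) -> Outcome:
    """Run checkpoints in order; synthesize only if every one passes."""
    evidence = retrieve(query)
    for name, check in checkpoints:
        result = check(query, evidence)
        if not result.passed:
            action = CORRECTIVE_ACTIONS.get(name, "human_escalation")
            return Outcome(None, failed_checkpoint=name, corrective_action=action)
    return Outcome(synthesize(query, evidence))


# Stub checkpoints and stub retrieval/synthesis for demonstration only.
def alignment_stub(query: str, evidence: list[str]) -> CheckResult:
    has_steps = any("then" in chunk.lower() for chunk in evidence)
    return CheckResult(has_steps, "" if has_steps else "no procedural content")


def always_pass(query: str, evidence: list[str]) -> CheckResult:
    return CheckResult(True)


checkpoints = [
    ("alignment", alignment_stub),
    ("consistency", always_pass),
    ("completeness", always_pass),
]


def synthesize(query: str, evidence: list[str]) -> str:
    return f"synthesized from {len(evidence)} chunk(s)"


query = "What is the escalation protocol for a Severity-1 data breach?"
blocked = run_pipeline(
    query, lambda q: ["A Severity-1 incident is a confirmed exposure of regulated data."],
    checkpoints, synthesize,
)
cleared = run_pipeline(
    query, lambda q: ["Notify the security lead, then page the incident commander."],
    checkpoints, synthesize,
)
print(blocked)
print(cleared)
```

Keeping the checkpoints as plain callables in an ordered list means each one can be swapped for a remote service call without changing the loop.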

Step 4: Instrument for Observability. Every checkpoint decision (pass or fail, plus the reason for any failure) needs to be logged and surfaced in your observability dashboard. This creates a goldmine of data. You’re no longer just measuring retrieval scores. You’re measuring things like “percentage of queries failing alignment due to missing entities” or “frequency of policy conflicts detected.” That data drives continuous improvement of both your RAG system and your underlying knowledge base.
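One lightweight way to capture these decisions is a structured log record per checkpoint call, using Python's standard `logging` and `json` modules. The record fields, the logger name, and the `failure_rates` helper below are assumptions for illustration; in practice the records would be shipped to whatever observability backend you already run.

```python
import json
import logging
from collections import Counter

logger = logging.getLogger("rag.checkpoints")  # hypothetical logger name


def log_decision(query_id: str, checkpoint: str, passed: bool, reason: str = "") -> dict:
    """Emit one structured record per checkpoint decision and return it."""
    record = {
        "query_id": query_id,
        "checkpoint": checkpoint,
        "passed": passed,
        "reason": reason,
    }
    logger.info(json.dumps(record))
    return record


def failure_rates(records: list[dict]) -> dict[str, float]:
    """Fraction of decisions that failed, per checkpoint."""
    total = Counter(r["checkpoint"] for r in records)
    failed = Counter(r["checkpoint"] for r in records if not r["passed"])
    return {checkpoint: failed[checkpoint] / total[checkpoint] for checkpoint in total}


# Invented sample decisions.
records = [
    log_decision("q1", "alignment", False, "missing entities"),
    log_decision("q2", "alignment", True),
    log_decision("q2", "consistency", True),
    log_decision("q3", "alignment", True),
    log_decision("q3", "consistency", False, "policy conflict"),
]
rates = failure_rates(records)
print(rates)
```

Grouping the same records by `reason` instead of `checkpoint` yields the kind of metric mentioned above, such as the share of alignment failures caused by missing entities.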

The Tangible Outcome: From Liability to Trusted System

Adopting an active validation architecture fundamentally changes the relationship between your RAG system and its users. Hallucinations don’t just become rarer. When the system lacks confidence, it says so transparently or asks for clarification rather than pretending. That builds trust in a way that fluent-but-wrong answers never can.

The operational data from the checkpoints also gives you visibility into the gaps and contradictions in your enterprise knowledge that you’d never see otherwise. Your RAG implementation becomes a driver for data quality improvement, not just a Q&A interface.

And practically speaking, by preventing flawed context from reaching the expensive, powerful synthesis LLM, you cut wasted inference costs and reduce latency on queries that were always going to produce poor results anyway.

The goal of enterprise RAG isn’t to be clever. It’s to be correct. That path to correctness doesn’t run through hoping the LLM will be careful with whatever context it receives. It runs through building a system that’s meticulous about the context it provides in the first place.

By implementing deterministic validation checkpoints that actively interrogate the bridge between question and evidence, you move beyond the fragile promise of RAG and build something engineered for reliable, trustworthy accuracy when it matters most.

Start by auditing a sample of your production query logs. For each answer flagged as problematic, ask whether the root cause was a failure of intent alignment, evidence consistency, or completeness. That diagnosis will map directly to the first checkpoint you need to build.