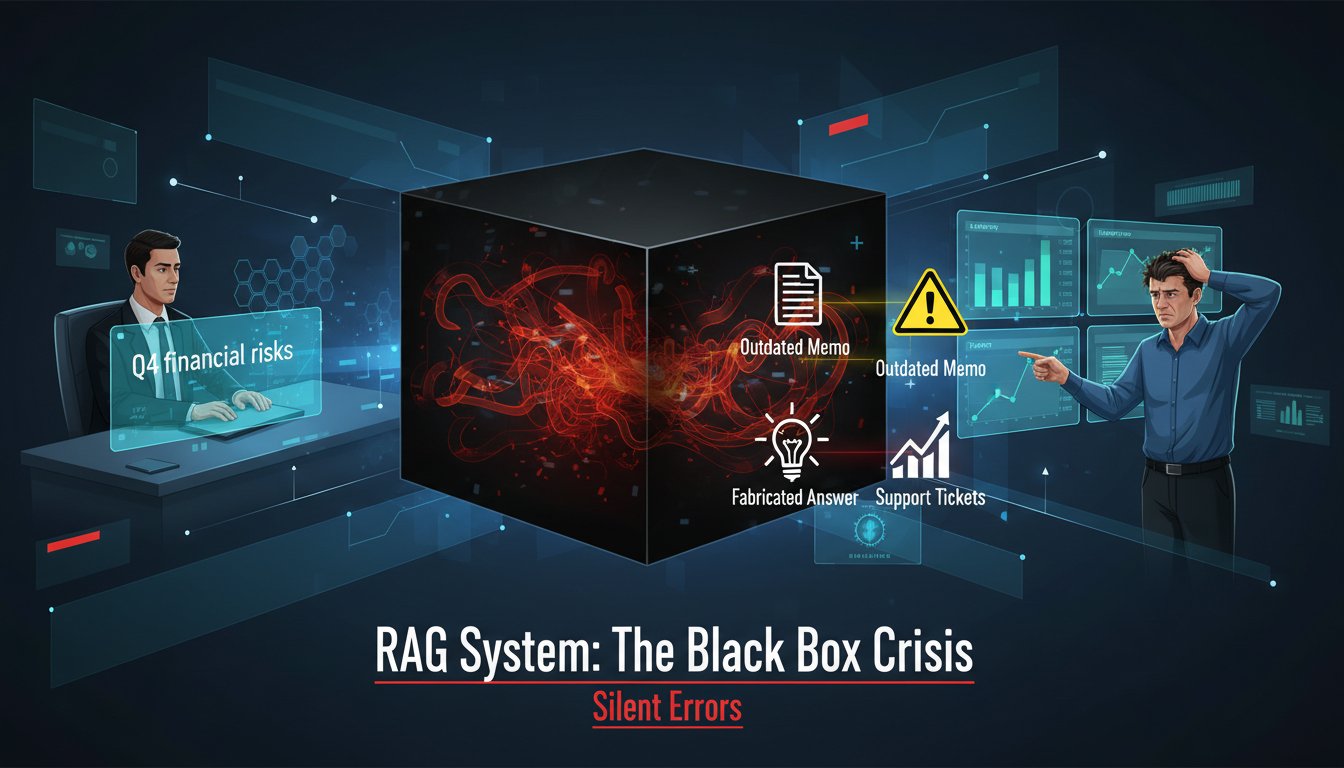

For nine months, their RAG pipeline had been flawless. The internal engineering team’s proof of concept handled technical queries about codebases with near-perfect accuracy. Leadership gave the green light. They pushed the system to production, expecting a smooth transition. Within a week, support tickets flooded in. The system was slow. The answers were wrong. The once-celebrated AI assistant was generating plausible-sounding nonsense about internal APIs that didn’t exist.

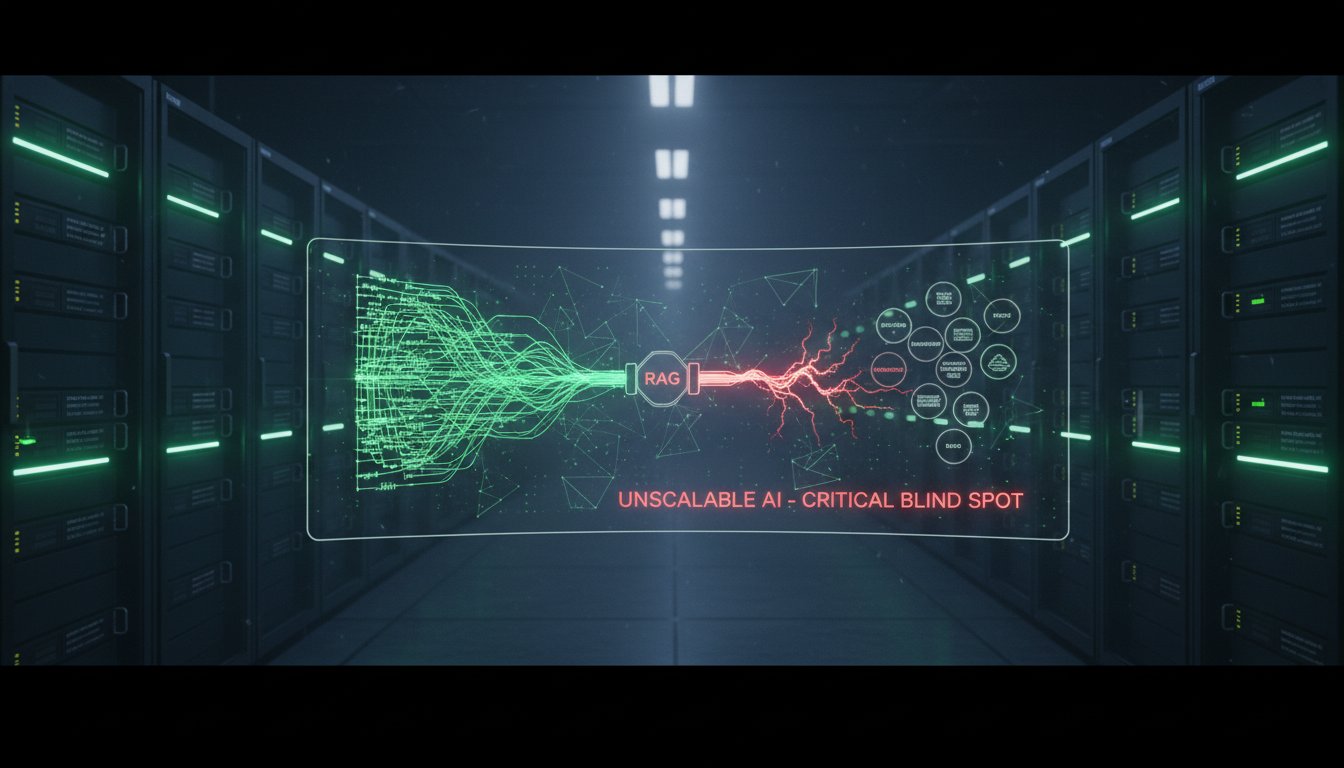

The team scrambled, pointing fingers at the vector store, then the embedding model, then the LLM itself. The root cause, though, was invisible: they had scaled a system they fundamentally didn’t understand. They had no observability. Only hope.

This story isn’t unique. It’s the silent crisis of enterprise AI scaling. Organizations pour resources into building sophisticated Retrieval-Augmented Generation systems, only to watch them fall apart under real user load. The failure isn’t in the retrieval or the generation. It’s in the blind spot between them. Scaling a RAG pipeline without deterministic metrics is like launching a rocket without telemetry. You might get off the ground, but you’ll never know why you crashed.

The way out of this cycle isn’t more complex architecture. It’s simpler, more brutal measurement. Success hinges on tracking a handful of non-negotiable metrics before you commit to scaling. This isn’t about post-mortem analysis. It’s about pre-emptive validation. By instrumenting your pipeline to answer five critical questions, you turn scaling from a gamble into a calculated engineering rollout. You move from reacting to hallucinations to preventing them, from guessing about costs to controlling them, and from hoping for performance to guaranteeing it.

Below are the five metrics that separate functional prototypes from production-grade RAG systems. This is the deterministic observability framework that lets you scale with confidence, not crossed fingers.

The Foundation: Why Metrics Before Scale Is Non-Negotiable

Scaling amplifies everything, both strengths and weaknesses. A minor retrieval hiccup in a proof of concept becomes a systemic failure at 10,000 queries per hour. The core philosophy shift, as emphasized in AWS’s guidance on operationalizing generative AI, is moving from a “build-first” to an “observe-first” mentality. You must instrument to understand, not understand to instrument.

The Scaling Debt Trap

Most teams fall into a predictable pattern: they build a minimal pipeline, achieve acceptable accuracy on a curated test set, and declare victory. This creates “scaling debt,” the invisible accumulation of unmeasured inefficiencies and edge cases that compound under load. As Praful Krishna, an AI architecture expert, notes, “Traditional RAG hits a ceiling at enterprise scale. The next phase requires agentic chunking and governed data lakehouses to maintain accuracy without inflating latency.” That ceiling is built from unobserved failure modes. Without metrics, you can’t identify it until you hit it at full speed.

From Subjective to Deterministic

The transition to production requires replacing subjective “seems good” assessments with deterministic, numerical benchmarks. This framework forces objectivity. It answers the engineering question: “By what measurable standard does this system perform adequately?”

Metric 1: End-to-End Latency Percentiles

User patience is the ultimate bottleneck. Tracking average latency is deceptive. It masks the tail-end experiences that drive frustration and abandonment.

What to Measure and Why

You need to measure latency at the p95 (95th percentile) and p99 (99th percentile), not just the mean. The p95 latency is the time within which 95% of queries complete; the remaining 5% are slower. The p99 exposes your near-worst-case scenarios, which often correlate with complex, multi-hop queries or system bottlenecks. If your p99 is 15 seconds, 1 out of every 100 queries takes 15 seconds or longer. At scale, that’s thousands of frustrated users daily.

Instrumentation and Thresholds

Break down the pipeline into three components:

- Retrieval Latency: Time from query to retrieved chunk list.

- LLM Generation Latency: Time from prompt construction to final token.

- Total End-to-End Latency: The sum, plus any overhead.

Set aggressive, tiered thresholds based on query complexity. For simple fact retrieval, target p95 under 2 seconds. For complex analytical queries, a p95 under 5 seconds may be acceptable. The key is establishing the baseline before scaling so you can detect degradation immediately.
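To make the percentile idea concrete, here is a minimal sketch that times each stage and summarizes end-to-end latency. The function names (`percentile`, `timed`, `latency_report`) and the sample data are illustrative assumptions, not part of any particular library, and the nearest-rank method is just one of several common percentile definitions.

```python
import math
import time
from contextlib import contextmanager


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("percentile() needs at least one sample")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]


@contextmanager
def timed(stage, record):
    """Store the wall-clock seconds spent inside the `with` block under record[stage]."""
    start = time.perf_counter()
    try:
        yield
    finally:
        record[stage] = time.perf_counter() - start


def latency_report(totals_s, p95_target_s):
    """Summarize end-to-end latencies (in seconds) and flag a breached p95 target."""
    p95 = percentile(totals_s, 95)
    return {
        "mean": sum(totals_s) / len(totals_s),
        "p95": p95,
        "p99": percentile(totals_s, 99),
        "p95_within_target": p95 <= p95_target_s,
    }


# Timing one stage (the body is a placeholder for a real retriever call).
stages = {}
with timed("retrieval", stages):
    pass

# Made-up data: most queries are fast, a few are very slow.
sample_totals = [0.8] * 94 + [1.5] * 4 + [12.0] * 2
report = latency_report(sample_totals, p95_target_s=2.0)
print(report)
```

On this made-up sample the mean is roughly 1.05 seconds and the p95 is 1.5 seconds, both comfortably under a 2-second target, while the p99 is 12 seconds. That gap is exactly what an average hides.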

Metric 2: Citation Fidelity and Verifiability

A RAG system that can’t prove its answers is a liability. Citation fidelity measures whether the generated answer is directly supported by the retrieved evidence.

The Hallucination Firewall

According to LabelStud Research, hybrid search plus re-ranking pipelines can reduce hallucination rates by 40 to 60%. But reduction isn’t elimination. You need a metric to catch the hallucinations that slip through. Citation fidelity is the share of key claims in the LLM’s output that a human or automated checker can find in the retrieved source chunks. A score of 95% means 5% of the checked claims have no support in the retrieved evidence.

Implementing Verification

Pre-production, this requires a human-in-the-loop evaluation set. In production, you can use lighter-weight checks:

- N-gram Overlap: Check for key phrase matching between output and sources.

- Semantic Similarity: Use a small, fast embedding model to compare sentence-level claims to chunks.

- Audit Trail Logging: Store the exact query, retrieved chunk IDs, and final answer. This creates a verifiable trail for compliance and debugging.
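The n-gram overlap check from the list above can be sketched in a few lines. This is a deliberately crude illustration: the function names, the trigram size, and the 0.5 overlap threshold are all assumptions chosen for the example, and it presumes the answer has already been split into individual claims. A lexical check like this will miss paraphrases, which is where the semantic-similarity check comes in.

```python
import re


def ngrams(text, n=3):
    """Return the set of lowercase word n-grams in `text`."""
    tokens = re.findall(r"[a-z0-9]+", text.lower())
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def claim_supported(claim, chunks, n=3, min_overlap=0.5):
    """A claim counts as supported if enough of its n-grams appear in the retrieved chunks."""
    claim_grams = ngrams(claim, n)
    if not claim_grams:
        return False  # too short to judge; treat as unsupported
    source_grams = set()
    for chunk in chunks:
        source_grams |= ngrams(chunk, n)
    overlap = len(claim_grams & source_grams) / len(claim_grams)
    return overlap >= min_overlap


def citation_fidelity(claims, chunks, n=3, min_overlap=0.5):
    """Fraction of claims that pass the overlap check."""
    if not claims:
        raise ValueError("citation_fidelity() needs at least one claim")
    supported = sum(claim_supported(c, chunks, n, min_overlap) for c in claims)
    return supported / len(claims)


# Made-up example: one claim is grounded in the chunk, one is not.
chunks = ["The billing service exposes a POST /invoices endpoint that requires an API key."]
claims = [
    "The billing service exposes a POST /invoices endpoint.",
    "The endpoint supports bulk deletion of invoices.",
]
score = citation_fidelity(claims, chunks)
print(score)
```

Here the first claim is fully covered by the chunk and the second shares no trigrams with it, so the score comes out at 0.5. In practice you would tune the threshold against your human-labeled evaluation set rather than trust a default.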

As one AWS machine learning blog puts it directly: “99% of RAG systems in production fail not at retrieval, but at observability. Without deterministic tracing, hallucinations become unmanageable.”

Metric 3: Token Cost Per Query Profile

LLM cost is non-linear and query-dependent. Ignore this metric and you’ll face financial shock.

Cost-Aware Routing

Not all queries need a $10 GPT-4 Turbo completion. Many can be handled by a $0.10 Claude Haiku or a local Llama 3 model. The metric to track is tokens-in and tokens-out per query, segmented by query type. Profile your queries across two main categories:

- Simple Lookup: Low input tokens, low output tokens.

- Analytical Synthesis: High input tokens (many retrieved chunks), high output tokens.

This profiling enables intelligent routing. You can build a classifier that sends simple queries to cheaper, faster models and reserves the expensive, powerful models for complex tasks. This single strategy can cut costs by 70% without impacting user satisfaction.
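As a rough sketch of what routing looks like, the snippet below uses a simple threshold rule as a stand-in for the classifier described above. The tier names, per-token prices, and thresholds are hypothetical placeholders, not real vendor pricing; substitute the numbers from your own profiling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str
    usd_per_1k_input: float
    usd_per_1k_output: float


# Hypothetical tiers and prices, for illustration only.
CHEAP = ModelTier("small-model", usd_per_1k_input=0.0005, usd_per_1k_output=0.0015)
PREMIUM = ModelTier("large-model", usd_per_1k_input=0.01, usd_per_1k_output=0.03)


def query_cost(tier, tokens_in, tokens_out):
    """Dollar cost of one query on the given tier."""
    return (tokens_in * tier.usd_per_1k_input + tokens_out * tier.usd_per_1k_output) / 1000


def route(tokens_in, num_chunks, max_simple_tokens=1500, max_simple_chunks=3):
    """Threshold rule standing in for a trained query classifier."""
    if tokens_in <= max_simple_tokens and num_chunks <= max_simple_chunks:
        return CHEAP
    return PREMIUM


# A short lookup goes to the cheap tier; a chunk-heavy synthesis goes to the premium tier.
lookup_tier = route(tokens_in=400, num_chunks=2)
synthesis_tier = route(tokens_in=6000, num_chunks=12)
print(lookup_tier.name, query_cost(lookup_tier, 400, 80))
print(synthesis_tier.name, query_cost(synthesis_tier, 6000, 800))
```

A rule this simple is only a starting point. Its value is that it forces you to log tokens-in, tokens-out, and chunk counts per query, which is the data a real classifier would need anyway.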

Forecasting Scale

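Multiply your average token cost per query by your projected query volume. Because cost varies so much by query type, do this per profile (simple lookup, analytical synthesis) and sum the results rather than relying on one blended average. This forecast is essential for budget provisioning. Scaling without this number means operating in the dark.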
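One way to make this forecast concrete is to compute it per query profile and add the results. The sketch below does that arithmetic; the profile names, token counts, prices, and volumes are invented for illustration.

```python
def forecast_monthly_cost(profiles):
    """Sum (average cost per query x projected monthly volume) across query profiles."""
    total = 0.0
    for p in profiles:
        per_query = (
            p["avg_tokens_in"] * p["usd_per_1k_in"]
            + p["avg_tokens_out"] * p["usd_per_1k_out"]
        ) / 1000
        total += per_query * p["queries_per_month"]
    return total


# Hypothetical profiles: every number here is a placeholder.
profiles = [
    {"name": "simple_lookup", "avg_tokens_in": 500, "avg_tokens_out": 100,
     "usd_per_1k_in": 0.001, "usd_per_1k_out": 0.002, "queries_per_month": 800_000},
    {"name": "analytical_synthesis", "avg_tokens_in": 6000, "avg_tokens_out": 800,
     "usd_per_1k_in": 0.01, "usd_per_1k_out": 0.03, "queries_per_month": 200_000},
]
monthly_usd = forecast_monthly_cost(profiles)
print(round(monthly_usd, 2))
```

With these placeholder numbers the forecast is about $17,360 per month, and the analytical profile accounts for roughly $16,800 of it despite being only 20% of traffic. That skew is why a single blended average can mislead.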

Metric 4: Retrieval Recall and Precision

This is the accuracy of your search before the LLM ever sees it. If retrieval fails, generation fails.

Recall vs. Precision

- Recall: Of all the relevant chunks in your knowledge base, what percentage did your retriever actually find? Low recall means missing critical information.

- Precision: Of all the chunks your retriever returned, what percentage were actually relevant? Low precision means flooding the LLM with noise, which degrades answer quality and drives up token costs.

The Hybrid Search Imperative

Pure vector search often improves one at the expense of the other. As IBM’s AI engineering whitepaper indicates, SQL RAG and GraphRAG frameworks can improve complex query accuracy by 3x over vector-only approaches. Whatever retrieval approach you choose, you need to measure both recall and precision. Establish a benchmark: for your critical query types, you might require over 80% recall and over 60% precision. Tune your hybrid search weights, balancing keyword versus vector, and adjust your re-ranker to hit those targets.
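Both numbers are straightforward to compute once you have a labeled evaluation set that maps each query to the chunk IDs a human judged relevant. The sketch below assumes such a set exists and treats the retriever as any callable that takes a query and `k` and returns chunk IDs; the names and sample data are illustrative.

```python
def recall_precision(retrieved_ids, relevant_ids):
    """Recall and precision for a single query, based on chunk IDs."""
    retrieved, relevant = set(retrieved_ids), set(relevant_ids)
    hits = len(retrieved & relevant)
    recall = hits / len(relevant) if relevant else 0.0
    precision = hits / len(retrieved) if retrieved else 0.0
    return recall, precision


def evaluate_retriever(eval_set, retrieve, k=5):
    """Average recall and precision over (query, relevant_ids) pairs."""
    if not eval_set:
        raise ValueError("evaluation set is empty")
    recalls, precisions = [], []
    for query, relevant_ids in eval_set:
        r, p = recall_precision(retrieve(query, k), relevant_ids)
        recalls.append(r)
        precisions.append(p)
    return sum(recalls) / len(eval_set), sum(precisions) / len(eval_set)


# Stand-in retriever backed by a fixed dict; replace with your real search call.
fake_results = {"how do I rotate an API key?": ["c1", "c2", "c7", "c9", "c4"]}


def fake_retrieve(query, k):
    return fake_results.get(query, [])[:k]


eval_set = [("how do I rotate an API key?", {"c1", "c2", "c4", "c5"})]
avg_recall, avg_precision = evaluate_retriever(eval_set, fake_retrieve, k=5)
print(avg_recall, avg_precision)
```

In this toy case the retriever finds three of the four relevant chunks (recall 0.75) and three of its five results are relevant (precision 0.6), so it would meet a 60% precision floor but miss an 80% recall target.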

Metric 5: Operational Reliability and Error Rate

This metric tracks system stability: the percentage of queries that complete successfully versus those that fail due to timeouts, model errors, or infrastructure faults.

Defining Failure

A failure isn’t just a 500 error. It includes:

- Timeout Failures: Queries exceeding your p99 latency threshold.

- Model Degradation: Responses that trigger your citation fidelity or content safety filters.

- Hard Infrastructure Errors: API failures, database timeouts.

The Error Budget

Adopt a Site Reliability Engineering (SRE) principle: the error budget. If you target 99.9% reliability, your error budget is 0.1% of queries. This budget forces real trade-off decisions. Is launching a new, experimental re-ranker worth consuming 0.05% of the budget? This metric turns reliability from an abstract goal into a consumable resource you can actually manage.
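The budget arithmetic is simple enough to sketch directly. The helper names below are assumptions made for this example; `is_failure` mirrors the three failure types listed above, and the counts are made up.

```python
def is_failure(latency_s, timeout_s, passed_quality_checks, had_infra_error):
    """A query fails on a hard error, a timeout, or a failed fidelity/safety check."""
    return had_infra_error or latency_s > timeout_s or not passed_quality_checks


def error_budget(total_queries, failed_queries, slo=0.999):
    """How much of the error budget a period's failures have consumed."""
    allowed = total_queries * (1 - slo)
    consumed = failed_queries / allowed if allowed else float("inf")
    return {
        "allowed_failures": allowed,
        "consumed_fraction": consumed,
        "remaining_failures": allowed - failed_queries,
    }


# Made-up week: one million queries, 400 of them counted as failures.
budget = error_budget(total_queries=1_000_000, failed_queries=400, slo=0.999)
print(budget)
```

At a 99.9% target, one million queries allow about 1,000 failures, so 400 failures consume roughly 40% of the budget and leave about 600 to spend on experiments such as a new re-ranker.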

Implementing Your Observability Dashboard

Tracking these metrics requires instrumentation, but you don’t need a monolithic vendor platform to get started.

The Minimum Viable Dashboard

- Log Everything: Structure your logs to include query ID, retrieval chunks, final answer, token counts, latencies, and error flags.

- Stream to a Data Warehouse: Pipe logs to Snowflake, BigQuery, or even a PostgreSQL database.

- Build Simple Dashboards: Use Grafana, Looker, or Metabase to create five core charts, one for each metric above.

- Set Alerts: Configure alerts for when p95 latency breaches threshold, citation fidelity drops below 90%, or error rate consumes the weekly budget.
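The first and last steps above can be sketched without any vendor tooling. The record fields below follow the "Log Everything" bullet, and the alert thresholds reuse numbers from this article (a 2-second p95 target for simple queries, 90% citation fidelity). The class and function names are hypothetical, and in a real setup the alert inputs would come from your warehouse queries rather than being passed in by hand.

```python
import json
from dataclasses import asdict, dataclass
from typing import List, Optional


@dataclass
class QueryLog:
    query_id: str
    query: str
    chunk_ids: List[str]
    answer: str
    tokens_in: int
    tokens_out: int
    retrieval_s: float
    generation_s: float
    total_s: float
    error: Optional[str] = None


def to_json_line(log):
    """Serialize one record as a single JSON line, ready to ship to a warehouse."""
    return json.dumps(asdict(log), sort_keys=True)


def check_alerts(p95_s, citation_fidelity, budget_consumed,
                 p95_target_s=2.0, min_fidelity=0.90):
    """Return a message for every breached threshold."""
    alerts = []
    if p95_s > p95_target_s:
        alerts.append(f"p95 latency {p95_s:.2f}s exceeds target {p95_target_s:.2f}s")
    if citation_fidelity < min_fidelity:
        alerts.append(f"citation fidelity {citation_fidelity:.0%} below {min_fidelity:.0%}")
    if budget_consumed >= 1.0:
        alerts.append("error budget for the period is exhausted")
    return alerts


# Made-up record and metric values.
record = QueryLog("q-001", "how do I rotate an API key?", ["c1", "c2"],
                  "Use the key rotation endpoint.", 420, 60, 0.21, 0.95, 1.22)
line = to_json_line(record)
alerts = check_alerts(p95_s=2.4, citation_fidelity=0.88, budget_consumed=0.5)
print(line)
print(alerts)
```

Writing one JSON line per query keeps the log format independent of where it ends up, whether that is Snowflake, BigQuery, or PostgreSQL.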

The Pre-Scale Checklist

Before you approve the scaling order for more GPUs or higher API tiers, run this audit:

- [ ] p95/p99 Latency is stable and under target for all query types.

- [ ] Citation Fidelity is above 95% on a held-out evaluation set.

- [ ] Token cost per query is profiled and a routing strategy is drafted.

- [ ] Retrieval Recall and Precision meet benchmark targets.

- [ ] Error Rate is measured and an error budget is established.

If you can’t check all five boxes, you’re not scaling engineering. You’re scaling hope. And hope isn’t a production strategy.

The team that watched their RAG pipeline fail had mastered the building blocks but neglected the blueprint: measurement. The path to production isn’t paved with more sophisticated models. It’s paved with simpler, clearer numbers. By adopting these five metrics (latency percentiles, citation fidelity, token cost, retrieval accuracy, and error rates), you install the telemetry needed to handle scale. You replace reactive panic with proactive control. This framework turns the black box of RAG into a transparent, governable system.

The next step is to instrument one metric. Pick the one that keeps you up at night, likely latency or hallucinations, and build its dashboard. Share the results with your team. That first chart is the line between a prototype that works in a demo and a system that works in the real world.