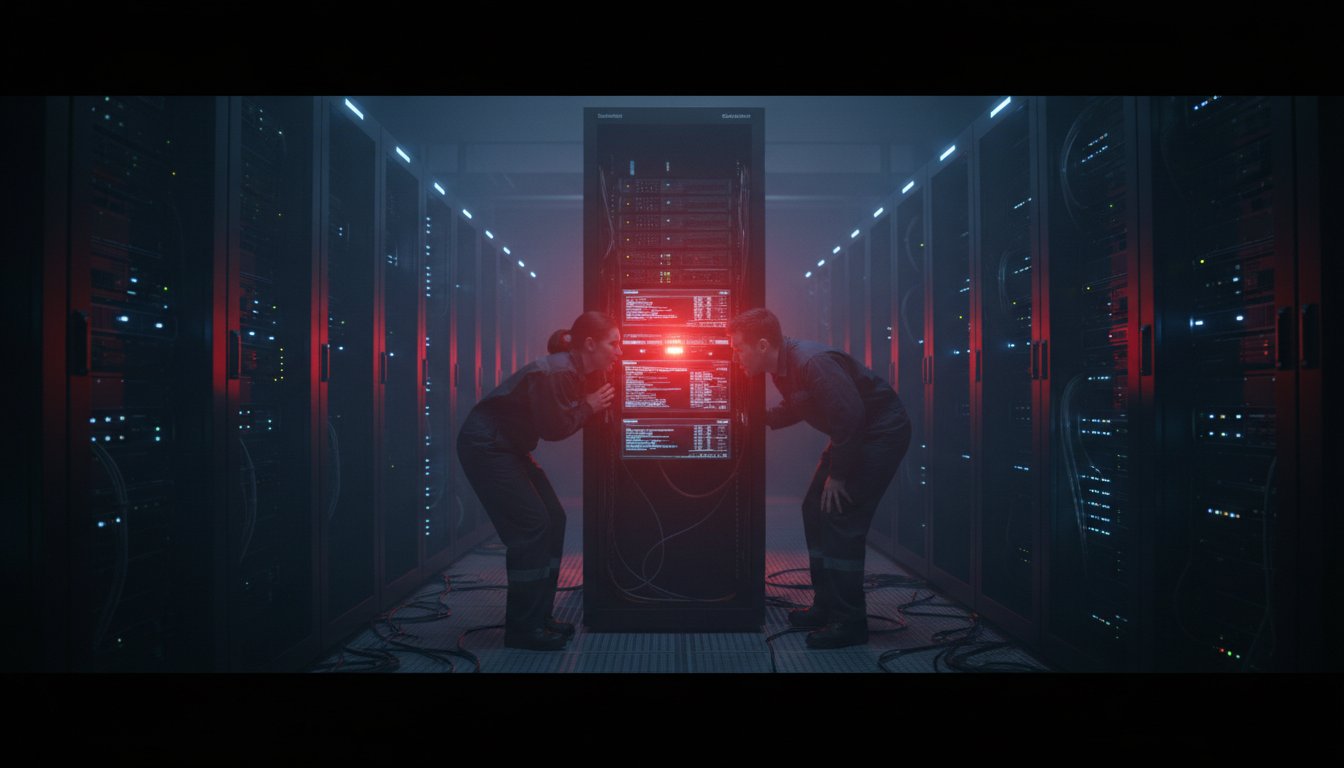

The data engineer stared at her screen as the new enterprise AI system generated the quarterly report. Everything looked perfect: clean graphs, polished executive summaries, precise financial projections. Then she spotted it. A 7% revenue increase attributed to a product line the company had discontinued three months prior. The RAG system wasn’t just wrong; it was confidently presenting outdated information as current truth.

Her team had spent six months implementing what their vendor promised was a “state-of-the-art” retrieval-augmented generation architecture. Yet here they were, watching their AI make decisions based on what industry insiders now call “context rot.” It’s the silent killer of production RAG systems, where stale retrieval stores quietly cause operational failures before anyone notices.

This isn’t a theoretical concern anymore. Recent research suggests up to 40% of enterprise RAG implementations experience some form of context-related degradation within the first 90 days of production deployment. The problem isn’t your language model’s capabilities. It’s the architecture supporting it. While everyone focuses on the sophisticated LLMs doing the generation, the retrieval layer has become the weakest link in enterprise AI deployments. The data feeding your system has a shelf life, and once it expires, your AI’s decisions become dangerously unreliable.

This week, a significant development offers a concrete path forward. Senior AWS Architect Viquar Khan has proposed a novel real-time RAG architecture specifically designed to combat context rot at its source. By rethinking the fundamental data engineering layer, organizations can finally build RAG systems that maintain accuracy as business conditions change. Below, we’ll break down exactly what context rot means for your enterprise, examine the architecture that addresses it, and walk through five actionable steps you can start on immediately to secure your AI’s decision-making foundation.

What Is Context Rot and Why Should You Care?

Context rot represents a fundamental shift in how we think about RAG system failures. Unlike traditional model drift or degradation, context rot occurs when your retrieval store, the database of embeddings your AI queries, becomes stale relative to the actual state of your business operations. The LLM generates perfectly coherent responses, but those responses are built on outdated or incorrect information.

The Real Cost of Stale Context

Imagine your AI-powered customer service agent confidently telling customers about a promotion that ended last week. Picture your supply chain optimization system making inventory decisions based on supplier pricing from three months ago. Consider your financial forecasting AI working from market data that predates a major regulatory change. These aren’t hypothetical scenarios. They’re daily occurrences in enterprises that haven’t solved the context freshness problem.

The operational impact is significant. According to recent enterprise surveys, companies experiencing context-related RAG failures report:

– 22% increase in customer service escalations

– 17% reduction in user trust in AI recommendations

– 31% more manual oversight required for AI-generated outputs

– Average resolution time of 3.7 business days per incident

These numbers point to a critical insight: the problem isn’t technological sophistication, it’s operational architecture. Your RAG system can have the most advanced embedding models and the largest context windows available, but if the data it retrieves is outdated, the entire system fails.

The Anatomy of a Real-Time RAG Architecture

Khan’s proposed architecture represents a fundamental rethink of how we approach the retrieval layer. Instead of treating embeddings as static artifacts updated through periodic batch jobs, this approach treats them as dynamic entities that must evolve alongside your operational data.

Streaming Change Data Capture: The Heartbeat of Freshness

At the core of the solution is streaming Change Data Capture (CDC). Traditional RAG architectures often rely on scheduled batch updates, perhaps nightly or weekly, to refresh their retrieval stores. This creates windows of vulnerability where your AI operates on increasingly stale data. The real-time approach ensures that any change to your source systems triggers immediate processing through the retrieval pipeline.

Think about a manufacturing company updating its inventory system. With traditional batch processing, that change might not show up in the RAG system until the next scheduled update, potentially hours or days later. During that window, procurement recommendations could be made based on incorrect inventory levels. Streaming CDC shrinks this lag to roughly the time it takes to process a single change, because it treats data changes as a continuous stream of events rather than as periodic batches.
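To make the contrast concrete, here is a minimal sketch of event-at-a-time processing. It assumes a hypothetical in-memory retrieval store and change events shaped as plain dicts; the `op`, `key`, and `text` field names are illustrative and not taken from Khan’s proposal.

```python
from typing import Dict, Iterable


def apply_change(store: Dict[str, str], event: dict) -> None:
    """Apply one change event to the retrieval store as soon as it arrives."""
    op = event["op"]
    if op in ("insert", "update"):
        store[event["key"]] = event["text"]
    elif op == "delete":
        # Deletes matter as much as updates: a discontinued product
        # must disappear from retrieval, not linger as stale context.
        store.pop(event["key"], None)
    else:
        raise ValueError(f"unknown operation: {op!r}")


def consume(store: Dict[str, str], events: Iterable[dict]) -> None:
    """Process events one by one instead of waiting for a scheduled batch."""
    for event in events:
        apply_change(store, event)


# Hypothetical example data (assumption, not from the source article).
store = {"sku-1": "Widget, 40 units in stock"}
events = [
    {"op": "update", "key": "sku-1", "text": "Widget, 0 units in stock"},
    {"op": "insert", "key": "sku-2", "text": "Gadget, 15 units in stock"},
    {"op": "delete", "key": "sku-1"},
]
consume(store, events)
```

In a real deployment the `events` iterable would be fed by a CDC tool reading the source database’s change log, and the store would be a vector index rather than a dict.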

Incremental Embedding Recomputation: Efficiency at Scale

One of the biggest barriers to real-time RAG has been computational overhead. Recomputing embeddings for your entire dataset every time a single record changes is prohibitively expensive at enterprise scale. The proposed architecture addresses this through incremental embedding recomputation, updating only the embeddings for records that have actually changed.

This approach uses Apache Spark’s distributed computing capabilities to process changes efficiently. When a product description updates in your CRM, only that product’s embedding gets recomputed. When pricing changes in your ERP, only affected SKUs receive updated vector representations. This selective approach maintains accuracy while keeping computational costs manageable.
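The selective idea can be sketched in plain Python rather than Spark. This is an illustration under stated assumptions, not the proposed implementation: `toy_embed` is a placeholder for a real embedding model call, and change detection here uses a content hash stored next to each vector.

```python
import hashlib
from typing import Callable, Dict, List, Tuple

Index = Dict[str, Tuple[str, List[float]]]  # record id -> (content hash, vector)


def content_hash(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def toy_embed(text: str) -> List[float]:
    """Placeholder for a real embedding model call (assumption)."""
    return [float(len(text)), float(sum(map(ord, text)) % 997)]


def refresh_embeddings(
    records: Dict[str, str],
    index: Index,
    embed: Callable[[str], List[float]] = toy_embed,
) -> List[str]:
    """Recompute embeddings only for new or changed records.

    Returns the ids that were actually re-embedded.
    """
    recomputed = []
    for record_id, text in records.items():
        digest = content_hash(text)
        cached = index.get(record_id)
        if cached is None or cached[0] != digest:
            index[record_id] = (digest, embed(text))
            recomputed.append(record_id)
    # Remove vectors whose source record no longer exists.
    for record_id in list(index):
        if record_id not in records:
            del index[record_id]
    return recomputed


index: Index = {}
catalog = {"sku-1": "Blue widget", "sku-2": "Red gadget"}
first_pass = refresh_embeddings(catalog, index)   # both records are new
catalog["sku-2"] = "Red gadget, now waterproof"
second_pass = refresh_embeddings(catalog, index)  # only sku-2 changed
```

This sketch still hashes every record on each pass. With streaming CDC in front of it, only the keys named in change events would need to be examined at all.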

The Iceberg Foundation: Transactional Consistency

The architecture relies on the Apache Iceberg table format for storage, which provides crucial transactional guarantees. In traditional approaches, there’s often a race condition between reading from retrieval stores and writing updates. Because every Iceberg write is committed atomically, your RAG system always sees a consistent view of data, even while updates are in progress.

This matters because without transactional consistency, your AI might retrieve a mix of old and new embeddings, leading to internally contradictory responses. Iceberg solves this through snapshot isolation. Your retrieval queries see a complete, consistent snapshot of data at a specific point in time, regardless of what’s happening concurrently.
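The toy class below illustrates the snapshot isolation idea only. It is not Iceberg’s API; `SnapshotStore` and its methods are hypothetical names. Writers build a complete new snapshot and publish it with a single reference swap, so a reader holding an earlier snapshot never sees a half-applied update.

```python
import threading
from types import MappingProxyType
from typing import Dict, List, Mapping


class SnapshotStore:
    """Toy copy-on-write store (assumption: illustrative, not Iceberg)."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._current: Mapping[str, List[float]] = MappingProxyType({})
        self._snapshot_id = 0

    def snapshot(self) -> Mapping[str, List[float]]:
        """Return a read-only view pinned to the latest committed state."""
        return self._current

    def commit(self, updates: Dict[str, List[float]]) -> int:
        """Publish all updates together as one new snapshot."""
        with self._lock:  # serialize writers
            merged = dict(self._current)
            merged.update(updates)
            # Readers see either the old snapshot or the new one, never a mix.
            self._current = MappingProxyType(merged)
            self._snapshot_id += 1
            return self._snapshot_id


store = SnapshotStore()
store.commit({"doc-a": [0.1, 0.2], "doc-b": [0.3, 0.4]})
reader_view = store.snapshot()  # a query starts here
store.commit({"doc-a": [0.9, 0.9], "doc-b": [0.8, 0.8]})  # concurrent update
```

The query that took `reader_view` keeps working against the first commit for both documents, while any query started afterwards sees the second commit for both.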

5 Simple Steps to Implement Real-Time RAG

1. Instrument Your Source Systems for Change Detection

The foundation of any real-time RAG system is knowing when data changes. Start by implementing lightweight change detection across your critical data sources. This doesn’t require overhauling your entire data infrastructure. Modern CDC tools can capture changes from databases, APIs, and even file systems with minimal intrusion.

Implementation approach: Begin with your most volatile data sources: CRM updates, inventory changes, pricing adjustments. Use existing database replication features or specialized CDC tools to capture insert, update, and delete events. Create a standardized change event format that includes timestamp, operation type, and before/after values where applicable.
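One possible shape for such a standardized event, as a sketch: the `ChangeEvent` class, its field names, and the validation rules are assumptions for illustration, and real CDC tools define their own envelope formats.

```python
import json
from dataclasses import asdict, dataclass
from typing import Any, Dict, Optional

VALID_OPS = {"insert", "update", "delete"}


@dataclass(frozen=True)
class ChangeEvent:
    """Hypothetical standardized change event (illustrative field names)."""

    source: str      # originating system, e.g. "erp.pricing"
    key: str         # primary key of the changed record
    op: str          # "insert", "update", or "delete"
    timestamp: str   # ISO 8601 time of the change, in UTC
    before: Optional[Dict[str, Any]] = None  # prior values, where applicable
    after: Optional[Dict[str, Any]] = None   # new values, where applicable

    def __post_init__(self) -> None:
        if self.op not in VALID_OPS:
            raise ValueError(f"unsupported operation: {self.op!r}")
        if self.op != "delete" and self.after is None:
            raise ValueError("insert and update events need an 'after' value")

    def to_json(self) -> str:
        """Serialize for a message queue; sorted keys keep output stable."""
        return json.dumps(asdict(self), sort_keys=True)


# Example values are made up for illustration.
event = ChangeEvent(
    source="erp.pricing",
    key="sku-42",
    op="update",
    timestamp="2024-01-15T09:30:00+00:00",
    before={"price": 19.99},
    after={"price": 17.99},
)
payload = event.to_json()
```

Rejecting malformed events at construction time keeps bad data out of every downstream stage, which is cheaper than debugging a corrupted index later.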

2. Establish a Streaming Pipeline Architecture

Move away from batch-oriented thinking. Design your retrieval update pipeline as a stream processing system rather than a scheduled job. This doesn’t mean you need to implement complex real-time processing immediately. Starting with near-real-time updates measured in minutes rather than hours or days is already a major improvement.

Practical starting point: Use message queues like Apache Kafka or cloud equivalents to buffer change events. Process these events using stream processing frameworks that support exactly-once semantics. Even if you start with processing events in small batches every 5 to 10 minutes, you’ll achieve dramatically better freshness than traditional daily updates.
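The small-batch starting point might look like the sketch below. It assumes events arrive in timestamp order as hypothetical `(timestamp, key, value)` tuples, and it coalesces repeated changes to the same key within a window so each record is re-embedded at most once per batch. It does not address exactly-once delivery, which is the job of the queue and stream framework.

```python
from typing import Dict, Iterable, Iterator, Tuple

Event = Tuple[float, str, str]  # (timestamp in seconds, record key, new value)


def micro_batches(
    events: Iterable[Event], window_seconds: float
) -> Iterator[Dict[str, str]]:
    """Group time-ordered events into fixed windows.

    Within a window only the latest value per key is kept (last write wins).
    """
    batch: Dict[str, str] = {}
    current_window = None
    for timestamp, key, value in events:
        window = int(timestamp // window_seconds)
        if current_window is not None and window != current_window and batch:
            yield batch
            batch = {}
        current_window = window
        batch[key] = value
    if batch:
        yield batch  # flush the final, possibly partial, window


# Illustrative events: three land in the first 5-minute window, one in the next.
events = [
    (0.0, "sku-1", "price 10"),
    (120.0, "sku-1", "price 12"),
    (290.0, "sku-2", "in stock"),
    (310.0, "sku-1", "price 11"),
]
batches = list(micro_batches(events, window_seconds=300))
```

Shrinking `window_seconds` moves the same pipeline toward true real-time processing without changing its structure.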

3. Implement Smart Embedding Management

Not all data changes require embedding updates. Develop rules for when embeddings should be recomputed based on the nature of the change. A product price change might require an embedding update, while a change to an internal administrative field probably doesn’t.

Smart filtering strategy: Create a classification system for your data fields. “Semantic fields” like descriptions, titles, and content that affect meaning should trigger embedding recomputation. “Administrative fields” like timestamps, internal IDs, and status flags often don’t. This selective approach cuts computational load significantly while maintaining accuracy.
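A minimal sketch of that filter, assuming records are flat dicts. The contents of `SEMANTIC_FIELDS` are an example only; which fields count as semantic (price, for instance) is a per-dataset decision.

```python
from typing import Any, Dict, FrozenSet, Set

# Assumption: example classification; adjust per data source.
SEMANTIC_FIELDS: FrozenSet[str] = frozenset({"title", "description", "content"})


def changed_fields(before: Dict[str, Any], after: Dict[str, Any]) -> Set[str]:
    """Names of fields that were added, removed, or modified."""
    keys = before.keys() | after.keys()
    return {key for key in keys if before.get(key) != after.get(key)}


def needs_reembedding(
    before: Dict[str, Any],
    after: Dict[str, Any],
    semantic_fields: FrozenSet[str] = SEMANTIC_FIELDS,
) -> bool:
    """True only when at least one meaning-bearing field changed."""
    return bool(changed_fields(before, after) & semantic_fields)


old = {"title": "Blue widget", "description": "A widget.", "updated_at": "t1"}
admin_only = {**old, "updated_at": "t2"}
meaning_changed = {**old, "description": "A waterproof widget."}

skip = needs_reembedding(old, admin_only)          # administrative change only
recompute = needs_reembedding(old, meaning_changed)
```

The before/after values carried by each change event are exactly what this check needs, so it can run before any embedding model is called.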

4. Build Quality Gates into Your Update Pipeline

Real-time doesn’t mean reckless. Implement validation checks at multiple points in your pipeline to ensure updates maintain or improve retrieval quality. Use automated testing to verify that updated embeddings still retrieve relevant documents and that retrieval performance hasn’t degraded.

Quality metrics to monitor: Track embedding drift (how much embeddings change), retrieval precision and recall on test queries, and query latency. Set up alerts when these metrics deviate beyond acceptable thresholds. Use canary deployments where you update embeddings for a small subset of data first, verify quality, then proceed with broader updates.
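As a sketch of one such gate, the code below computes mean recall on a labeled test set and blocks a canary update if recall drops too far. The function names, the test data, and the 0.05 tolerance are illustrative assumptions, not recommended values.

```python
from typing import Dict, Sequence, Set


def recall_at_k(retrieved: Sequence[str], relevant: Set[str], k: int) -> float:
    """Fraction of the relevant documents found in the top k results."""
    if not relevant:
        return 1.0
    return len(set(retrieved[:k]) & relevant) / len(relevant)


def mean_recall(
    results: Dict[str, Sequence[str]], labels: Dict[str, Set[str]], k: int
) -> float:
    """Average recall@k across all labeled test queries."""
    scores = [recall_at_k(results[query], labels[query], k) for query in labels]
    return sum(scores) / len(scores)


def canary_passes(baseline: float, candidate: float, max_drop: float = 0.05) -> bool:
    """Allow the rollout only if recall fell by no more than max_drop."""
    return candidate >= baseline - max_drop


# Hypothetical labeled queries and retrieval results.
labels = {"q1": {"d1", "d2"}, "q2": {"d3"}}
baseline_results = {"q1": ["d1", "d2", "d9"], "q2": ["d3", "d7", "d8"]}
candidate_results = {"q1": ["d1", "d9", "d8"], "q2": ["d3", "d7", "d8"]}

baseline = mean_recall(baseline_results, labels, k=3)
candidate = mean_recall(candidate_results, labels, k=3)
proceed = canary_passes(baseline, candidate)
```

Here the candidate embeddings lose a relevant document for `q1`, so the gate stops the broader rollout.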

5. Design for Observability from Day One

The most critical aspect of real-time RAG isn’t the technology. It’s the visibility. Implement thorough monitoring that tracks data freshness, embedding quality, retrieval performance, and downstream impact on generated responses.

Key dashboards to build:

– Freshness dashboard: Shows time since last update for each data source

– Retrieval quality dashboard: Tracks precision and recall over time for your most important query types

– Business impact dashboard: Correlates RAG updates with business metrics like customer satisfaction or operational efficiency

Without this observability, you’re flying blind. You might have the most sophisticated real-time architecture available, but if you can’t measure its effectiveness, you can’t improve it.
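The freshness dashboard reduces to a simple calculation, sketched here under assumptions: the source names, the per-source limits, and the 24-hour default are all hypothetical.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List


def staleness(
    last_updated: Dict[str, datetime], now: datetime
) -> Dict[str, timedelta]:
    """Time since the last successful update, per data source."""
    return {source: now - ts for source, ts in last_updated.items()}


def stale_sources(
    last_updated: Dict[str, datetime],
    max_age: Dict[str, timedelta],
    now: datetime,
    default_max_age: timedelta = timedelta(hours=24),
) -> List[str]:
    """Sources whose age exceeds their freshness limit, sorted by name."""
    ages = staleness(last_updated, now)
    return sorted(
        source
        for source, age in ages.items()
        if age > max_age.get(source, default_max_age)
    )


# Fixed example timestamps so the result is reproducible.
now = datetime(2024, 1, 15, 12, 0, tzinfo=timezone.utc)
last_updated = {
    "crm": datetime(2024, 1, 15, 11, 58, tzinfo=timezone.utc),
    "erp_pricing": datetime(2024, 1, 14, 9, 0, tzinfo=timezone.utc),
}
limits = {"crm": timedelta(minutes=10), "erp_pricing": timedelta(hours=1)}
late = stale_sources(last_updated, limits, now)
```

Feeding `late` into an alerting system turns the dashboard from something people have to check into something that tells them when freshness slips.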

The Future of Enterprise RAG: Beyond Real-Time

The proposed architecture is a significant step forward, but it’s just the beginning. Several emerging trends point toward even more sophisticated approaches to the context freshness problem.

Tighter Integration Between Data Systems and Vector Search

Future systems will likely feature native connectors between transactional databases and vector search engines. Instead of maintaining separate retrieval stores, vector indexes will become first-class citizens within operational databases, eliminating the synchronization challenge entirely.

Embeddings as Dynamic Data Entities

We’re moving toward treating embeddings not as static artifacts but as dynamic data entities with their own lifecycle management. This means versioning embeddings, tracking their lineage back to source data, and maintaining multiple embedding versions for different use cases or time periods.
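What that lifecycle management could look like in miniature is sketched below. `EmbeddingVersion` and `EmbeddingHistory` are hypothetical names, and the model labels and hashes are placeholder values.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Sequence, Tuple


@dataclass(frozen=True)
class EmbeddingVersion:
    record_id: str
    version: int               # 1, 2, 3, ... per record
    model: str                 # which embedding model produced the vector
    source_hash: str           # lineage: hash of the source text it came from
    vector: Tuple[float, ...]


class EmbeddingHistory:
    """Keeps every embedding version per record (illustrative sketch)."""

    def __init__(self) -> None:
        self._versions: Dict[str, List[EmbeddingVersion]] = {}

    def add(
        self, record_id: str, model: str, source_hash: str, vector: Sequence[float]
    ) -> EmbeddingVersion:
        history = self._versions.setdefault(record_id, [])
        entry = EmbeddingVersion(
            record_id, len(history) + 1, model, source_hash, tuple(vector)
        )
        history.append(entry)
        return entry

    def latest(self, record_id: str) -> Optional[EmbeddingVersion]:
        history = self._versions.get(record_id)
        return history[-1] if history else None

    def at_version(self, record_id: str, version: int) -> Optional[EmbeddingVersion]:
        for entry in self._versions.get(record_id, []):
            if entry.version == version:
                return entry
        return None


history = EmbeddingHistory()
history.add("sku-1", "model-v1", "hash-a", [0.1, 0.2])
history.add("sku-1", "model-v1", "hash-b", [0.3, 0.4])
current = history.latest("sku-1")
original = history.at_version("sku-1", 1)
```

Keeping the source hash on each version makes it possible to answer which state of the source data a given response was grounded in.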

Context-Aware Retrieval Strategies

Beyond keeping data fresh, next-generation systems will apply retrieval strategies that understand the temporal context of queries. When someone asks about “current inventory levels,” the system will prioritize recent data. When the question is about “historical trends,” it might blend recent and older data appropriately.
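One simple way to express this is to multiply similarity by an exponential recency weight and vary the half-life by query intent. This is a sketch of the idea, not a description of any shipping system; the half-life values, document ids, and similarity scores are made up.

```python
from typing import List, Tuple

Candidate = Tuple[str, float, float]  # (doc id, similarity, age in days)


def recency_weight(age_days: float, half_life_days: float) -> float:
    """Weight halves every half_life_days; a brand-new document scores 1.0."""
    return 0.5 ** (age_days / half_life_days)


def rank(candidates: List[Candidate], half_life_days: float) -> List[str]:
    """Order documents by similarity scaled by recency, best first."""
    scored = [
        (similarity * recency_weight(age_days, half_life_days), doc_id)
        for doc_id, similarity, age_days in candidates
    ]
    return [doc_id for _, doc_id in sorted(scored, reverse=True)]


candidates = [
    ("inventory-last-year", 0.95, 365.0),
    ("inventory-today", 0.85, 0.0),
]

# Short half-life for "current" questions: old data is heavily discounted.
current_ranking = rank(candidates, half_life_days=7)
# Long half-life for "historical" questions: similarity dominates.
historical_ranking = rank(candidates, half_life_days=3650)
```

Deciding which half-life applies to a given query, for example by classifying its temporal intent, is the harder part and is left out of this sketch.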

Building RAG That Works When It Matters Most

The story that opened this post, the data engineer discovering outdated information in an AI-generated report, represents a turning point in enterprise AI adoption. We’ve moved beyond asking “Can our AI understand complex questions?” to “Can our AI maintain accurate understanding as our business evolves?” That shift marks the transition from experimental AI to operational AI.

Real-time RAG architecture isn’t about achieving theoretical perfection. It’s about operational reliability. By working through the five steps above, you’re not just improving your AI’s accuracy. You’re building trust. You’re creating systems that decision-makers can rely on when the stakes are highest, transforming AI from a promising technology into a dependable operational asset.

The companies that solve context rot first won’t just have better AI. They’ll have AI that actually works when it matters. They’ll make decisions based on current reality rather than historical artifacts. They’ll build customer experiences that reflect actual business conditions rather than outdated information. As data velocity continues to accelerate, the ability to maintain accurate context might be the most significant competitive advantage an enterprise can build.

Start small but think strategically. Pick one critical data source where freshness matters most, implement streaming CDC, and measure the impact. The path to reliable enterprise AI isn’t through revolutionary breakthroughs. It’s through systematic improvements to the foundation that supports it. Your next quarterly report might just be the first one your AI gets 100% right.

Ready to implement real-time RAG in your organization? Download our free implementation checklist that walks you through each of these five steps with specific technical requirements, tool recommendations, and success metrics. Get it at ragaboutit.com/real-time-rag-checklist.