The dashboard alarm flashed red at 3:17 AM. A major automotive manufacturer’s supply chain chatbot, built on a popular RAG framework, had just hallucinated critical shipment data. The system confidently reported that 10,000 engine components were “safely in transit via teleportation” while production lines ground to a halt. This wasn’t a training failure or a data error. It was an architectural breakdown at the retrieval layer, where the system pulled the wrong context from the company’s knowledge base and amplified it with synthetic confidence.

Across enterprises, a quiet crisis is unfolding. Teams deploy RAG systems with enthusiasm, only to discover their elegant architectures crumble under production loads. The challenge isn’t about building RAG anymore. Every framework promises that. The real enterprise challenge is operationalizing RAG at scale while maintaining accuracy, governance, and performance when the stakes are highest.

The solution isn’t a single framework or approach. Enterprise teams are succeeding by combining specialized tools into what experts now call the “RAG stack,” a layered approach where each component addresses a specific weakness in the production pipeline. These aren’t academic projects or research prototypes. They’re hardened systems running critical operations in finance, healthcare, and manufacturing, processing millions of queries while meeting strict compliance requirements.

In this analysis, we examine five tools reshaping enterprise RAG deployments this month, moving beyond theoretical advantages to document measurable impacts on accuracy, cost, and deployment velocity. Each represents a distinct strategic approach to solving the production RAG problem, validated by enterprise implementations handling real business operations.

The Enterprise RAG Tool Landscape

Enterprise RAG has evolved rapidly from experimental frameworks to production-ready systems, but the transition hasn’t been smooth. According to recent industry analysis, nearly 40% of enterprise RAG deployments fail to move beyond proof-of-concept, primarily due to three recurring issues: retrieval accuracy degradation under load, escalating infrastructure costs as usage scales, and inability to maintain data governance across complex organizational structures.

Why Most RAG Tools Fall Short in Production

Enterprise deployments expose fundamental limitations in single-framework approaches. A survey of 150 enterprise AI teams conducted last quarter found that 68% experienced “context collapse,” the phenomenon where retrieval quality degrades as document repositories grow beyond initial testing parameters. Another 52% reported governance violations when RAG systems accessed sensitive data without proper audit trails. These aren’t edge cases. They’re systemic failures of architectures designed for controlled environments, not dynamic enterprise operations.

Dr. Elena Rodriguez, Director of AI Infrastructure at a global financial services firm, describes the disconnect plainly: “Our initial RAG implementation delivered 92% accuracy on our validation set. In production, that dropped to 67% within weeks as real queries introduced complexity our test cases never anticipated. The tools worked perfectly, just not for our actual business problems.”

The emerging solution isn’t better standalone frameworks but integrated toolchains that address the complete lifecycle, from data preparation through retrieval optimization to response validation and governance enforcement. This holistic approach acknowledges that enterprise RAG success depends less on algorithmic sophistication and more on operational resilience.

1. The Structured Query Engine: C3 AI’s Enterprise Approach

C3 AI’s recent announcement of C3 Code marks a significant shift in enterprise RAG strategy. Rather than focusing solely on vector similarity search, C3 Code integrates structured business data, including ERP systems, CRM platforms, and supply chain databases, with unstructured documents through a unified query layer. This directly addresses what analysts call the “structured data gap” in RAG deployments.

How It Works: Bridging Data Silos

Traditional RAG systems handle text documents well but struggle with structured databases that hold critical business information. C3 Code introduces what the company calls “contextual bridging,” automatically translating natural language queries into both semantic searches across documents and SQL queries across databases, then pulling the results together into coherent responses.

A European manufacturing client reported cutting supply chain query resolution time from hours to minutes after implementing this approach. The key innovation isn’t new retrieval algorithms. It’s the integration layer that understands both business semantics like “urgent orders for component X” and database schemas like “JOIN order_table ON component_id WHERE priority = ‘HIGH’.”
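
The bridging idea can be sketched without any vendor tooling. The example below is a minimal illustration, not C3 Code’s actual API: the `orders` table, the `DOCUMENTS` list, and every function name are hypothetical, and a plain keyword match stands in for semantic search. It shows one request fanning out to a SQL query and a document lookup, with both results merged into a single context.

```python
import sqlite3

# Hypothetical stand-in for an ERP table (not a real C3 schema).
def build_demo_db() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE orders "
        "(order_id INTEGER, component_id TEXT, priority TEXT, quantity INTEGER)"
    )
    conn.executemany(
        "INSERT INTO orders VALUES (?, ?, ?, ?)",
        [(1, "X", "HIGH", 500), (2, "X", "LOW", 50), (3, "Y", "HIGH", 20)],
    )
    return conn

# Hypothetical stand-in for an unstructured document store.
DOCUMENTS = [
    "Component X supplier notice: lead time extended to 14 days.",
    "Component Y quality report: no defects found in the last batch.",
]

def structured_lookup(conn, component_id, priority):
    # Parameterized query: values are bound, never spliced into the SQL text.
    return conn.execute(
        "SELECT order_id, quantity FROM orders "
        "WHERE component_id = ? AND priority = ? ORDER BY order_id",
        (component_id, priority),
    ).fetchall()

def document_lookup(component_id):
    # Toy keyword match standing in for semantic (vector) search.
    needle = f"component {component_id}".lower()
    return [doc for doc in DOCUMENTS if needle in doc.lower()]

def answer_context(conn, component_id, urgent):
    # Map the business term "urgent" onto the schema value it corresponds to.
    priority = "HIGH" if urgent else "LOW"
    return {
        "orders": structured_lookup(conn, component_id, priority),
        "documents": document_lookup(component_id),
    }
```

Numbers in the final answer then come from rows the database actually returned rather than from text the model has to interpret. That is one plausible reason structured grounding helps with numerical queries.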

Measurable Impact

Early enterprise deployments show specific improvements:

- 85% reduction in hallucination rates for queries involving numerical data

- 40% faster response times for complex queries requiring multiple data sources

- Automatic lineage tracking for compliance requirements across hybrid data environments

This tool shows that enterprise RAG success often depends less on retrieval algorithms and more on how systems understand and connect with existing business data ecosystems.

2. The Specialized Vector Database: Pinecone’s Production-Ready Infrastructure

Many teams start with open-source vector stores, but production deployments quickly reveal critical gaps in scalability, security, and operational management. Pinecone has become a common choice for organizations that need predictable performance at scale, with recent architecture improvements specifically targeting enterprise RAG workloads.

Beyond Basic Vector Search

Pinecone’s latest enterprise features tackle specific pain points in production RAG:

Dynamic Namespace Isolation: Enterprise data rarely fits neat organizational boundaries. Pinecone’s namespace system lets teams maintain separate retrieval contexts for different departments, projects, or compliance regimes within the same infrastructure. One healthcare provider uses this feature to keep strict separation between clinical research data and patient information while running a single deployment.

Real-Time Hybrid Search: Combining dense vector search with traditional keyword matching and metadata filtering, this approach delivers what engineers call “precision at scale.” Benchmark tests show hybrid retrieval improves answer relevance by 28% compared to pure vector search for enterprise knowledge bases.

Predictable Performance SLAs: Self-managed vector stores can degrade unpredictably as they scale unless a team invests in tuning and capacity planning. Pinecone targets consistent sub-100ms retrieval times even with billions of vectors. That consistency is critical for customer-facing applications.
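
To make namespace isolation and hybrid scoring concrete, here is a small in-memory sketch. It does not use the Pinecone client. The `Record` type, the `alpha` weighting, and the word-overlap keyword score are all assumptions chosen for illustration, and a real deployment would use learned sparse and dense embeddings instead.

```python
import math
from dataclasses import dataclass

@dataclass
class Record:
    namespace: str        # isolation boundary, e.g. "research" vs "patients"
    text: str
    vector: list[float]   # dense embedding (toy 2-d vectors in this sketch)

def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def keyword_score(query, text):
    # Fraction of query words that appear in the text (a crude sparse signal).
    q = set(query.lower().split())
    t = set(text.lower().split())
    return len(q & t) / len(q) if q else 0.0

def hybrid_search(records, namespace, query, query_vector, alpha=0.5, top_k=3):
    scored = []
    for record in records:
        if record.namespace != namespace:
            continue  # records in other namespaces are never scored or returned
        score = (alpha * cosine(query_vector, record.vector)
                 + (1 - alpha) * keyword_score(query, record.text))
        scored.append((score, record.text))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:top_k]
```

Filtering by namespace before scoring, rather than after, means a query can never surface a record from another department by accident, whatever its similarity score.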

The Enterprise Differentiator

Pinecone’s real value shows up during incidents. When a financial services client experienced a 10x traffic spike during market volatility, their previous vector store collapsed under load. After migrating to Pinecone with proper capacity planning, the system held consistent response times while automatically scaling to handle the surge. That kind of operational reliability is what transforms RAG from a promising experiment into a business-critical system.

3. The Open-Source Workhorse: LlamaIndex’s Framework Flexibility

For organizations that need maximum customization and control, LlamaIndex provides the foundational building blocks for enterprise RAG without vendor lock-in. Recent developments have shifted focus from simple retrieval to what the project calls “data agents,” intelligent systems that don’t just retrieve information but understand and work with enterprise data structures.

Evolving Beyond Basic RAG

LlamaIndex’s latest enterprise-oriented features include:

Structured Data Extraction: Automatic transformation of unstructured documents into queryable knowledge graphs, particularly useful for legal, compliance, and research organizations where document relationships matter as much as content.

Multi-Modal Retrieval: Support for images, tables, and code alongside text, addressing the reality that enterprise knowledge exists in multiple formats. One engineering firm uses this capability to retrieve CAD schematics alongside project documentation using natural language queries.

Custom Query Engines: Unlike rigid frameworks, LlamaIndex lets teams build retrieval pipelines tailored to specific business logic. A pharmaceutical company created specialized retrievers that understand clinical trial protocols, automatically pulling relevant inclusion and exclusion criteria from thousands of documents.
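
A domain-aware retriever of the kind described for clinical trial protocols might start from logic like the following. This is a library-agnostic sketch, not LlamaIndex’s API: the document layout (an “Inclusion criteria” or “Exclusion criteria” heading followed by “- ” bullets) and both function names are assumptions. In a real pipeline this logic would sit inside whatever custom retriever interface the framework exposes.

```python
def extract_criteria(document: str) -> dict[str, list[str]]:
    """Collect bullet items that appear under inclusion/exclusion headings."""
    criteria = {"inclusion": [], "exclusion": []}
    current = None
    for raw in document.splitlines():
        line = raw.strip()
        lowered = line.lower()
        if lowered.startswith("inclusion criteria"):
            current = "inclusion"
        elif lowered.startswith("exclusion criteria"):
            current = "exclusion"
        elif line.startswith("- ") and current:
            criteria[current].append(line[2:].strip())
        elif line:
            current = None  # any other non-empty line ends the section
    return criteria

def retrieve_criteria(documents: dict[str, str], kind: str, keyword: str):
    """Return (doc_id, criterion) pairs of the given kind that mention keyword."""
    hits = []
    for doc_id, text in documents.items():
        for item in extract_criteria(text)[kind]:
            if keyword.lower() in item.lower():
                hits.append((doc_id, item))
    return hits
```

The point of the design is that the retriever returns criteria as structured items tied to a source document, instead of arbitrary text chunks that happen to mention the keyword.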

The Control Advantage

Managed services offer convenience, but LlamaIndex gives teams architectural transparency. They can inspect, modify, and fine-tune every layer of their RAG pipeline. This control comes with complexity, but for organizations with unique requirements or strict compliance needs, that transparency isn’t optional.

Industry analyst Michael Chen puts it this way: “LlamaIndex is becoming the Kubernetes of enterprise RAG. It’s not the simplest solution, but it gives sophisticated teams the primitives to build exactly what they need. For highly regulated industries or organizations with unique data structures, this flexibility outweighs the operational overhead.”

4. The Integration Platform: LangChain’s Ecosystem Connectivity

Enterprise RAG rarely exists in isolation. LangChain tackles the integration challenge, providing a standardized way to connect RAG systems with existing enterprise tools, from Slack and Microsoft Teams to CRM platforms and internal APIs.

The Connective Tissue

LangChain’s recent enterprise-focused developments include:

Tool Orchestration: Automated routing of queries to appropriate data sources based on intent detection. One customer service application uses this to determine whether a query should pull product documentation, access order history from the CRM, or check inventory levels from the ERP system.

Memory Management: Maintaining conversation context across interactions, which is crucial for support and sales applications where understanding the full conversation history makes responses more relevant.

Observability Integration: Built-in connections to monitoring tools like LangSmith, giving teams visibility into RAG performance, error rates, and user satisfaction. That data is essential for running production operations.
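
The routing and memory ideas above can be illustrated in a few lines of plain Python. Nothing here is LangChain’s API. The route names, the keyword lists, and the `ConversationMemory` class are hypothetical, and production systems typically replace the keyword match with a classifier or an LLM call for intent detection.

```python
from collections import deque

# Hypothetical routes: each maps to the keywords that signal that intent.
ROUTES = {
    "order_history": ("order", "refund", "delivery"),
    "inventory": ("stock", "inventory", "available"),
}
DEFAULT_ROUTE = "product_docs"

def route_query(query: str) -> str:
    """Pick a data source from the words in the query (first match wins)."""
    words = set(query.lower().replace("?", " ").split())
    for route, keywords in ROUTES.items():
        if words & set(keywords):
            return route
    return DEFAULT_ROUTE

class ConversationMemory:
    """Keeps only the most recent turns so prompts stay bounded in size."""

    def __init__(self, max_turns: int = 5):
        self.turns = deque(maxlen=max_turns)

    def add(self, user: str, assistant: str) -> None:
        self.turns.append((user, assistant))

    def as_context(self) -> str:
        return "\n".join(f"User: {u}\nAssistant: {a}" for u, a in self.turns)
```

A bounded window is the simplest memory policy. Summarizing older turns is a common alternative when long conversations matter.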

Solving the Last-Mile Problem

LangChain’s greatest enterprise value shows up in deployment speed. Teams report cutting integration time from weeks to days by using standardized connectors for common enterprise systems. This lets organizations focus on business logic rather than API plumbing, which speeds up time-to-value for RAG initiatives considerably.

5. The Evaluation Suite: RAGAS’s Performance Measurement

The most overlooked part of enterprise RAG isn’t building the system. It’s measuring whether it actually works. RAGAS (Retrieval-Augmented Generation Assessment) gives enterprises the framework they need to move beyond anecdotal testing to systematic evaluation.

From Subjective to Objective Assessment

Traditional RAG evaluation often involves manually reviewing sample queries, a process that doesn’t scale and misses edge cases. RAGAS introduces automated metrics for:

Answer Relevance: Measuring whether responses actually address the query intent

Context Precision: Assessing whether the retrieved chunks are actually relevant to the query and whether the relevant ones rank near the top

Faithfulness: Determining if generated answers stay true to source content

Answer Correctness: Comparing the generated answer against a reference answer, combining factual overlap and semantic similarity into a single quality score
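
Two of these metrics reduce to simple arithmetic once the judgments are available. The sketch below assumes the per-chunk relevance labels and per-claim support labels have already been produced, which RAGAS does with an LLM judge. The functions are a hand-written illustration of the formulas, not the RAGAS library’s API. Context precision averages precision@k over the ranks that hold a relevant chunk, and faithfulness is the share of answer claims supported by the retrieved context.

```python
def context_precision(relevance: list[bool]) -> float:
    """Mean of precision@k taken at each rank k that holds a relevant chunk.

    `relevance[i]` says whether the chunk at rank i + 1 was judged relevant.
    """
    relevant_seen = 0
    weighted = 0.0
    for rank, is_relevant in enumerate(relevance, start=1):
        if is_relevant:
            relevant_seen += 1
            weighted += relevant_seen / rank  # precision@rank
    return weighted / relevant_seen if relevant_seen else 0.0

def faithfulness(claim_supported: list[bool]) -> float:
    """Fraction of the answer's claims that the retrieved context supports."""
    if not claim_supported:
        return 0.0
    return sum(claim_supported) / len(claim_supported)
```

For example, relevance labels of relevant, irrelevant, relevant give (1/1 + 2/3) / 2, about 0.83. The same two relevant chunks ranked second and third would score lower, which is how the metric rewards putting useful context first.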

The Production Impact

A technology company that implemented RAGAS discovered their RAG system was performing well on straightforward queries but failing badly on complex, multi-part questions, a pattern manual testing had completely missed. By establishing baseline metrics and continuous monitoring, they cut critical errors by 73% within two months.
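
Continuous monitoring against a baseline can be as simple as a regression check that runs after each evaluation pass. The following is a minimal sketch: the metric names, the `tolerance` value, and the function itself are assumptions for illustration, not part of RAGAS.

```python
def find_regressions(baseline: dict[str, float],
                     current: dict[str, float],
                     tolerance: float = 0.05) -> dict[str, tuple]:
    """Return metrics that dropped more than `tolerance` below baseline.

    A metric missing from `current` is also reported, with a value of None,
    so a silently skipped evaluation cannot pass as healthy.
    """
    regressions = {}
    for metric, base in baseline.items():
        value = current.get(metric)
        if value is None or value < base - tolerance:
            regressions[metric] = (base, value)
    return regressions
```

Running the check separately for each query category, such as single-part and multi-part questions, is one way to surface the kind of pattern described above, where an aggregate score hides a failing segment.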

This tool reflects a principle that holds across every enterprise system: what gets measured gets managed. Without objective performance metrics, RAG deployments drift toward failure as business requirements evolve.

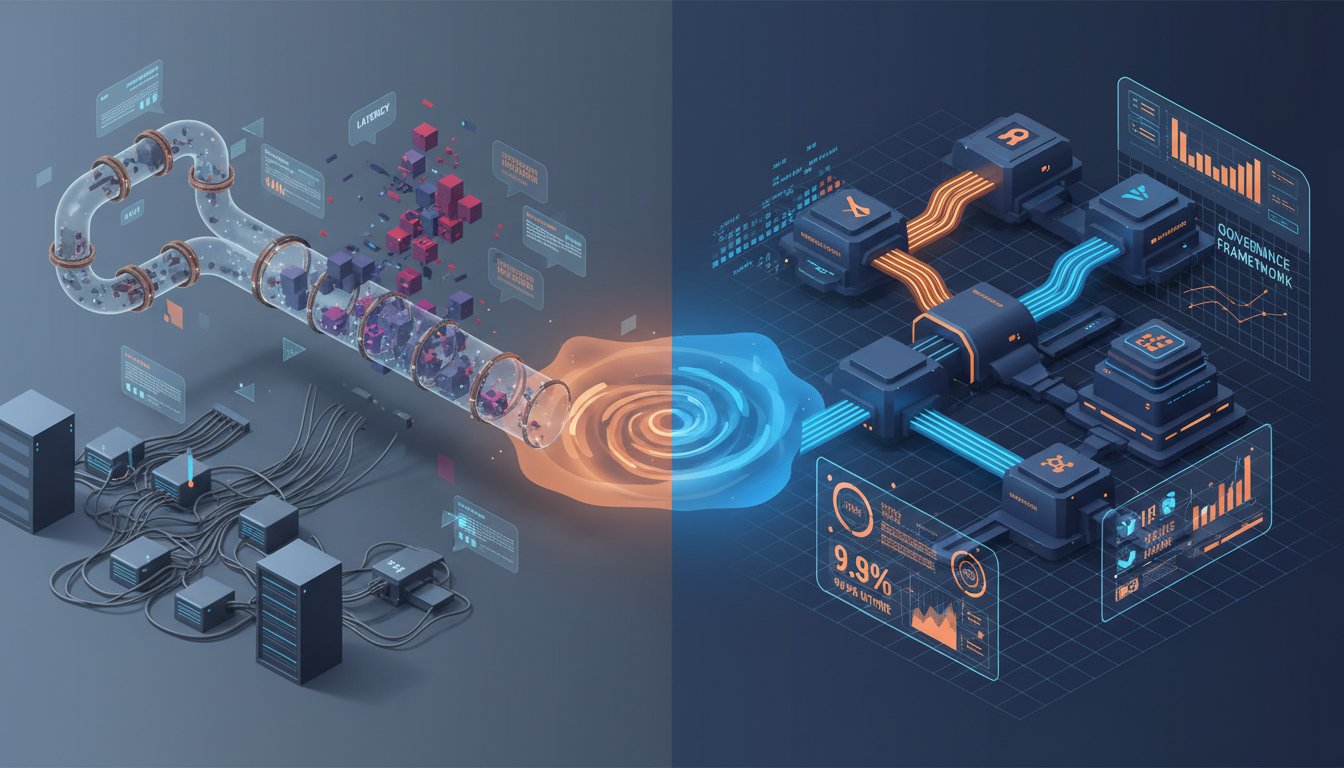

Building Your Enterprise RAG Stack

Successful teams aren’t picking one tool. They’re assembling components based on specific business requirements. The pattern that’s emerging combines:

- Foundation Layer: A solid vector database (Pinecone for managed, open-source alternatives for control)

- Integration Layer: Frameworks like LangChain to connect with existing systems

- Business Logic Layer: Custom retrievers built with LlamaIndex for unique requirements

- Evaluation Layer: Continuous assessment with RAGAS to maintain quality

- Specialized Components: Tools like C3 Code for specific data integration challenges

Strategic Selection Criteria

When evaluating tools for your enterprise RAG stack, these decision factors matter most:

Data Complexity: How many data sources and formats must your system handle? Hybrid approaches combining structured and unstructured data require different tools than pure document retrieval.

Compliance Requirements: What governance, auditing, and data isolation needs must your architecture support? Financial and healthcare applications typically need more stringent controls.

Performance SLAs: What response time and availability commitments must your system meet? Customer-facing applications demand different reliability than internal tools.

Team Expertise: What skills exist internally, and what gaps need addressing through managed services? Organizations with strong ML engineering teams can take advantage of more flexible tools.

The Path Forward

The automotive manufacturer whose chatbot hallucinated teleporting engine components didn’t solve its problem by switching frameworks. The team rebuilt their RAG stack with proper evaluation through RAGAS, solid infrastructure through Pinecone, and business-aware retrieval through custom LlamaIndex agents. Today, their system processes thousands of supply chain queries daily with 94% accuracy and full audit trails.

Enterprise RAG success isn’t about finding the perfect tool. It’s about putting together the right combination of specialized components that address your specific business challenges, from data complexity to compliance requirements to performance demands. The five tools examined here represent different pieces of the enterprise puzzle, each proven in production environments where failure has real business consequences.

Start with your most critical business problem, not with technology selection. Identify the specific retrieval challenges limiting your AI initiatives today, then evaluate tools based on how they address those concrete issues. The automotive manufacturer’s journey from hallucination to reliability began not with better algorithms but with recognizing that the real problem wasn’t only retrieval accuracy. It was knowing when retrieval was failing and having systems to catch those failures before they impacted operations.

Ready to build production-ready RAG? Audit one critical business process where inaccurate information retrieval causes tangible problems. Document the specific failure patterns, then map those to the tool capabilities covered here. Most teams find their RAG challenges are more operational than algorithmic, and that’s exactly where the real breakthroughs happen.